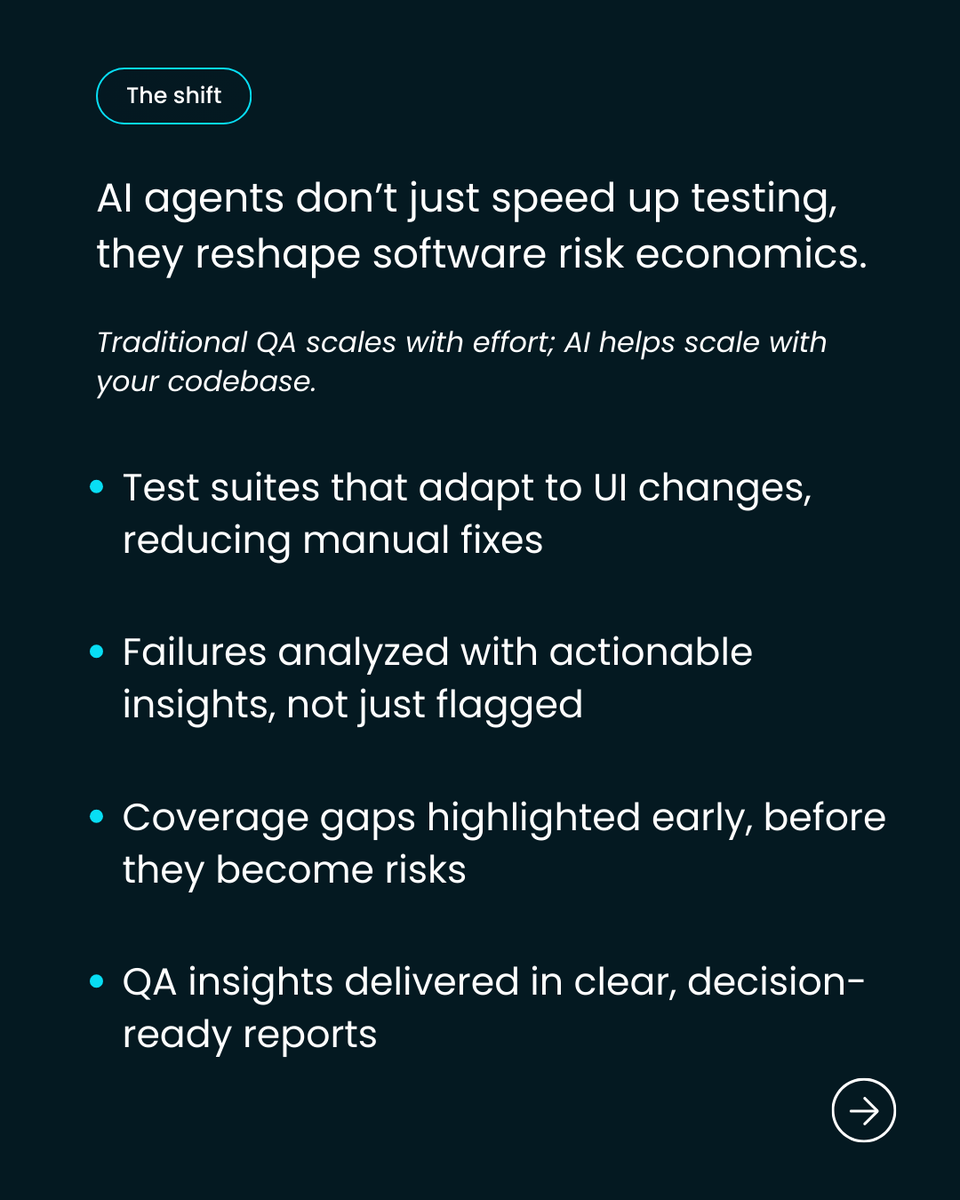

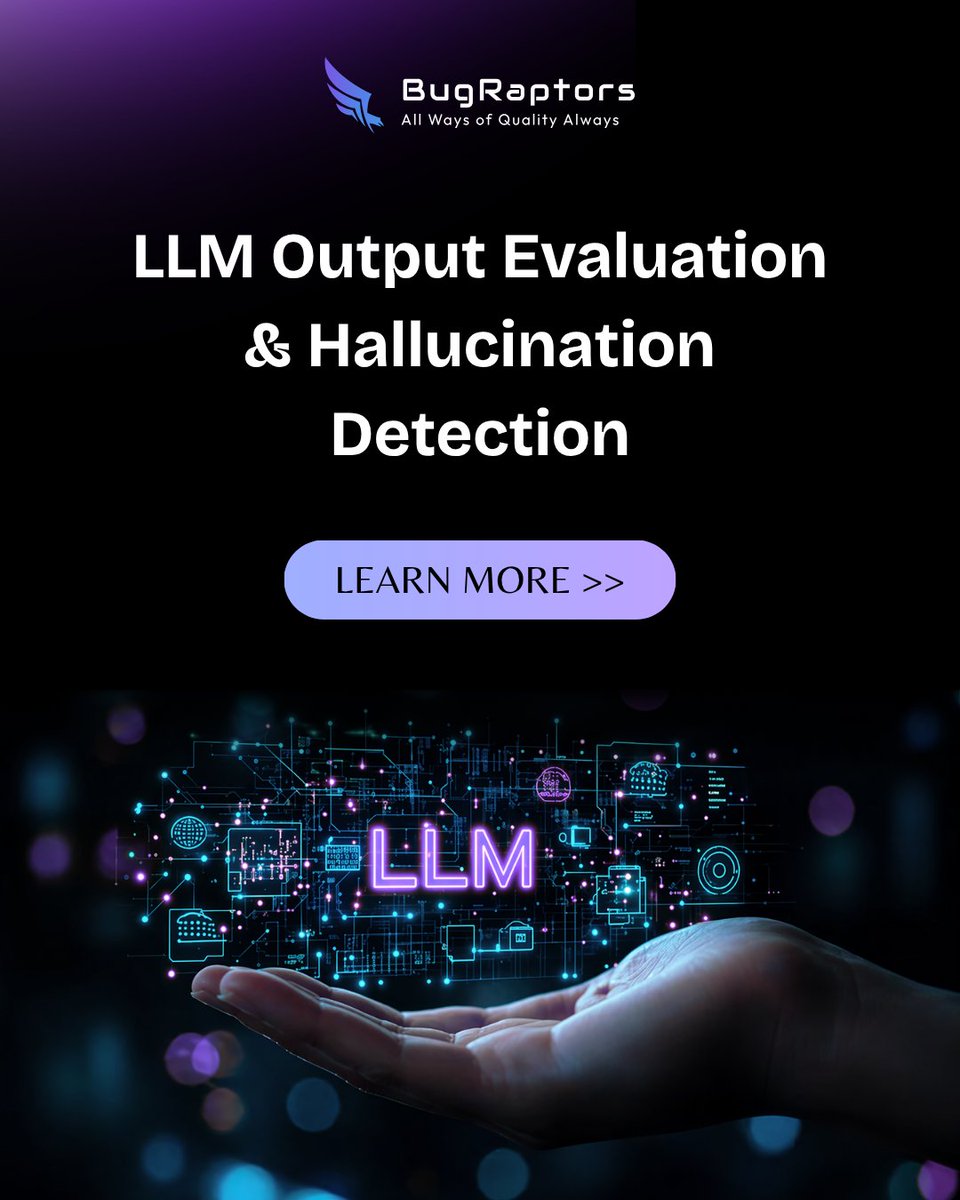

LLMs don’t just fail.

They hallucinate.

And most teams don’t catch it early enough.

Here’s how to evaluate outputs and detect hallucinations before they become real problems:

bugraptors.com/blog/llm-outpu…

#AI #LLM

BugRaptors - Software Testing Company

@BugRaptors

Being the top software testing company, we promise to deliver the best-in-class QA engineering solutions to clients worldwide.