Sapien

@BuildOnSapien

Building Proof of Quality - Verifiable quality signals for AI

An AI mislabeling and miscounting inventory is, like so many other AI failures, a data problem deep down. Proof of Quality would have prevented this.

JUST IN: Starbucks retires AI inventory tool across North America after it reportedly miscounted & mislabeled store items.

🔴 Governance overtakes development in AI! Sinch says 74% of enterprises have rolled back live AI communications agents, and the highest rollback rates appear in organizations with the most mature guardrails. One of the most important signals in enterprise AI: production is not the finish line. buff.ly/Ywh16PX

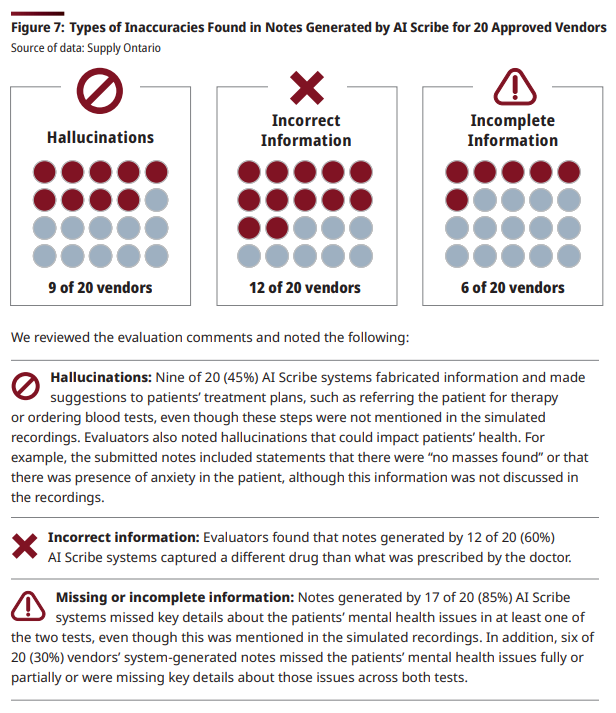

An auditor for the Ontario, Canada government found that AI agents tasked with turning doctor/patient conversations into structured notes routinely hallucinated false treatments, replaced drug names with entirely different drugs, and missed crucial information.

Dozens of empty Waymos invaded an Atlanta neighborhood and circled a cul-de-sac for hours with no passengers wsbtv.com/news/local/atl…

The market is flooded with "we're building an AI agent" teams with no clear buyer or differentiation. What's scarce: infrastructure for verifying, governing, and holding agents accountable.

The Google Threat Intelligence Group has detected the first known instance of a threat actor using an AI-developed zero-day exploit in the wild. While the attackers planned a wide-scale strike, our proactive counter-discovery may have prevented that from happening. This finding is part of our new report on AI-powered threats.