Kadek Byan Prihandana Jati

7K posts

@ByanJati

- Tweeting Since 2009 (almost 12 years on Internet)

Fast mode for Claude Opus 4.7 is now available in research preview on the API and in Claude Code.

This works really well btw: at the end of your query, ask your LLM to "structure your response as HTML", then view the generated file in your browser. I've also had some success asking the LLM to present its output as slideshows, etc.

More generally, imo audio is the human-preferred input to AIs but vision (images/animations/video) is the preferred output from them. Around a third of our brain is a massively parallel processor dedicated to vision; it is the 10-lane superhighway of information into the brain. As AI improves, I think we'll see a progression that takes advantage:

1) raw text (hard/effortful to read)
2) markdown (bold, italic, headings, tables, a bit easier on the eyes) <-- current default
3) HTML (still procedural with underlying code, but a lot more flexibility on the graphics, layout, even interactivity) <-- early but forming new good default
...4,5,6,...
n) interactive neural videos/simulations

Imo the extrapolation (though the technology doesn't exist just yet) ends in some kind of interactive videos generated directly by a diffusion neural net. Many open questions remain as to how exact/procedural "Software 1.0" artifacts (e.g. interactive simulations) may be woven together with neural artifacts (diffusion grids), but generally something in the direction of the recently viral x.com/zan2434/status…

There are also improvements necessary and pending at the input. Neither audio nor text nor video alone is enough, e.g. I feel a need to point/gesture to things on the screen, similar to all the things you would do with a person physically next to you and your computer screen.

TLDR: The input/output mind meld between humans and AIs is ongoing, and there is a lot of work to do and significant progress to be made, way before jumping all the way into neuralink-esque BCIs and all that. As for what's worth exploring at the current stage, hot tip: try asking for HTML.
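The HTML tip above could be wired up roughly as follows. This is only a sketch: `ask_llm` is a hypothetical stand-in for whatever function sends a prompt to your model and returns its text reply, and the helper names, the fence-stripping step, and the default `response.html` path are assumptions of this example, not part of the original post.

```python
import webbrowser
from pathlib import Path
from typing import Callable

# Instruction appended to the end of the query, quoting the tip.
HTML_SUFFIX = "Structure your response as HTML."

# Built this way so the marker for a Markdown code fence never appears literally.
FENCE = "`" * 3


def build_prompt(query: str) -> str:
    """Append the HTML request to the end of the user's query."""
    return f"{query.rstrip()}\n\n{HTML_SUFFIX}"


def extract_html(reply: str) -> str:
    """Strip a surrounding Markdown code fence if the model added one (assumption)."""
    text = reply.strip()
    if text.startswith(FENCE):
        lines = text.splitlines()[1:]  # drop the opening fence line
        if lines and lines[-1].strip() == FENCE:
            lines = lines[:-1]  # drop the closing fence line
        text = "\n".join(lines)
    return text


def ask_and_view(
    query: str,
    ask_llm: Callable[[str], str],  # hypothetical: prompt in, reply text out
    path: str = "response.html",
    open_browser: bool = True,
) -> Path:
    """Ask for HTML, save the reply to a file, and optionally open it in the browser."""
    reply = ask_llm(build_prompt(query))
    out = Path(path)
    out.write_text(extract_html(reply), encoding="utf-8")
    if open_browser:
        webbrowser.open(out.resolve().as_uri())
    return out
```

To try it, pass any function that calls your model of choice as `ask_llm`; the saved file can also be opened by hand if `open_browser` is set to `False`.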

Padel courts are even being sold at a loss 😳 Is padel's hype really over? Any info from padel business owners/players: is it still busy or not?

One of the life hacks I've done: buy a coffee machine and all its accessories. I ended up saving around 800 thousand to 1.2 million rupiah per month on "coffee treats" at cafes. Plus I picked up some coffee know-how. A life hack that cafe owners don't like... I'll break down the prices of these accessories below in a thread:

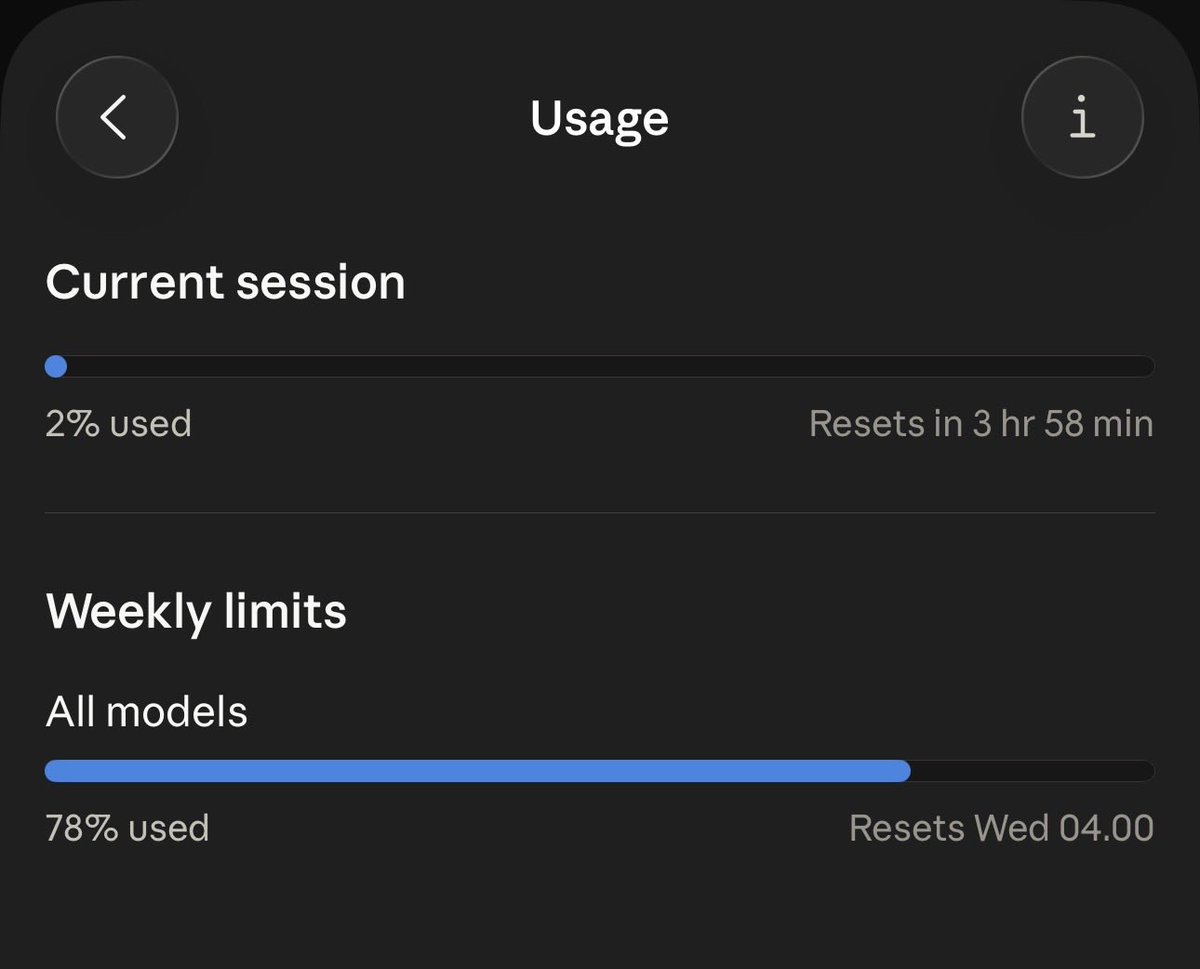

We’ve agreed to a partnership with @SpaceX that will substantially increase our compute capacity. This, along with our other recent compute deals, means that we’ve been able to increase our usage limits for Claude Code and the Claude API.