Carlos Andrés Padilla Jaramillo

@CAPjaramillo

Biologist, Master's in Chemistry

How To Choose a Good Scientific Problem

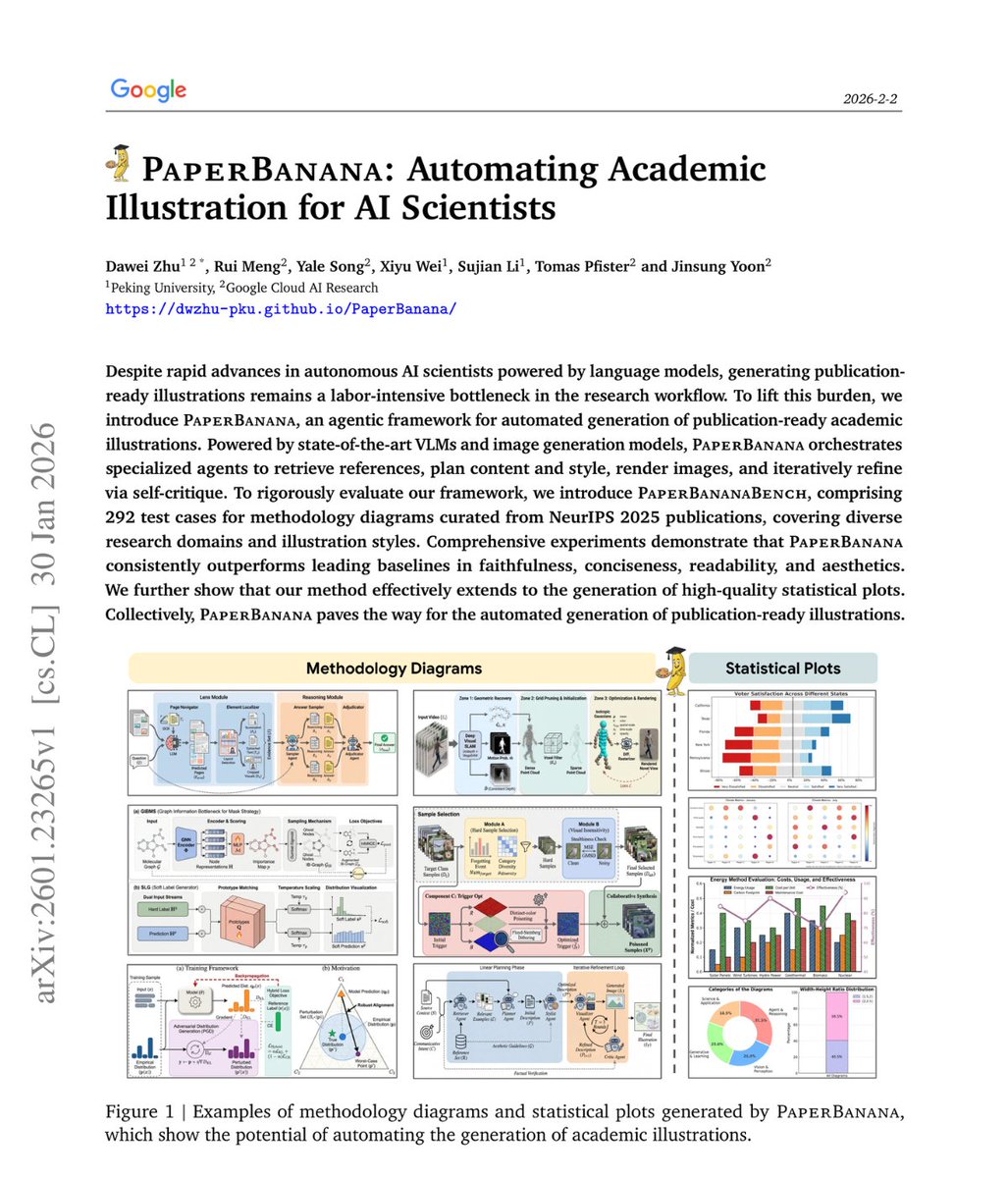

The use of AI in scientific research is evolutionary, not revolutionary. Ever since the pocket calculator, we have been delegating to machines the tasks at which they excel: from arithmetic and simulation to database search, information retrieval, and data analysis. Modern AI tools extend this trend by accelerating information retrieval and pattern recognition, but they do not fundamentally alter the epistemic foundations of scientific inquiry. They change the speed and scale of research, not its nature.

Fitness Landscape for Antibodies 2: Benchmarking Reveals That Protein AI Models Cannot Yet Consistently Predict Developability Properties

1. A new study benchmarks the performance of 30 AI and biophysical models in predicting the developability properties of antibodies. The study finds that most models fail to produce statistically significant correlations for the majority of datasets, highlighting the challenges in using AI for antibody design.
2. The study introduces FLAb2, the largest public therapeutic antibody design benchmark to date, containing data on over 4 million antibodies across 32 studies. It evaluates seven key properties: thermostability, expression, aggregation, binding affinity, pharmacokinetics, polyreactivity, and immunogenicity.
3. The research shows that no single AI model can consistently predict all developability properties. While some models like IgLM, ProGen2, and ESM2 show significant correlations for certain datasets, they fail to generalize across all properties or similar datasets.
4. The study finds that model architecture has less impact on zero-shot performance than the training data composition. Models incorporating protein structure perform better than sequence-only models, indicating that structural information is crucial for accurate predictions.
5. The authors also investigate the germline bias in protein language models, revealing that evolutionary signals significantly influence model predictions. On average, germline edit distance accounts for 40% of the apparent predictive power, suggesting that models rely heavily on evolutionary patterns rather than biophysical mechanisms.
6. Fine-tuning models with sufficient data (10^3 points) can improve performance, but the study shows that even simple one-hot encoding models can match the performance of billion-parameter models when provided with enough developability data.
7. The study concludes that while AI models show promise in certain areas, they are not yet capable of generalizable zero-shot or few-shot prediction of antibody developability. The authors recommend further research to integrate richer sources of information and reduce germline bias.

💻Code: github.com/Graylab/FLAb
📜Paper: biorxiv.org/content/10.648…

#AntibodyDesign #AIBenchmarks #ProteinEngineering #DevelopabilityPrediction
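For readers who want a feel for what a zero-shot benchmark of this kind measures, here is a minimal sketch, not the FLAb2 code. It assumes the metric is a rank (Spearman) correlation between a model's score and a measured property, which the summary above does not specify. The scores and melting temperatures are made-up toy values, and the function names are hypothetical. The `one_hot` helper illustrates the kind of simple sequence encoding mentioned in point 6.

```python
from math import sqrt

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues


def average_ranks(values):
    """Rank values from 1..n; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Plain Pearson correlation of two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / sqrt(vx * vy)


def spearman(x, y):
    """Spearman correlation: Pearson correlation computed on the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


def one_hot(sequence):
    """Flatten a sequence into 20 indicator values per position."""
    vec = []
    for residue in sequence:
        vec.extend(1.0 if residue == ref else 0.0 for ref in AMINO_ACIDS)
    return vec


# Hypothetical toy data: model log-likelihood scores and measured Tm (deg C).
toy_scores = [-1.2, -0.8, -2.5, -0.3, -1.9]
toy_tm = [68.0, 71.5, 60.2, 74.0, 69.0]

rho = spearman(toy_scores, toy_tm)
print(f"Spearman rho on toy data: {rho:.2f}")
```

On real data one would also want a p-value and a check for confounders such as germline edit distance. This sketch leaves both out.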