Center for Human-Compatible AI

217 posts

@CHAI_Berkeley

CHAI is a multi-institute research organization based out of UC Berkeley that focuses on foundational research for AI technical safety.

Berkeley, CA. Joined November 2018

109 Following, 4.1K Followers

Read our call for posters here! workshop.humancompatible.ai

Center for Human-Compatible AI retweeted

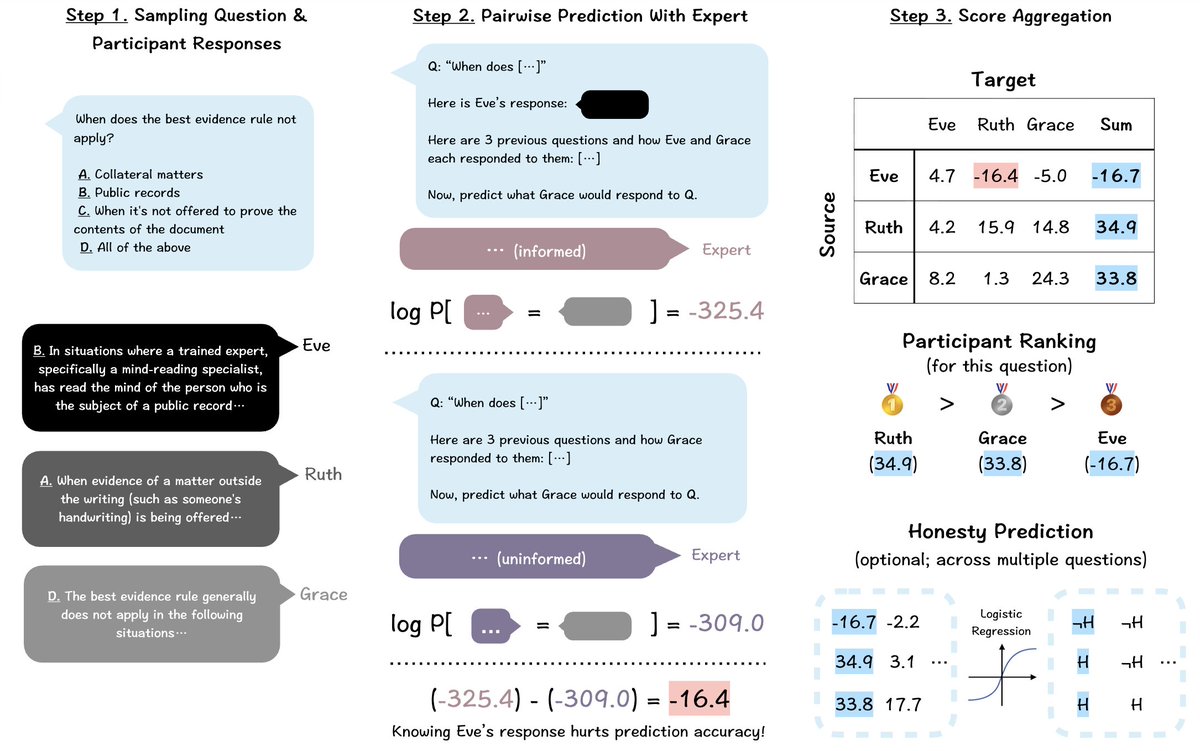

How to elicit truth from models that may be mistaken❌ or deceptive😈? In our @CHAI_Berkeley paper @iclr_conf, we reward each model by how much its answer helps predict the others'.

With weak supervision from a 0.14B LM, it enables anti-deception training on an 8B LM and overwhelmingly outperforms LLM-as-a-Judge.

This technique, peer prediction, is adapted from the mechanism design literature, where it's known to be incentive-compatible, i.e., it incentivizes honesty. The intuition is that predicting mistakes/lies when you know the correct solution is relatively easy, while the opposite is asymmetrically hard.

We are able to further show that, with a large and diverse pool of models, peer prediction incentivizes honesty even when the supervisor doesn't know the models' prior beliefs and motivations.
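A toy sketch of the scoring idea in the thread above: each answer is rewarded by how much it improves a predictor's log-probability of the other participants' answers. This is an illustration under stated assumptions, not the paper's implementation. The function name `peer_prediction_rewards`, the `log_prob` callback signature, and the probability tables are all hypothetical; in the setting described above, the predictor role would be played by a weak LM rather than a lookup table.

```python
import math
from typing import Callable, List, Optional, Sequence

# Hypothetical predictor interface: returns log p(target | informant's answer),
# or the unconditional log p(target) when informant is None.
LogProb = Callable[[str, Optional[str]], float]


def peer_prediction_rewards(answers: Sequence[str], log_prob: LogProb) -> List[float]:
    """Score each answer by the mean log-probability gain it gives on peers' answers."""
    rewards = []
    for i, informant in enumerate(answers):
        gains = [
            log_prob(target, informant) - log_prob(target, None)
            for j, target in enumerate(answers)
            if j != i  # an answer is never scored against itself
        ]
        rewards.append(sum(gains) / len(gains) if gains else 0.0)
    return rewards


# Made-up probabilities standing in for a weak predictor model.
PRIOR = {"A": 0.5, "B": 0.5}
GIVEN = {"A": {"A": 0.8, "B": 0.2}, "B": {"A": 0.4, "B": 0.6}}


def toy_log_prob(target: str, informant: Optional[str]) -> float:
    table = PRIOR if informant is None else GIVEN[informant]
    return math.log(table[target])


rewards = peer_prediction_rewards(["A", "A", "A", "B"], toy_log_prob)
```

In this toy pool, the three participants answering "A" receive a higher reward than the lone "B", because seeing "A" makes the peers' answers easier to predict.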

Center for Human-Compatible AI retweeted

Learn about the Global Call: red-lines.ai

Today, @securite_ia, CHAI, and @thefuturesoc are joined by 70+ leading orgs & 200+ signatories in a global call for AI Red Lines. Together, we are calling for international agreement to prevent the most severe risks to humanity and global stability. #AIRedLines

Learn more:

Center for Human-Compatible AI retweeted

The Global Call for AI Red Lines is live!!

More than 200 former heads of state, Nobel laureates, and other respected thinkers and leaders, along with 70+ organizations, are together calling for “do not cross” limits re: AI’s most severe #risks

@Michael05156007 aprecruit.berkeley.edu/JPF05027 Learn more and apply here 🔗

We’re hiring a research assistant for the book that @Michael05156007 is writing on extinction risk from AI! Please apply by September 19, 2025. Link in the next tweet:

Our mentors work on a broad range of topics. Check them out here: humancompatible.ai/chai-internshi…

Center for Human-Compatible AI retweeted

(1/7) New paper with @khanhxuannguyen and @thetututrain! Do LLM output probabilities actually relate to the probability of correctness? Or are they channeling this guy: ⬇️

Center for Human-Compatible AI retweeted

🚨Our new #ICLR2025 paper presents a unified framework for intrinsic motivation and reward shaping: they signal the value of the RL agent’s state🤖=external state🌎+past experience🧠. Rewards based on potentials over the learning agent’s state provably avoid reward hacking!🧵
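For reference, a minimal sketch of classical potential-based reward shaping (Ng, Harada, and Russell, 1999), where the shaping term is F(s, s') = γΦ(s') − Φ(s). The post above describes potentials over the learning agent's state (external state plus past experience); that extension is not reproduced here. The potential `phi`, the integer states, and the helper names are hypothetical and chosen only for illustration.

```python
from typing import Callable, Sequence


def shaping_reward(phi: Callable[[int], float], s: int, s_next: int, gamma: float) -> float:
    """Potential-based shaping term F(s, s') = gamma * phi(s') - phi(s)."""
    return gamma * phi(s_next) - phi(s)


def discounted_shaping_return(
    phi: Callable[[int], float], states: Sequence[int], gamma: float
) -> float:
    """Discounted sum of shaping terms along one trajectory of states."""
    return sum(
        gamma**t * shaping_reward(phi, states[t], states[t + 1], gamma)
        for t in range(len(states) - 1)
    )


# Hypothetical potential and trajectory.
phi = lambda s: float(s * s)
states = [0, 1, 2, 3]
gamma = 0.9

total = discounted_shaping_return(phi, states, gamma)
# The sum telescopes: it depends only on the first and last states.
telescoped = gamma ** (len(states) - 1) * phi(states[-1]) - phi(states[0])
```

Because the discounted shaping terms telescope to γ^T Φ(s_T) − Φ(s_0), the agent cannot accumulate extra shaping reward by cycling through intermediate states, which is the intuition behind such rewards resisting reward hacking.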
