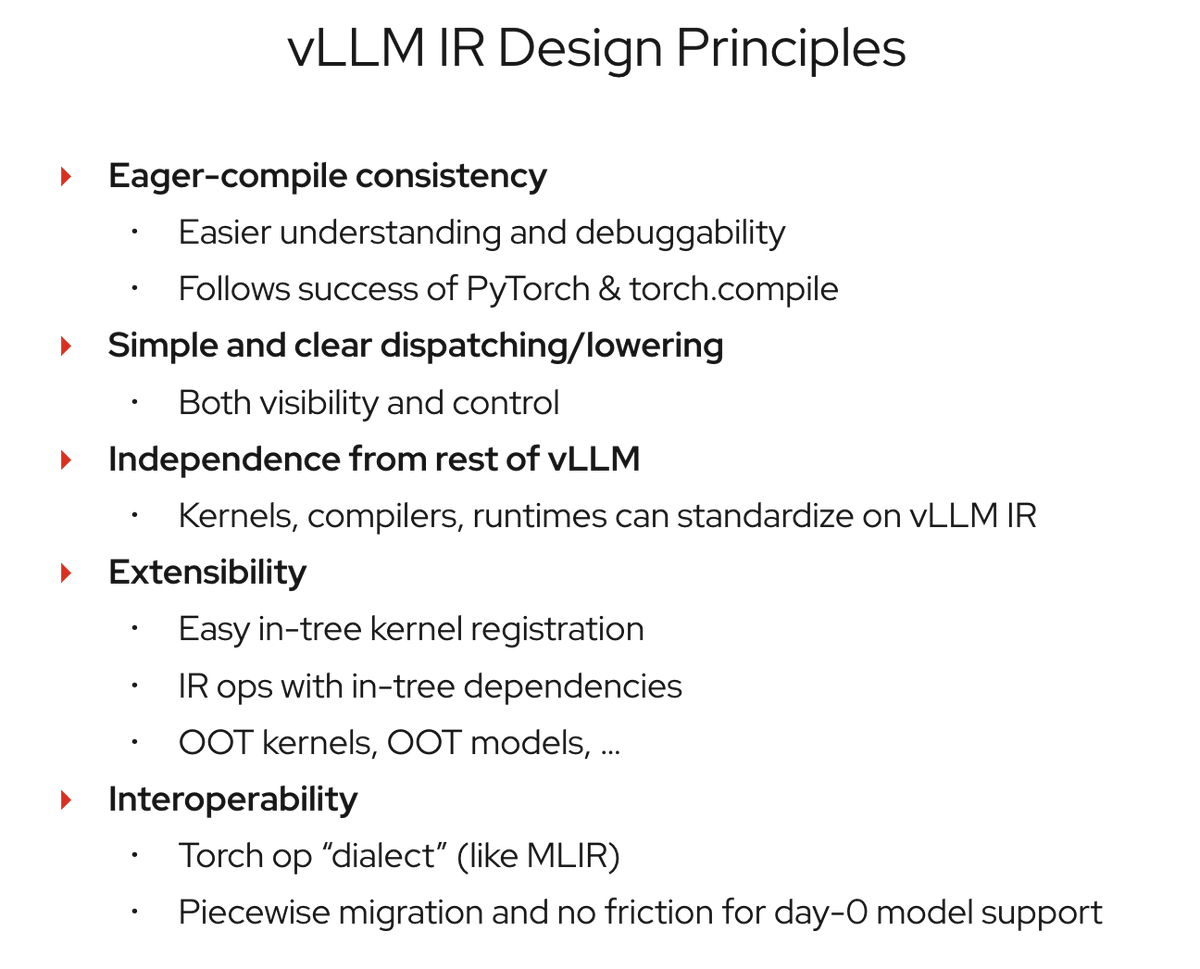

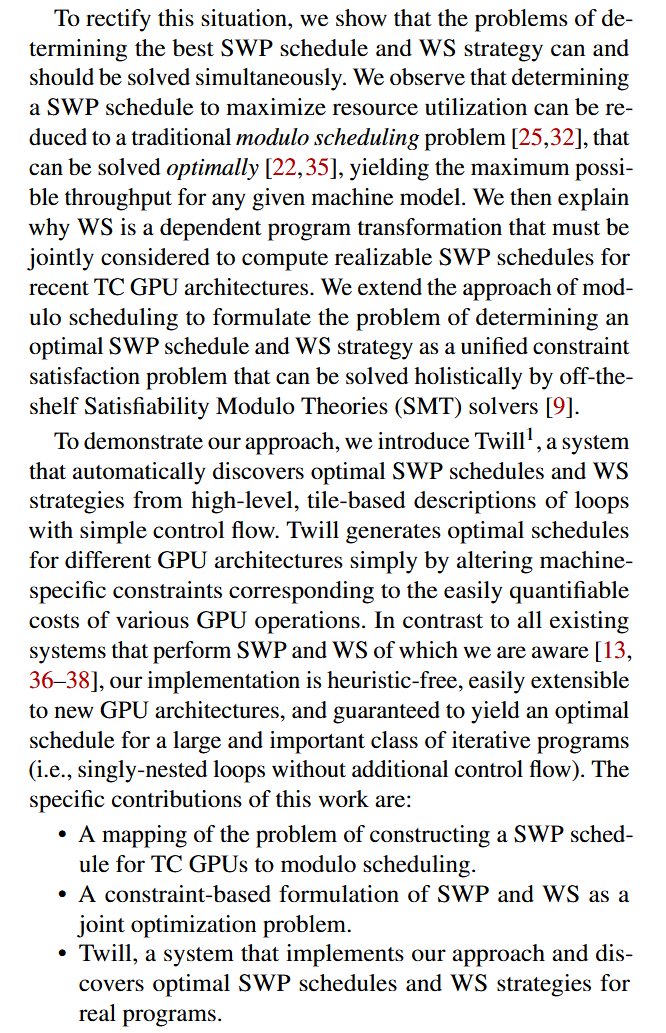

FSD (Supervised) v14.3 (HW4; Models S/3/X/Y/CT) rewrites the AI runtime with MLIR for a 20% faster reaction time and improves emergency-vehicle & small-animal handling — meaning fewer disengagements and safer supervised driving. teslahubs.com/blogs/tips/tes… #FSD