@clattner_llvm Does the solver recover the exact same schedule as flash attention? It would be cool if there were multiple optimal solutions or if it found something faster.

Zeke

FA4 on Blackwell: 14 ops, 5 hardware units, 28 dependency edges. One wrong sync = a race condition sanitizers won't catch. We built a constraint solver that derives the pipeline schedule automatically, in Mojo 🔥 Part 1 of our series is out → modular.com/blog/software-…

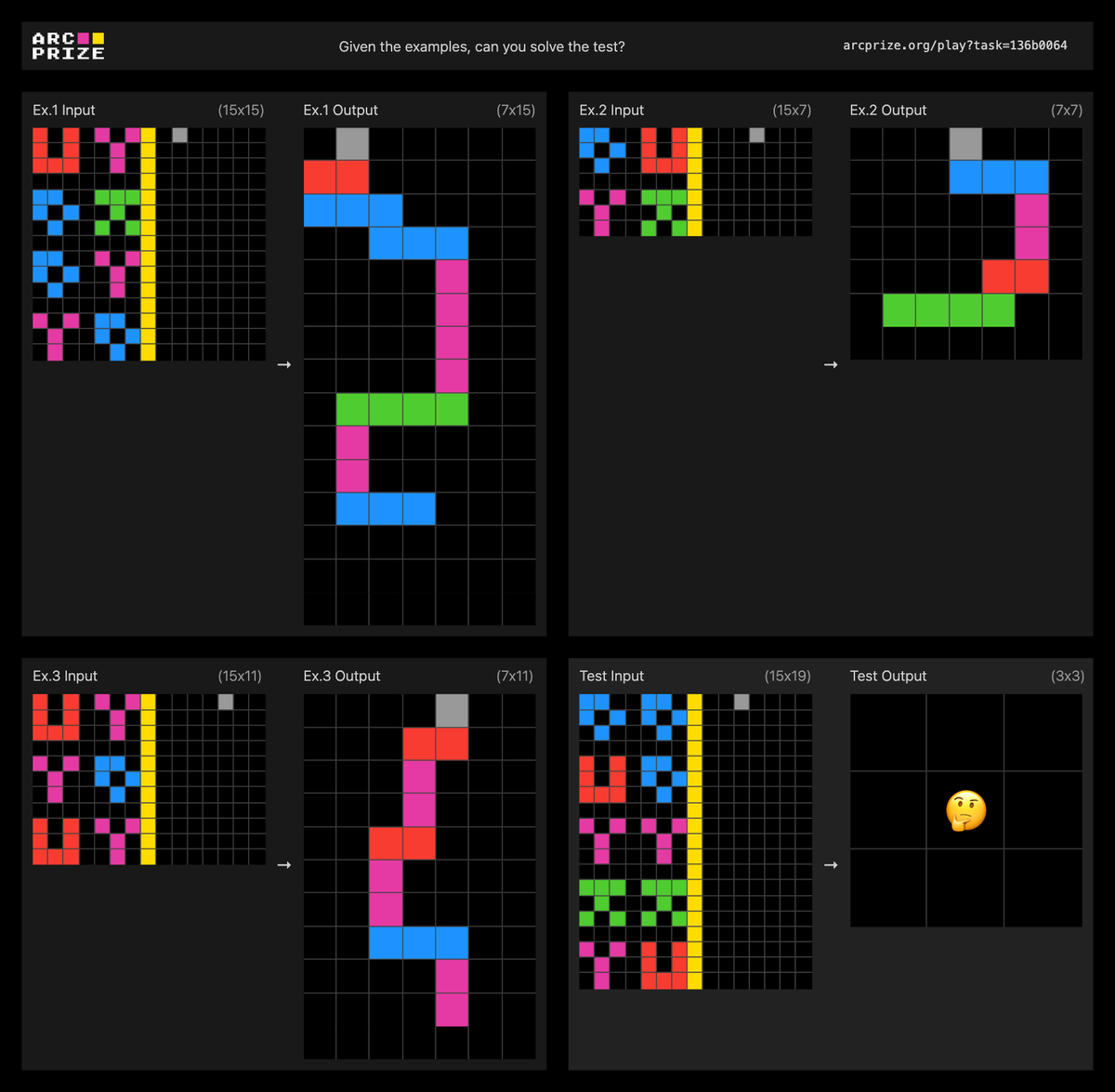

After we got early access to Gemini 3 Pro, it was SOTA, we were impressed and then Google told us, "there's one more thing..." Deep Think sets the new high water mark on ARC-AGI-2