
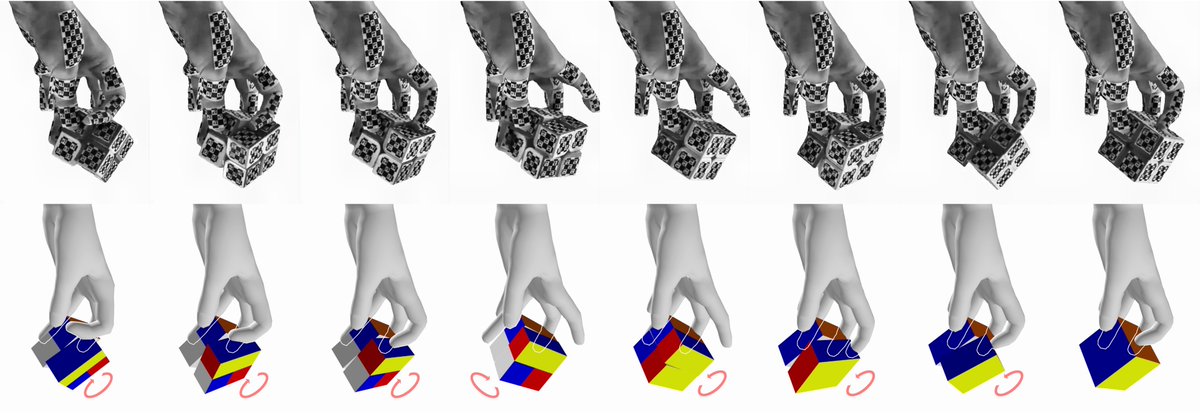

🚀 Introducing GMT, a general motion tracking framework that enables high-fidelity motion tracking on humanoid robots by training a single policy on large, unstructured human motion datasets.

🤖 A step toward general humanoid controllers.

Project Website: gmt-humanoid.github.io

Paper PDF: gmt-humanoid.github.io/resources/gmt.…

Code & Examples: github.com/zixuan417/huma…

This work is co-led by me and @jimazeyu, in collaboration with @xuxin_cheng, @Xuanbin_Peng, @xbpeng4, and @xiaolonw
