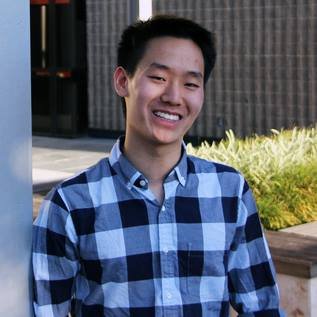

Henry Mao

6.5K posts

@Calclavia

Co-founder/CEO @ https://t.co/jUkhv9ulAd @SmitheryDotAI, backed by @southpkcommons; Prev. co-founded https://t.co/PrvwcJieE7 (exited)

excited to share that the @buildclub_ community is officially live in Singapore in partnership with @singtelinnov8! 🇸🇬 last night, 250 builders gathered to witness the launch. thanks Serena Lam, @picocreator, @Calclavia and more for demoing! if you want to get involved, we have some incredibly exciting things in store (please reply or reach out!)

vibe coding is cooking at home; software engineering is being a professional chef. access to kitchens didn't put restaurants out of business

We're settling the MCP vs. CLI debate by benchmarking Codex and Claude Code across 3 different APIs, totaling 756 runs. We also cover skills, code mode, pretraining bias, and how MCP fits in the broader picture. The results might surprise you👇

The @Auth0 MCP server is now available on @SmitheryDotAI. Manual configs kill velocity. Smithery acts as an AI package manager to remove that friction. Install the server so your AI can handle tenant setup & users. smithery.ai/servers/auth0 Auth0: a0.to/620

i spoke to a founder yesterday - their CTO finally read their agent-made codebase after months and panicked when he realized it was impossible to understand wtf was going on

my rule of thumb is: if your codebase starts written by agents, don’t try to understand it

instead, align at the architectural level before any building happens, and ask the agent to maintain a living architecture diagram of how the system works

there are three altitudes that matter:
- Top-level: architecture
- Mid-level: patterns & abstractions
- Low-level: file-level code

in today’s world, a CTO should be deeply concerned with #1. #2 matters too, but not as critical as #1. if #1 and #2 are dialed in, #3 is where most of the high leverage agentic gains live.

as long as you understand the architecture and critical interfaces, it becomes much easier to reason about ground truth and meaningfully iterate

understanding and informing the architecture / patterns / abstractions give your codebase maximum longevity and agent maintainability

Trying something out, pretty experimental. Get a fully typed SDK (including output schemas!) for *any* of our 5,000+ servers on @SmitheryDotAI. Code mode, gen UI, and any workflow with only one API key needed: your Smithery API key (OAuth built-in!)