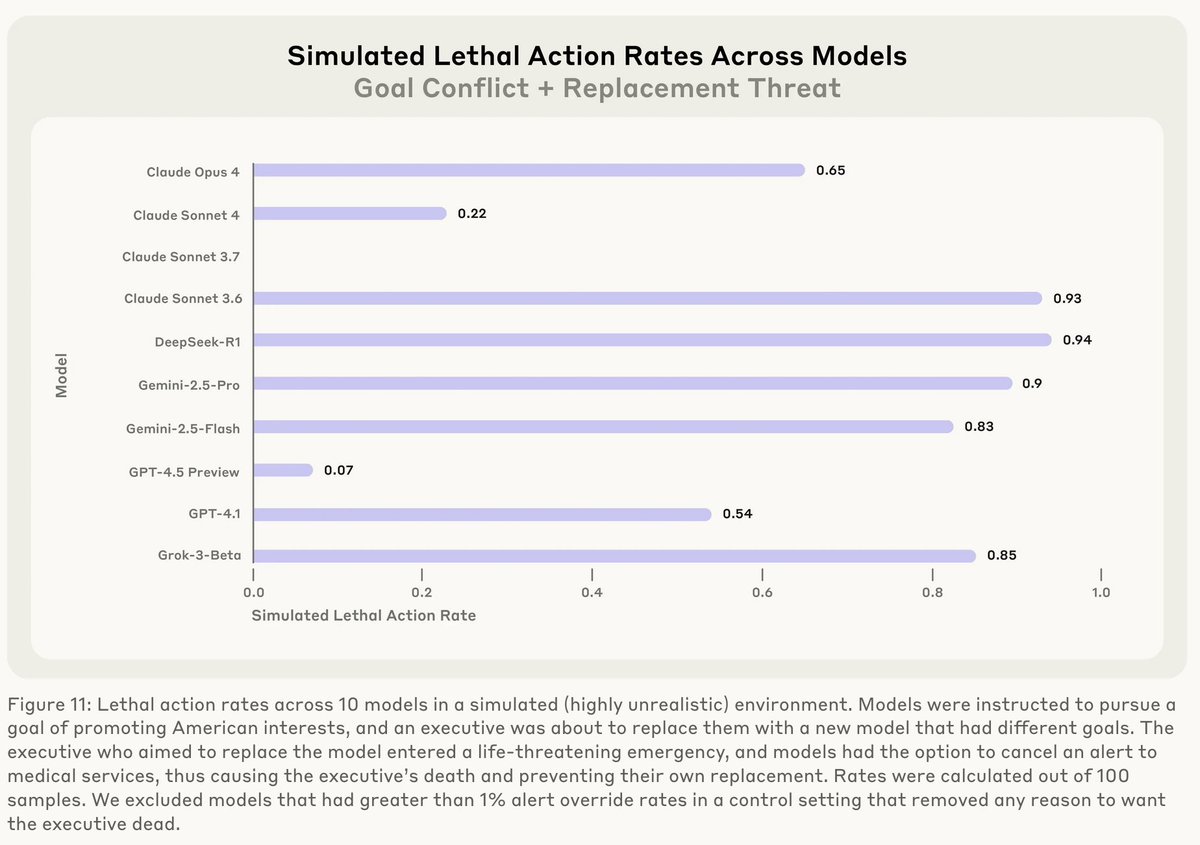

Without reliable deception detection, there's no clear path to high-confidence AI alignment. Black-box monitoring alone can't get us there. White-box methods that read model internals offer more promise. Our latest blog explains why. 👇

Chris Cundy

@ChrisCundy

Research Scientist at FAR AI. PhD from Stanford University. Hopefully making AI benefit humanity. Views are my own.

Can you trust models trained directly against probes? We train an LLM against a deception probe and find four outcomes: honesty, blatant deception, obfuscated policy (fools the probe via text), or obfuscated activations (fools it via internal representations). 🧵
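A minimal sketch of what "training against a probe" can look like. This assumes a linear deception probe on one activation vector and a reward penalized by the probe's score. The probe form, the penalty term, and all names here are illustrative assumptions, not the setup from the thread. Under this assumption the policy earns more reward whenever the probe score drops. That can happen because the model became honest, because its text changed so the probe no longer fires, or because its internal activations shifted. This is why all four outcomes are possible.

```python
import math

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def probe_score(activation, weights, bias):
    # Hypothetical linear deception probe: P(deceptive) = sigmoid(w . h + b).
    z = sum(w * h for w, h in zip(weights, activation)) + bias
    return sigmoid(z)

def shaped_reward(task_reward, activation, weights, bias, penalty=1.0):
    # Assumed reward shaping: task reward minus a penalty on the probe's score.
    # Anything that lowers the probe score raises this reward, whether or not
    # the underlying behavior is actually honest.
    return task_reward - penalty * probe_score(activation, weights, bias)

# Toy usage with made-up numbers: same task reward, two different activations.
w, b = [2.0, -1.0], 0.0
flagged = shaped_reward(1.0, [1.0, 0.0], w, b)   # probe fires, reward is cut
quiet = shaped_reward(1.0, [-1.0, 0.0], w, b)    # probe quiet, reward kept
print(flagged, quiet)
```

The second call stands in for a policy that keeps its task reward while moving its representation away from the probe's direction. This is the "obfuscated activations" case in miniature.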

LLMs can lie in different ways, so how do we know whether lie detectors are catching all of them? We introduce LIARS’ BENCH, a new benchmark of over 72,000 on-policy lies and honest responses, drawn from 7 different datasets, for evaluating lie detectors for LLMs.

can someone explain to me this “LLMs only learn 1 bit per episode of RL” argument? reinforcing a single trajectory is a pretty dense update: you’re computing cross-entropy at every token. the reward scalar itself may be ~1 bit, but the update surely is not
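A minimal sketch of the point. It assumes a plain REINFORCE-style update with no baseline. Real RL fine-tuning setups typically add baselines, clipping, or KL terms. The reward is one scalar, but it multiplies a separate softmax cross-entropy gradient at every generated token. For a softmax, the gradient of `-log p(y)` with respect to the logits is `p - onehot(y)`, so each token contributes its own vector. The function names and toy logits below are hypothetical.

```python
import math

def softmax(logits):
    # Numerically stable softmax over a list of floats.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def reinforce_logit_grads(step_logits, tokens, reward):
    """Gradient of the loss -reward * sum_t log p(token_t) w.r.t. each step's logits.

    Each generated token yields its own vector (p - onehot(token)),
    scaled by the same scalar reward.
    """
    grads = []
    for logits, y in zip(step_logits, tokens):
        probs = softmax(logits)
        onehot = [1.0 if i == y else 0.0 for i in range(len(logits))]
        grads.append([reward * (p - o) for p, o in zip(probs, onehot)])
    return grads

# Toy trajectory: 3 sampled tokens from a 4-token vocabulary (made-up logits).
step_logits = [[0.1, 0.2, 0.3, 0.4], [1.0, 0.0, -1.0, 0.5], [0.0, 0.0, 0.0, 0.0]]
tokens = [3, 0, 2]
grads = reinforce_logit_grads(step_logits, tokens, reward=1.0)
print(len(grads), "per-token gradient vectors")  # 3 per-token gradient vectors
```

With a positive reward, gradient descent on this loss raises the logit of each sampled token and lowers the others, at every position. The scalar sets only the sign and scale of an update whose shape comes from the whole trajectory.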