Kahris

@chrissotraidis

Building things. Overthinking the rest.

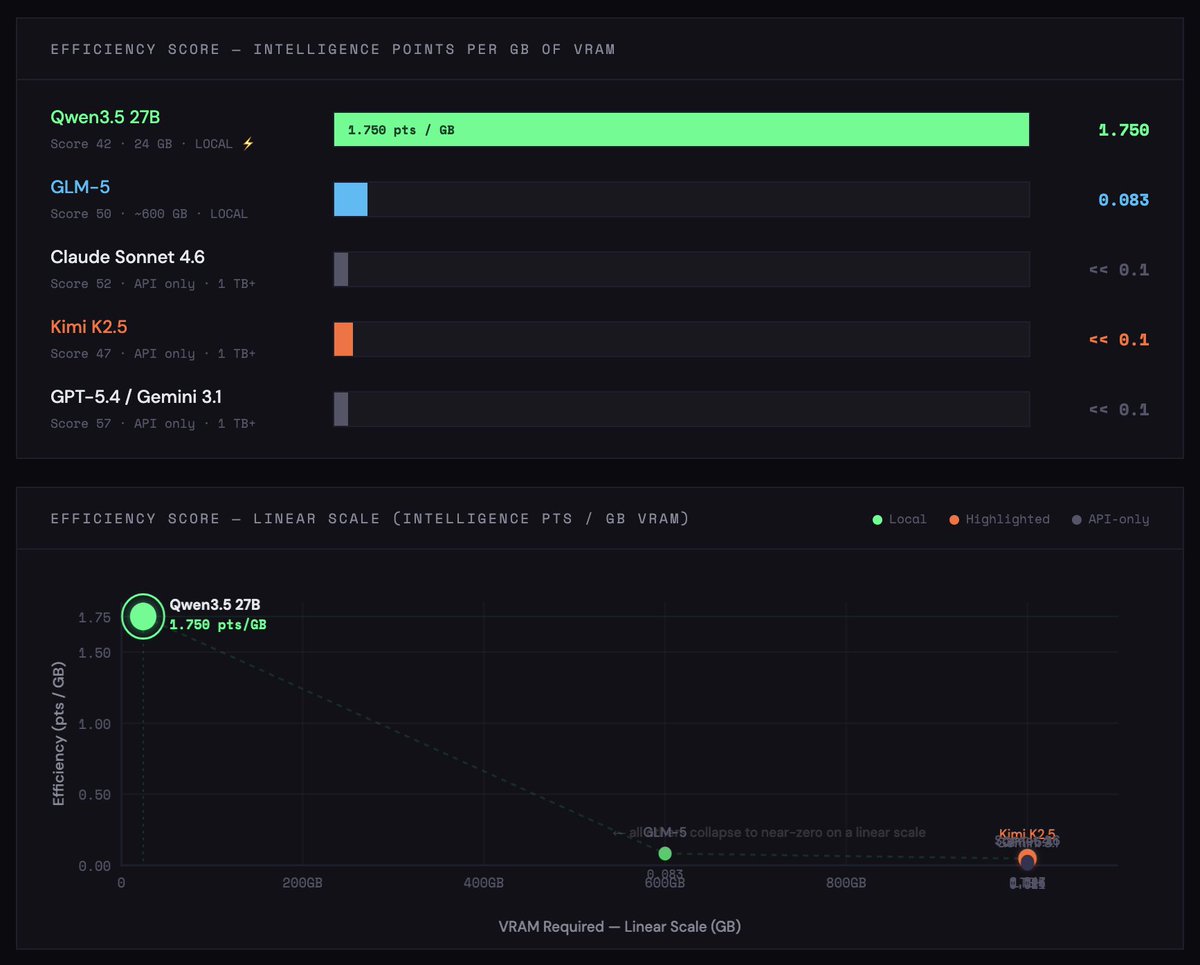

Very bullish on open source and local models.

Imagine running a near-Opus-level model locally on that $600, 16GB Mac Mini you bought last month.

This 27B Qwen3.5 distill was trained on Claude 4.6 Opus reasoning traces and is putting up real numbers:

- beats Claude Sonnet 4.5 on SWE-bench
- keeps 96.91% on HumanEval
- cuts chain-of-thought (CoT) bloat by 24%
- runs in 4-bit quantization

Why this matters: local agent loops get a lot cheaper, faster, and more usable. Frontier models aren’t going to keep subsidizing cheap tokens on subscriptions forever.

300K+ downloads already on Hugging Face. Link below 👇🏻

We’re early.

Find the one that runs on a single 3090. For free, at home, unlimited tokens. 27B will probably be the best release this year.
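A rough back-of-envelope check on the hardware claims above (a 16GB Mac Mini, a single 3090) is sketched below. It assumes exactly 4 bits per parameter and counts model weights only. It ignores the KV cache, activations, OS/runtime overhead, and the extra scale data that real 4-bit formats store. The 16GB and 24GB capacities are treated as GiB, and the function names are hypothetical.

```python
# Back-of-envelope memory check for a quantized 27B model.
# Assumptions: weights only, exactly 4 bits per parameter; ignores the KV
# cache, activations, OS/runtime overhead, and quantization scale metadata.

GIB = 2**30  # bytes per GiB; RAM/VRAM capacities are counted in powers of two


def weight_memory_gib(params_billions: float, bits_per_weight: float) -> float:
    """Approximate storage for the model weights alone, in GiB."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / GIB


def headroom_gib(params_billions: float, bits_per_weight: float, device_gib: float) -> float:
    """Memory left after loading the weights (negative means it does not fit)."""
    return device_gib - weight_memory_gib(params_billions, bits_per_weight)


if __name__ == "__main__":
    weights = weight_memory_gib(27, 4)
    print(f"27B @ 4-bit: ~{weights:.1f} GiB of weights")
    for device, mem in [("16GB Mac Mini", 16), ("RTX 3090 (24GB)", 24)]:
        print(f"{device}: ~{headroom_gib(27, 4, mem):.1f} GiB left for KV cache, OS, etc.")
```

Under these assumptions the weights alone take about 12.6 GiB. That leaves roughly 3.4 GiB on the 16GB Mac, which is also shared with macOS, and about 11.4 GiB on the 3090. This is why the 3090 has more room for longer contexts.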

My next longevity experiment: 5-MeO-DMT.