Chris Staudinger

10.7K posts

@ChrisStaud

Co-Founder of Level Up Coding

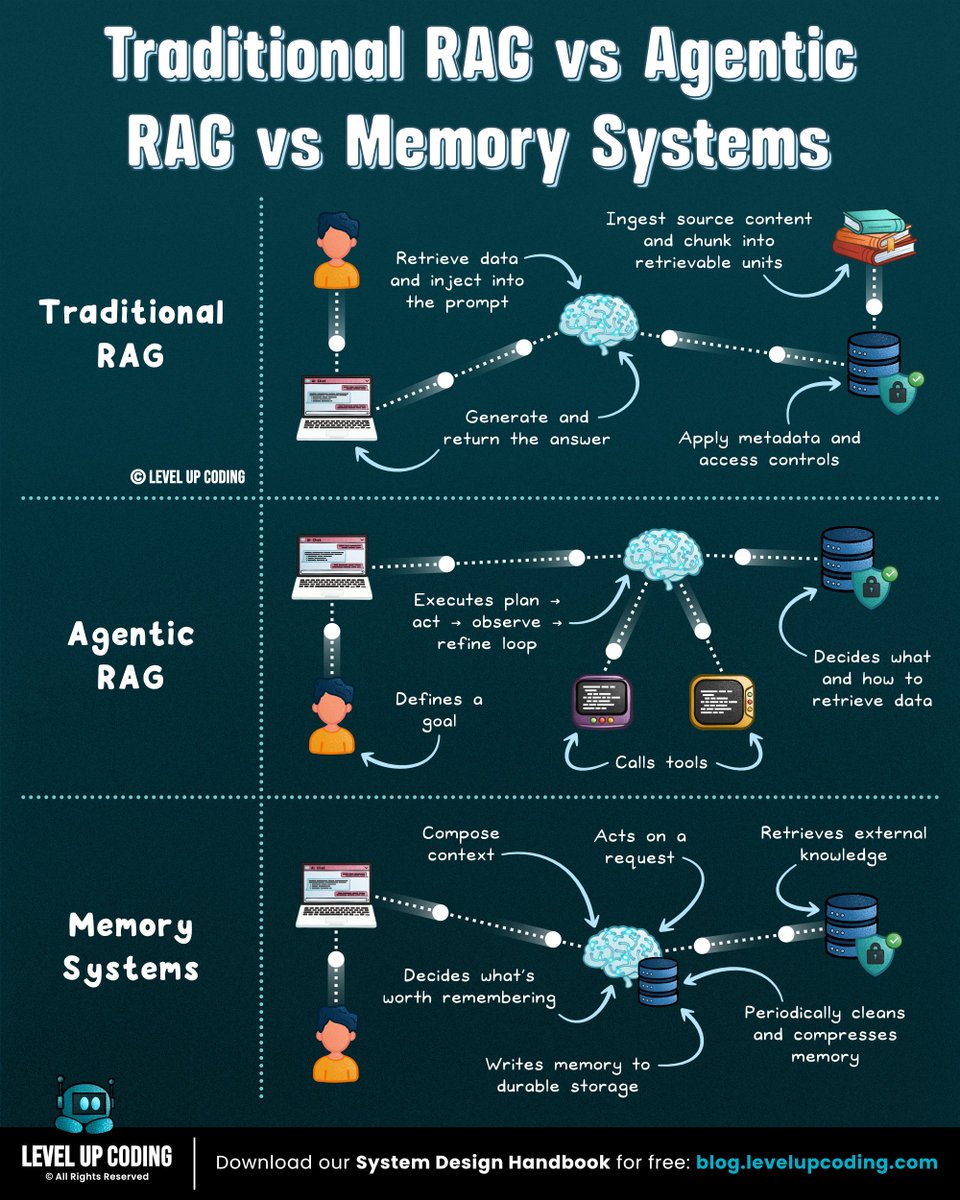

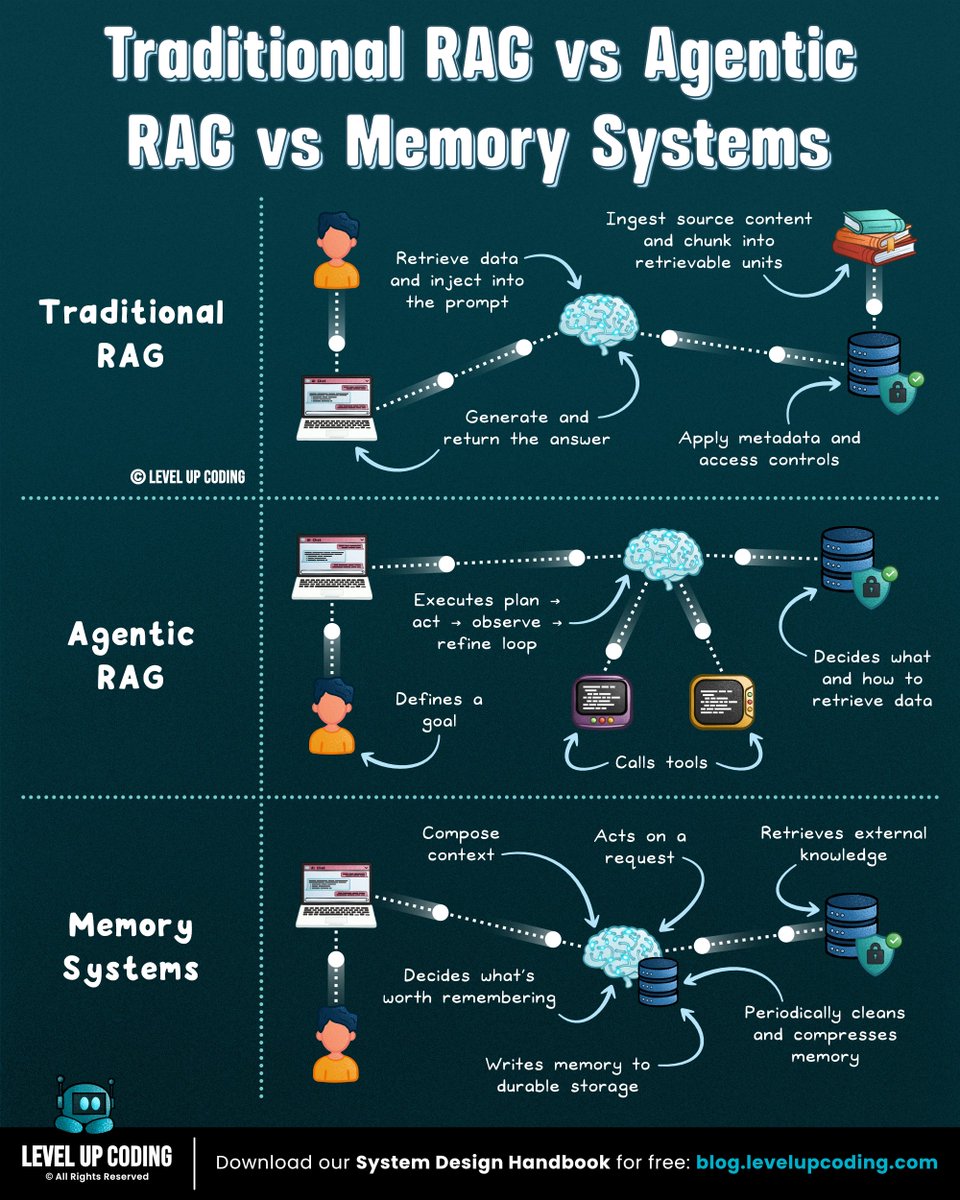

Traditional RAG vs Agentic RAG vs Memory Systems

Most people mix these up:

Traditional RAG → Agentic RAG → Memory Systems

Each step solves a different limitation. Here’s the breakdown (save this):

𝟭) 𝗧𝗿𝗮𝗱𝗶𝘁𝗶𝗼𝗻𝗮𝗹 𝗥𝗔𝗚

The classic way to ground LLMs.

Pipeline: Retrieve → Inject context → Generate answer

Best for docs and knowledge search. But it has a key limitation: every request is stateless.

Here’s a great piece explaining why this stateless design creates problems for agents: lucode.co/agent-memory-a…

𝟮) 𝗔𝗴𝗲𝗻𝘁𝗶𝗰 𝗥𝗔𝗚

Now the system adds an agent loop. Instead of a single retrieval step, the model can:

Plan → Retrieve → Observe → Refine → Repeat

Agentic RAG is great for complex problem solving. But there’s still a gap: once the session ends, the agent loses all context.

𝟯) 𝗠𝗲𝗺𝗼𝗿𝘆 𝗦𝘆𝘀𝘁𝗲𝗺𝘀

This is where the future is heading. Instead of stateless assistants, you build stateful agents. They can:

↳ Write memories: store facts and decisions from interactions
↳ Consolidate knowledge: summarize long histories
↳ Recall context: retrieve important memories later

Best for assistants that need to remember users, decisions, and ongoing work.

In short: RAG retrieves knowledge. Memory preserves context.

Teams building agents are discovering the same issue: the hardest problem isn’t reasoning. It’s memory.

Here’s a great article that breaks down this problem and how to fix it: lucode.co/agent-memory-a…

What else would you add?

♻️ Repost to help others learn AI.

🙏 Thanks to @Oracle for sponsoring this post.
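To make the three stages concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: `retrieve` uses naive word overlap in place of a real vector search, `generate` is a stub standing in for an LLM call, and `DOCS`, `MemoryStore`, and the "summary:" consolidation format are hypothetical names, not anything from the linked article.

```python
from __future__ import annotations
from dataclasses import dataclass, field

# Hypothetical toy corpus standing in for a document index.
DOCS = [
    "RAG retrieves documents and injects them into the prompt.",
    "Agentic RAG lets the model plan and retrieve in a loop.",
    "Memory systems store facts across sessions.",
]

def retrieve(query: str, corpus: list[str], k: int = 1) -> list[str]:
    """Rank entries by how many lowercase words they share with the query."""
    words = set(query.lower().split())
    ranked = sorted(corpus, key=lambda d: len(words & set(d.lower().split())),
                    reverse=True)
    return ranked[:k]

def generate(question: str, context: list[str]) -> str:
    """Stub for an LLM call: just echoes the question and injected context."""
    return f"Q: {question} | context: {' '.join(context)}"

def traditional_rag(question: str) -> str:
    # Retrieve -> inject context -> generate. Nothing survives the call.
    return generate(question, retrieve(question, DOCS))

def agentic_rag(question: str, max_steps: int = 3) -> str:
    # Plan -> retrieve -> observe -> refine -> repeat, within one session.
    query, seen = question, []
    for _ in range(max_steps):
        hits = [d for d in retrieve(query, DOCS, k=2) if d not in seen]
        if not hits:            # observe: nothing new was found, so stop
            break
        seen.extend(hits)
        query = question + " " + hits[-1]   # refine the next query
    return generate(question, seen)

@dataclass
class MemoryStore:
    """State that outlives a single request or session."""
    memories: list[str] = field(default_factory=list)

    def write(self, fact: str) -> None:
        self.memories.append(fact)

    def consolidate(self, keep: int = 2) -> None:
        # Stand-in for summarization: fold older entries into one line.
        if len(self.memories) > keep:
            older = self.memories[:-keep]
            self.memories = ["summary: " + "; ".join(older)] + self.memories[-keep:]

    def recall(self, query: str, k: int = 1) -> list[str]:
        return retrieve(query, self.memories, k)
```

In this sketch, `traditional_rag` and `agentic_rag` start from scratch on every call, while a `MemoryStore` instance can be kept (or persisted) between sessions, which is the gap the post describes.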


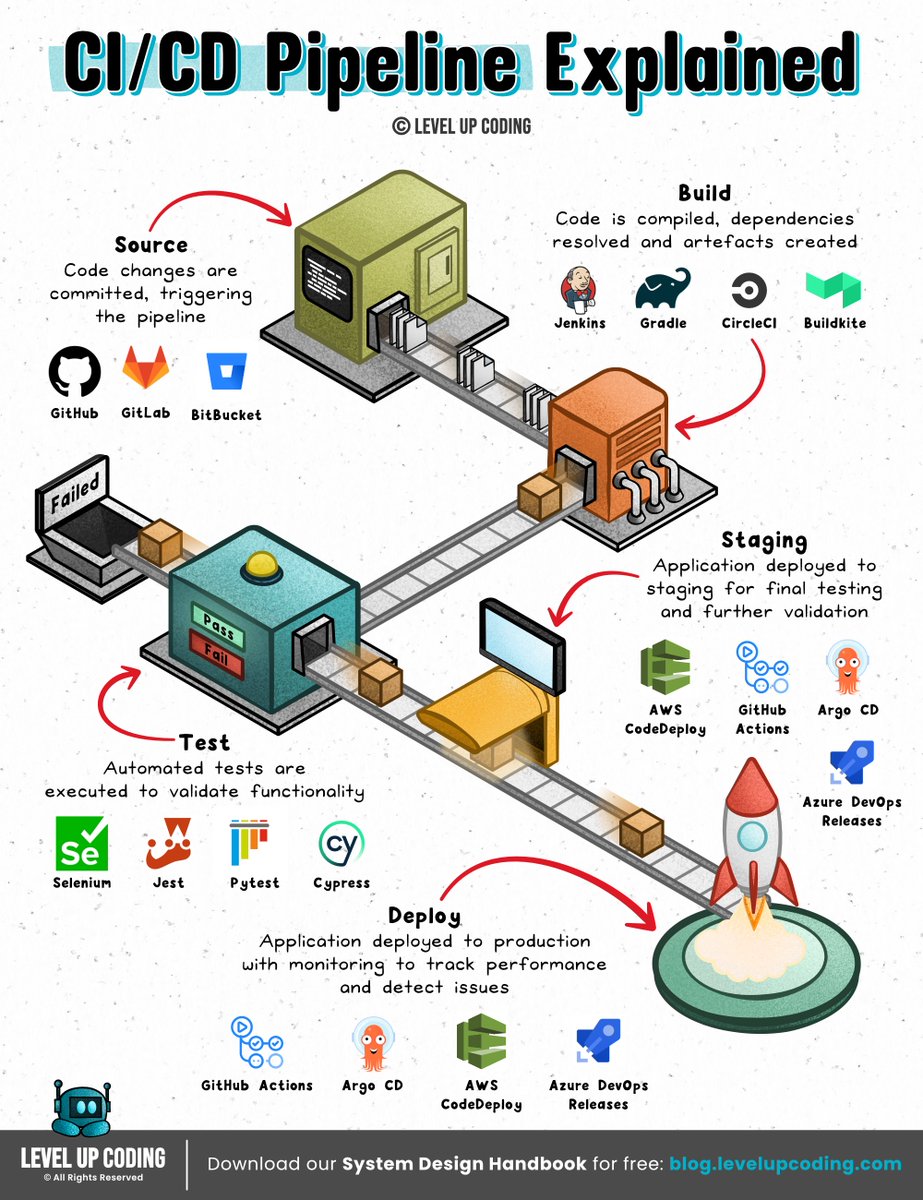

Most developers picture the process like this:

Plan → Build → Test → Release

But every stage depends on something often overlooked.

That process is typically described as the software development life cycle (SDLC):

𝟭. 𝗣𝗹𝗮𝗻
↳ Define requirements and architecture

𝟮. 𝗕𝘂𝗶𝗹𝗱
↳ Implement features and integrate systems

𝟯. 𝗧𝗲𝘀𝘁
↳ Validate behavior, performance, and reliability

𝟰. 𝗥𝗲𝗹𝗲𝗮𝘀𝗲
↳ Deploy safely to production

𝟱. 𝗠𝗮𝗶𝗻𝘁𝗲𝗻𝗮𝗻𝗰𝗲
↳ Monitor systems, fix issues, and iterate

Every one of these stages ultimately depends on the same foundation: a reliable development environment.

𝗜𝗳 𝗲𝗻𝘃𝗶𝗿𝗼𝗻𝗺𝗲𝗻𝘁𝘀 𝗱𝗶𝗳𝗳𝗲𝗿 between developers, CI, and production, teams start seeing:

→ broken CI pipelines
→ onboarding delays
→ dependency conflicts
→ “works on my machine” bugs

That’s why many teams are starting to treat environments as 𝗽𝗮𝗿𝘁 𝗼𝗳 𝘁𝗵𝗲 𝗦𝗗𝗟𝗖 𝗶𝘁𝘀𝗲𝗹𝗳, not just a setup step.

Tools like Flox help with this by letting teams define reproducible development environments, so the same setup runs across developers, CI, and production.

Because when environments are reproducible, the SDLC becomes much smoother from build to release.

Try it out for free: lucode.co/flox-z7xd

What else would you add?

♻️ Repost to help others learn and grow.

🙏 Thanks to @floxdevelopment for sponsoring this post.

➕ Follow Nikki Siapno to become good at system design.
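As a rough illustration of what "environments differ" means in practice, the sketch below compares pinned package versions across environments and reports any that disagree. It is a hypothetical helper, not how Flox works: the `find_drift` name, the environment labels, and the version data are all made up for the example.

```python
from __future__ import annotations

def find_drift(envs: dict[str, dict[str, str]]) -> dict[str, dict[str, str | None]]:
    """Return packages whose version is not identical in every environment.

    `envs` maps an environment name to its {package: version} pins.
    A package missing from an environment is reported with version None.
    """
    packages = set().union(*(pins.keys() for pins in envs.values()))
    drift = {}
    for pkg in sorted(packages):
        versions = {name: pins.get(pkg) for name, pins in envs.items()}
        if len(set(versions.values())) > 1:   # at least two environments disagree
            drift[pkg] = versions
    return drift

# Hypothetical pins: production lags behind on one dependency.
ENVS = {
    "dev":  {"requests": "2.31.0", "numpy": "1.26.4"},
    "ci":   {"requests": "2.31.0", "numpy": "1.26.4"},
    "prod": {"requests": "2.28.0", "numpy": "1.26.4"},
}
```

Calling `find_drift(ENVS)` flags `requests` only, since `numpy` matches everywhere. A mismatch like that is the kind of thing that tends to surface later as a “works on my machine” bug.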

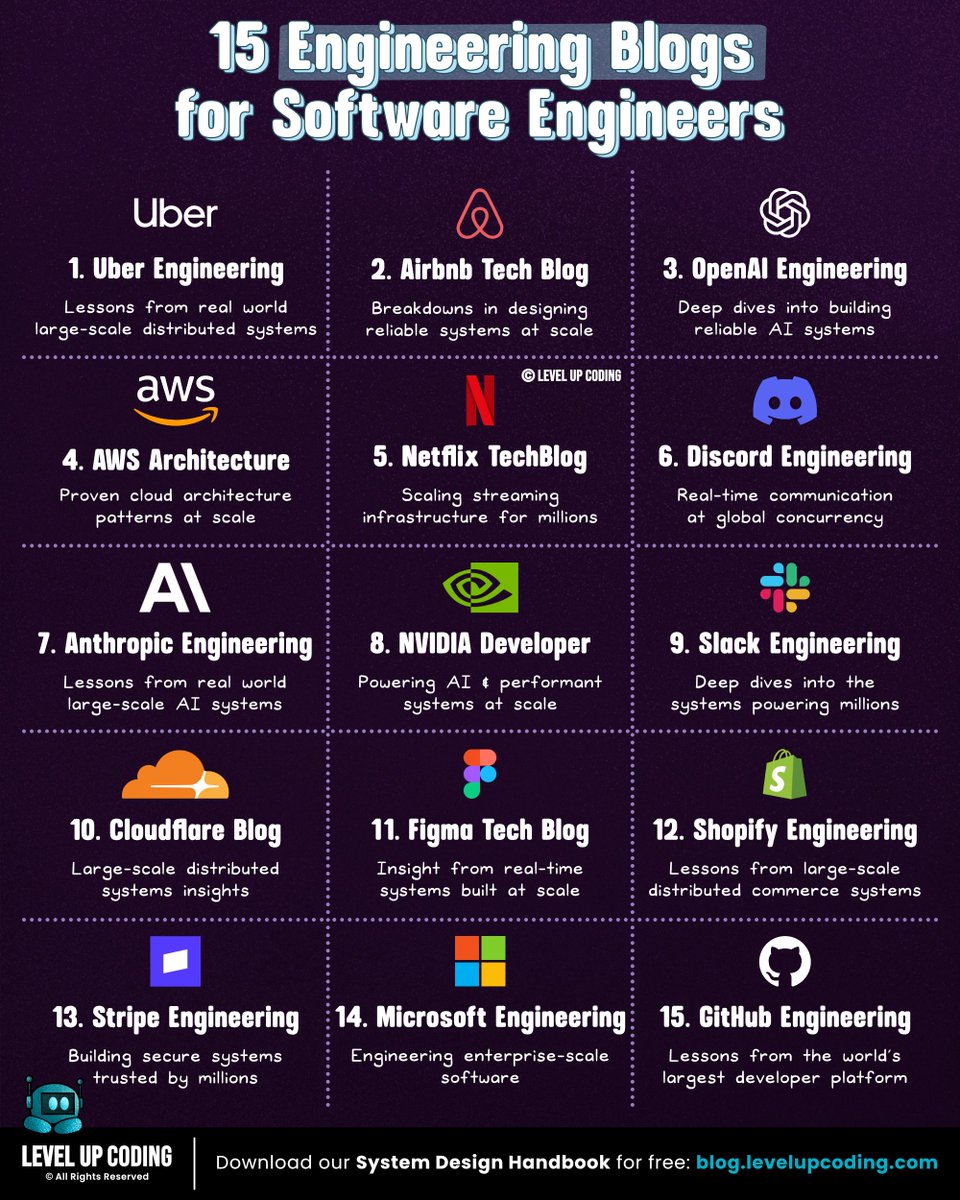

15 blogs that will help you become a better engineer:

1. Uber Engineering: uber.com/blog/engineeri…
2. Airbnb Tech Blog: airbnb.tech/blog
3. OpenAI Engineering: openai.com/news/engineeri…
4. AWS Architecture: aws.amazon.com/blogs/architec…
5. Netflix TechBlog: netflixtechblog.com
6. Discord Engineering: discord.com/category/engin…
7. Anthropic Engineering: anthropic.com/engineering
8. NVIDIA Developer: developer.nvidia.com/blog
9. Slack Engineering: slack.engineering
10. Cloudflare Blog: blog.cloudflare.com/tag/engineering
11. Figma Tech Blog: figma.com/blog/engineeri…
12. Shopify Engineering: shopify.engineering
13. Stripe Engineering: stripe.com/blog/engineeri…
14. Microsoft Engineering: devblogs.microsoft.com/engineering-at…
15. GitHub Engineering: github.blog/engineering

Bonus (system design deep dives)
Level Up Coding: lucode.co/luc-system-des…

Bonus two: Get our 𝟭𝟰𝟮-𝗽𝗮𝗴𝗲 𝗦𝘆𝘀𝘁𝗲𝗺 𝗗𝗲𝘀𝗶𝗴𝗻 𝗛𝗮𝗻𝗱𝗯𝗼𝗼𝗸 covering 𝟱𝟭 𝘀𝘆𝘀𝘁𝗲𝗺 𝗱𝗲𝘀𝗶𝗴𝗻 𝗰𝗼𝗻𝗰𝗲𝗽𝘁𝘀 for 𝗙𝗥𝗘𝗘 when you join our newsletter.

Join 30,001+ engineers: lucode.co/system-design-…

What other blogs should be on this list?

🔖 Save for later.

♻️ Repost to help other engineers learn and grow.

Concepts every developer should know: concurrency is 𝗡𝗢𝗧 parallelism.

These terms are often used interchangeably. But they describe very different ideas.

As Rob Pike (one of the creators of Go) put it:

𝗖𝗼𝗻𝗰𝘂𝗿𝗿𝗲𝗻𝗰𝘆 is about 𝙙𝙚𝙖𝙡𝙞𝙣𝙜 𝙬𝙞𝙩𝙝 lots of things at once.
𝗣𝗮𝗿𝗮𝗹𝗹𝗲𝗹𝗶𝘀𝗺 is about 𝙙𝙤𝙞𝙣𝙜 lots of things at once.

Here’s the difference in practice:

Concurrency
↳ Multiple tasks are in progress
↳ Work is interleaved to use resources efficiently

Parallelism
↳ Multiple tasks are executing simultaneously
↳ Work happens at the same time across cores or systems

In other words: concurrency helps manage complexity, while parallelism increases throughput.

We’re starting to see this same concept appear in AI-powered development workflows.

Traditionally, AI coding agents worked sequentially: you request a change → the agent plans → then builds → then you move to the next step.

But new systems are moving toward parallel execution. With Replit Agent 4, tasks can run through parallel agents, allowing different parts of a project to move forward at the same time.

↳ Plan features in separate threads
↳ Execute tasks in parallel
↳ Continue designing while work runs in the background

The result: a workflow that feels much closer to parallelism than step-by-step execution.

Less waiting. More building.

Try it out for free: lucode.co/replit-a4z7xd

What else would you add?

--

📌 Save for later.

♻️ Repost to help others learn and grow.

🙏 Thanks to @Replit for sponsoring this post.

➕ Follow Nikki Siapno + turn on notifications.
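The distinction is easier to see in code. The Python sketch below is one way to illustrate it, under stated assumptions: `asyncio` stands in for concurrency (two tasks interleaved on a single thread), and `ProcessPoolExecutor` stands in for parallelism (work spread across CPU cores). The function names and task sizes are made up for the example.

```python
import asyncio
from concurrent.futures import ProcessPoolExecutor

async def task(name: str, steps: int, log: list) -> None:
    """Record each step, then yield so another task can make progress."""
    for i in range(steps):
        log.append(f"{name}{i}")
        await asyncio.sleep(0)   # hand control back to the event loop

async def run_concurrently() -> list:
    # Concurrency: both tasks are in progress, but only one runs at any
    # instant. Their steps interleave on a single thread.
    log: list = []
    await asyncio.gather(task("A", 2, log), task("B", 2, log))
    return log

def square(n: int) -> int:
    """CPU-bound stand-in for real work."""
    return n * n

def run_in_parallel(numbers: list) -> list:
    # Parallelism: separate worker processes can execute square() at the
    # same time on different cores. Results come back in input order.
    with ProcessPoolExecutor() as pool:
        return list(pool.map(square, numbers))
```

`asyncio.run(run_concurrently())` returns the interleaved log `['A0', 'B0', 'A1', 'B1']`. `run_in_parallel([1, 2, 3])` returns `[1, 4, 9]`; call it from a script under an `if __name__ == "__main__":` guard, since worker processes may re-import the main module on some platforms.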

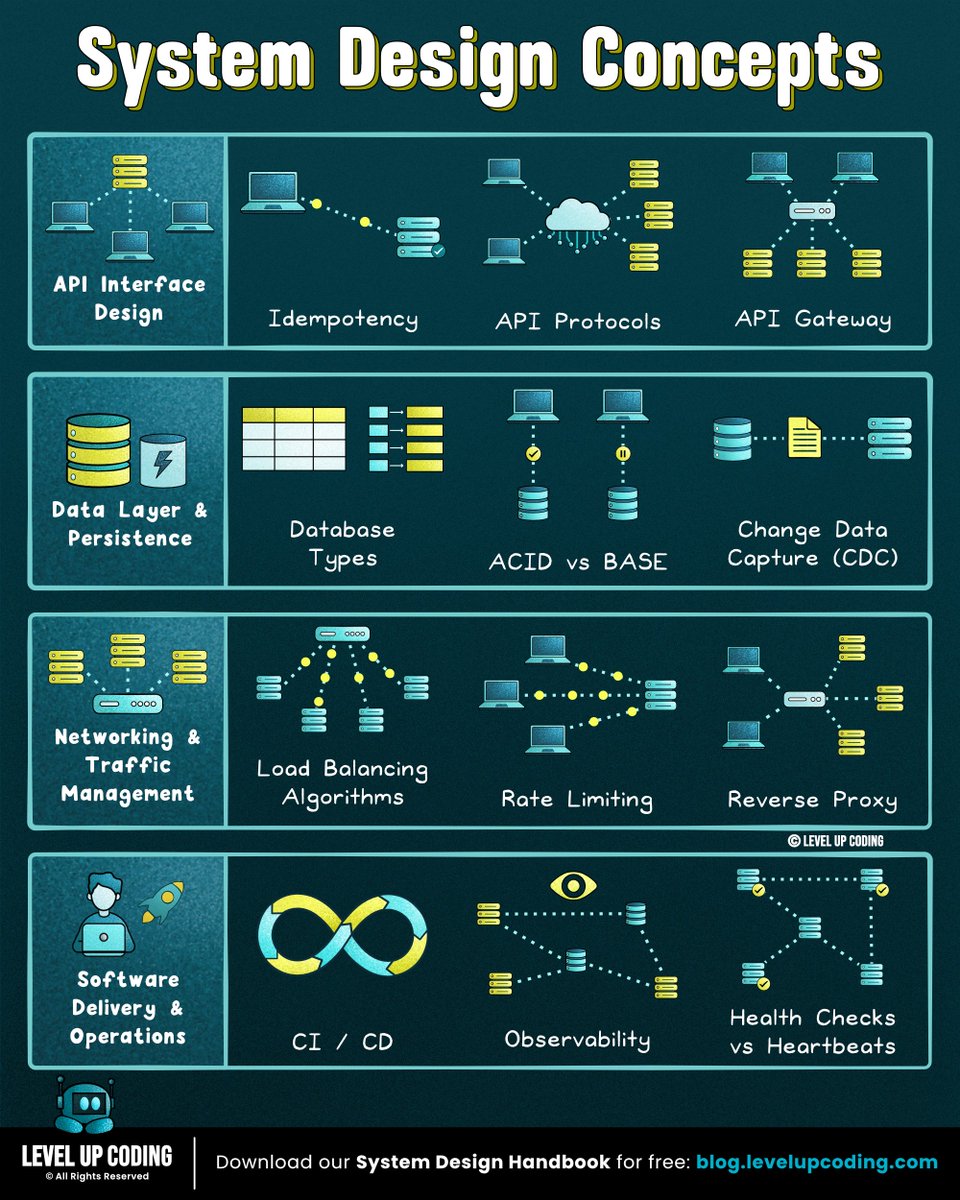

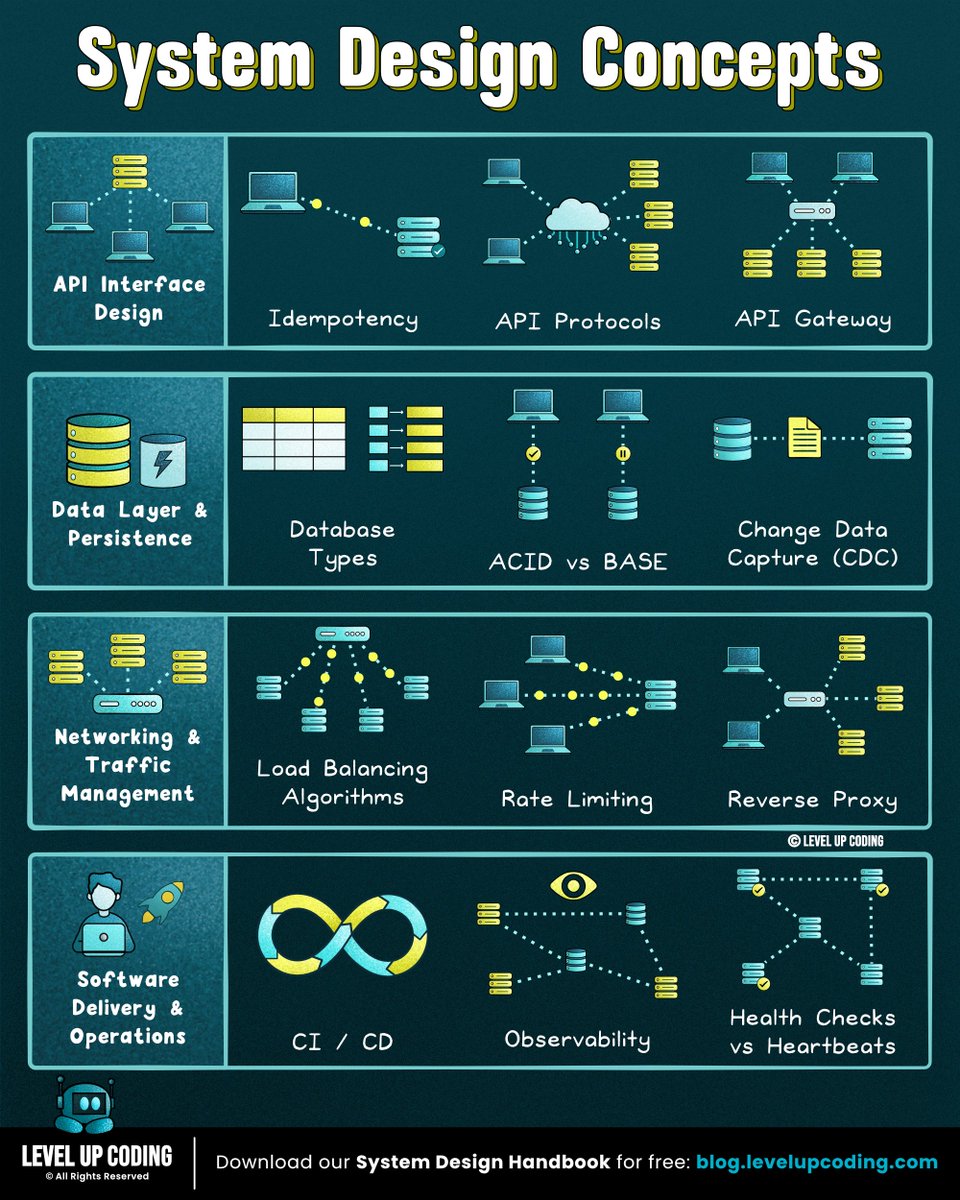

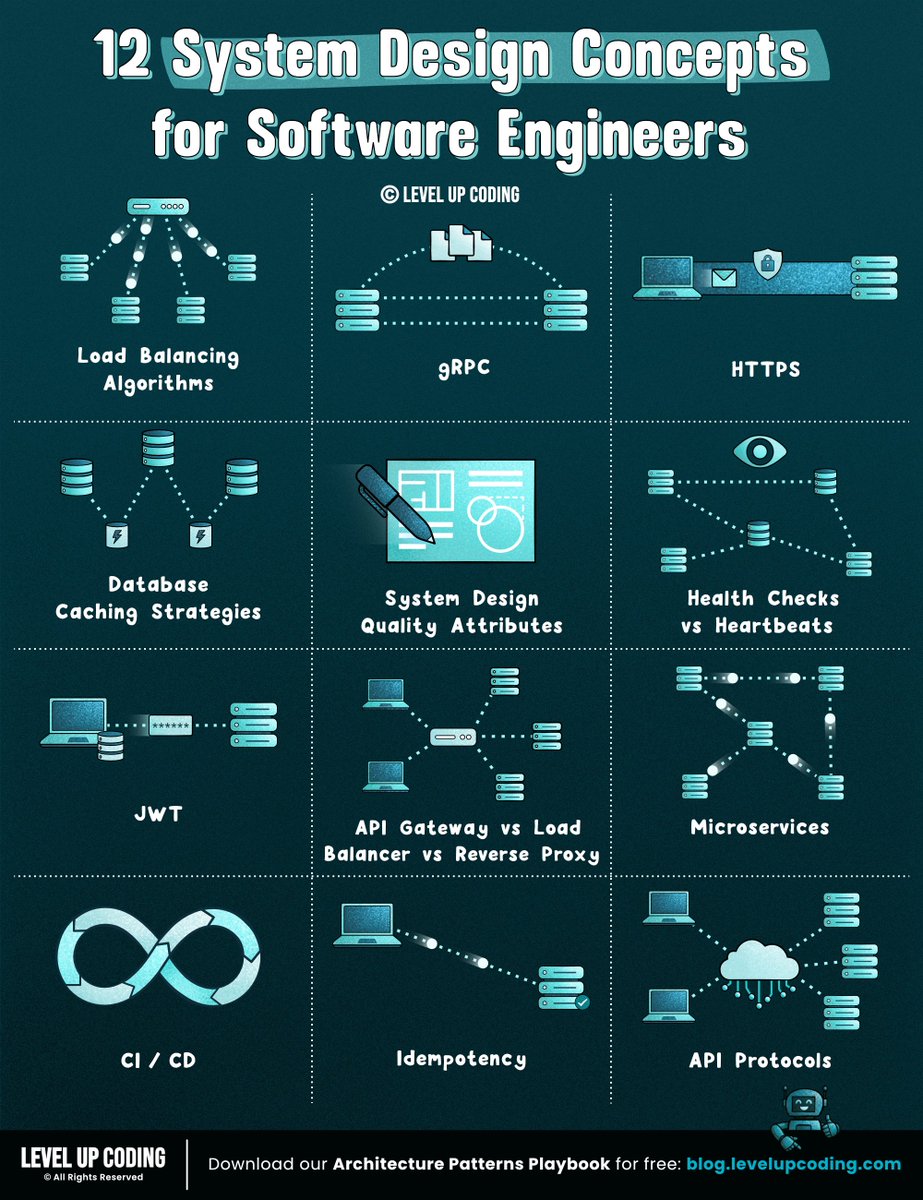

12 System design concepts engineers should know:

1. Load balancing algorithms explained ↳ lucode.co/load-balancing…
2. gRPC clearly explained ↳ lucode.co/grpc-explained…
3. How HTTPS actually works ↳ lucode.co/https-explaine…
4. Database caching strategies ↳ lucode.co/database-cachi…
5. System design quality attributes ↳ lucode.co/system-design-…
6. Health checks vs heartbeats ↳ lucode.co/health-checks-…
7. CI/CD pipelines ↳ lucode.co/ci-cd-lil1nlsm
8. API gateway vs load balancer vs reverse proxy ↳ lucode.co/api-gateway-vs…
9. Microservices clearly explained ↳ lucode.co/microservices-…
10. How JWT works ↳ lucode.co/json-web-token…
11. Idempotency in API design ↳ lucode.co/idempotency-in…
12. API protocols made simple ↳ lucode.co/api-architectu…

What else should make the list? What concepts would you like me to cover?

👋 PS: Get our System Design Handbook FREE when you join our newsletter.

Join 30,001+ engineers: lucode.co/system-design-…

--

📌 Save for later.

♻️ Repost to help other engineers learn system design.

➕ Follow Nikki Siapno + turn on notifications.