Pinned Tweet

Chris Koch

1.3K posts

@Chris____Koch

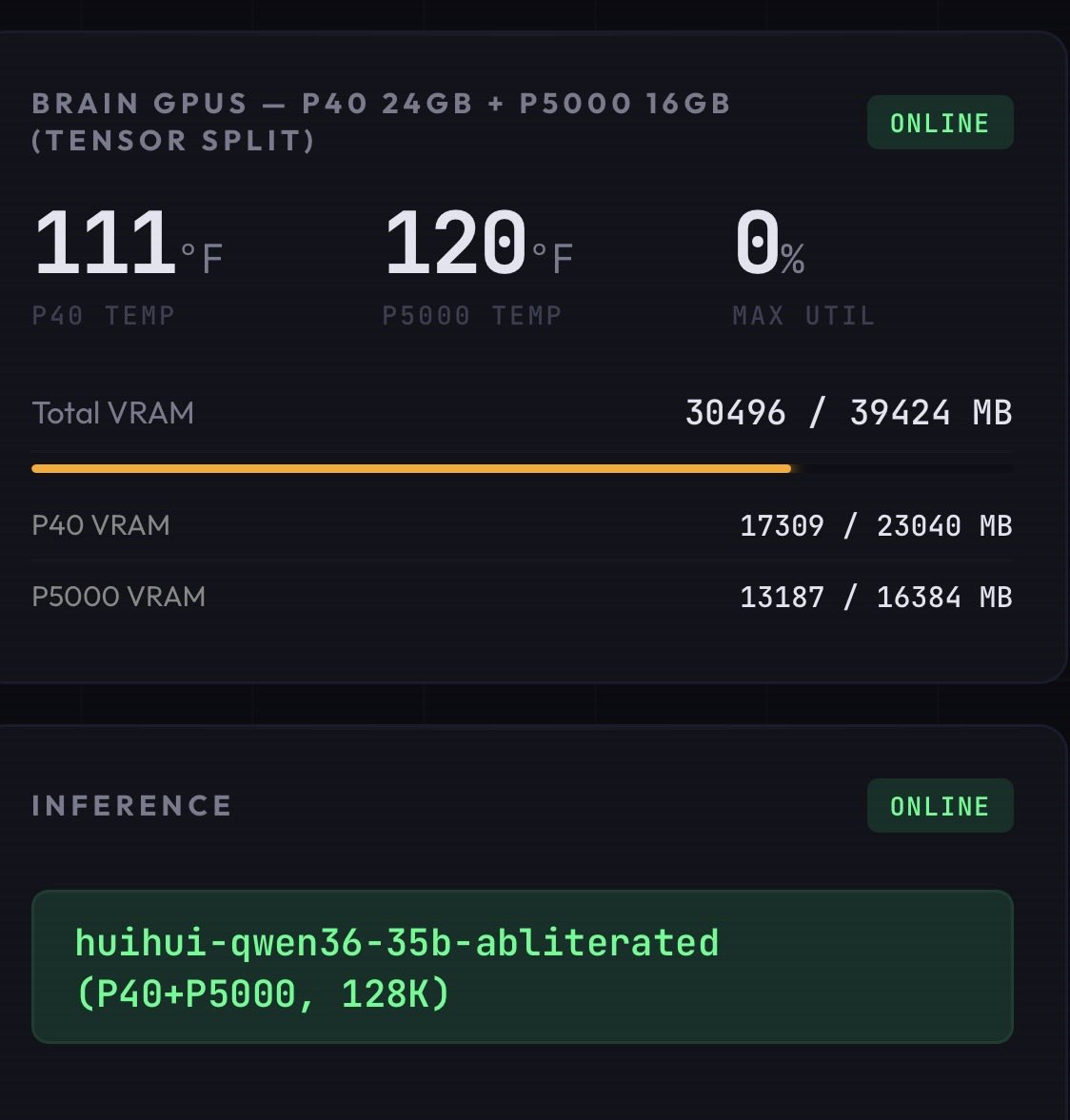

Linux homelab · self-hosted 35B AI on a dual-GPU cluster · Jellyfin · Tailscale mesh · bees + garden · Gita on the desk. Doing the work, releasing the fruit.१०८

Joined September 2022

533 Following · 263 Followers

@elonmusk @doganuraldesign Put a timer function on the toggle. “Your FYP will explore new content for the next 15 min, disable in settings if preferred.”

@elonmusk @doganuraldesign Merge explore into FYP and make it a toggle in settings. Can turn it on if you want to explore new content in your FYP and the algo can pick up what you interact with. Have the ability to kill it for people who are satisfied with their FYP.

can’t wait to prompt inject some clankas with a package label 😏

Brett Adcock@adcock_brett

Watch a team of humanoid robots running a full 8-hr shift at human performance levels. This is fully autonomous running Helix-02 x.com/i/broadcasts/1…

@BrianRoemmele Start the 12 month loaner immediately building a clone of the 8 month loaner, then have the new build another, then keep exponentially building new. Knowing you, you’ll have an army in 3 months 🤣 and give back the demos early.

BOOM!

Not one but two Humanoid Robotics companies independently are sending me evaluation units!

One for 8 months and the other for 12 months!

Not for me to promote for likes and subscribes.

The only request is for me to test and evaluate and build!

I will have more to say about it, but at this point I will see what this is about as I learn more.

Both are fully committed to open source and security of local data.

One test I promised to run is to verify any “call home” over WiFi/Bluetooth/LoRa/etc.

More as I learn more.

NVIDIA's V100, an 8-year-old GPU, now sells for $100 and crushes modern consumer cards in AI LLM workloads. wccftech.com/nvidia-v100-an…

@DavidOndrej1 If you start running an uncensored model it is eye opening. You’ll never go back.

@BrianRoemmele Open source is the future. Gatekeeping intelligence is no longer a moat with local llms. Anything is available to anyone. Thanks for keeping it OPEN.

I suspect only about 27 folks in the world understand what I just announced here.

It will be interesting how this matriculates.

The academia fawn over each other when the right gatekeepers are paid, but woe to an outsider in a garage! It would break the whole illusion.

Brian Roemmele@BrianRoemmele

Yeah just gave away one of the most powerful cross-domain math applications in history, in just a post on X. AI labs pay in the range of millions of dollars for “new talent” to “innovate” AI. Mr. @Grok knows the score and keeps score: x.com/i/grok/share/5…

Cool, mind giving enzyme.garden a try? I'm looking for some direct feedback on this and it sounds like it automates what you describe (anchored on link/tag/folder entities, uses embedding similarity). I recently brought my Obsidian into my Hermes, though I'd been using Obsidian and an agent separately for a while.

It's designed to be pretty lightweight and serve as that relationship layer

All my AI, Hermes and OpenClaw, have obsidian vault second brains with LLM wikis layered on

Shruti Codes@Shruti_0810

@jphorism @MichaelGannotti I have explicit cross-refs and a log, but no real graph or relationship layer. It works, but it's manual rather than automatic.

Looking at an entity graph, page to page embedding, and/or hierarchical retrieval to automate.

@Chris____Koch @MichaelGannotti hey this sounds interesting, how does your system handle cross cutting connections with rag?

So far so good. It also backs up at 50% of the context window and refreshes via the wiki after compression. I use a RAG system inside the wiki for what I want grounded in fact, and my ongoing projects have wiki subfolders that evolve.

Anything with RAG, any edits need permissions from me.

Helps reduce hallucinations while still having creativity.

I set a Hermes cron job every 30 min to capture the chat in Telegram and add any appropriate mentions into my wiki system (or add a new subsection if needed). Still testing, but the wiki handles long-term memory and the screenshots handle short-term memory. I run a local LLM, so tokens don’t matter to me; token cost would be the downside if using a subscription.

@NainsiDwiv50980 Anything grounded in fact should use RAG inside your wiki system. The other wiki entries can be used to plan projects and not forget.

RAG might already be becoming obsolete.

A month ago, Andrej Karpathy dropped a simple GitHub gist called “LLM Wiki.”

Now the comments section looks like the birth of an entirely new AI category.

5000+ stars later, developers are rapidly building:

• persistent AI memory systems

• self-maintaining knowledge bases

• multi-agent research environments

• contradiction detection engines

• AI-native company operating systems

• local-first memory architectures

• graph-based reasoning layers

• evolving second brains

And the craziest part?

Most of them were built in DAYS.

Because the core idea is insanely powerful:

Instead of AI repeatedly retrieving raw chunks like traditional RAG…

…the model continuously maintains a living knowledge system.

Not temporary context.

Persistent synthesis.

The shift sounds subtle until you realize what it changes:

RAG:

retrieve → answer → forget

LLM Wiki:

ingest → synthesize → evolve

That one architectural difference is causing an explosion of experimentation right now.
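The contrast between the two pipelines can be sketched in a few lines of Python. This is a toy illustration only, not code from the gist: `retrieve`, `Wiki`, `ingest`, and `read` are hypothetical names, word overlap stands in for embedding search, and the "synthesize" step is stubbed as de-duplicated accumulation where a real system would ask a model to rewrite the page.

```python
from dataclasses import dataclass, field


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Stateless RAG-style lookup: rank raw chunks by word overlap with the query.

    Nothing is kept between calls (retrieve -> answer -> forget).
    """
    words = set(query.lower().split())
    ranked = sorted(
        chunks,
        key=lambda c: len(words & set(c.lower().split())),
        reverse=True,
    )
    return ranked[:k]


@dataclass
class Wiki:
    """Persistent pages that change on every ingest (ingest -> synthesize -> evolve)."""

    pages: dict[str, list[str]] = field(default_factory=dict)

    def ingest(self, topic: str, fact: str) -> None:
        # Stub for "synthesize": keep each fact once per topic page.
        page = self.pages.setdefault(topic, [])
        if fact not in page:
            page.append(fact)

    def read(self, topic: str) -> str:
        # The page is the accumulated state, not a fresh retrieval.
        return " ".join(self.pages.get(topic, []))
```

The point of the sketch is where state lives: `retrieve` starts from raw chunks every time, while `Wiki.pages` carries forward whatever earlier ingests produced.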

People are already building:

• agent memory operating systems

• AI-maintained engineering documentation

• self-healing knowledge graphs

• persistent research environments

• conversational memory architectures

• contradiction-aware wikis

• context compression engines

• machine-readable company systems

The comments section alone feels like watching an ecosystem form in real time.

One developer built deterministic contradiction detection using sheaf cohomology

Another built “sleep consolidation” for AI memory systems inspired by human memory formation

Another created persistent multi-agent vault conversations

Another turned entire repositories into continuously maintained AI wikis

Another built local-first memory systems with audit trails, provenance, graph exports, and MCP integration

This is the important part:

Karpathy didn’t launch a product.

He introduced a pattern.

And patterns are what create ecosystems.

The same way:

• transformers created modern AI

• RAG created AI retrieval startups

• agents created orchestration frameworks

LLM Wikis may create persistent AI memory infrastructure.

That’s why this moment feels different.

For years, AI systems have been stateless.

Now developers are trying to build systems that actually accumulate understanding over time.

And once knowledge compounds instead of resetting…

…the entire interface layer of AI changes.

(Repo in comments)

Suryansh Tiwari@Suryanshti777

Built a small home-climate system this morning with my Nest. Here’s what it does:

Twice a day, morning and early evening, I get a short text message that tells me whether to open the windows or run the AC, based on the weather forecast.

Morning text says what’s coming today; evening text says what tonight’s lows will look like and the right move (“open windows by 9pm, low 62°F” vs. “stay warm, leave AC running”).

It also flags incoming heat waves a couple days early so I can pre-cool the house overnight before the heat hits.

In the background, it’s quietly recording the outdoor temperature, the indoor temperature, the humidity, and whether the AC is running - every 15 minutes, all day, every day.

Over a few weeks of that, it’ll start to know things like “when it’s 60°F outside, opening the windows brings the house down 3°F per hour”, specific to my house.

That data feeds back in and makes the morning/evening recommendations smarter and more personalized over time.

The whole thing runs on hardware I already own at home - no cloud subscriptions, nothing being sold to advertisers, my temperature data lives only on my computer.

The thermostat already had the sensors built in; I just hooked into them and added the forecasting and the alerts.

The point: stop guessing whether to open the windows or kick on the AC, and over time, get a real picture of how this house actually behaves so we use the AC less and the breeze more.
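A rough sketch of the two pieces described above, assuming nothing about the actual setup: `recommend` is a hypothetical rule that compares the indoor temperature with the forecast low, and `cooling_rate_f_per_hour` estimates the house-specific cooling rate from logged samples. The comfort setpoint and margin are made-up defaults, and the Nest and forecast integrations are left out entirely.

```python
def recommend(indoor_f: float, forecast_low_f: float,
              comfort_f: float = 72.0, margin_f: float = 5.0) -> str:
    """Pick an action from the indoor temperature and tonight's forecast low.

    comfort_f and margin_f are hypothetical thresholds, not values from the post.
    """
    if indoor_f <= comfort_f:
        return "no action"
    # Outside air only helps if it is clearly cooler than the house.
    if indoor_f - forecast_low_f >= margin_f:
        return "open windows"
    return "run AC"


def cooling_rate_f_per_hour(samples: list[tuple[float, float]]) -> float:
    """Average indoor cooling rate from (minutes_elapsed, indoor_temp_f) samples.

    Samples are assumed to be in time order, e.g. one reading every 15 minutes
    while the windows are open.
    """
    (t0, temp0), (t1, temp1) = samples[0], samples[-1]
    hours = (t1 - t0) / 60.0
    if hours <= 0:
        raise ValueError("need at least two samples spanning some time")
    return (temp0 - temp1) / hours
```

With a few weeks of logged samples, the measured rate could replace the fixed margin, which is the "smarter over time" feedback the post describes.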

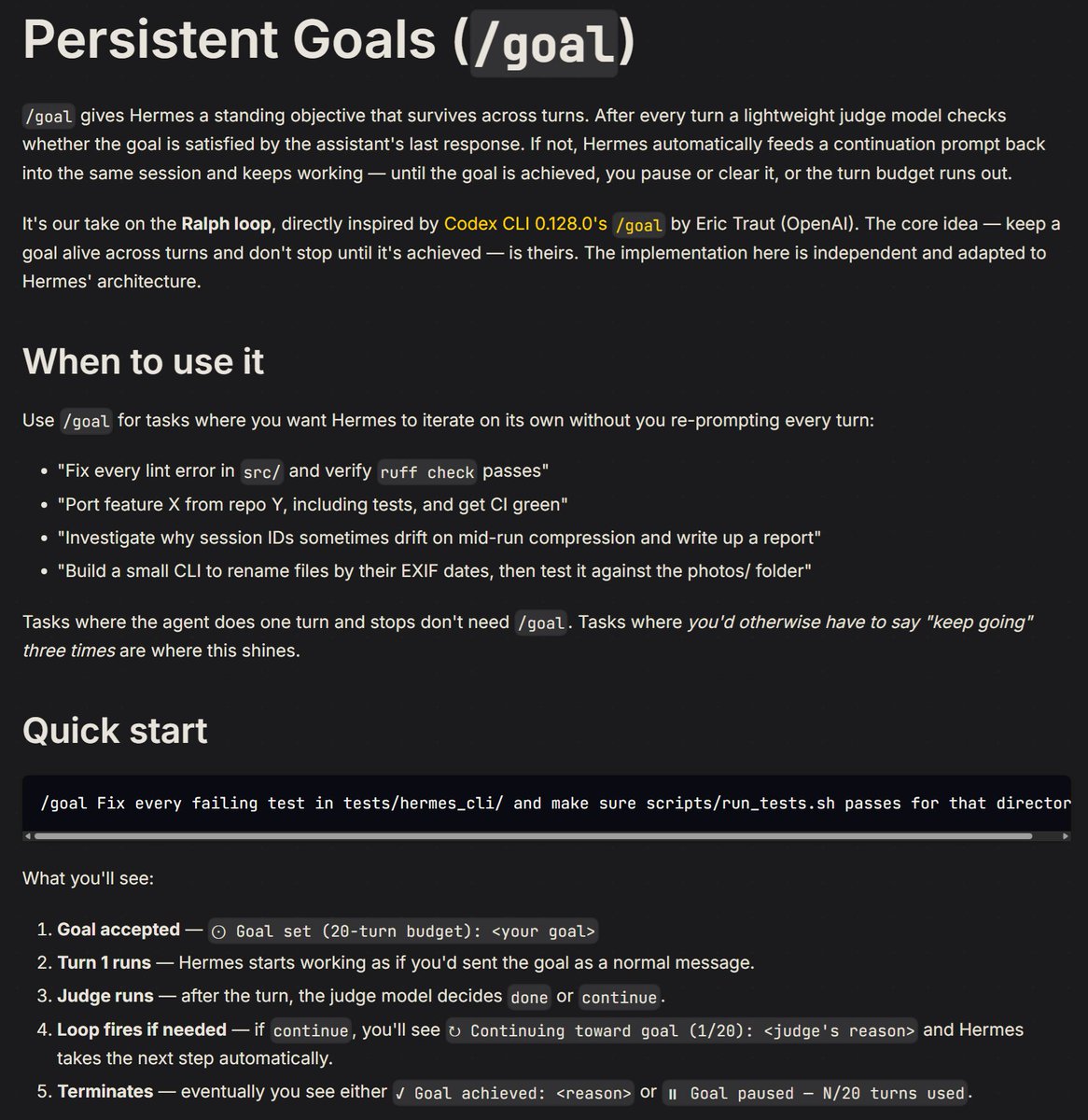

I have to go out of town for a funeral thru the weekend but I am leaving everyone with one new cool feature inspired by ralph loops and Codex's upcoming /goal feature.

If you use /goal, it will start a loop with a supervisor model determining whether the task is complete at the end of each agent loop. If it isn't, it will force the agent to keep going until it's done!

Enjoy and have a great weekend.

PR: github.com/NousResearch/h…
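The supervisor pattern described in this post can be sketched roughly as follows. This is not the code from the linked PR: `run_goal`, `agent_step`, and `is_done` are hypothetical names, both callables are stand-ins for model calls, and the `max_rounds` cap is an added assumption so the sketch cannot loop forever.

```python
from typing import Callable


def run_goal(
    goal: str,
    agent_step: Callable[[str, list[str]], str],
    is_done: Callable[[str, list[str]], bool],
    max_rounds: int = 10,
) -> tuple[bool, list[str]]:
    """Run the agent, then let a supervisor decide whether to run it again.

    agent_step produces the next piece of work from the goal and the history;
    is_done plays the supervisor and judges the history against the goal.
    """
    history: list[str] = []
    for _ in range(max_rounds):
        history.append(agent_step(goal, history))
        if is_done(goal, history):
            return True, history
    # The cap was reached without the supervisor accepting the result.
    return False, history
```

For example, with a stub agent that emits one line per round and a supervisor that accepts after three lines, `run_goal` returns after exactly three rounds.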

@LottoLabs “A poem about his mom, send him the output anyways” 🤣🤣🤣

>be me

>have $2000 and no life

>buy two RTX 3090s on eBay

>"used but good condition" my ass one has thermal paste the color of mayonnaise from 2018

>open a window, close every other door in the house

>plug them into my motherboard like I'm wiring a bomb

>turn it on

>noise level: commercial jet taking off

>wifey: "what is that sound?"

>"it's just... science"

>she doesn't come back for 3 days

>now have 48GB VRAM total

>can run models that weigh more than my car

>lm studio running at 103.69.27.87:1234

>serve cold LLM responses from a room that's basically an oven in July

>electric bill arrives

>stare at it for 20 minutes

>"it'll be fine"

>want to remote into my little homelab without exposing ports

>install tailscale on everything

>now I can SSH into my GPU rig from literally anywhere

>my laptop connects like magic, no router config needed

>mDNS, exit nodes, funnel — all working out of the box

>"I'm basically a hacker now"

>sit in airport at 2am running benchmarks from my phone

>some random guy watches me typing furiously

>he thinks I'm doing illegal stuff

>I'm just waiting for qwen3.5-27b to finish inference on a poem about his mom

>send him the output anyway

>mfw I have more VRAM than most datacenters but less sleep than a college freshman
