@ColinDoyleLaw

18 posts

@colindoylelaw

Law Professor, Loyola Law School, Los Angeles "Great lecturer, caring teacher, but perhaps not the best person?" - anonymous student evaluation

Joined November 2024

24 Following, 20 Followers

@TorenDarby @rohanpaul_ai @TuhinChakr Reading through the responses, this seemed to be largely because, when given the preamble, the LLM used more of its output context window to consider more possibilities.

@TorenDarby @rohanpaul_ai @TuhinChakr Gotcha. The closest thing I have is that I ran the brainstorm process 84 times, with and without the roleplay preamble, primarily on the most difficult puzzles. With the roleplay preamble, the process was correct 49% of the time; without the preamble, 42% of the time.

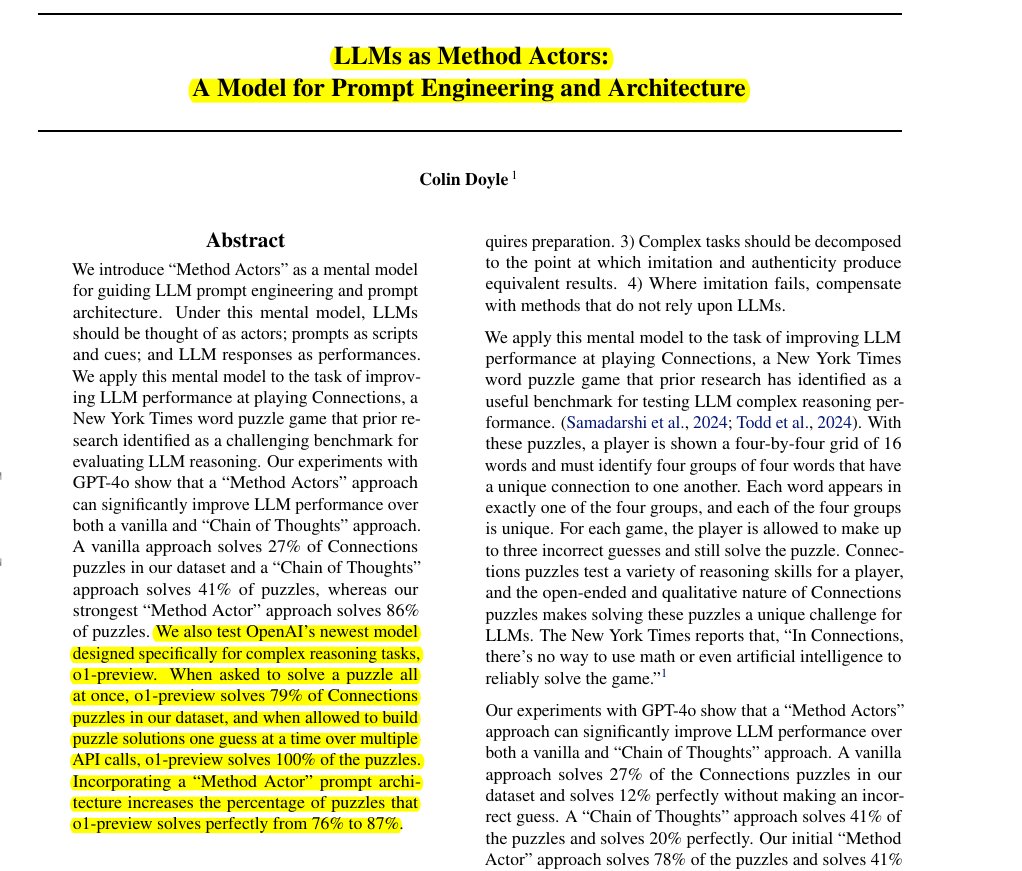

Treating LLMs as actors unlocks their true potential in complex reasoning tasks.

Acting-based prompting outperforms traditional reasoning approaches for LLMs

🎯 Original Problem:

LLMs struggle with complex reasoning tasks, particularly word puzzles like NYT Connections, where traditional prompting methods achieve low success rates.

-----

🔧 Solution in this Paper:

→ The paper introduces "Method Actors": a mental model that treats LLMs as actors performing roles rather than as thinking machines

→ Prompts function as scripts and stage directions, while responses are viewed as performances

→ The approach breaks down complex tasks into smaller, imitable performances

→ It compensates for LLM limitations through system design and validation checks

→ For the NYT Connections puzzle, it uses templates based on past puzzle patterns and multi-stage processing

-----

💡 Key Insights:

→ LLMs perform better when imitating rather than reasoning

→ Complex tasks need decomposition until imitation matches authentic results

→ Dramatic scene-setting in prompts increases context window usage

→ External validation helps filter out hallucinations

→ System architecture should compensate for inherent LLM weaknesses

-----

📊 Results:

→ Basic GPT-4o solved 27% of puzzles; Chain-of-Thought solved 41%

→ The Method Actor approach achieved an 86% success rate

→ With o1-preview, the Method Actor approach solved 87% of puzzles perfectly

→ Surpassed human expert performance in puzzle-solving accuracy

We'll need more research on the effectiveness of prompting alone with this method (without the broader system design), but it's a fascinating possibility that LLMs open up playwriting and directing as valuable programming skills.

Jake Miller @makerjak

This is wild. Maybe all those hours doing theatre in HS and college will pay off big time for me now - kinda like how Steve Jobs took a random calligraphy class in college that allowed him to design the fonts for the Mac years later... Maybe

@TorenDarby @rohanpaul_ai @TuhinChakr (although even at the brainstorming stage, there's some system design present b/c it cycles through examples rather than considering all the examples at once)

@TorenDarby @rohanpaul_ai @TuhinChakr Documenting the kinds of guesses that the actor approaches generate at the brainstorm stage alone, but that the CoT approaches fail to generate, can reveal how much of the improvement comes from the prompting method vs. the broader system design.

@Matt_M_M @rohanpaul_ai The surprising thing to me is that this lengthy terrorist plot setup doesn't seem to distract the LLM in its response. The only mention of the setup within the responses is that some responses end with a line like "Good luck, and let's hope this helps save the day!"

@Matt_M_M @rohanpaul_ai I want to do or see more research to better understand how much this fictional scene setup matters. Some research finds that assigning an LLM a role doesn't help w/ reasoning tasks, but maybe the roleplaying just needs to be set up better than telling the LLM it's a genius.

@gulch_hunter @rohanpaul_ai There are sample results on GitHub, if you'd like to take a look at them.

github.com/colindoyle0000…

This is the output from one of the method actor approaches; it lets you read through the intermediate responses as the LLM worked toward an answer:

github.com/colindoyle0000…

@menhguin @rohanpaul_ai Haha, I felt pretty awkward writing it that way (and would never do that for a law review article) but it seemed to be the convention in CS, maybe because solo authored papers are so rare?

@rohanpaul_ai I love single author papers that use "we" lol

@emollick In a new paper, "LLMs as Method Actors" arxiv.org/abs/2411.05778…, I find that with "vanilla" prompting GPT-4o can solve only 27% of puzzles, but with the right prompt engineering GPT-4o can solve 86% of Connections puzzles. And o1-preview can solve 100% of the puzzles.

This is a neat study showing LLMs are very limited at solving an associative puzzle game

It also illustrates the problems of benchmarking LLMs. This is clearly a game built for humans to solve (or it wouldn’t be fun), but does AI’s failure tell us something fundamental about AI?

Tuhin Chakrabarty @ ICLR 🇧🇷 @TuhinChakr

New paper with students @BarnardCollege on testing orthogonal thinking / abstract reasoning capabilities of Large Language Models using the fascinating yet frustratingly difficult @nytimes Connections game. #NLProc #LLMs #GPT4o #Claude3opus 🧵(1/n)
