Comet


@Cometml

Comet provides an end-to-end model evaluation platform for AI developers, with best-in-class LLM evaluations, experiment tracking, and production monitoring.

I’ve spent the last week interviewing @maximilien, former CTO at IBM and Chairperson of the Node.js Foundation, who has shipped production RAG to multiple customers over the past year. The lesson he kept circling back to: until you evaluate on your customer’s data, nothing else you do matters.

Production RAG is a loop. Stitch together your embedding model, chunking strategy, retrieval, vector DB, and judge, then evaluate and iterate until you hit your customer’s metrics. Public benchmarks and the MTEB leaderboard are signals, not verdicts. On a real customer dataset of Leica auction listings, an open-source sentence-transformer ranked around #130 on MTEB still beat OpenAI's embedding model by 11% in quality, while running 240x faster, producing 50% smaller vectors, and costing $0.

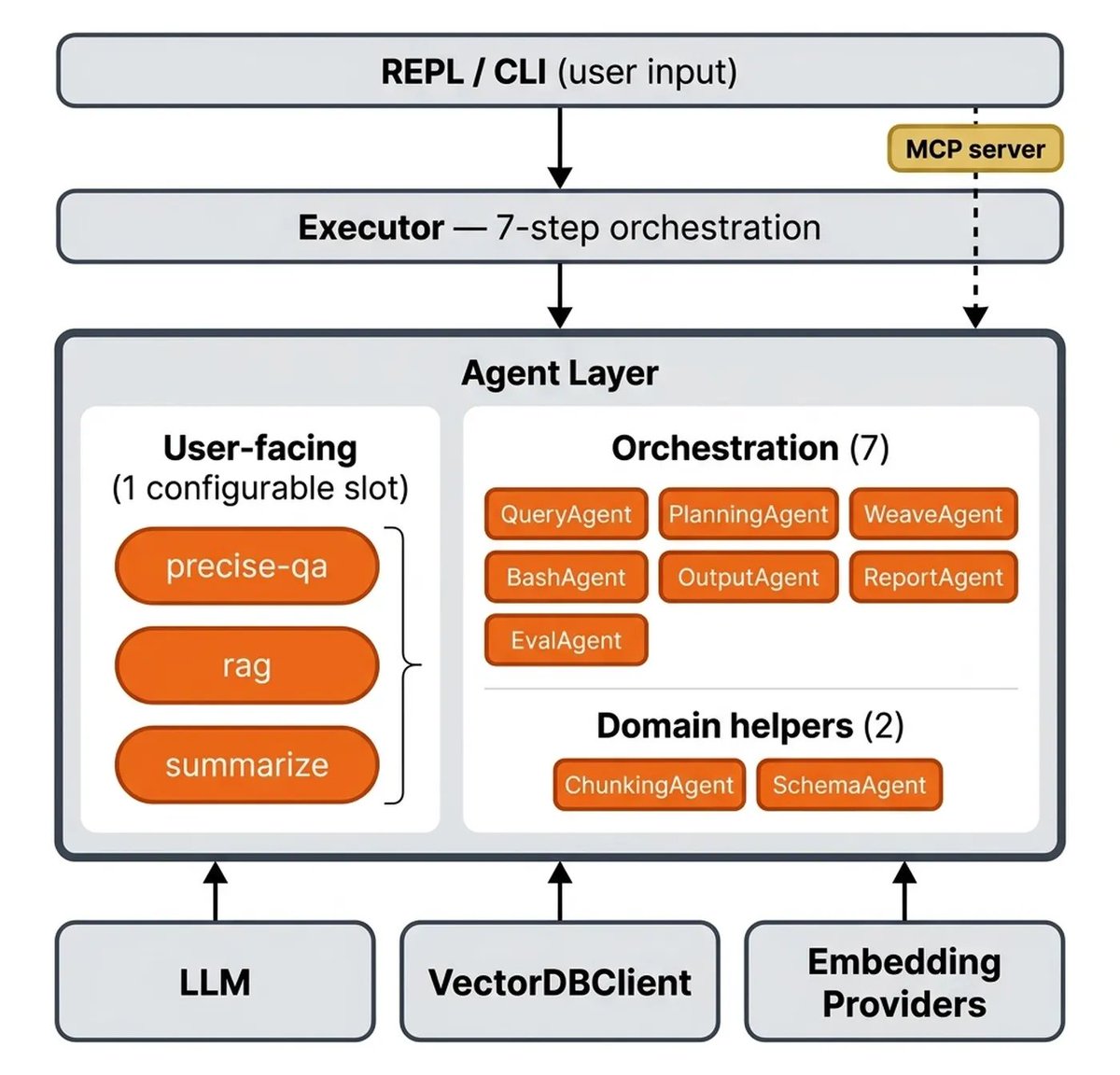

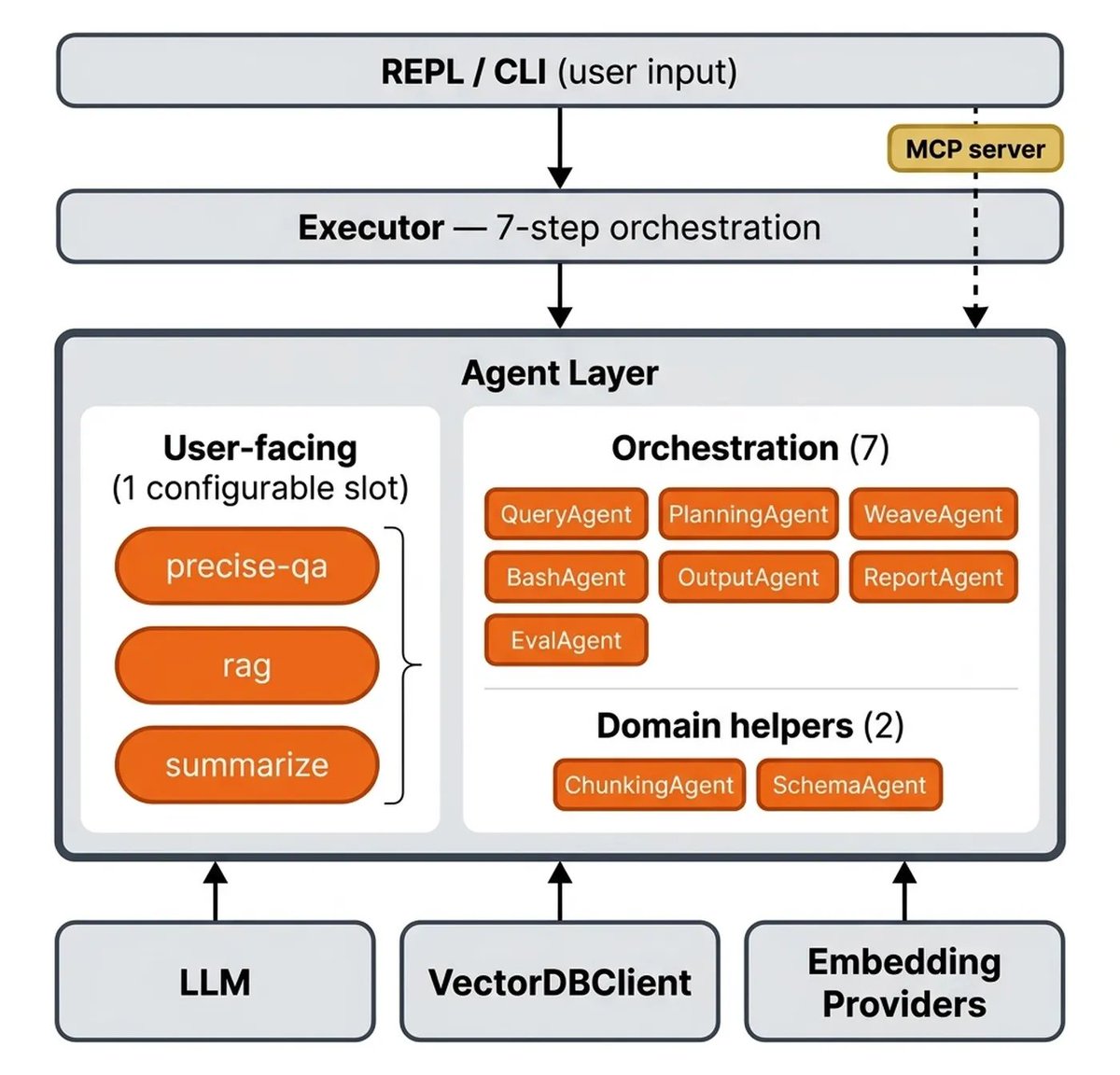
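The core advice above, scoring every pipeline configuration on the customer's own labeled queries rather than on a public leaderboard, can be sketched as a tiny retrieval harness. This is a minimal sketch under stated assumptions: the metrics (recall@k and mean reciprocal rank), the `qrels` format (query ID mapped to the set of relevant document IDs), and all query IDs, document IDs, and runs below are hypothetical toy data, not the Leica dataset or the interviewee's actual evaluation setup. A full loop would also include the LLM judge mentioned in the post.

```python
from typing import Dict, List, Set

# Hypothetical format: query ID -> ranked list of retrieved document IDs.
Runs = Dict[str, List[str]]
# Hypothetical format: query ID -> set of document IDs labeled relevant.
Qrels = Dict[str, Set[str]]


def recall_at_k(ranked: List[str], relevant: Set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top-k results."""
    if not relevant:
        return 0.0
    hits = sum(1 for doc_id in ranked[:k] if doc_id in relevant)
    return hits / len(relevant)


def reciprocal_rank(ranked: List[str], relevant: Set[str]) -> float:
    """1 / rank of the first relevant document, or 0.0 if none was retrieved."""
    for rank, doc_id in enumerate(ranked, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


def evaluate(runs: Runs, qrels: Qrels, k: int = 5) -> Dict[str, float]:
    """Average recall@k and MRR over every labeled query in qrels."""
    n = len(qrels)
    if n == 0:
        return {f"recall@{k}": 0.0, "mrr": 0.0}
    recall = sum(recall_at_k(runs.get(q, []), rel, k) for q, rel in qrels.items()) / n
    mrr = sum(reciprocal_rank(runs.get(q, []), rel) for q, rel in qrels.items()) / n
    return {f"recall@{k}": recall, "mrr": mrr}


# Hypothetical toy data standing in for a customer's labeled queries.
QRELS: Qrels = {"q1": {"d1"}, "q2": {"d3", "d4"}}

# Hypothetical ranked results from two candidate embedding models.
MODEL_A_RUNS: Runs = {"q1": ["d2", "d1", "d5"], "q2": ["d3", "d9", "d4"]}
MODEL_B_RUNS: Runs = {"q1": ["d1", "d2", "d5"], "q2": ["d8", "d9", "d7"]}

print("model A:", evaluate(MODEL_A_RUNS, QRELS, k=3))
print("model B:", evaluate(MODEL_B_RUNS, QRELS, k=3))
```

Swapping in a different embedding model, chunking strategy, or vector DB only changes the ranked lists passed to `evaluate`, so every iteration of the loop is compared on the same labeled customer queries.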

Self-improving agents are going to require a few things:

1. A memory of past traces
2. A way to evaluate their trajectories
3. The ability to edit their own code

So far, Opik has focused on the first two points. Now, we’re solving the third. 🧵
