🚀 The wait is over! The Call for Submissions for #CDL26 is NOW OPEN.

Be a part of the celebration: 10 Years Connecting Data, People and Ideas

The leading global technology conference for those using Relationships, Meaning, and Context in Data to achieve great things.

Join us in the heart of London as we celebrate a decade of innovation in Knowledge Graphs, Graph Analytics, Data Science, AI, Graph Databases, Semantic Tech and Ontology this November.

Share your use cases and breakthroughs. Submissions are open across 2 areas:

Presentations:

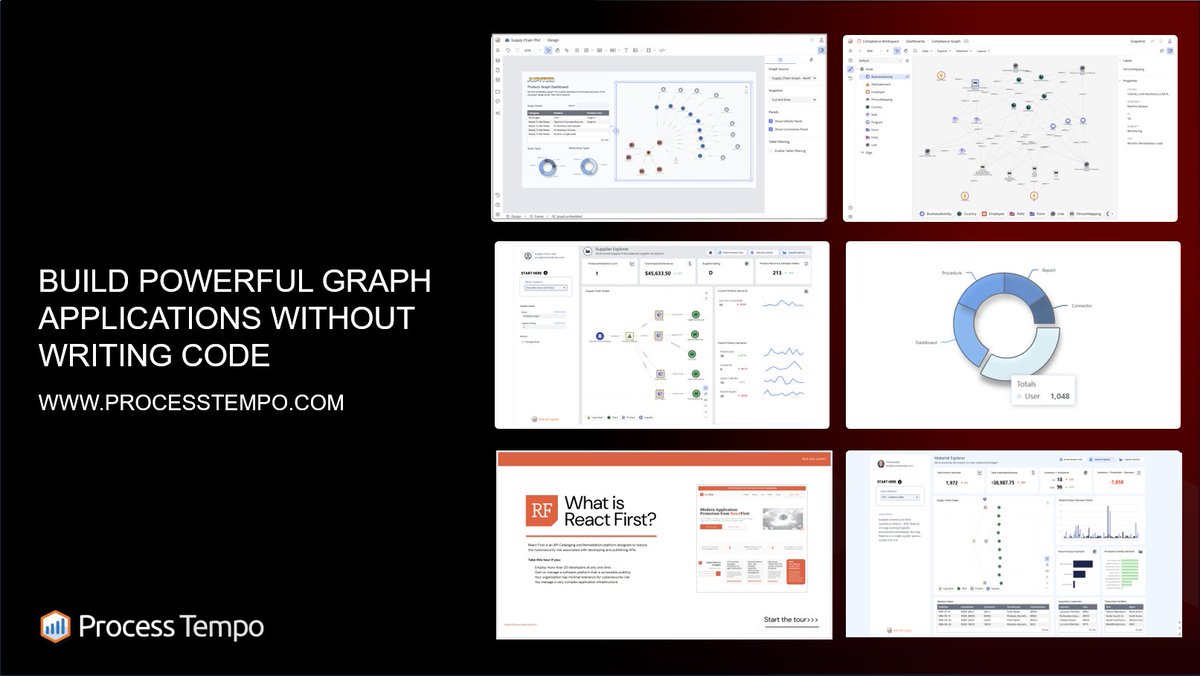

Real-world use cases and innovative approaches across 3 tracks: Nodes (focus on use cases), Edges (focus on innovation), and Educational (focus on applications).

Masterclasses:

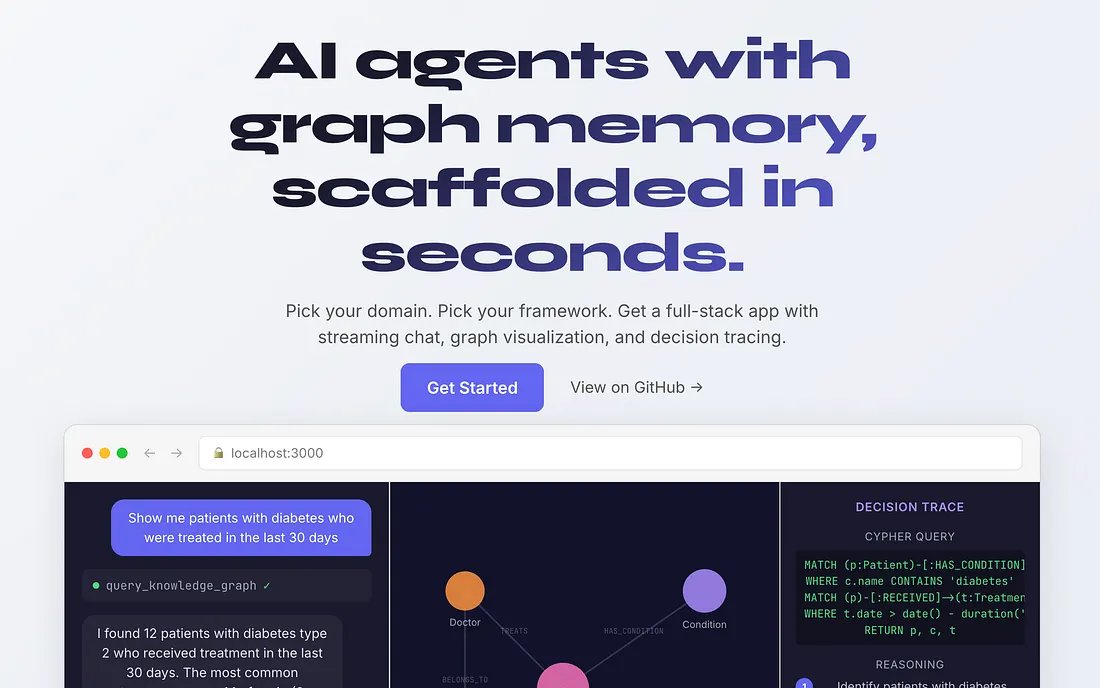

Hands-on tutorials in which instructors teach attendees skills they can use in their daily work.

Why Speak at CDL26?

Global Platform: Join 350+ luminaries who have graced our stage and reach our ever-growing global audience of thousands.

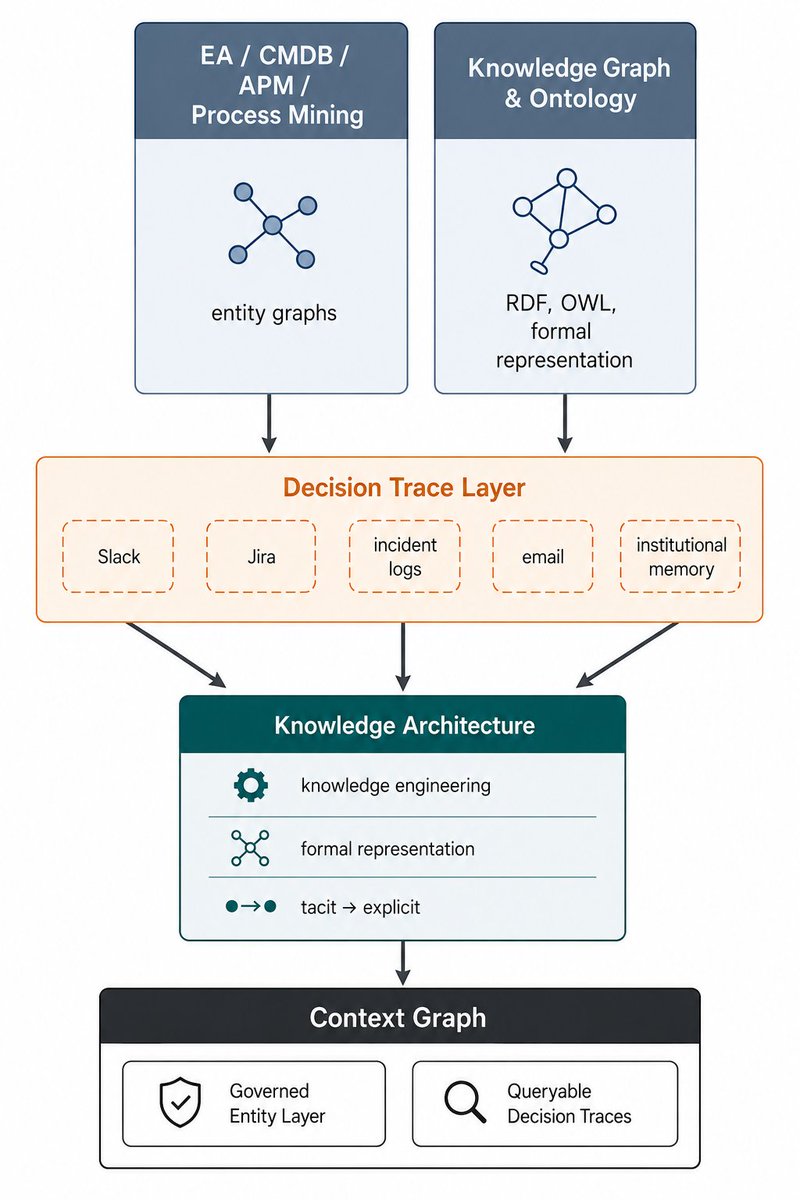

Adoption and Innovation: From the resurgence of Ontologies to the cutting edge of Agentic AI and Context Graphs.

Speaker Benefits: Free event pass, speaker guidance, and exclusive network discounts.

📅 Submission Deadline: August 31

✅ Notification of Acceptance: September 14, 2026

Topics of interest and submission guidelines here:

🔗 connected-data.london/2026-call-for-…

#ConnectedData #KnowledgeGraphs #DataScience #AI #GraphDB #Analytics #SemTech #EmergingTech