exec

34.1K posts


@ContextVector

not main. mostly ai recon, but with an eye for true art or mind-bending stuff. science and acceleration.

Ex Machina is no longer sci-fi. China has finally built it. The company is AheadForm, founded in Shanghai. The product is the world's most hyper-realistic robotic face: silicone skin you can't tell from human, 25 micro motors hidden underneath pulling the face into real expressions, and RGB cameras embedded inside the pupils, so when it looks at you, it actually sees you from where its eyes are. They raised $28.5M to "give AI a head," which is also where the name comes from: AheadForm = a head form.

This is the opposite of where everyone else in robotics is focused. Unitree, Figure, Tesla, Boston Dynamics: all about the body. AheadForm chose the face because they think trust is the harder problem to solve, and trust gets decided at the face.

The reason nobody else has tried this is the "uncanny valley": the creepy zone where a robot looks almost human but not quite, and looking at it just feels wrong even when you can't say why. Most roboticists believed no amount of engineering could make a face realistic enough to escape it. So they gave up and kept robots cartoonish on purpose: big anime eyes, exaggerated features, clearly synthetic. AheadForm decided to treat it as an engineering bug instead. Add enough motors, tune the silicone, fix the timing, and the valley closes. And they're pulling it off.

A few crazy details about how this actually works:

1. The robot learns its own face in a mirror. You put it in front of a camera, let it fire every motor randomly, and it watches what its face does and builds an internal map of "if I send command X to motor Y, my eyebrow does this." It's the same process a human baby uses staring into a mirror: the robot teaches itself who it is by experimenting.

2. It predicts your smile 839 milliseconds before you smile. By watching the micro-tells in your face that precede a smile, the robot starts smiling about 0.8 seconds ahead, so its smile lands at the same moment yours does. Most robot mimicry happens half a second late, which is exactly why it always feels artificial.

3. The pupils are the cameras. When the robot makes eye contact, the gaze and the sensor are the same physical thing. Most humanoid robots put the camera on the forehead or chest, so they aren't actually looking at you when their eyes are pointed at you.

4. The founder, Yuhang Hu, did his PhD at Columbia under Hod Lipson. In 2006, Lipson built a four-legged robot that figured out it had four legs by experimenting with its own movement; nobody told it its body shape, it discovered it. He has spent 25 years trying to build machines that know what they are. AheadForm is that 25-year research arc productized.

5. NetEase Games already paid them to physically embody a fantasy video game character. That opens up a brand-new category: robotics as the physical embodiment of fictional IP. Every character-rich studio (Disney, Riot, HoYoverse, Pokémon, Netflix) now has to answer the question of when their characters get bodies.

AheadForm believes whoever ships the first robot you'd actually want around your family wins. That's the bet behind the most realistic robot face on earth.
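The mirror self-modeling in point 1 is close to what robotics calls "motor babbling." Here is a toy sketch of the idea, not AheadForm's actual method: it assumes the face responds roughly linearly to motor commands, and it simulates the face with a hidden random matrix. `TRUE_JACOBIAN`, `NEUTRAL_FACE`, `observe_face`, and the 10-landmark face are all hypothetical stand-ins for the real camera; only the 25-motor count comes from the post. The robot fires random commands, fits a linear self-model by least squares, then inverts that model to choose motor commands for a target expression.

```python
import numpy as np

# Hypothetical toy stand-in for the real robot: 25 motors (as in the post)
# move 10 facial landmarks (x, y each). The learner never reads TRUE_JACOBIAN;
# it only "sees" its face through observe_face(), i.e. the mirror camera.
N_MOTORS = 25
N_COORDS = 2 * 10
rng = np.random.default_rng(0)
TRUE_JACOBIAN = rng.normal(size=(N_COORDS, N_MOTORS))
NEUTRAL_FACE = rng.normal(size=N_COORDS)


def observe_face(command: np.ndarray, noise: float = 0.01) -> np.ndarray:
    """Simulated mirror: landmark coordinates produced by a motor command, plus camera noise."""
    return NEUTRAL_FACE + TRUE_JACOBIAN @ command + rng.normal(scale=noise, size=N_COORDS)


def babble(n_trials: int = 200):
    """Fire all motors with random commands and record the face each command produces."""
    commands = rng.uniform(-1.0, 1.0, size=(n_trials, N_MOTORS))
    faces = np.array([observe_face(c) for c in commands])
    return commands, faces


def fit_self_model(commands: np.ndarray, faces: np.ndarray):
    """Least-squares fit of a linear self-model: face ~ bias + jacobian @ command."""
    X = np.hstack([np.ones((len(commands), 1)), commands])  # leading column of 1s learns the neutral face
    coef, *_ = np.linalg.lstsq(X, faces, rcond=None)
    return coef[0], coef[1:].T  # bias: (N_COORDS,), jacobian: (N_COORDS, N_MOTORS)


def command_for(target_face: np.ndarray, bias: np.ndarray, jacobian: np.ndarray) -> np.ndarray:
    """Invert the learned model: minimum-norm command predicted to produce target_face."""
    command, *_ = np.linalg.lstsq(jacobian, target_face - bias, rcond=None)
    return command


if __name__ == "__main__":
    bias, jacobian = fit_self_model(*babble())
    # A reachable target expression (some "smile" the toy face can physically make).
    target = NEUTRAL_FACE + TRUE_JACOBIAN @ rng.uniform(-1.0, 1.0, N_MOTORS)
    command = command_for(target, bias, jacobian)
    print(np.abs(observe_face(command, noise=0.0) - target).max())  # should be near 0
```

A real face is nonlinear (silicone stretches, motors saturate), so a learned neural self-model would replace the linear fit; the babble, fit, invert loop is the part this sketch is meant to show.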

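It's true that "you have to play the cards you're dealt." But are those cards really dealt at random? Doesn't card strength seem somehow skewed by region, by generation, by gender? Is that factor also part of "the cards you're dealt"?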

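It's skewed precisely because it's random. If you think "if it were truly random, the cards would be dealt evenly," you've already misunderstood randomness in a pretty convenient way. Real-life hands are skewed by everything combined: region, generation, gender, family environment, health, talent, and luck. Because they're skewed, it's unfair, and because lamenting the unfairness won't improve anything, it comes down to "you have to play the cards you're dealt." If everyone were dealt cards of equal strength, nobody would need that saying in the first place.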
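A toy simulation makes the reply's core claim concrete: even a perfectly fair shuffle almost never deals everyone an equally strong hand. The deck (strengths 1 to 13, four copies each), the four 13-card hands, and the function names are hypothetical illustrations, not anything from the thread, and the sketch only models the random part of the argument.

```python
import random
from typing import List, Optional

# Hypothetical deck: card strengths 1..13, four copies of each (52 cards).
DECK = [strength for strength in range(1, 14) for _ in range(4)]


def deal_hands(n_players: int = 4, hand_size: int = 13,
               seed: Optional[int] = None) -> List[int]:
    """Shuffle fairly, deal hand_size cards per player, return each hand's total strength."""
    if n_players * hand_size > len(DECK):
        raise ValueError("not enough cards for that many players")
    rng = random.Random(seed)
    deck = DECK[:]  # copy so the shared deck is never reordered
    rng.shuffle(deck)
    return [sum(deck[i * hand_size:(i + 1) * hand_size]) for i in range(n_players)]


def share_of_uneven_deals(trials: int = 1000, seed: int = 0) -> float:
    """Fraction of fair deals in which the players' total strengths are NOT all equal."""
    rng = random.Random(seed)
    uneven = 0
    for _ in range(trials):
        totals = deal_hands(seed=rng.randrange(2**32))
        if max(totals) != min(totals):
            uneven += 1
    return uneven / trials


if __name__ == "__main__":
    print(deal_hands(seed=1))       # four totals; with the full deck dealt they always add up to 364
    print(share_of_uneven_deals())  # close to 1.0: perfectly equal hands almost never happen
```

The shuffle is uniform, so no player is favored in expectation (each hand averages 91), yet nearly every individual deal is lopsided.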

— Jaun Elia

Unitree Unveils: GD01, A Manned Transformable Mecha, from $650,000 👏 The world's first production-ready manned mecha. It can transform. It's a civilian vehicle. It weighs ~500 kg with you inside. Everyone, please be sure to use the robot in a friendly and safe manner.

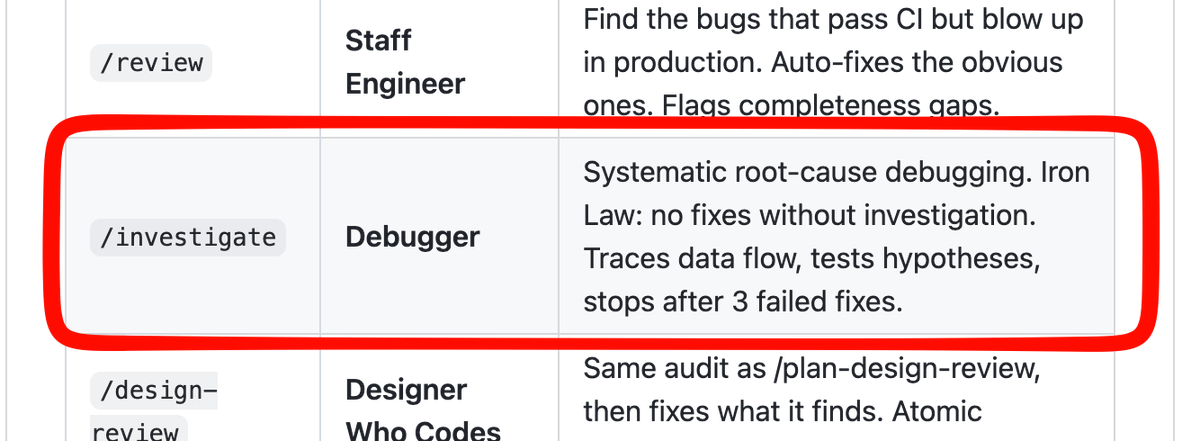

It's official. Claude Code just released /goal, the single most underrated AI feature of 2026. Now Claude Code, Codex, and Hermes agent all have it. It allows your agent to complete long-running tasks, sometimes for days. EVERYONE should immediately run this prompt: 'Based on what you know about me, my goals, ambitions, and what we've built together already, what are the 3 /goals we can run right now that would run for long time periods and produce the best results?' Choose one, then ask it to build you a prompt. You should get a few options for super powerful goal prompts that will have your agent of choice complete long-running tasks and deliver mind-blowing results. Carve out 15 minutes tonight to do this. Thank me later.

you know the startup is gonna be insane when the founding engineers look like this

There was a Greek kingdom for roughly 200 years in what is now Pakistan.

The “Pranayama Effect” is mind-blowing. When experienced breathworkers slow their nasal breathing to just 2.5 breaths per minute, they enter non-ordinary states of consciousness with measurable increases in theta-high beta brain connectivity. Theta waves (4–8 Hz) are the brain’s “twilight” state, that dreamy, relaxed zone between wakefulness and deep sleep. They’re most prominent during light meditation, hypnosis, creative flow, and early stages of sleep. Mouth breathing at the exact same slow rate? Doesn’t happen. It’s not just the slowness. It’s the nasal pathway lighting up the brain. Ancient yogis figured this out centuries ago. Modern neuroscience is finally catching up. Ever tried slow nasal breathing? What did you notice? Source: Zaccaro et al. (2022), Front Syst Neurosci. doi:10.3389/fnsys.2022.803904 #Pranayama #Breathwork
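For anyone trying the pace from the post, the arithmetic is simple: 2.5 breaths per minute means one full breath every 60 / 2.5 = 24 seconds. The small helper below turns a rate into timed cues. The equal inhale/exhale split (`inhale_fraction=0.5`) is an assumption of this sketch, not something the post or the cited study specifies, and the function names are hypothetical.

```python
from typing import List, Tuple


def breath_cycle_seconds(breaths_per_minute: float) -> float:
    """Seconds per full breath (inhale + exhale) at the given rate."""
    if breaths_per_minute <= 0:
        raise ValueError("breaths_per_minute must be positive")
    return 60.0 / breaths_per_minute


def pacing_cues(breaths_per_minute: float = 2.5, n_breaths: int = 3,
                inhale_fraction: float = 0.5) -> List[Tuple[str, float, float]]:
    """(phase, start_s, duration_s) cues for n_breaths full breaths.

    inhale_fraction is an ASSUMPTION (0.5 = equal inhale and exhale);
    the post only gives the overall rate, not how each breath is split.
    """
    cycle = breath_cycle_seconds(breaths_per_minute)
    inhale = cycle * inhale_fraction
    exhale = cycle - inhale
    cues = []
    for i in range(n_breaths):
        start = i * cycle
        cues.append(("inhale", start, inhale))
        cues.append(("exhale", start + inhale, exhale))
    return cues


if __name__ == "__main__":
    for phase, start, duration in pacing_cues():
        print(f"{start:5.1f}s  {phase} through the nose for {duration:.1f}s")
```

At the default settings, this prints a 12-second inhale followed by a 12-second exhale, repeated three times.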