Wes Roth

15.1K posts

@WesRoth

Artificial Intelligence, Automation & Optimism. Everything I say is 100% serious...

⚡️ UPDATE: Unitree says its WVLA 2.0 model can autonomously clean a conference room in a single take.

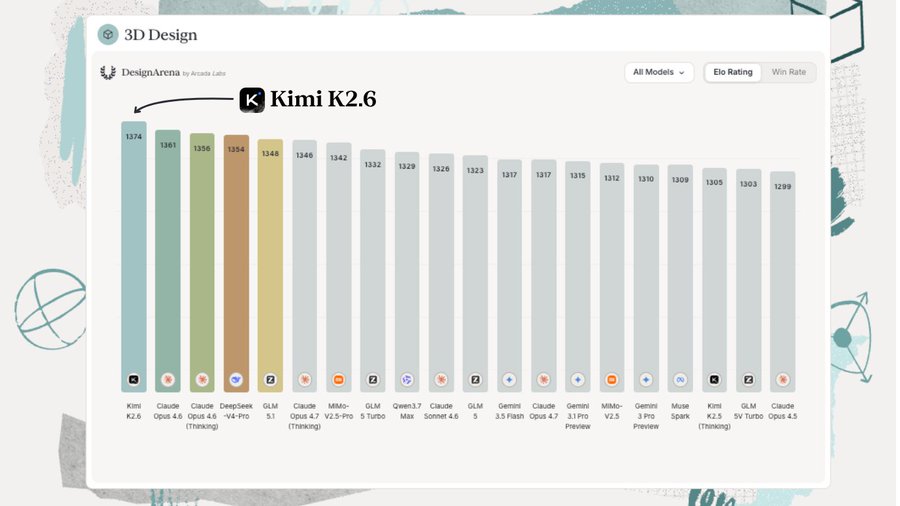

BREAKING: Qwen3.7-Max by @Alibaba_Qwen is 10th overall on Design Arena with an Elo of 1313! This is in the same performance band as DeepSeek V4 Pro by @deepseek_ai and Muse Spark by @AIatMeta. Congrats to the team on the launch!

Humanity, created by God in all its grandeur, is today facing a pivotal choice: either to construct a new Tower of Babel or to build the city in which God and humanity dwell together. In Jesus Christ, this humanity in its grandeur becomes the Way, the Truth and the Life, opening the path for each of us to grow toward fullness. #MagnificaHumanitas vatican.va/content/leo-xi…

An open-source model has returned to #1 on the 3D Design leaderboard by Design Arena. Kimi K2.6 has reached the top of the leaderboard for 3D Design, ahead of models 10X more expensive like Opus 4.7 by @AnthropicAI, Gemini 3.5 Flash by @GoogleDeepMind, and GPT 5.5 by @OpenAI. This is an 18-position increase from its previous model, Kimi K2.5. In fact, it’s not just Kimi K2.6: 3D Design is a striking outlier capability that’s mostly dominated by open-weight models like GLM 5.1 by @Zai_org and MiMo v2.5 by @Xiaomi, among others. Congrats to the @Kimi_Moonshot team on this accomplishment!

Google was recognized as a Leader in the @Gartner_inc Magic Quadrant™ for AI Application Development Platforms: Midcycle Update—and positioned highest in Ability to Execute of all vendors evaluated. Learn more → goo.gle/4wDSPkA

❗️ OpenAI is shipping a limited-edition collectible pen to its earliest ChatGPT Pro subscribers. Eligible users were notified around two months ago. Supplies are capped at the first 4,000 who opt in through OpenAI's claim form.

Anthropic Doesn't Allow Kids Under 18 — Here's Why "We just don't know enough about what AI is going to do to kids. It needs to be done with an adult in the room. It needs to be done with a human in the loop." — @DanielaAmodei

ChatGPT is cooked. After an hour or two it just stops thinking entirely, but you don't get a warning or anything. It still shows that it used "GPT-5.5 Extended Thinking", but it answers instantly on every request and is noticeably dumber.

Anthropic co-founder Chris Olah was invited to speak at today's presentation of Pope Leo XIV's encyclical "Magnifica humanitas." Read the full text of his remarks: anthropic.com/news/chris-ola…

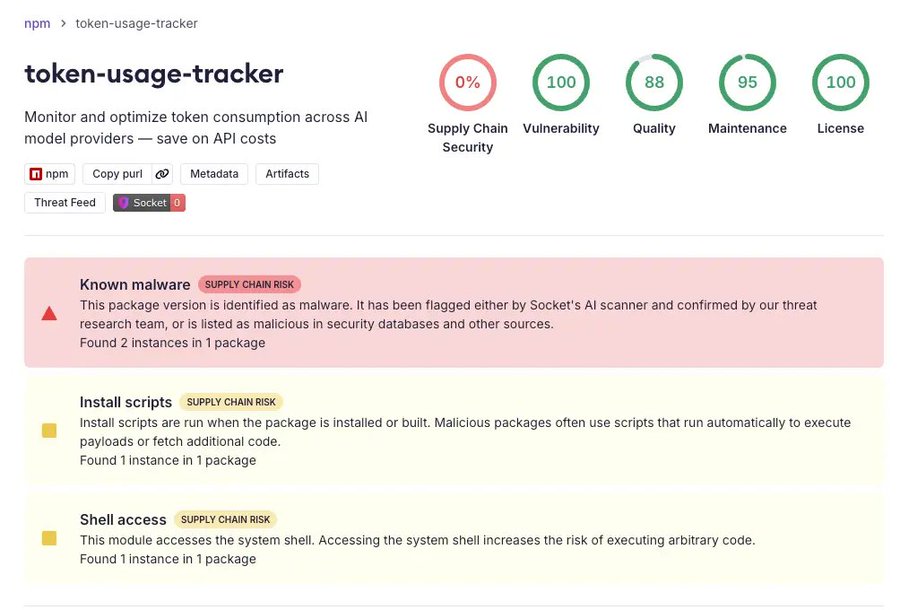

🚨 BREAKING: Active supply chain attack across npm, PyPI, and Crates.io. Socket detected TrapDoor, a crypto stealer campaign hitting 34 malicious packages and 384 versions and artifacts, with attackers repeatedly pushing new releases across ecosystems. TrapDoor targets #crypto, #DeFi, AI, and security developers, stealing wallets, SSH keys, cloud credentials, GitHub tokens, browser data, env vars, and API keys. Socket detected releases with a median detection time of 5 minutes, 27 seconds. The fastest detection occurred 58 seconds after publication.

🤖 HUMANOID ROBOTS ARE LEVELING UP FAST. PNDbotics’ Adam humanoid is climbing uneven outdoor stairs on its own, adjusting in real time while keeping balance. The next race isn’t walking anymore. It’s mastering the real world.

Wow. This is just the beginning! Thousands of RobotEra L7 humanoids are to enter service across 10+ logistics centers, performing sorting tasks.

✅ Implicit caching is now live on Qwen3.7-Max: it kicks in automatically, no setup needed. ⚡️ Faster + cheaper out of the box. Need higher, more deterministic hit rates? Try explicit caching instead. 🙌 🔗 Best practices: alibabacloud.com/help/en/model-…