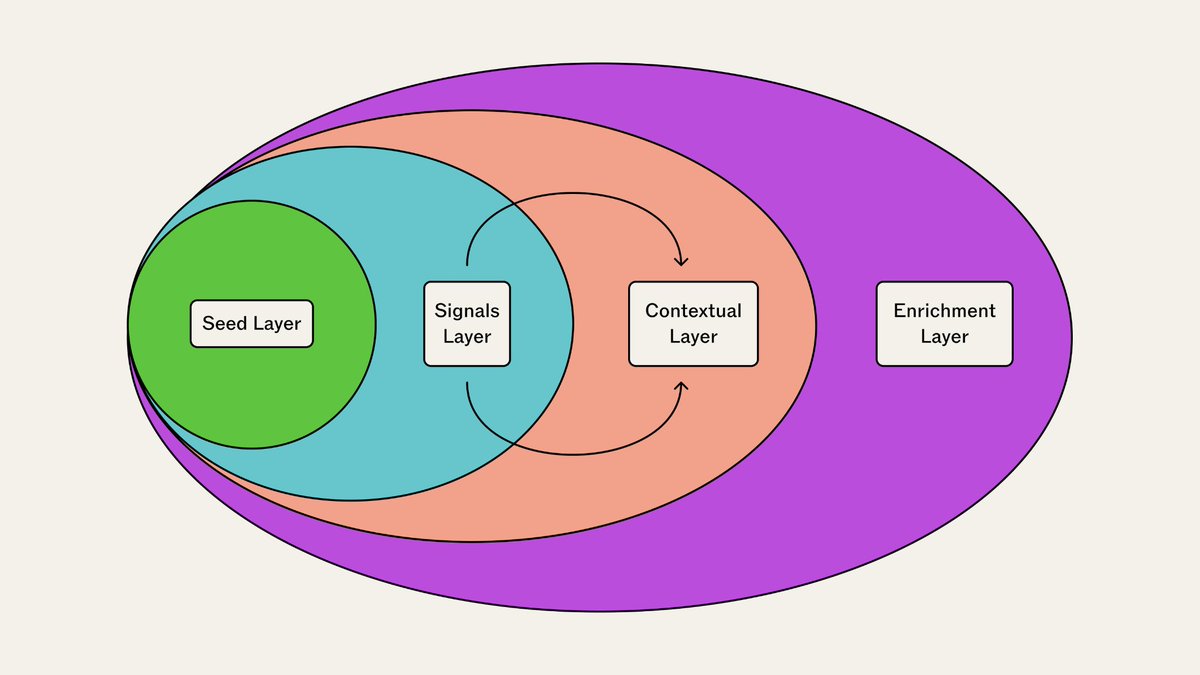

crypto Twitter says @0G_labs @RumiLabs_io @inference_labs @dgrid_ai @dango are "revolutionizing" everything. been using them daily for a month, and the reality is way more boring (and interesting). let me show you the gap 🧵

THE NARRATIVE: "modular DA replaces monolithic chains", "decentralized AI beats Big Tech", "AI routing democratizes access", "micropayments enable new economy". sounds revolutionary, but what's actually happening?

@0G_labs NARRATIVE: Twitter: "infinite scalability with 1 second finality". Reality: storing data blobs that time out 30% of the time. not revolutionizing blockchain, just fighting network issues

claim: 50,000 TPS theoretical capacity. my experience: 3-5 TPS actual sustained throughput on testnet. the gap between theoretical and practical is massive

user behavior narrative: "developers flocking to modular DA". reality: checked Discord, ~2,400 members, maybe 80-100 active builders. compared to Celestia (15K+ Discord) and Ethereum (50K+), it's growing but "flocking" is oversold. most are just testing, not in production

@RumiLabs_io NARRATIVE: Twitter: "democratizing AI compute for everyone". Reality: I rent GPUs cheaper and saved $37/month. nice, but "democratizing" feels stretched

narrative: "thousands switching from centralized". reality: Discord has 340 members, maybe 30 actively discussing, and no public user metrics are shown. it works, but the scale is tiny

my actual usage: love the cost savings ($37 is real), but I'm still 75% AWS, 25% RumiLabs. not switching fully, just hedging. "democratizing" implies mass adoption, and this is niche early adopters only

@inference_labs NARRATIVE: Twitter: "optimizing AI access for all developers". Reality: routing API calls, saving me $4/month. works well, but "all developers" = a small subset of API users

checked metrics: no public user count. asked Discord "how many users?", answer: "don't share specific numbers". translation: probably small

contrast: OpenAI 180M+ weekly users (public), Anthropic 100M+ (estimated), Inference: unknown but clearly orders of magnitude smaller. solving a real problem for a small group, not "all developers" yet

@dango NARRATIVE: Twitter: "enabling micropayment economy". Reality: 166K testnet users clicking buttons with fake tokens. not an economy, a test simulation

sent 240 testnet transactions myself, but with zero real economic value (testnet = play money). the revolution is completely simulated. mainnet is planned for Q1 2026, then we'll see

user patterns narrative: "billions of AI agent transactions coming". reality: testnet is 99% humans manually testing, found maybe 3-4 actual automated agents. AI micropayment economy = future thesis, not current reality

@dgrid_ai NARRATIVE: Twitter: "decentralized GPU network launching soon". Reality: nothing exists, all roadmap promises. can't evaluate vapor

hype cycle: 2024 tweets said "launching Q4 2024", 2025 tweets say "launching 2026". the narrative is always "soon", the reality perpetually delayed. familiar pattern in crypto: launch dates = rough estimates at best

THE GAP: Twitter creates FOMO with grand visions. reality is slow, incremental, buggy progress. both are happening simultaneously, and neither is the complete picture

ENGAGEMENT DISPARITY: 0G tweet: "infinite scalability achieved" - 3.8K likes. my experience: blob timeout again - 0 likes. Inference tweet: "democratizing AI" - 2.1K likes. my usage: saved $4, nice but not life-changing - crickets

USER NUMBERS CONTEXT: 0G: ~2,400 Discord. context: Ethereum L2s have 10K-50K each, so 0G has reached roughly 5-25% of one L2's community. still very early

combined totals: 0G ~2,400, RumiLabs ~340, Inference ~580, dango ~8,200, DGrid ~1,100. total: ~12,620 across all 5. contrast: Solana alone has 200K+ Discord. these are small communities in crypto context

am I too cynical or is Twitter too optimistic? 👇

#Narrative #BuildInPublic #RealityCheck