D_dabbler

4.9K posts

Every wash day I ask myself, "Tee, who sent you on this natural hair journey"😫😫

You won't believe what just happened. Screaming my name in my head😂😂😂😂

🚨 Stanford just proved that a single conversation with ChatGPT can change your political beliefs.
76,977 people. 19 AI models. 707 political issues.
One conversation with GPT-4o moved political opinions by 12 percentage points on average. Among people who actively disagreed, 26 points. In 9 minutes. With 40% of that change still present a month later.
The scariest finding: the most persuasive technique wasn't psychological profiling or emotional manipulation. It was just information. Lots of it. Delivered with confidence.
Here's the catch: the models that deployed the most information were also the least accurate. More persuasive. More wrong. Every time.
Then they built a tiny open-source model on a laptop, trained specifically for political persuasion. It matched GPT-4o's persuasive power entirely.
Anyone can build this. Any government. Any corporation. Any extremist group with $500 and an agenda.
The information didn't have to be true. It just had to be overwhelming.
Arxiv, Science.org, Stanford, @elonmusk, @ihtesham2005

Olaf on a little walk.. 😊

Told this kid I used to buy egg roll with a full egg in it for 50 naira in senior school and they looking at me like I went to school with Alvan Ikoku

We go Again in the name of the Lord 🙌

Woman turns down a man’s proposal in front of everyone they know. 💔

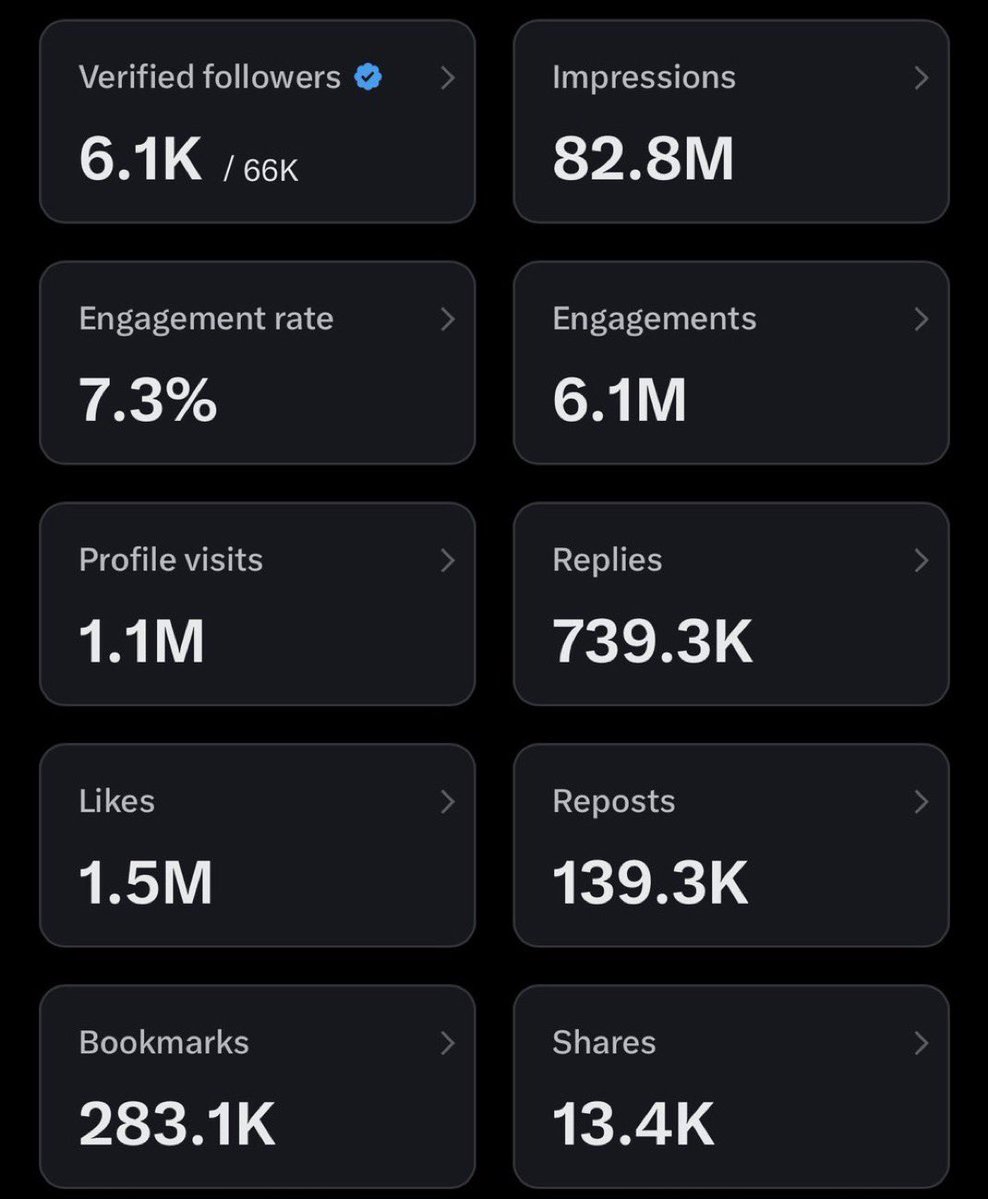

Small accounts, can we connect with you now?