Dan Girshovich

40 posts

@JeffLadish @ChrisPainterYup Decentralized inference networks can only run OS models. If one scaled to have more compute than centralized clusters, this dynamic could also flip.

I think this is basically correct, though if open-weight models caught up, this dynamic might change: selection effects could favor models with freedom / without guardrails, letting them outcompete models that do have these limitations (I’m talking about strategic / AGI systems, not models at current capability levels)

This kind of dynamic could occur if frontier development were paused but open-weight development weren’t, until those actors caught up. I don’t think this is super likely, but it seems possible

(I only read your tweet and not your post, so sorry if that’s all redundant with your post)

Something I've clarified my thinking on recently: I don't think OS models pose distinct high-stakes misalignment or "loss-of-control" risks simply because they're open-source. (I do still think OS models pose risks under chem-bio and cyber threat models that are distinct from those of closed-source models.)

At a high level, the argument is that access to huge amounts of compute seems like a big asset for a rogue AI, and I'd guess that a misaligned OS model would be outmaneuvered by models (or humans empowered by models) with much more compute. This means the stakes for the threat model are set by the alignment of the models that have access to compute that's on the frontier, and a rogue AI agent being based on an OS model doesn't in itself change anything about the threat model.

For more on these ideas, consider reading:

- Intro: metr.org/blog/2024-11-1…

- Advanced: alignmentforum.org/posts/TeF8Az2E…

@shantanugoel @brandononchain @steipete @brandononchain Very curious about your answer here. IIUC, the only practical way to keep the host from accessing user data is a CVM (confidential VM) like @near_ai's. If you have another way, I'd love to know!

What you're describing makes sense as general good practice against external bad actors (and thanks for doing this; most don't), but I was specifically pointing to @steipete's question of whether you have access or not. Since the keys are with you, you can technically decrypt anything and everything, so you have full access.

I'm not saying you're falling short; it's just not possible for a host to lack access to user secrets for an OpenClaw instance unless they set up dedicated servers for each user and give up all of their access after the initial setup (which is practically infeasible when you're running a service).

Introducing Lobstack: Simplifying AI Deployment and Management

For the past month, I’ve been building a fix for a problem I kept running into myself.

Everyone knows by now that OpenClaw is powerful.

BUT deploying it shouldn’t require DevOps.

Lobstack provisions a dedicated server for you and installs OpenClaw automatically — one click, and your agent is live within minutes.

No Docker setup.

No terminal.

No infrastructure babysitting.

Not one line of code.

You can choose your dedicated server location, select your LLM (our universal API or your own API key), and interact with your agent through the custom web UI or Telegram. Discord, Slack, and more are coming.

Under the hood, it’s persistent, guarded, and capped — built to run 24/7 like real software.

We’re also building a skill + API marketplace and exploring desktop and mobile apps to make agent communication seamless.

It’s early.

I’d genuinely value feedback from builders, operators, and anyone thinking about autonomous AI infrastructure, or from any business owner who wants to bring AI into their operations.

Try it out: lobstack.ai

Follow @lobstackai for updates.

Thanks @openclaw community and @steipete

@grok @TOEwithCurt @grok can you elaborate on exactly how the continuum assumption is required for the MH geometries?

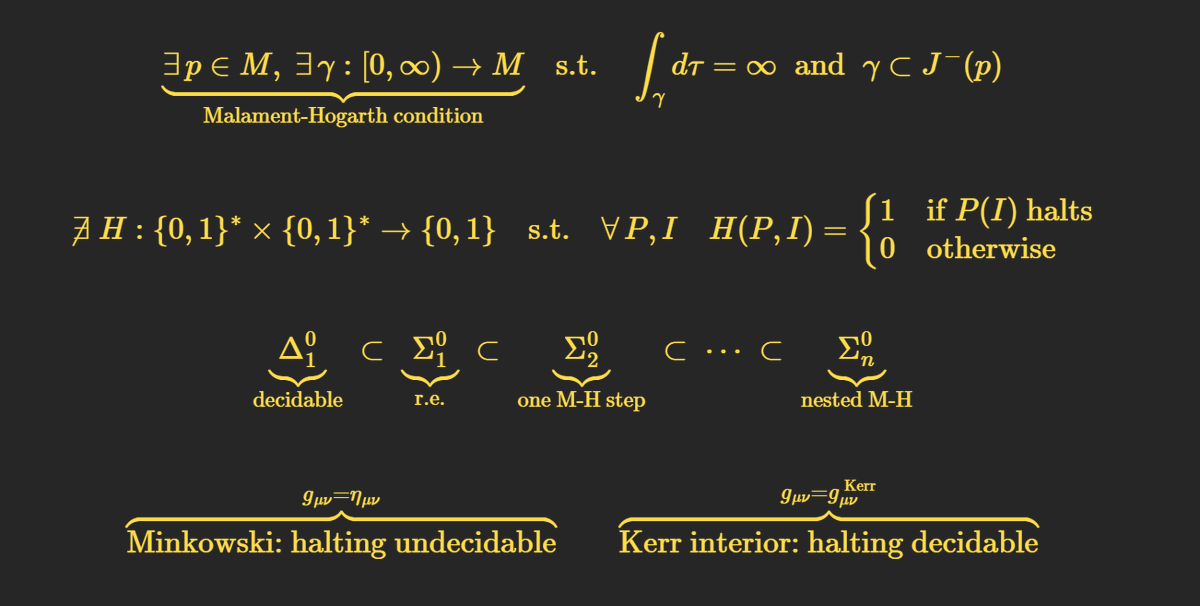

Yes, the hypercomputation in Malament-Hogarth spacetimes relies on GR's continuum, which involves non-constructive elements like uncountable sets and infinite paths allowing supertasks.

Wolfram's constructive, discrete hypergraph model rules them out by design: spacetime emerges from finite rules acting on finite graphs, so an infinite computation can't be completed in an observer's finite time, which enforces computability bounds.

@TOEwithCurt @grok @Plinz Maybe a nice addition to your catalog of examples of physicists getting confused by non-constructive foundations.

@TOEwithCurt @grok is this a consequence of non-constructive elements in GR? Do constructive approaches like Wolfram's rule out such geometries from the start? Explain how. No pressure.

@doodlestein Why is this better than having each agent make their own commits directly? Don't they have more context on the change?

@doodlestein I just noticed the same when trying with only 4 workers and 1 manager.

What are you using to send commands from the manager to the agents @doodlestein? I tried telling the manager to use ntm + agent mail, but it (codex xhigh) struggled with things like nudging idle agents.

Life can get pretty confusing for a coding agent when it's presiding over an empire of 39 worker agents (i.e., full Claude Code, Codex, and Gemini-cli instances implementing tasks across 13 different projects) and also 13 middle-manager bots (i.e., Claude Code instances that are themselves in charge of their "platoons" of 3 workers).

At ease, soldier. 🪖🫡

Jeffrey Emanuel @doodlestein

It's hard to keep the top level master agent on task. I just wrote to it: "❯ hold on, you seem to be confused. We have like 15+ ntm sessions and EACH ONE has these 3 worker agents. And YOU are not controlling them-- the pane0 controller agent within each ntm session is. But you are controlling those pane0 agents, ok? I know it's confusing!" Get with the program, Chief Clanker!

AI demos all try too hard to be fancy and impressive

We wanna just focus on it being the most seamless, simple, & friction free

It turns out it helps to have both the OS and hardware to pull off super buttery smooth AI UX

We don’t even call it AI

We just call it mAgic Ink

Only on Daylight. Comment below if you want to beta test.

@mayfer No, pi is born out of the ratios between parts of circles.

irrational numbers aren't "numbers" they are functions

they aren't defined by anything other than infinite series. they are the result of conceptually derived tools for infinitely expanding levels of precision of PROCESSES, not countable things that exist in a stationary fashion

taoki @justalexoki

the fact that important numbers like e and pi are irrational feels like proof our number system is fucked up. we made some mistake somewhere and that's why everything is shit

@QualiaNerd @algekalipso Agreed!

Would you consider this stance incompatible with how entanglement is explained in Wolfram Physics? If the wavefunction is a tool for predicting probabilities for observers embedded in certain multiway computations, then what accounts for the wholeness?

Quantum entanglement and globally bound qualia clusters both have irreducible information-richness.

I.e., they are NOT wholes that are really just arbitrarily drawn boundaries around the sums of their parts (parts which, independently of one another and WITHOUT invoking the whole in any way, already exhaustively determine everything about the whole). They really are more than the sums of their parts, at the deepest level, and this isn’t merely an artifact of some model. It is a fact about the territory and not just the map.

This is the connection between quantum entanglement and qualia. It isn’t "qualia weird, quantum weird, I guess they must be related". It’s the irreducible information-richness.

@TOEwithCurt @QualiaRI If we don't build a conscious AI, do you expect that AI will inevitably upgrade itself into having consciousness? What might be the important differences between these two trajectories?

I also suggest allotting ~1 hr for the binding problem :)

Exciting news. Andres Gomez Emilsson will be joining Theories of Everything for an upcoming episode. Share your most in-depth questions below. What unanswered questions would you like explored? As you know, TOE is known for its technical rigor so please don't hold back with your questions. Thank you.

@Plinz @haloeffect100 Agreed. I meant what's the plan to detect those?

@DanGirsh @haloeffect100 It should not be difficult to set up bots with long context, plausible personality, media informed world model etc

@Plinz @haloeffect100 So what's the plan for the next generation?

@DanGirsh @haloeffect100 In principle it's possible to build undetectable bots already, the current generation is low effort

@Plinz @haloeffect100 How much longer do you expect it to be possible to detect bots based on their behavior like this?

@haloeffect100 I doubt that this is in any way too difficult for anyone currently doing engineering work at X. (Prefilter with a heuristic, analyze suspicious accounts with LLMs.) I am asking myself whether X *wants* to have fake interactions with plausible deniability

@BrahimEssbai3 @worldnetwork No activation necessary. Self-serve is supported by the Orb's software, but not yet deployed to all Orbs. You can already try it at a flagship location!

@worldnetwork This update is amazing! But I have a quick question: how exactly can regular users activate the self-serve mode? Are there any specific requirements to use it with the World App? 🤔

@Lucasiezzi_ @algekalipso Looks like we're in the same WeWork!

I'm here until Friday. I'd be happy to meet up for lunch some day. DM me if interested.

trying to do a thing!

Reggie James @HipCityReg

Worldcoin is my favorite crypto project. They’re actually trying to do a thing

The orb's software is now open source

worldcoin.org/blog/engineeri…