Yatin Dandi

157 posts

@DandiYatin

PhD student (EPFL, Switzerland), interested in the theory of deep learning and statistical physics of computation

Joined May 2015

1.6K Following, 333 Followers

Yatin Dandi retweeted

Fantastic workshop on High-Dimensional Learning Dynamics at BIRS. All talks here:

birs.ca/events/2026/5-…

Yatin Dandi retweeted

J Cole sampled Andrew Wiles’ interview talking about his work on Fermat’s Last Theorem lol

J. Cole@JColeNC

MIDNIGHT

Yatin Dandi retweeted

Excited to contribute to GPT-5.2! Thanks, and congrats to all teammates!

OpenAI@OpenAI

GPT-5.2 Thinking evals

Yatin Dandi retweeted

🚨 Incredibly excited to share that I'm starting my research group focusing on AI safety and alignment at the ELLIS Institute Tübingen and Max Planck Institute for Intelligent Systems in September 2025! 🚨

Hiring. I'm looking for multiple PhD students: both those able to start in Fall 2025 (i.e., as soon as possible) and through centralized programs like CLS, IMPRS, and ELLIS (the deadlines are in November) to start in Spring–Fall 2026. I'm also searching for postdocs, master's thesis students, and research interns. Fill the Google form below if you're interested!

Research group. We will focus on developing algorithmic solutions to reduce harms from advanced general-purpose AI models. We're particularly interested in alignment of autonomous LLM agents, which are becoming increasingly capable and pose a variety of emerging risks. We're also interested in rigorous AI evaluations and informing the public about the risks and capabilities of frontier AI models. Additionally, we aim to advance our understanding of how AI models generalize, which is crucial for ensuring their steerability and reducing associated risks. For more information about research topics relevant to our group, please check the following documents:

- International AI Safety Report,

- An Approach to Technical AGI Safety and Security by DeepMind,

- Open Philanthropy’s 2025 RFP for Technical AI Safety Research.

Research style. We are not necessarily interested in getting X papers accepted at NeurIPS/ICML/ICLR. We are interested in making an impact: this can be papers (and NeurIPS/ICML/ICLR are great venues), but also open-source repositories, benchmarks, blog posts, even social media posts—literally anything that can be genuinely useful for other researchers and the general public.

Broader vision. Current machine learning methods are fundamentally different from what they used to be pre-2022. The Bitter Lesson summarized and predicted this shift very well back in 2019: "general methods that leverage computation are ultimately the most effective". Taking this into account, we are only interested in studying methods that are general and scale with intelligence and compute. Everything that helps to advance their safety and alignment with societal values is relevant to us. We believe getting this—some may call it "AGI"—right is one of the most important challenges of our time.

Join us on this journey!

Yatin Dandi retweeted

More mind blowing projects on physics of AI in the years to come. Thank you @SimonsFdn for the support!!!

Simons Foundation@SimonsFdn

Our new Simons Collaboration on the Physics of Learning and Neural Computation will employ and develop powerful tools from #physics, #math, computer science and theoretical #neuroscience to understand how large neural networks learn, compute, scale, reason and imagine: simonsfoundation.org/2025/08/18/sim…

Yatin Dandi retweeted

🎉 Congratulations to our researchers who were selected by the @ERC_Research as part of the 2024 call for proposals for the Advanced Grant competition: A. Ailamaki, C. Brès, P. Gönczy, E. Matioli, K. Moselund, M. Payer, F. Stellacci and L. Zdeborova.

go.epfl.ch/ERC-grants25

Yatin Dandi retweeted

Announcing our first Distinguished Lecture Series speaker: Lenka Zdeborova @zdeborova of @icEPFL with a talk titled "Statistical Physics Perspective on Understanding Learning with Neural Networks." Please join us at TTIC on Wednesday, March 26 at 11:00am in Room 530, 5th floor.

Yatin Dandi retweeted

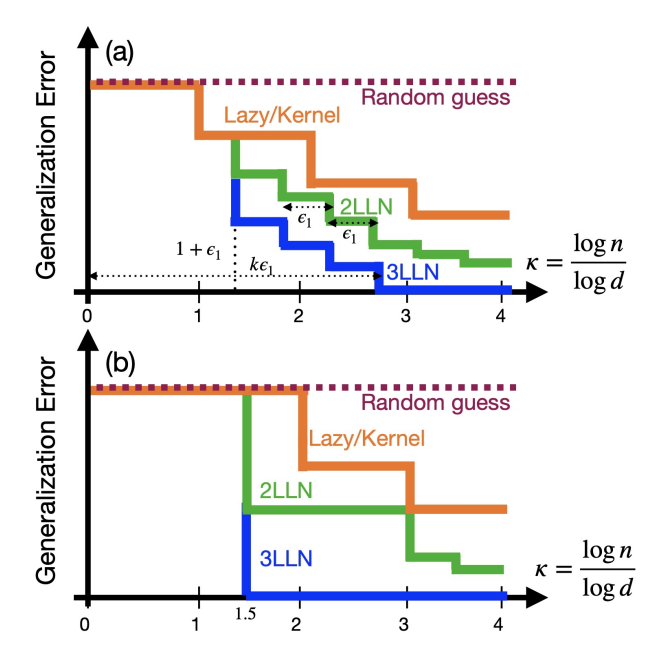

Excited about our progress in characterizing The Computational Advantage of Depth in Learning with Neural Networks. Check out the number of samples that can be saved when GD runs on a multi-layer rather than on a two-layer neural network. arxiv.org/pdf/2502.13961

Yatin Dandi retweeted

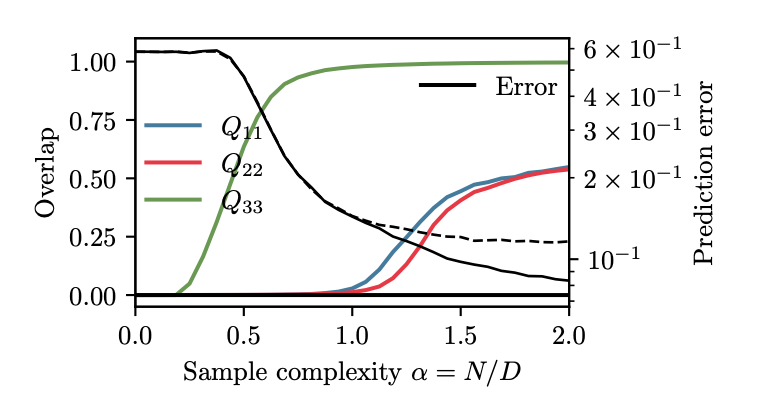

Did you think deep attention networks would be very hard to analyze theoretically? No more! Meet a model of deep dot-product attention with softmax that maps to a multi-index model, and theory provides its learning curve for high embedding dimensions. arxiv.org/pdf/2502.00901

Yatin Dandi retweeted

The slides for the talk that I gave last week (Unlocking Hidden Dimensions: The Power of Generalized Eigenvectors) are now available at axler.net/conferences.ht… .

#linearalgebra

Yatin Dandi retweeted

Congrats @KrzakalaF: Three SNSF Advanced Grants awarded to EPFL researchers - EPFL actu.epfl.ch/news/three-sns…

Yatin Dandi retweeted

In 1824, a young French military engineer named Sadi Carnot published a 118-page book titled “Reflections on the Motive Power of Fire,” which he sold on the banks of the Seine for 3 francs. He died of cholera several years later, having sparked a new field that would become thermodynamics. quantamagazine.org/what-is-entrop…

Yatin Dandi retweeted

'How Two-Layer Neural Networks Learn, One (Giant) Step at a Time', by Yatin Dandi, Florent Krzakala, Bruno Loureiro, Luca Pesce, Ludovic Stephan.

jmlr.org/papers/v25/23-…

#neurons #batch #gradient

Yatin Dandi retweeted