Daniel Shao

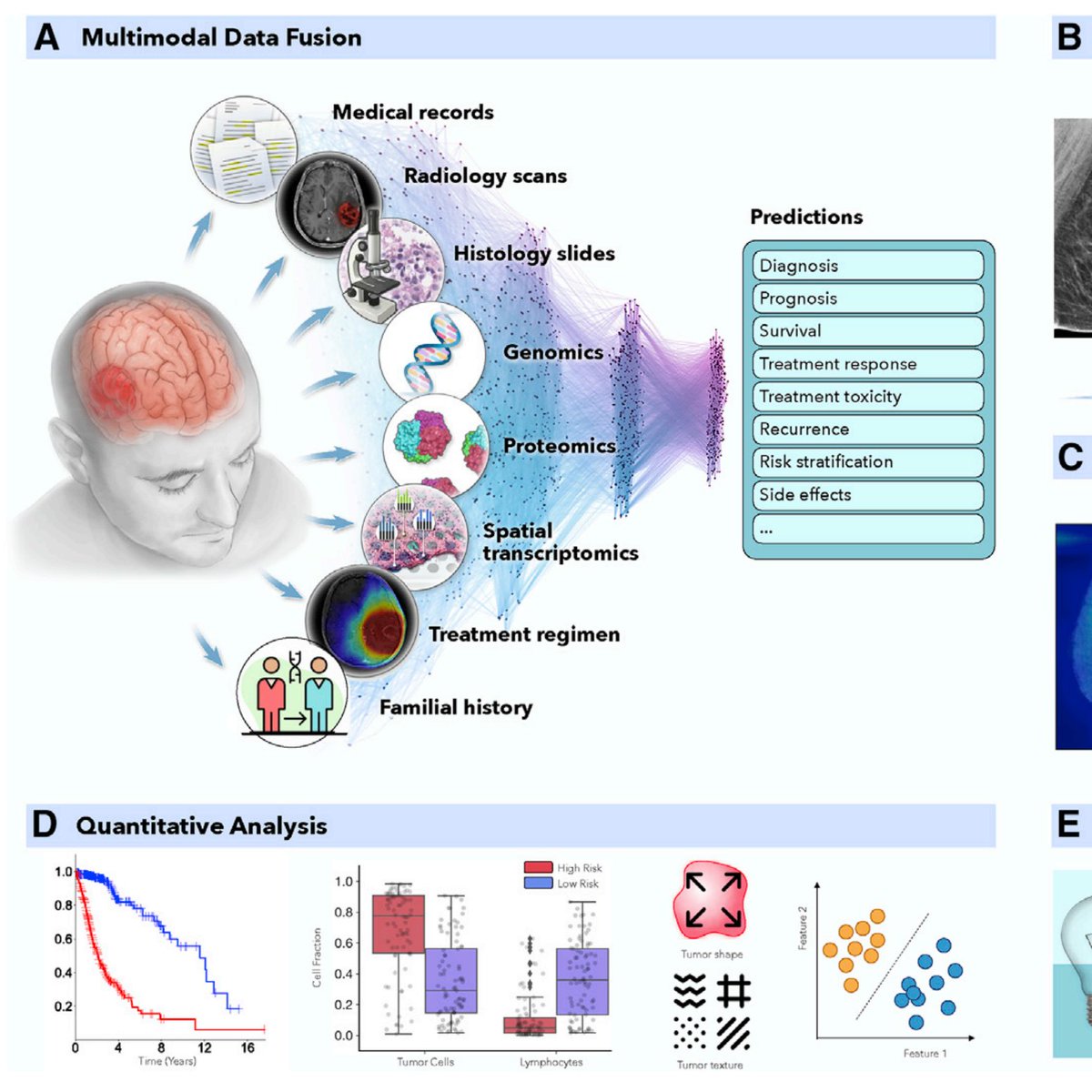

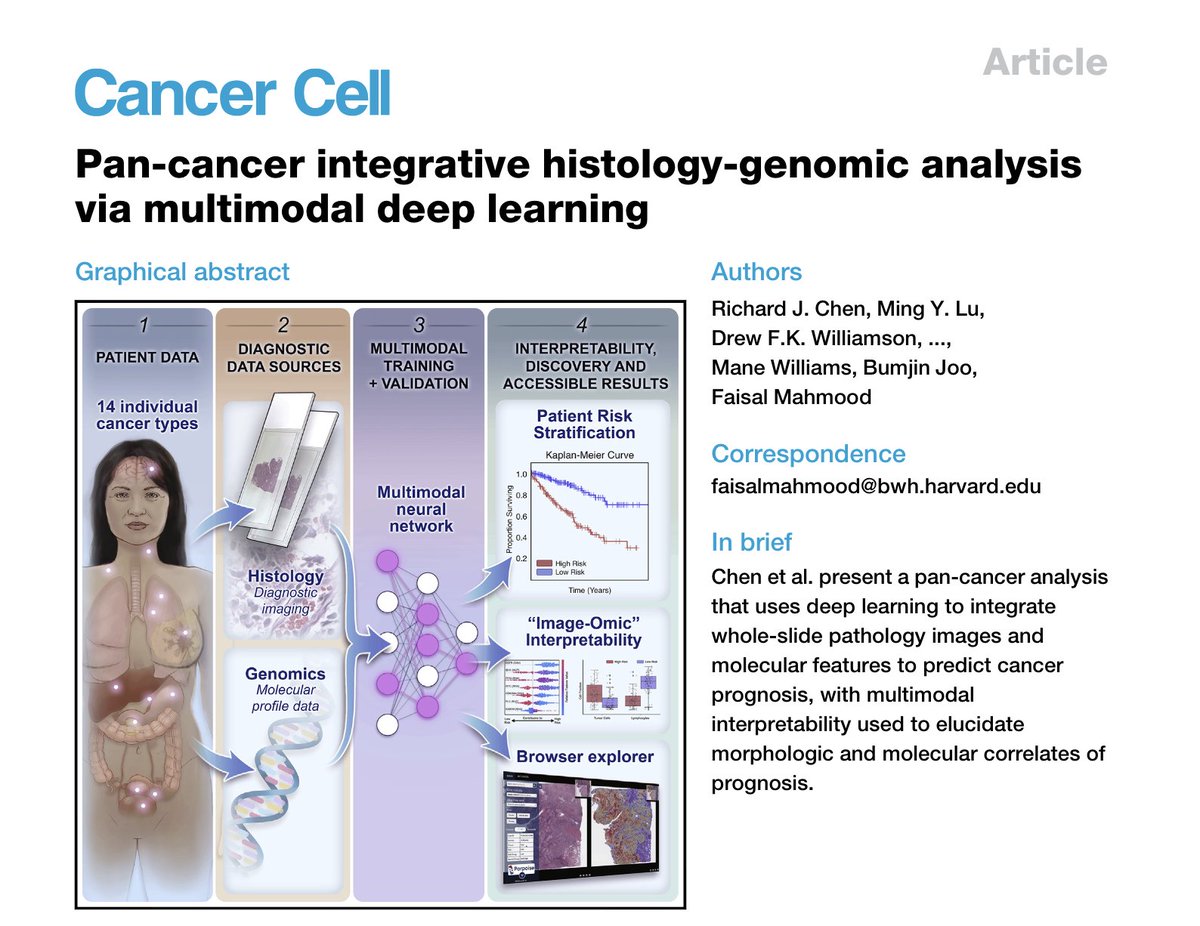

📣 Excited to share our new ICML 2025 Spotlight article, “Do Multiple Instance Learning Models Transfer?”, which addresses a foundational question for building robust and generalizable MIL models. Read the article: arxiv.org/pdf/2506.09022

👉 Enhanced Performance & Robustness: Pretrained MIL models consistently lead to improved performance, even when the pretraining data comes from a different organ, site, or disease model than the target task.

👉 Aggregation Transfers: Transfer gains come from the MIL aggregation module, not just patch encoders. Resetting attention layers drops performance by 5–8%, showing they encode generalizable pooling logic.

👉 Pancancer Generalization: Models pretrained on more diverse and challenging data (e.g. a 108-class pancancer classification task) achieve the strongest overall transfer performance.

👉 Robust benefits across patch encoders: Benefits from MIL transfer are consistent across a wide range of patch encoders, from out-of-domain encoders such as ResNet50 pretrained on natural images to in-domain encoders including Gigapath and UNIv2.

This research highlights supervised pretraining as a highly accessible path to generalizable MIL models, offering a data- and compute-efficient route for developing slide-level encoders with flexible combinations of MIL method and patch encoder.

Congratulations to @Daniel__Shao @GreatAndrew90 @richardjchen and everyone else who contributed. Stay tuned for an array of pretrained MIL models ready to transfer to any task! Visit us at @icmlconf.
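For readers unfamiliar with what "transferring the aggregation module" means, here is a minimal, dependency-free sketch. It is not the authors' implementation: the function names (`attention_pool`, `init_model`, `transfer`, `predict`), the single-vector linear attention, and the random stand-in weights are all assumptions made for illustration. The idea shown is that the attention weights learned during pretraining are copied to the target model, while the classification head is always reinitialized for the new label set. Setting `reset_attention=True` mimics the "resetting attention layers" ablation mentioned in the post.

```python
import math
import random


def attention_pool(bag, w_attn):
    """Pool a bag of patch features into one slide vector via softmax attention."""
    # One scalar score per patch (simplified linear attention, an assumption).
    scores = [sum(w * x for w, x in zip(w_attn, patch)) for patch in bag]
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    dim = len(bag[0])
    slide = [sum(a * patch[d] for a, patch in zip(weights, bag)) for d in range(dim)]
    return slide, weights


def init_model(dim, n_classes, rng):
    """Randomly initialize a toy MIL model: attention vector plus linear head."""
    return {
        "attention": [rng.gauss(0, 0.1) for _ in range(dim)],
        "head": [[rng.gauss(0, 0.1) for _ in range(dim)] for _ in range(n_classes)],
    }


def transfer(pretrained, n_target_classes, rng, reset_attention=False):
    """Build a target-task model from a pretrained one (hypothetical helper)."""
    dim = len(pretrained["attention"])
    # The head is always fresh because the target task has its own classes.
    model = init_model(dim, n_target_classes, rng)
    if not reset_attention:
        # Reuse the pretrained pooling weights (the "aggregation transfer").
        model["attention"] = list(pretrained["attention"])
    return model


def predict(model, bag):
    """Return one logit per class for a bag of patch features."""
    slide, _ = attention_pool(bag, model["attention"])
    return [sum(w * x for w, x in zip(row, slide)) for row in model["head"]]


rng = random.Random(0)
pretrained = init_model(dim=4, n_classes=108, rng=rng)  # stand-in for a pancancer model
target = transfer(pretrained, n_target_classes=2, rng=rng)
ablated = transfer(pretrained, n_target_classes=2, rng=rng, reset_attention=True)

bag = [[rng.random() for _ in range(4)] for _ in range(5)]  # 5 patches, 4-dim features
logits = predict(target, bag)
```

In a real pipeline the patch features would come from a patch encoder and both models would be fine-tuned on the target task in a deep learning framework; this sketch only shows which parameters are carried over and which are reset.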

Three #CWRU students—Zahin Islam, Daniel Shao and Evan Vesper—will each receive $7,500 toward tuition, room and board, books and other expenses per academic year as Barry Goldwater Scholarship recipients. ow.ly/pEQd50BeQog #Congratulations