Daniel ØG

@Danzzy327

Ethereum core | Ton core | Building the future Onchain promised

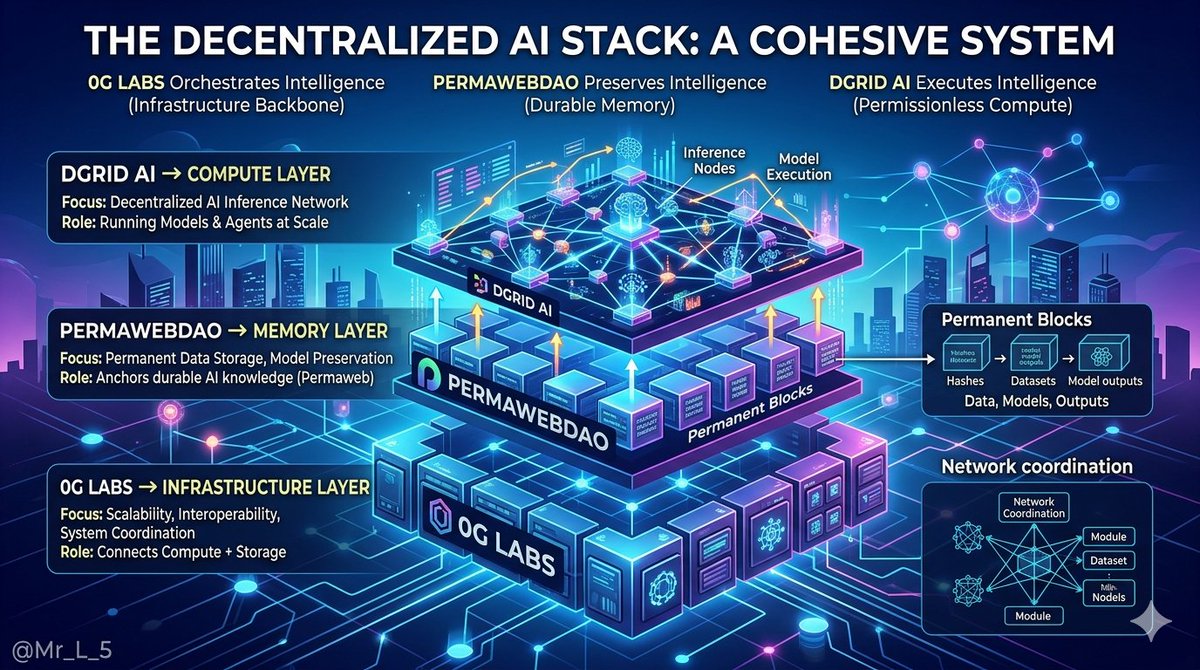

The Infrastructure Beneath the Noise

The crypto market is loud. New tokens launch daily. Narratives shift weekly. Attention moves constantly. But beneath all that noise, real infrastructure gets built by teams who don't chase the spotlight. Three projects stand out.

@dango

Dango looked at every decentralized exchange and saw the same flaw. Smart contracts were never designed for order books. Blocks introduce latency. Traders accepted compromise. Dango rejected this. They built a chain where the Central Limit Order Book lives at consensus level. Matching and settlement happen in the same instant because they are the same mechanism. When Dango executes a trade, there is no gap between confirmation and finality. No waiting. No overhead. Just capital moving at the speed of consensus. Dango is not a faster DEX. Dango is a new category: a blockchain architected to move value and nothing else.

@0G_labs

0G Labs looked at artificial intelligence and saw a scaling problem no blockchain could solve. Training needs petabytes of throughput. Inference demands low-latency compute. 0G Labs built a modular operating system where consensus, data availability, and execution each operate in their own lane. Each scales independently. Throughput grows as fast as the network grows. Data on 0G Labs flows without permission. Compute expands without ceilings. 0G Labs provides the raw power that makes decentralized AI possible at scale.

@dgrid_ai

DGrid AI looked at powerful AI and saw a trust deficit. Models making decisions about loans and trades were operating without verification. Black boxes in transparent systems. DGrid AI solved this through Proof of Quality consensus. Every inference carries cryptographic proof. Verification nodes sample outputs. Results that pass are rewarded. Results that fail are slashed. Every operation on DGrid AI leaves an auditable trail. Every output comes with a witness. Trust becomes a property of the system, not a promise.

The Stack

A model trains on 0G Labs. It generates a signal. DGrid AI verifies that signal. Dango executes it. Compute from 0G Labs. Verification from DGrid AI. Settlement from Dango. Three layers. Each specialized. Each essential.

@dango, @0G_labs, and @dgrid_ai are not competing with each other. They are competing with the idea that one chain should do everything. The market will keep cycling through narratives. These three will keep building through every cycle.

Dango settles. 0G Labs scales. DGrid AI verifies.
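
The sample, reward, slash loop described for Proof of Quality above can be made concrete with a toy sketch. This is not DGrid AI's actual protocol: the `Node` class, the `audit` function, the sampling rate, the reward size, and the slash fraction are all hypothetical, chosen only to show the shape of the idea (verifiers spot-check a sample of outputs, passing results earn a reward, failing results burn part of the stake).

```python
import random
from dataclasses import dataclass


@dataclass
class Node:
    """Hypothetical inference node with staked collateral."""
    name: str
    stake: float
    rewards: float = 0.0


def audit(node, outputs, check, sample_rate=0.5, reward=1.0,
          slash_fraction=0.1, rng=None):
    """Spot-check a sample of a node's outputs.

    Each sampled output that passes `check` earns `reward`; each one that
    fails burns `slash_fraction` of the node's remaining stake.
    All parameters are illustrative assumptions, not protocol values.
    """
    if not outputs:
        return []
    rng = rng or random.Random()
    # Sample at least one output, never more than exist.
    k = min(len(outputs), max(1, round(len(outputs) * sample_rate)))
    sampled = rng.sample(outputs, k)
    for out in sampled:
        if check(out):
            node.rewards += reward
        else:
            node.stake -= node.stake * slash_fraction
    return sampled


# Usage: audit every output (sample_rate=1.0) of a node that got one wrong.
node = Node(name="node-a", stake=100.0)
audit(node, ["ok", "ok", "bad"], check=lambda o: o == "ok", sample_rate=1.0)
print(node.rewards, node.stake)
```

The point of sampling rather than checking everything is cost: a node cannot know in advance which outputs will be audited, so a modest sampling rate plus a meaningful slash can make cheating unprofitable on average.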

DGrid is solving a coordination problem nobody named out loud. In a decentralized AI network, every participant assumes someone else is verifying the output. Nobody is. That diffusion of responsibility is not a bug in a specific implementation. It is a structural feature of how these networks were designed. Proof of Quality does not just verify inference. It closes the gap that distributed systems naturally create when accountability has no fixed address.

---

@permacastapp is changing something about how niche content survives. On traditional platforms, obscure work fades because the algorithm stops surfacing it and the creator stops paying for a feed nobody visits. On Arweave, obscurity is not a death sentence. A niche podcast published today stays exactly as reachable in fifteen years as it is right now. The long tail of human knowledge does not need an audience today to justify its existence tomorrow. Permacastapp is infrastructure for ideas that take time to find their people.

0G Labs isn’t just another blockchain project; it’s the backbone of decentralized AI at scale.

In a world where artificial intelligence is rapidly becoming the most valuable resource, the biggest challenge isn’t intelligence itself; it’s infrastructure.

Centralized AI systems dominate today because they have:

- Massive compute power
- Fast data processing
- Scalable storage

But they come with a cost:

👉 Control is concentrated
👉 Access is limited
👉 Innovation is restricted

This is exactly where 0G Labs steps in.

The Core Advantage (Your “Longs Privilege” Angle)

0G Labs provides raw, scalable power, not just tools. That means:

⚙️ High-performance decentralized compute
📦 Modular data availability
🚀 Ultra-fast processing layers for AI workloads

Instead of building AI on top of weak decentralized systems,
👉 0G flips the model: it builds infrastructure for AI from the ground up.

The infrastructure layer for decentralized intelligence

Content Strategy You Can Use

1. Narrative Hook (Use This Style Often)

“AI doesn’t scale without power. And power shouldn’t be centralized.”

2. Educate Simply

Break it into:

- AI needs compute
- Compute is expensive
- Centralization controls it
- 0G decentralizes it

Highlight the Shift

Old world:

- Web2 AI (OpenAI, Google, etc.)
- Closed systems

New world:

- Permissionless AI
- Open infrastructure
- Decentralized compute

👉 0G = bridge between both worlds

Growth Strategy (If You’re Promoting or Investing Attention)

A. Early Narrative Advantage

Projects like this win when people understand them early. So:

- Focus on explaining, not hyping
- Break complex ideas into simple posts

B. Content Angles That Work

Rotate between:

- “Why decentralized AI matters”
- “Problems with centralized AI”
- “Future of AI infrastructure”
- “How 0G fits into the future”

DGrid AI vs Unstructured Intelligence: A Strategic Framework

Core Statement

DGrid AI formalizes intelligence into a self-governing system, while unstructured intelligence relies on continuous human oversight. This is not just a technical difference; it’s a shift in how intelligence operates, scales, and creates value.

Understanding the Two Models

A. DGrid AI (Structured / Formalized Intelligence)

DGrid AI represents:

- Organized intelligence systems
- Rule-based + adaptive frameworks
- Self-regulating decision loops
- Distributed control (not dependent on one authority)

👉 Think of it as: “An intelligence system that can run, adjust, and improve itself with minimal supervision.”

Key Characteristics:

- Defined architecture
- Feedback-driven learning
- Autonomous correction
- Scalable without chaos

B. Unstructured Intelligence

This is the traditional model:

- Human-driven decisions
- Fragmented systems
- Reactive instead of proactive
- Heavy dependence on supervision

Think of it as: “Intelligence that works only when someone is actively managing it.”

Key Characteristics:

- Inconsistent outcomes
- Slower decision cycles
- High human cost
- Limited scalability

Strategic Advantage of DGrid AI

A. Autonomy = Speed

When a system governs itself:

- Decisions happen instantly
- No waiting for approvals
- Continuous operation (24/7 intelligence)

Strategy Insight: Speed becomes a competitive weapon

The narrative is shifting toward coordinated, decentralized systems. @0G_labs is building the modular infrastructure (compute and data layers) that powers AI at scale. @dgrid_ai is rebuilding the intelligence layer with decentralized agents and aligned incentives. Own the rails.

DGrid_ai ✔️

Diving deep into DGrid AI, the decentralized AI inference network that's quietly reshaping how we access powerful LLMs in Web3.

In a world where OpenAI, Anthropic & co. control the gates (high costs, black-box decisions, rate limits, censorship risks), DGrid flips the script:

1. Thousands of distributed nodes (resilient & always-on)
2. 120+ leading AI models (and growing)
3. Developers & apps via one unified API

No more juggling multiple provider keys or dealing with centralized downtime. Just plug in, switch models instantly, and get trustless, verifiable outputs.

Key highlights right now (March 2026):

Unified API → Access hundreds of models (open-source + premium) with a single endpoint. Smart routing picks the fastest/cheapest node automatically.

Decentralized & verifiable → Built on blockchain for transparency. Inference tasks are distributed, with mechanisms like Proof of Quality and slashing of bad actors via staked $DGAI.

AI Arena → Live and paying USDT rewards! Jump in, compare model outputs side-by-side, vote on which is better → help benchmark & improve models while earning real money. Human preference data is gold in 2026.

Low-cost / high-resilience → No middleman markups. Free models available, premium at near-supplier prices. Thousands of nodes mean redundancy & speed.

✔️ Permacastapp is the AI-powered permanent media network built by PermawebDAO on Arweave. It lets you upload podcasts, audio shows, videos, news articles, and long-form content so it stays online forever.

Main point: Content goes on Arweave: pay once, stored permanently. No monthly hosting fees, no risk of platform deletion, no 404 links years later. Once uploaded, it's immutable, verifiable, censorship-resistant, and accessible through any Arweave gateway.

PermawebDAO's goal is bigger: create a sustainably evolving Web3 ecosystem with permanent data as the foundation. They build infrastructure where apps, trading systems, governance, and media share stable, referenceable data that doesn't disappear or fragment.

Permacast features right now:

1. Permanent storage on Arweave: content becomes on-chain assets anyone can reference across platforms and time.
2. AI Media Intelligence Engine: automatic summarization, tagging, sentiment analysis, trend detection, smart distribution, and optimization so your content reaches the right people.