DATPIFF Hip-Hop Mixtape Archives

archive.org/details/datpif…

Via Joey @gothamhiphop and @internetarchive

David Holmes

497 posts

@DavidHolmes

Long-time blogger & digital curator | sound engineer & musician | Internet music aficionado. Specialized lists available.

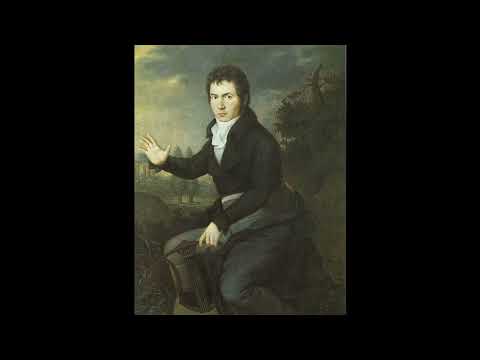

A new cinematic AI piece. Continuing with the @Magnific_AI + @midjourney + @runwayml combination, but in the setting of a museum. It's an introspection on who is watching whom in the art world.👀 "Panopticon - Museum"

If we have to reinvent punk music to get the $8.5 trillion message out, we will! 4 more #FreakPower music vids to come ~ zero1.is/freakpower/mag…