DeepInfra

620 posts

@DeepInfra

Fast ML inference. Run top AI models using a simple API.

Palo Alto · Joined February 2023

65 Following · 4.8K Followers

DeepInfra has raised $107M in Series B funding 🚀

AI is moving from training to production-scale deployment, and inference is becoming the system constraint.

DeepInfra was built for this shift: scaling high-throughput inference for open-source and agent-driven workloads. Grateful to our investors and partners; the round was co-led by @500GlobalVC and @gharik.

DeepInfra is now a first-class provider in @OpenClaw. One key, every model. 🦞

OpenClaw 🦞 @openclaw

OpenClaw 2026.4.27 🦞

🧠 DeepInfra provider

📎 better file attachments

🛡️ operator-managed proxy routing

🧭 stricter model selection + local model fixes

🔧 gateway, channel, and session reliability

Ships more than it brags. github.com/openclaw/openc…

DeepInfra retweeted

P-Video-Avatar is now the fastest model for top-quality and diverse avatar generation.

- It costs $0.025 per second of video, making it up to 6× cheaper than similar avatar tools.

- It takes 1.83 s of generation time per second of video, making it up to 18× faster than similar avatar tools.

- It offers full-body control and integrated audio support in 20+ languages, and it handles long-form video with continuous clips of up to 3 minutes.
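A quick way to read the per-second figures above: cost and generation time both scale linearly with clip length. The sketch below is a hypothetical helper (`estimate_clip` is our name, not part of any Pruna or DeepInfra API) and assumes simple per-second billing with no rounding, minimum charges, or platform fees.

```python
# Hypothetical helper: assumes linear per-second billing with no rounding,
# minimum charges, or platform fees. Constants are the figures quoted above.
PRICE_PER_VIDEO_SECOND_USD = 0.025   # $ per second of generated video
GEN_SECONDS_PER_VIDEO_SECOND = 1.83  # generation time per second of video
MAX_CLIP_SECONDS = 180               # continuous clips of up to 3 minutes


def estimate_clip(duration_s: float) -> tuple[float, float]:
    """Return (cost_usd, generation_time_s) for a clip of duration_s seconds."""
    if not 0 < duration_s <= MAX_CLIP_SECONDS:
        raise ValueError(f"duration must be in (0, {MAX_CLIP_SECONDS}] seconds")
    cost_usd = duration_s * PRICE_PER_VIDEO_SECOND_USD
    generation_s = duration_s * GEN_SECONDS_PER_VIDEO_SECOND
    return cost_usd, generation_s


if __name__ == "__main__":
    for seconds in (10, 60, 180):
        cost, gen = estimate_clip(seconds)
        print(f"{seconds:>3} s clip: ${cost:.2f}, ~{gen:.0f} s to generate")
```

Under these assumptions a maximum-length 3-minute clip comes to about $4.50 and roughly 5.5 minutes of generation time.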

We are launching with free usage until Sunday 23:59 CET at @replicate, @WiroAI, @inference_sh, @Runware, @Scenario_gg

And with general availability at:

@deepinfra, @Eachlabs

Try it now: …-playground-production.up.railway.app

📚 API & quickstart for Pruna API: docs.api.pruna.ai/guides/quickst…

⭐️ Star our OSS: github.com/PrunaAI/pruna

🌐 Website: pruna.ai

DeepInfra retweeted

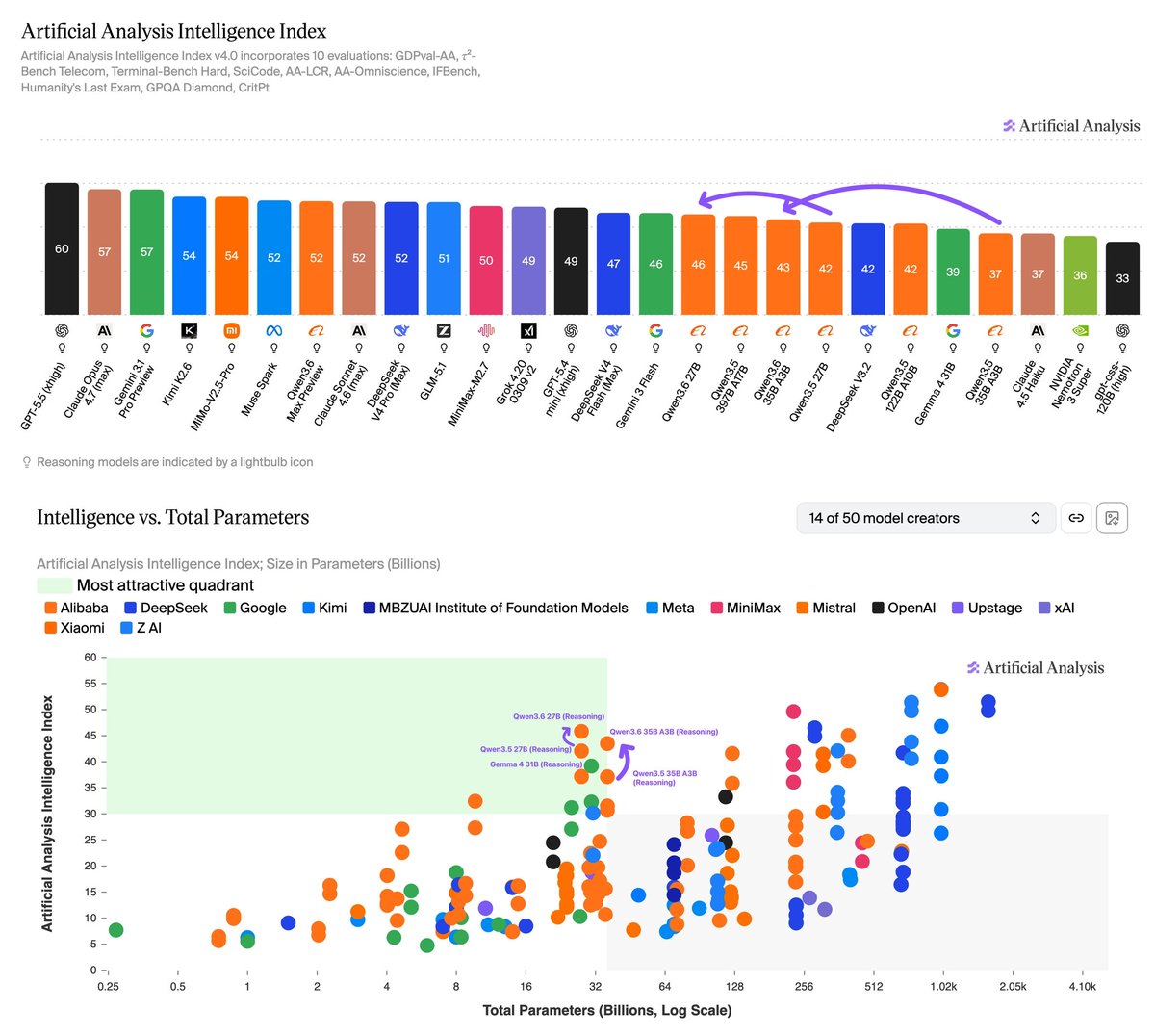

Alibaba's Qwen3.6 27B is the new open weights leader under 150B parameters, scoring 46 on the Artificial Analysis Intelligence Index, but it uses ~3.7x the output tokens of Gemma 4 31B (39) and costs ~21x more to run the full Intelligence Index.

@Alibaba_Qwen has released two open weights models in the Qwen3.6 family: Qwen3.6 27B (Dense, 46 on the Intelligence Index) and Qwen3.6 35B A3B (MoE, 43). The MoE variant has 36B total parameters but only activates 3B per forward pass. Both are Apache 2.0 licensed, support 262K context, include native multimodal input, and use the unified thinking/non-thinking hybrid architecture.

Unlike with Qwen3.5, Alibaba has not released larger Qwen3.6 models as open weights: Qwen3.6 Plus and Qwen3.6 Max Preview remain proprietary, so every model in the Qwen3.6 open weights family is currently under 50B parameters. All scores below are for reasoning mode. The Intelligence Index is our synthesis metric incorporating 10 evaluations covering agentic tasks, coding, and scientific reasoning.

Key takeaways:

➤ Qwen3.6 27B is the most intelligent open weights model under 150B parameters. At 46 on the Intelligence Index, Qwen3.6 27B is ahead of Qwen3.6 35B A3B (43), Qwen3.5 27B (42), and Gemma 4 31B (39). It is also ahead of larger open weights models including NVIDIA Nemotron 3 Super 120B A12B (Reasoning, 36), Qwen3.5 122B A10B (42) and gpt-oss-120b (high, 33). In native BF16 precision, the 27B needs ~56GB to store its weights, which fits on a single H100; with 4-bit quantization, the weights fit on consumer hardware with 16GB+ of RAM.

➤ Qwen3.6 35B A3B is the most intelligent open weights model with ~3B active parameters, 6 points ahead of Qwen3.5 35B A3B (37) and 13 points ahead of GLM-4.7-Flash (30). Other ~3B active peers include Gemma 4 26B A4B (31), Qwen3 Coder Next (80B total, 28), and NVIDIA Nemotron Cascade 2 30B A3B (28).

➤ The AA-Omniscience improvement is driven entirely by abstention rather than accuracy. Qwen3.6 27B's hallucination rate falls from 80% to 48% versus Qwen3.5 27B, while accuracy is roughly flat. This is consistent with our finding that AA-Omniscience accuracy typically correlates with total parameter count, and Qwen3.6 27B keeps the same 27B parameter count as its predecessor. The 35B A3B shows the same pattern: hallucination drops from 84% to 50% while accuracy stays equivalent.

➤ Token usage is up for both models versus Qwen3.5 and significantly higher than for Gemma 4 31B. Qwen3.6 27B used ~144M output tokens to run the Intelligence Index (~1.5x Qwen3.5 27B at 98M, ~3.7x Gemma 4 31B at 39M). Qwen3.6 35B A3B used ~143M (~1.4x Qwen3.5 35B A3B at 100M, ~3.7x Gemma 4 31B).

➤ The 27B got materially more expensive, while the 35B A3B is roughly flat versus its predecessor. Per-token pricing on Alibaba Cloud moved differently for the two: the 27B went from $0.30/$2.40 to $0.60/$3.60, while the 35B A3B (Reasoning) remains nearly flat at $0.248/$1.485 (vs $0.25/$2.00 for Qwen3.5 35B A3B). Qwen3.6 27B costs ~$659 to run the Intelligence Index, ~2.2x Qwen3.5 27B (~$299) and ~21x Gemma 4 31B (~$31 at median third-party pricing of $0.14/$0.40 per 1M input/output tokens). Qwen3.6 35B A3B costs ~$280, roughly tied with Qwen3.5 35B A3B (~$302) and ~9x Gemma 4 31B.

➤ Qwen3.6 27B is competitive with leading models on agentic real-world work tasks despite its size. At 1414 Elo on GDPval-AA, Qwen3.6 27B is ahead of recent open weights peers Qwen3.6 35B A3B (1297), Qwen3.5 27B (1157) and Gemma 4 31B (1115), but trails larger open weights leaders including DeepSeek V4 Pro (Reasoning, Max Effort, 1554) and GLM-5.1 (Reasoning, 1535). It matches DeepSeek V4 Flash (Reasoning, High Effort, 1414), a model with 284B total parameters, and sits roughly in line with GPT-5.4 mini (xhigh, 1436) and Muse Spark (1421).

➤ Non-reasoning variants are on par with Qwen3.5. Qwen3.6 27B (Non-reasoning, 37) is effectively tied with Qwen3.5 27B (Non-reasoning, 37), and Qwen3.6 35B A3B (Non-reasoning, 32) is equivalent to Qwen3.5 35B A3B (Non-reasoning, 31). The Qwen3.6 generation's gains are concentrated in reasoning mode.
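Several headline numbers in the takeaways follow from simple arithmetic on the quoted figures. The sketch below re-derives them using only values stated in the thread; `weight_memory_gb` and `checks` are illustrative names of ours. It counts weight storage only (no KV cache, activations, or quantization overhead such as scales) and treats 1 GB as 10^9 bytes. A nominal 27B parameters gives 54 GB in BF16, slightly under the quoted ~56 GB, most likely because the 27B figure is rounded.

```python
# Sanity check of figures quoted in the takeaways (all inputs copied from the text).

def weight_memory_gb(params: float, bits_per_param: float) -> float:
    """Weights-only storage in GB (1 GB = 1e9 bytes); ignores KV cache and activations."""
    return params * bits_per_param / 8 / 1e9


# Output tokens (millions) and cost (USD) to run the full Intelligence Index.
OUTPUT_TOKENS_M = {"Qwen3.6 27B": 144, "Qwen3.5 27B": 98, "Gemma 4 31B": 39}
INDEX_COST_USD = {"Qwen3.6 27B": 659, "Qwen3.5 27B": 299, "Gemma 4 31B": 31}


def checks() -> dict[str, float]:
    q36, q35, gemma = "Qwen3.6 27B", "Qwen3.5 27B", "Gemma 4 31B"
    return {
        "bf16_weights_gb": weight_memory_gb(27e9, 16),  # 2 bytes per parameter
        "int4_weights_gb": weight_memory_gb(27e9, 4),   # 0.5 bytes per parameter
        "tokens_vs_qwen35": OUTPUT_TOKENS_M[q36] / OUTPUT_TOKENS_M[q35],
        "tokens_vs_gemma": OUTPUT_TOKENS_M[q36] / OUTPUT_TOKENS_M[gemma],
        "cost_vs_qwen35": INDEX_COST_USD[q36] / INDEX_COST_USD[q35],
        "cost_vs_gemma": INDEX_COST_USD[q36] / INDEX_COST_USD[gemma],
    }


if __name__ == "__main__":
    for name, value in checks().items():
        print(f"{name:>18}: {value:.1f}")
```

This prints 54.0, 13.5, 1.5, 3.7, 2.2 and 21.3, in line with the quoted ~1.5x, ~3.7x, ~2.2x and ~21x. The 13.5 GB of 4-bit weights is why a machine with 16GB+ of RAM is the practical floor.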

Other information:

➤ Context window: 262K tokens (equivalent to Qwen3.5)

➤ License: Apache 2.0

➤ Multimodality: Native vision input (text and image), text output

➤ API pricing (Alibaba Cloud): Qwen3.6 27B: $0.60/$3.60, Qwen3.6 35B A3B (Reasoning): $0.248/$1.485

➤ Availability: Available on Alibaba Cloud first-party API. Qwen3.6 35B A3B is available on several third-party APIs such as @DeepInfra, @parasail_io, @clarifai and @novita_labs

@huggingface Supported models:

huggingface.co/models?inferen…

Integration docs here: huggingface.co/docs/inference…

DeepInfra × Hugging Face

DeepInfra is live on @HuggingFace Inference Providers.

Run DeepSeek V4, Kimi-K2.6, GLM-5.1 and 100+ more open models straight from the Hub — same OpenAI-compatible API, same low per-token pricing, no markup.

Just add :deepinfra to the model name.
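A minimal sketch of what the `:deepinfra` suffix looks like in an OpenAI-style chat completion request. It assumes Hugging Face's OpenAI-compatible router endpoint for Inference Providers (`https://router.huggingface.co/v1/chat/completions`) and a Hugging Face access token; check the integration docs linked in this thread before relying on it. The repo id `example-org/example-model` is a hypothetical placeholder, and `with_provider`/`build_request` are illustrative names of ours. The request is only built, not sent.

```python
import json
import os
import urllib.request

# Assumed: Hugging Face's OpenAI-compatible router for Inference Providers.
ROUTER_URL = "https://router.huggingface.co/v1/chat/completions"


def with_provider(model_id: str, provider: str = "deepinfra") -> str:
    """Pin a Hub model id to one provider with the ':provider' suffix."""
    return f"{model_id}:{provider}"


def build_request(model_id: str, prompt: str, token: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": with_provider(model_id),
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        ROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # Hypothetical repo id; look up the exact id of the model you want on the Hub.
    req = build_request("example-org/example-model", "Hello!", os.environ.get("HF_TOKEN", "hf_xxx"))
    print(req.full_url)
    print(json.loads(req.data)["model"])  # example-org/example-model:deepinfra
    # Sending it would be: urllib.request.urlopen(req), then json.load() on the response.
```

Because the endpoint speaks the OpenAI chat format, switching providers is just a different suffix on the same `model` string; the rest of the request body stays unchanged.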

DeepInfra retweeted

Handles everything your agent needs to see and hear → deepinfra.com/nvidia/Nemotro…

DeepInfra is an official launch partner for @nvidia Nemotron™ 3 Nano Omni — live today.

One open multimodal 30B-A3B model.

One pass over image, video, audio, documents, and text.

No multi-model pipelines.

OpenAI-compatible API, usage-based pricing.

$0.20 in / $0.80 out per 1M tokens
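With usage-based pricing, the cost of a request is a weighted sum of input and output tokens. A minimal sketch using the quoted rates; `request_cost_usd` is an illustrative name, and the example token counts are made up. How many tokens an image, audio clip, or video frame consumes is model-specific and not stated here.

```python
# Rates quoted above for Nemotron 3 Nano Omni on DeepInfra (USD per 1M tokens).
INPUT_USD_PER_M = 0.20
OUTPUT_USD_PER_M = 0.80


def request_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Usage-based cost of one request: tokens in and out, each at its own rate."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    return (input_tokens * INPUT_USD_PER_M + output_tokens * OUTPUT_USD_PER_M) / 1_000_000


if __name__ == "__main__":
    # Made-up workload: 50k prompt tokens (e.g. a document plus images), 2k output tokens.
    cost = request_cost_usd(50_000, 2_000)
    print(f"${cost:.4f} per request, ${cost * 1_000:.2f} per 1,000 requests")
```

For this made-up workload the input side dominates (about $0.010 of the ~$0.0116 total), which is typical for multimodal prompts that are much longer than the answers.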

Price drop alert on DeepInfra:

• GLM-5.1 → $1.05 / $3.50 / $0.205

• GLM-5 → $0.60 / $2.08 / $0.12

• MiniMax-M2.5 → $0.15 / $1.15 / $0.03

• Gemma-4-26B-A4B-it → $0.07 / $0.34

(in / out / cached, per 1M)

deepinfra.com/models
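The third price in each row is the cached-input rate. A minimal sketch of how it changes the bill, assuming cached tokens are the part of the prompt served from the prompt cache and billed at the cached rate instead of the normal input rate (the usual prompt-caching scheme; DeepInfra's exact cache-hit rules are not stated here). Where no cached rate is listed, the sketch falls back to the input rate. `Pricing`, `PRICES` and `cost_usd` are illustrative names, and the workload is made up.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Pricing:
    """USD per 1M tokens; cached_per_m=None means no cached-input rate was listed."""
    input_per_m: float
    output_per_m: float
    cached_per_m: Optional[float] = None


# Prices from the list above (in / out / cached, per 1M tokens).
PRICES = {
    "GLM-5.1": Pricing(1.05, 3.50, 0.205),
    "GLM-5": Pricing(0.60, 2.08, 0.12),
    "MiniMax-M2.5": Pricing(0.15, 1.15, 0.03),
    "Gemma-4-26B-A4B-it": Pricing(0.07, 0.34),
}


def cost_usd(model: str, input_tokens: int, output_tokens: int, cached_tokens: int = 0) -> float:
    """Cost of one request; cached_tokens is the cached share of input_tokens (assumption)."""
    p = PRICES[model]
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    # Fall back to the normal input rate when no cached rate is listed.
    cached_rate = p.cached_per_m if p.cached_per_m is not None else p.input_per_m
    fresh_tokens = input_tokens - cached_tokens
    total = (fresh_tokens * p.input_per_m
             + cached_tokens * cached_rate
             + output_tokens * p.output_per_m)
    return total / 1_000_000


if __name__ == "__main__":
    # Made-up agent turn: 100k prompt tokens, 80k of them a cached prefix, 5k output.
    for model in PRICES:
        warm = cost_usd(model, 100_000, 5_000, cached_tokens=80_000)
        cold = cost_usd(model, 100_000, 5_000)
        print(f"{model:<20} cached ${warm:.4f}  uncached ${cold:.4f}")
```

For GLM-5.1 this made-up turn drops from about $0.1225 to $0.0549 when 80% of the prompt hits the cache, which is why long-running agents with a stable system prompt benefit most from the cached rate.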

DeepInfra retweeted

The new @deepseek_ai v4 Flash on @DeepInfra is crushing it.

Fast, consistent, very low-cost service for a fantastic SOTA model with a large 1M-token context that actually feels meaningful.

Unlike other frontier models claiming large contexts but losing the script long before reaching context limits.

And unlike other model service providers that frequently drop to 1-2TPS at arbitrary times.

1/100th the cost of top SOTA closed providers. Intelligence density is most definitely not 1/100th; maybe 10-15% behind. Well worth the trade-off: a 99% price discount for a 10-15% intelligence reduction.
