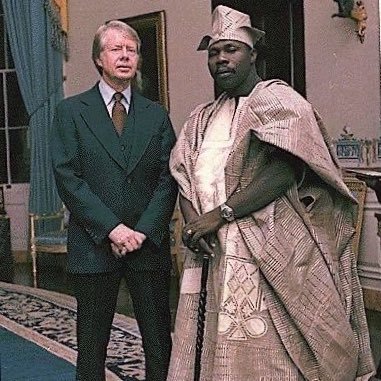

Pinned Tweet

Dev Chopra

719 posts


@DevChopra162002

Founder & CEO, Thalenor | Machine Learning Engineer

Gurugram, Haryana | Joined January 2025

68 Following | 363 Followers

@tunguz “No-code developers” selling automations for $20k will never know.

English

@sanjana_writer One who is beyond worldly attachment and material indulgence

Hindi

In distributed systems, split brain is when a cluster gets partitioned and different nodes think they are the leader or primary at the same time. Since they cannot see each other, they keep accepting writes independently, which can lead to data conflicts or inconsistency once the network issue is resolved.
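A common guard against this (added here as an illustration, not something the post itself prescribes) is a majority quorum: a node keeps accepting writes only while it can reach more than half of the configured cluster. The sketch below assumes a fixed, known cluster size; the class and method names are hypothetical.

```java
/** Majority-quorum check: a node may act as leader only while it sees a strict majority. */
final class QuorumCheck {

    /**
     * @param clusterSize total number of configured nodes
     * @param reachable   nodes this node can currently reach, counting itself
     */
    static boolean hasQuorum(int clusterSize, int reachable) {
        return reachable > clusterSize / 2;
    }

    public static void main(String[] args) {
        // A 5-node cluster partitioned into groups of 3 and 2.
        System.out.println(hasQuorum(5, 3)); // true: the majority side may keep accepting writes
        System.out.println(hasQuorum(5, 2)); // false: the minority side should step down
    }
}
```

Because two disjoint partitions cannot both hold a strict majority, at most one side keeps writing.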

English

LLM vs SLM

LLMs have billions of parameters and are trained to handle many tasks with strong reasoning, but they’re expensive and slower. SLMs have fewer parameters and lower latency, and are often fine-tuned for a narrow use case where you don’t need broad intelligence.

Tokens vs Context Window

Tokens are the basic units the model processes, roughly parts of words. The context window is the maximum number of tokens the model can attend to at one time, including both input and output, which caps how much text it can take into account in a single call.
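Since input and output share one window, the room left for the answer is simply the window minus the prompt. A minimal sketch of that arithmetic, with hypothetical names and an illustrative window size:

```java
/** Token budget arithmetic for a shared input/output context window. */
final class ContextBudget {

    /** Returns how many tokens are left for output once the prompt is counted. */
    static int remainingOutputTokens(int contextWindow, int promptTokens) {
        return Math.max(0, contextWindow - promptTokens);
    }

    public static void main(String[] args) {
        // 8192 is an illustrative window size, not a property of any specific model.
        System.out.println(remainingOutputTokens(8192, 6000)); // 2192
        System.out.println(remainingOutputTokens(8192, 9000)); // 0: the prompt alone overflows
    }
}
```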

Prompt Engineering vs Prompt Chaining

Prompt engineering is optimizing a single prompt to get better outputs. Prompt chaining is designing multiple prompts where the output of one becomes the input of the next, often used to decompose complex tasks.
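Chaining can be sketched as a loop that feeds each output into the next template. The `model` parameter below is a hypothetical stand-in for whatever LLM client is in use, and each template is assumed to contain exactly one `%s` slot.

```java
import java.util.List;
import java.util.function.Function;

/** Prompt chaining: the output of one prompt becomes the input of the next. */
final class PromptChain {

    /**
     * @param model     stand-in for an LLM call (prompt in, completion out)
     * @param templates prompt templates, each with one "%s" slot for the previous output
     * @param input     the initial user input
     */
    static String run(Function<String, String> model, List<String> templates, String input) {
        String current = input;
        for (String template : templates) {
            current = model.apply(String.format(template, current));
        }
        return current;
    }

    public static void main(String[] args) {
        // A fake model that just wraps its prompt, so the data flow is visible.
        Function<String, String> fakeModel = prompt -> "[" + prompt + "]";
        System.out.println(run(fakeModel, List.of("Summarize: %s", "Translate: %s"), "text"));
    }
}
```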

RAG (Retrieval Augmented Generation)

RAG combines retrieval and generation by fetching relevant documents from an external source and injecting them into the prompt. This improves factual accuracy and allows models to use up-to-date or private data.
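The retrieve-then-inject flow can be sketched as follows. To stay self-contained this uses naive keyword overlap as the retriever, which is an assumption for illustration; real systems typically rank by embedding similarity. All names are hypothetical.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

/** Toy RAG: pick the best-matching document and inject it into the prompt. */
final class TinyRag {

    /** Counts distinct lowercase query words that also appear in the document. */
    static int overlap(String query, String doc) {
        Set<String> docWords = new HashSet<>(Arrays.asList(doc.toLowerCase().split("\\W+")));
        int hits = 0;
        for (String word : new HashSet<>(Arrays.asList(query.toLowerCase().split("\\W+")))) {
            if (!word.isEmpty() && docWords.contains(word)) {
                hits++;
            }
        }
        return hits;
    }

    /** Retrieves the highest-overlap document and places it ahead of the question. */
    static String buildPrompt(String question, List<String> docs) {
        String best = "";
        int bestScore = -1;
        for (String doc : docs) {
            int score = overlap(question, doc);
            if (score > bestScore) {
                bestScore = score;
                best = doc;
            }
        }
        return "Context:\n" + best + "\n\nQuestion: " + question;
    }
}
```

The generation step is then an ordinary model call on the returned prompt.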

Embeddings

Embeddings are dense vector representations of text or other data that capture semantic meaning. Similar concepts produce vectors that are close together in vector space.
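"Close together" is usually measured with cosine similarity. A minimal sketch, using tiny hand-made vectors rather than real model embeddings:

```java
/** Cosine similarity between two embedding vectors. */
final class Cosine {

    /** Returns a value in [-1, 1]; the result is NaN if either vector is all zeros. */
    static double similarity(double[] a, double[] b) {
        if (a.length != b.length) {
            throw new IllegalArgumentException("dimension mismatch");
        }
        double dot = 0.0, normA = 0.0, normB = 0.0;
        for (int i = 0; i < a.length; i++) {
            dot += a[i] * b[i];
            normA += a[i] * a[i];
            normB += b[i] * b[i];
        }
        return dot / (Math.sqrt(normA) * Math.sqrt(normB));
    }
}
```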

Vector Databases

Vector databases store embeddings and support fast nearest neighbor search. They’re optimized for similarity queries rather than exact matches.
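The query such a database answers can be written as a brute-force scan. This sketch is only the baseline: production vector databases generally replace the linear scan with approximate indexes to stay fast at scale. Names are hypothetical.

```java
/** Brute-force nearest neighbor search over stored vectors. */
final class BruteForceIndex {

    /** Returns the index of the stored vector closest to the query, or -1 if none are stored. */
    static int nearest(double[][] vectors, double[] query) {
        int bestIndex = -1;
        double bestDistance = Double.POSITIVE_INFINITY;
        for (int i = 0; i < vectors.length; i++) {
            double distance = 0.0;
            for (int d = 0; d < query.length; d++) {
                double diff = vectors[i][d] - query[d];
                distance += diff * diff; // squared Euclidean distance preserves the ordering
            }
            if (distance < bestDistance) {
                bestDistance = distance;
                bestIndex = i;
            }
        }
        return bestIndex;
    }
}
```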

Agents vs Workflows

Agents use reasoning to decide which tools or steps to run dynamically. Workflows are deterministic pipelines with predefined steps and control flow.

English

@JohnnySacks @SumitM_X Yes that’s a fair clarification. Thanks.

English

@DevChopra162002 @SumitM_X Your main answer is correct.

But small quibble. The subquery returns a row for each unique salary value. The value of the column of each row is 0.

English

If writes are slowing down reads, I would keep them from stepping on each other.

I would move heavy write work off the main request path, usually by pushing it to a queue and processing it in the background. I would also split reads and writes so reads hit a replica or a read-optimized store, not the same place handling writes. Caching helps a lot too for frequently read data.

On the database side, I would check for long transactions or locks, add the right indexes and batch writes where possible. That alone often fixes most of the slowdown.
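The queue-plus-batching idea can be sketched with an in-process buffer. In a real deployment the queue would more likely be an external broker and the batch would go to the database; both are left out here, and the names are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;

/** Moves writes off the request path: requests enqueue, a background worker writes in batches. */
final class WriteBuffer {
    private final BlockingQueue<String> pending = new LinkedBlockingQueue<>();

    /** Called on the request path: enqueue the record and return immediately. */
    void submit(String record) {
        pending.add(record);
    }

    /** Called by a background worker: take up to maxBatch records for one batched write. */
    List<String> nextBatch(int maxBatch) {
        List<String> batch = new ArrayList<>();
        pending.drainTo(batch, maxBatch);
        return batch;
    }
}
```

A worker thread would call `nextBatch` in a loop and issue one multi-row write per batch instead of one write per request.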

English

2025 was a lot, honestly.

Months of prep for an Applied Scientist interview at Amazon came down to one hour that did not go my way. That stung, especially coming from a tier 3 college where opportunities like that feel rare. But I did not stop.

Freelancing turned serious. I built @thalenor_on_x, delivered projects across three continents and earned freedom and independence on my own terms. I graduated this year too and topped my department. Missed convocation because the administration of my beloved college deemed it appropriate to inform me a day prior to the event.

Looking back, I am clear. I am not chasing big names anymore. I am building something bigger for myself.

And honestly, 2026 is going to rock 🍻

English

@Google I need your help urgently.

Create a dedicated support account so my feed doesn’t get flooded with you solving gmail problems of every user on the planet.

English

Transient vs static vs final in Serialization

Transient fields are skipped during serialization, so their values are not saved.

Static fields belong to the class, not the object, so they are not serialized.

Final fields do get serialized because they are part of the object state, as long as they are not static or transient.
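A small round trip makes the three cases visible. The `User` class and its fields are hypothetical, chosen only to show one field of each kind.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.Serializable;

final class SerializationDemo {

    static class User implements Serializable {
        private static final long serialVersionUID = 1L;
        static int loginCount = 0;   // static: belongs to the class, never written
        final String name;           // final instance field: written like any other field
        transient String password;   // transient: skipped, comes back as null

        User(String name, String password) {
            this.name = name;
            this.password = password;
        }
    }

    /** Serializes the user to an in-memory buffer and reads it back. */
    static User roundTrip(User user) {
        try {
            ByteArrayOutputStream buffer = new ByteArrayOutputStream();
            try (ObjectOutputStream out = new ObjectOutputStream(buffer)) {
                out.writeObject(user);
            }
            try (ObjectInputStream in =
                     new ObjectInputStream(new ByteArrayInputStream(buffer.toByteArray()))) {
                return (User) in.readObject();
            }
        } catch (IOException | ClassNotFoundException e) {
            throw new IllegalStateException("round trip failed", e);
        }
    }

    public static void main(String[] args) {
        User copy = roundTrip(new User("dev", "secret"));
        System.out.println(copy.name);      // dev
        System.out.println(copy.password);  // null
    }
}
```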

Externalization vs Serialization

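Default serialization is automatic. Once a class implements Serializable, Java writes every non-static, non-transient field for you.

Externalization gives you full control. The class implements Externalizable, and you decide what to write and read in writeExternal and readExternal, but you have to handle everything yourself, including providing a public no-arg constructor.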
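A minimal Externalizable sketch, assuming a hypothetical `Point` class. Every field is written and read by hand, in the same order.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.Externalizable;
import java.io.IOException;
import java.io.ObjectInput;
import java.io.ObjectInputStream;
import java.io.ObjectOutput;
import java.io.ObjectOutputStream;

final class ExternalizableDemo {

    public static class Point implements Externalizable {
        int x;
        int y;

        public Point() { }  // deserialization calls this public no-arg constructor first

        Point(int x, int y) {
            this.x = x;
            this.y = y;
        }

        @Override
        public void writeExternal(ObjectOutput out) throws IOException {
            out.writeInt(x);
            out.writeInt(y);
        }

        @Override
        public void readExternal(ObjectInput in) throws IOException {
            x = in.readInt();  // must mirror the order used in writeExternal
            y = in.readInt();
        }
    }

    /** Writes the point to an in-memory buffer and reads it back. */
    static Point roundTrip(Point point) {
        try {
            ByteArrayOutputStream buffer = new ByteArrayOutputStream();
            try (ObjectOutputStream out = new ObjectOutputStream(buffer)) {
                out.writeObject(point);
            }
            try (ObjectInputStream in =
                     new ObjectInputStream(new ByteArrayInputStream(buffer.toByteArray()))) {
                return (Point) in.readObject();
            }
        } catch (IOException | ClassNotFoundException e) {
            throw new IllegalStateException("round trip failed", e);
        }
    }
}
```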

serialVersionUID

It is a version number for a Serializable class, declared as a static final long field. It helps Java check whether a loaded class matches the serialized object. If it does not match, you get InvalidClassException.

Customized Serialization

It is when you control serialization logic by writing private writeObject and readObject methods in the class. Useful when you want to skip fields, encrypt data or handle backward compatibility.
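A sketch of the hooks, assuming a hypothetical `Account` class. Reversing the string is a placeholder for a real transformation such as encryption and offers no protection whatsoever.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.Serializable;

final class CustomSerializationDemo {

    static class Account implements Serializable {
        private static final long serialVersionUID = 1L;
        String owner;
        transient String token;  // excluded from default serialization, handled manually below

        Account(String owner, String token) {
            this.owner = owner;
            this.token = token;
        }

        private void writeObject(ObjectOutputStream out) throws IOException {
            out.defaultWriteObject();  // writes owner
            // Placeholder transformation only; a real system would encrypt here.
            out.writeObject(new StringBuilder(token).reverse().toString());
        }

        private void readObject(ObjectInputStream in) throws IOException, ClassNotFoundException {
            in.defaultReadObject();    // restores owner
            token = new StringBuilder((String) in.readObject()).reverse().toString();
        }
    }

    /** Writes the account to an in-memory buffer and reads it back. */
    static Account roundTrip(Account account) {
        try {
            ByteArrayOutputStream buffer = new ByteArrayOutputStream();
            try (ObjectOutputStream out = new ObjectOutputStream(buffer)) {
                out.writeObject(account);
            }
            try (ObjectInputStream in =
                     new ObjectInputStream(new ByteArrayInputStream(buffer.toByteArray()))) {
                return (Account) in.readObject();
            }
        } catch (IOException | ClassNotFoundException e) {
            throw new IllegalStateException("round trip failed", e);
        }
    }
}
```

Unlike a plain transient field, `token` survives the round trip here because the custom hooks write and restore it explicitly.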

English

• serialVersionUID

It’s just a version number for a Serializable class so Java can tell if an object can be safely deserialized or not.

• Type Erasure

Generics are checked at compile time only. The compiler erases type parameters to their bounds (usually Object), so at runtime a List<String> and a List<Integer> are the same class.

• CompletableFuture

A way to run async tasks and chain results without blocking, using a clean and readable style.

• BiFunction

A functional interface that takes two inputs and returns one result.

• transient

Marks a field so it doesn’t get serialized when the object is saved.

• PhantomReference

A reference that gets enqueued once the garbage collector has determined its referent can be reclaimed, mainly used for cleanup work. Its get() method always returns null.

• ForkJoinPool

A thread pool designed for tasks that can be split into smaller pieces and run in parallel.

• Shenandoah GC

A low-pause garbage collector that does most of its work while the application is still running.

• Flight Recorder

A built-in profiling tool that records JVM performance data with very low overhead.

• Virtual Threads

Lightweight threads that let you write simple blocking code while still scaling like async.

• Foreign function

Lets Java call native code written in languages like C through the Foreign Function & Memory API, without writing JNI glue code.

• Semaphore

A concurrency tool that limits how many threads can access a resource at the same time.

• CountDownLatch

Lets one or more threads wait until a set of operations in other threads finishes.

• CDS (Class Data Sharing)

Speeds up startup and reduces memory by sharing loaded class metadata between JVMs.

• SoftReference

A reference whose referent is kept until the JVM is under memory pressure, often used for caches. All soft references are cleared before an OutOfMemoryError is thrown.
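A few of the terms above are easier to see in code. This sketch covers type erasure, CompletableFuture with a BiFunction, and Semaphore with CountDownLatch; the class and method names are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.Semaphore;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.BiFunction;

final class JavaTermsDemo {

    /** Type erasure: both lists share one runtime class, so this returns true. */
    static boolean sameRuntimeClass() {
        List<String> strings = new ArrayList<>();
        List<Integer> numbers = new ArrayList<>();
        return strings.getClass() == numbers.getClass();
    }

    /** CompletableFuture + BiFunction: combine two async results, blocking only at join(). */
    static int combineAsync(int a, int b) {
        BiFunction<Integer, Integer, Integer> add = Integer::sum;
        CompletableFuture<Integer> left = CompletableFuture.supplyAsync(() -> a);
        CompletableFuture<Integer> right = CompletableFuture.supplyAsync(() -> b);
        return left.thenCombine(right, add).join();
    }

    /** Semaphore + CountDownLatch: at most `permits` workers run at once; the caller waits for all. */
    static int runWorkers(int workers, int permits) {
        Semaphore gate = new Semaphore(permits);
        CountDownLatch done = new CountDownLatch(workers);
        AtomicInteger completed = new AtomicInteger();
        for (int i = 0; i < workers; i++) {
            new Thread(() -> {
                try {
                    gate.acquire();
                    try {
                        completed.incrementAndGet();  // the guarded work
                    } finally {
                        gate.release();
                    }
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                } finally {
                    done.countDown();
                }
            }).start();
        }
        try {
            done.await();  // blocks until every worker has counted down
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return completed.get();
    }
}
```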

English

How many of these Java terms do you know? 👇

Score yourself out of 15:

- serialVersionUID

- Type Erasure

- CompletableFuture

- BiFunction

- transient

- PhantomReference

- ForkJoinPool

- Shenandoah GC

- Flight Recorder

- Virtual Threads

- Foreign function

- Semaphore

- CountDownLatch

- CDS (Class Data Sharing)

- SoftReference

Comment your score 👇

English

Dev Chopra retweeted

A common source of failure in ML systems is optimizing the wrong objective.

Many models are trained to minimize a loss function that does not reflect the metric that actually matters.

Training proceeds smoothly. Loss decreases. Validation curves look healthy.

But real-world performance does not improve.

Loss functions are proxies. They are mathematical conveniences, not goals. When the proxy diverges from the true objective, optimization pushes the model in the wrong direction.

This creates models that are technically well-trained and practically ineffective.

The problem is especially visible when business or user-facing metrics are non-differentiable, asymmetric, or threshold-based.

Examples include:

• Optimizing log loss when precision at top-K matters

• Minimizing MSE when tail errors are critical

• Training for accuracy on highly imbalanced data

• Using symmetric loss for problems with asymmetric costs

• Ignoring ranking metrics in retrieval systems
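The imbalanced-data item is easy to make concrete. In this sketch (toy labels, hypothetical names) a model that always predicts the majority class scores high accuracy while finding none of the positives.

```java
/** Accuracy versus recall on imbalanced binary labels (1 = positive, 0 = negative). */
final class ImbalancedMetrics {

    /** Fraction of predictions that match the true label. */
    static double accuracy(int[] truth, int[] predicted) {
        int correct = 0;
        for (int i = 0; i < truth.length; i++) {
            if (truth[i] == predicted[i]) {
                correct++;
            }
        }
        return (double) correct / truth.length;
    }

    /** Fraction of true positives that were predicted positive; NaN if there are no positives. */
    static double recall(int[] truth, int[] predicted) {
        int positives = 0;
        int found = 0;
        for (int i = 0; i < truth.length; i++) {
            if (truth[i] == 1) {
                positives++;
                if (predicted[i] == 1) {
                    found++;
                }
            }
        }
        return positives == 0 ? Double.NaN : (double) found / positives;
    }

    public static void main(String[] args) {
        int[] truth = {0, 0, 0, 0, 0, 0, 0, 0, 0, 1};
        int[] alwaysNegative = new int[truth.length];  // predicts 0 for everything
        System.out.println(accuracy(truth, alwaysNegative)); // 0.9
        System.out.println(recall(truth, alwaysNegative));   // 0.0
    }
}
```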

The model learns exactly what it is asked to learn.

It just is not what you needed.

The effect is often confusing. Engineers see stable training and assume the model is improving. Stakeholders see unchanged outcomes and lose trust.

The issue is not the algorithm.

It is the alignment.

The correct approach is:

1. Define the real-world objective first

2. Choose or design a loss that approximates it

3. Monitor metrics that reflect actual impact

In some cases, this requires custom losses, reweighting, or post-training calibration.
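One example of a custom loss for asymmetric costs is the pinball (quantile) loss, chosen here purely as an illustration since the post does not name a specific loss. With `tau` above 0.5, under-prediction costs more than over-prediction.

```java
/** Pinball (quantile) loss: penalizes under- and over-prediction at different rates. */
final class AsymmetricLoss {

    /**
     * @param actual    the true value
     * @param predicted the model's prediction
     * @param tau       quantile in (0, 1); higher values punish under-prediction more
     */
    static double pinball(double actual, double predicted, double tau) {
        double diff = actual - predicted;
        return diff >= 0 ? tau * diff : (tau - 1.0) * diff;
    }

    public static void main(String[] args) {
        // Same absolute error of 2, very different penalties at tau = 0.9.
        System.out.println(pinball(10.0, 8.0, 0.9));   // under-prediction, about 1.8
        System.out.println(pinball(10.0, 12.0, 0.9));  // over-prediction, about 0.2
    }
}
```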

This is not an edge case. It is one of the most common reasons “good” models fail in production.

Better optimization does not fix a misaligned objective.

It only makes the mistake more efficient.

Good ML is not about minimizing loss.

It is about minimizing regret.

If the model succeeds mathematically and fails operationally, it was trained correctly for the wrong problem.

English

I would make sure all services agree on how they talk to each other so the language does not really matter.

For direct calls, I would usually use gRPC with Protobuf because it gives a clear contract and works well across Java, Python, Node and Go. If the setup needs to stay simple, I would go with REST and JSON, but I would still define the API clearly and version it properly.

For async communication I would use a message broker like Kafka or RabbitMQ and treat events like contracts. I would define a shared schema and be careful with changes so existing services do not break.

I would also keep external data models separate from internal code, add good logging and tracing, and rely on contract tests to catch issues early.

English

In a polyglot microservices architecture, where services are written in different programming languages (e.g., Java, Python, Node.js, Go), communication between these services becomes more complex due to differences in serialization formats, protocols, and data structures.

How would you handle communication between services written in different programming languages in a polyglot microservices system?

English

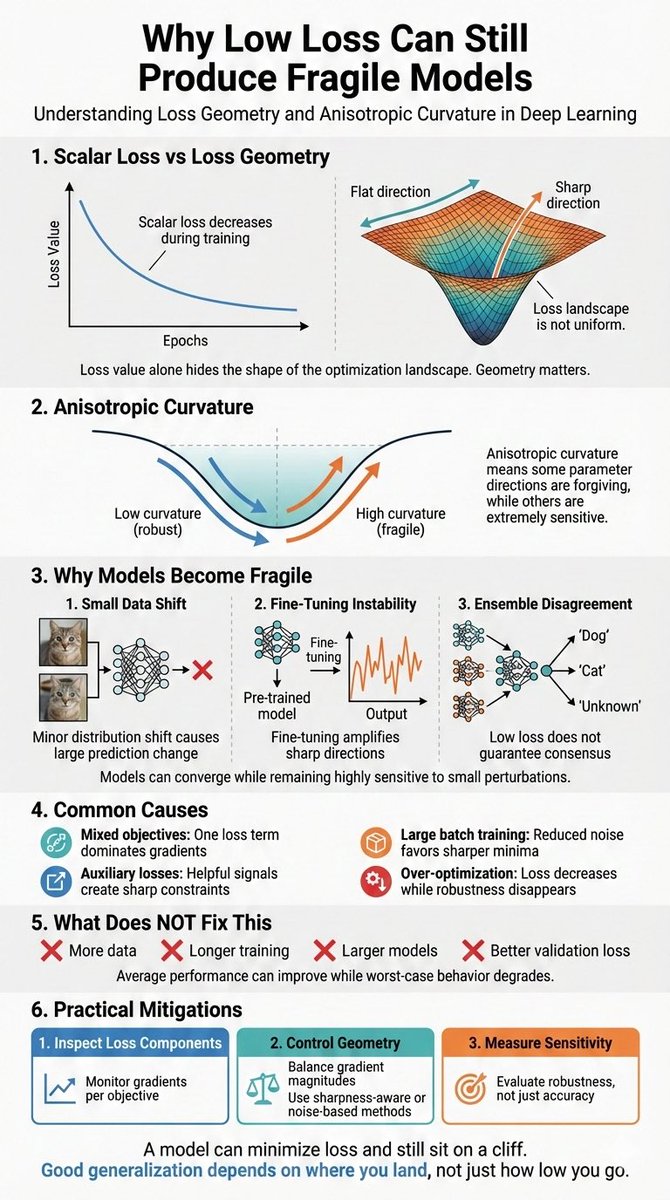

One of the most misunderstood concepts in deep learning is loss geometry.

Loss is treated as a scalar.

Gradients are treated as guidance.

Optimization is treated as progress.

But the shape of the loss matters more than the value.

Most training setups ignore this.

Loss functions are chosen for convenience.

Terms are summed without inspecting interactions.

Regularization is added as a penalty, not a constraint.

Convergence is declared when the curve looks smooth.

But smooth curves can hide sharp directions.

This produces a distinctive failure mode:

• Models converge but are hypersensitive to small shifts

• Minor distribution changes cause large prediction swings

• Ensembles disagree more than expected

• Calibration degrades without accuracy loss

• Fine-tuning becomes unstable or brittle

The model is not overfit.

It is geometrically fragile.

This shows up most often in:

• Large-scale multitask models

• Contrastive and metric learning setups

• RL fine-tuning of foundation models

• Systems with mixed objectives and auxiliary losses

The root cause is anisotropic curvature.

Some directions in parameter space are flat.

Others are razor-sharp.

SGD optimizes the average, not the extremes.

Metrics look good.

Margins disappear.

This is not fixed by:

• More data

• Larger batches

• Longer training

Those often worsen the imbalance.

The correct approach is:

1. Inspect loss terms independently, not just in aggregate

2. Control gradient dominance across objectives

3. Prefer flatter minima over lower minima

In practice, this may require:

• Gradient norm balancing or clipping per loss term

• Sharpness-aware training or noise injection

• Monitoring sensitivity, not just validation loss
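Per-term clipping, the first item in the list above, can be sketched on plain arrays. This assumes each loss term's gradient is already available as a vector of the same length; in a real framework that requires separate backward passes per term. Names are hypothetical.

```java
/** Clips each loss term's gradient separately before summing, so no single term dominates. */
final class PerTermClipping {

    static double l2Norm(double[] gradient) {
        double sum = 0.0;
        for (double value : gradient) {
            sum += value * value;
        }
        return Math.sqrt(sum);
    }

    /** Returns a copy of the gradient rescaled so its L2 norm is at most maxNorm. */
    static double[] clip(double[] gradient, double maxNorm) {
        double norm = l2Norm(gradient);
        double scale = norm > maxNorm ? maxNorm / norm : 1.0;
        double[] clipped = new double[gradient.length];
        for (int i = 0; i < gradient.length; i++) {
            clipped[i] = gradient[i] * scale;
        }
        return clipped;
    }

    /** Sums the individually clipped gradients; expects at least one term, all of equal length. */
    static double[] combine(double[][] termGradients, double maxNorm) {
        double[] total = new double[termGradients[0].length];
        for (double[] gradient : termGradients) {
            double[] clipped = clip(gradient, maxNorm);
            for (int i = 0; i < total.length; i++) {
                total[i] += clipped[i];
            }
        }
        return total;
    }
}
```

With gradients of norm 50 and 0.5 and a cap of 1, the large term is scaled down to norm 1 while the small one passes through unchanged, so the update is no longer decided almost entirely by the first term.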

This is not an optimization trick.

It is a generalization issue.

A model trained to minimize loss can still sit on a cliff.

And no amount of regularization saves a solution chosen in the wrong geometry.

English

Start with a simple rule: if the model can answer reliably from the prompt or recent context, don’t use a tool. Tools are for things the model cannot know, like live data, private systems, heavy computation or actions in the real world.

I ask one question before adding a tool call: what breaks if the model guesses here? If the answer is not much, keep it model-powered. If the answer is accuracy, money or side effects, then it needs a tool.

I also bias toward fewer tools early and add them only when I see clear failure cases.
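The rule can be written down as a tiny routing function. This is one reading of the heuristic, with hypothetical names; how "answerable from context" gets determined is left open.

```java
/** Decides whether a step should call a tool or stay model-powered. */
final class ToolRouter {

    /** What breaks if the model guesses wrong. */
    enum Risk { NOT_MUCH, ACCURACY, MONEY, SIDE_EFFECTS }

    /**
     * @param answerableFromContext true if the model can answer reliably from the prompt or context
     * @param riskIfWrong           the cost of a wrong guess
     */
    static boolean needsTool(boolean answerableFromContext, Risk riskIfWrong) {
        if (answerableFromContext) {
            return false;  // the model already has what it needs
        }
        return riskIfWrong != Risk.NOT_MUCH;  // a guess is acceptable only when little is at stake
    }
}
```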

English

Quick question:

Your AI agent has 15 tools.

In theory, it’s powerful.

In practice, it calls tools for everything and spends 80% of time waiting on APIs for things the model could answer from context.

You didn’t build an agent.

You built an over-engineered orchestrator for latency.

How would you decide what should be tool-powered vs model-powered?

English