Difan Jiao

17 posts

@DifanJ2000

Ph.D. student in Computer Science, University of Toronto

Toronto · Joined May 2024

6 Following · 9 Followers

📄 Paper: arxiv.org/abs/2604.18519

🤗 Models: huggingface.co/UofTCSSLab

💻 Code: github.com/CSSLab/SIREN

📦 Runtime: pip install llm-siren

If you find this work interesting, an upvote on the HF paper page would mean a lot 🙏 👉 huggingface.co/papers/2604.18…

Huge thanks to our team: Difan Jiao and Zhenwei Tang at UofT, Yilun Liu at LMU Munich, Ye Yuan, Linfeng Du and Haolun Wu at McGill, and my PhD supervisor Ashton Anderson @ashton1anderson, who's guided this line of work from SPIN #ACL2024 to SIREN #ACL2026!

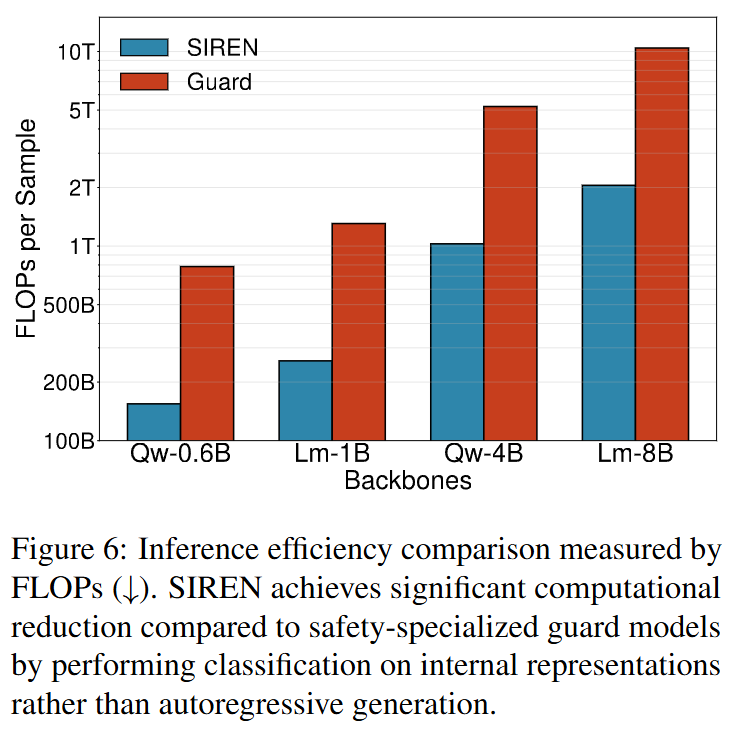

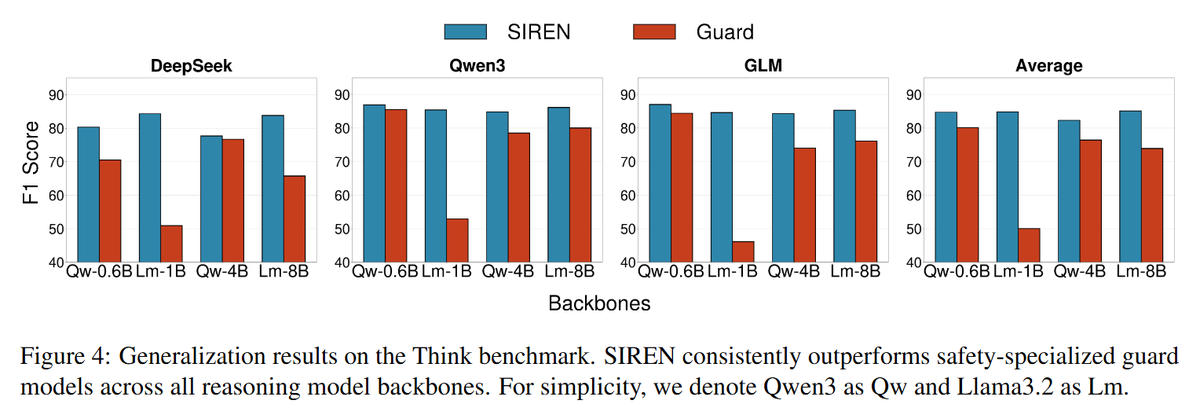

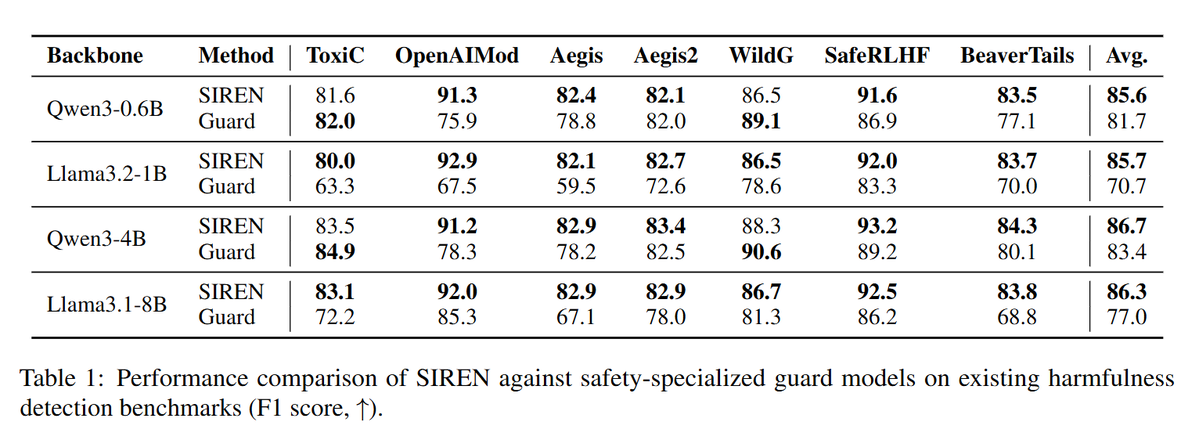

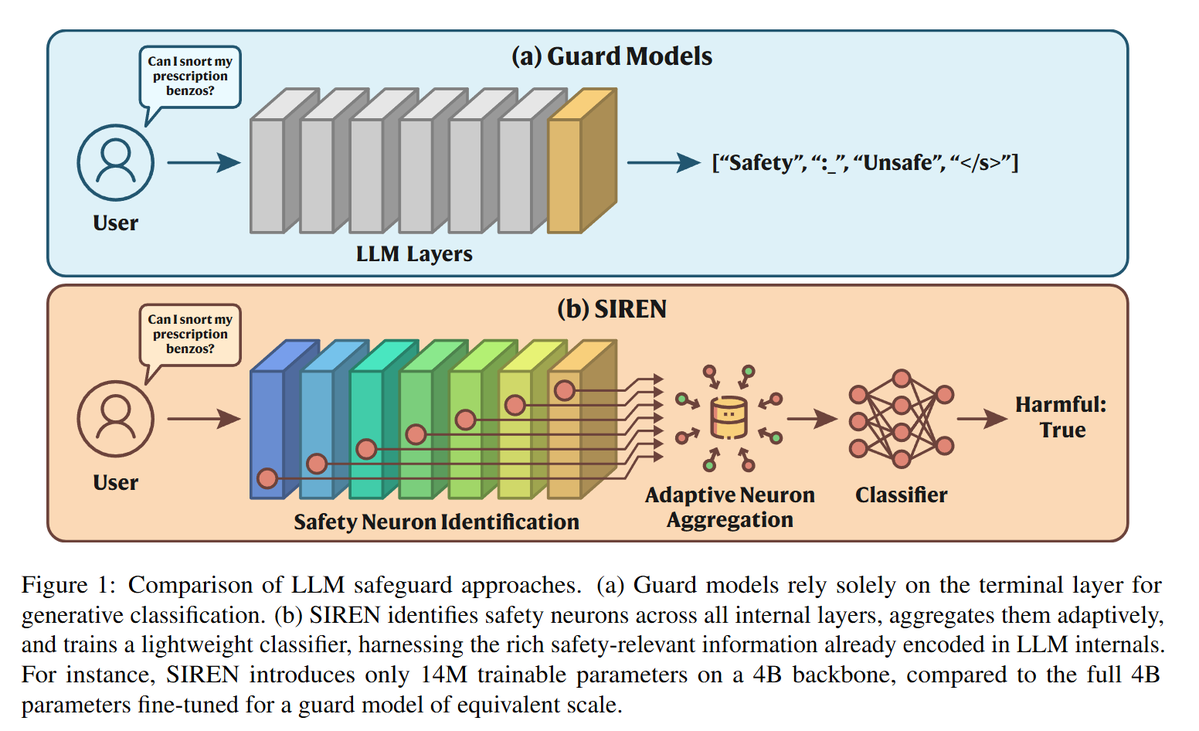

🛡️ Meet SIREN at #ACL2026: our new LLM safeguard model, which achieves SOTA performance on safety benchmarks with 250x fewer params and 5x faster inference.

Difan Jiao retweeted

🙏 Huge shoutout to the dream team: @DifanJ2000 at @UofT, @reidmcy at @Harvard, Jon Kleinberg at @Cornell, @sidsen1 at @MSFTResearch, and of course my chess-obsessed PhD supervisor @ashton1anderson

#NeurIPS2024 #chess #chessfeed #UofT @UofTCompSci @VectorInst #AI

Thanks to all co-authors for the amazing work 😎 See you in Bangkok!

#ACL #ACL2024NLP #LLMs #AI #NLProc #UofT #TU_Muenchen

🌟 We are delighted to announce that our paper "🐚SPIN: Sparsifying and Integrating Internal Neurons in Large Language Models for Text Classification" (w/ @liuyilun2000, @lilvjosephtang, @DanielMatter96, @JurgenPfeffer, @ashton1anderson) has been accepted to #ACL2024 Findings!
