AJ...

1.7K posts

@DrSpokk

Made in Detroit but ATL active. Fan of tech and advocate for Black entrepreneurs & African investments. Investor & recovering engineer. #Xoogler #DealTeamSIXX

Microsoft says Chinese AI groups are outpacing United States firms for users outside the West. DeepSeek seeing rapid adoption in markets such as Africa. China combines low-cost “open” models with hefty state subsidies to gain an edge. Subsidies can cover GPUs, data centres, and power, so the price per chat falls. Microsoft research using product usage data estimates Chinese share at 18% in Ethiopia and 17% in Zimbabwe.

BIG NEWS: Meta’s chief AI scientist Yann LeCun is reportedly preparing to leave to start a new company.

LeCun has long argued that current large language models (LLMs) are “useful” tools but not the path to human-like reasoning, and his planned startup will focus on “world models” that learn from video and spatial signals to plan and act, which is a very different bet from scaling text-only systems.

Inside Meta, power shifted from the long-horizon FAIR research group that LeCun created in 2013 to new product-aimed units, including a handpicked team building the next Llama models with aggressive hiring packages, and LeCun is now reporting into Wang rather than the previous product chain.

So overall, Meta is doubling down on near-term LLM products under new leadership, while LeCun is stepping out to prove that video-grounded world models can close the reasoning gap that scaling text models has not.

--- ft .com/content/c586eb77-a16e-4363-ab0b-e877898b70de

This is going to revolutionize education 📚

Google just launched "Learn Your Way" that basically takes whatever boring chapter you're supposed to read and rebuilds it around stuff you actually give a damn about.

Like if you're into basketball and have to learn Newton's laws, suddenly all the examples are about dribbling and shooting. Art kid studying economics? Now it's all gallery auctions and art markets.

Here's what got me though. They didn't just find-and-replace examples like most "personalized" learning crap does. The AI actually generates different ways to consume the same information:

- Mind maps if you think visually
- Audio lessons with these weird simulated teacher conversations
- Timelines you can click around
- Quizzes that change based on what you're screwing up

They tested this on 60 high schoolers. Random assignment, proper study design. Kids using their system absolutely destroyed the regular textbook group on both immediate testing and when they came back three days later. Every single one said it made them more confident.

The part that surprised me? They actually solved the accuracy problem. Most ed-tech either dumbs everything down to nothing or gets basic facts wrong. These guys had real pedagogical experts evaluate every piece on like eight different measures.

Look, textbooks have sucked for centuries not because publishers are idiots, but because making personalized versions was basically impossible at scale. That just changed.

This isn't some K-12 thing either. Corporate training could work this way. Technical documentation. Professional development. Imagine if every boring compliance course used examples from your actual job instead of generic office scenarios.

We might have just watched the industrial education model crack for the first time. About damn time.

Yann LeCun on architectures that could lead to AGI

LLMs can take us only so far.

"If you are interested in human-level AI, don’t work on LLMs.

- Abandon generative models in favor of joint-embedding architectures
- Abandon probabilistic model in favor of energy-based models
- Abandon contrastive methods in favor of regularized methods
- Abandon Reinforcement Learning in favor of model-predictive control
- Use RL only when planning doesn’t yield the predicted outcome, to adjust the world model or the critic."

--- From "IP Paris" YT channel (link in comment)

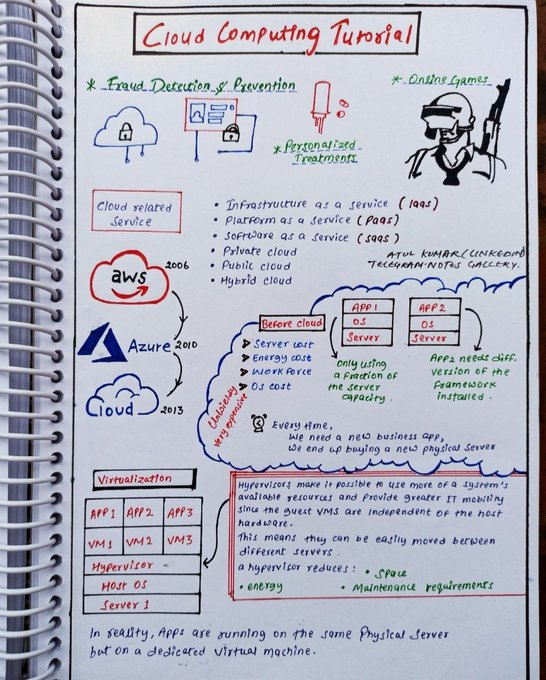

Love @McKinsey reports. By 2030, data centers will need to spend a whopping $6.7 trillion on computing to keep up with demand. AI demand alone will require $5.2 trillion in investment. Of that $5.2 trillion, the largest share, 60% ($3.1 trillion), will go to technology developers and designers, which produce chips and computing hardware for data centers. Approximately 15% ($0.8 trillion) will flow to builders for land, materials, and site development. Another 25% ($1.3 trillion) will be allocated to energizers for power generation and transmission, cooling, and electrical equipment. Companies across the compute power value chain that proactively secure critical resources (land, materials, energy capacity, and computing power) could gain a significant competitive edge.
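
Quick sanity check on the split, for anyone who wants to rerun the numbers. This is only a sketch of the arithmetic: the $5.2 trillion total and the 60/15/25 percentages come from the post, while the names `AI_TOTAL`, `SHARES`, and `split_investment` are made up for illustration.

```python
# Figures quoted in the post, in trillions of USD.
AI_TOTAL = 5.2
SHARES = {
    "technology developers and designers": 0.60,
    "builders": 0.15,
    "energizers": 0.25,
}


def split_investment(total, shares):
    """Return the dollar amount for each share of the total (hypothetical helper)."""
    # Guard against percentages that do not add up to 100%.
    if abs(sum(shares.values()) - 1.0) > 1e-9:
        raise ValueError("shares must sum to 100%")
    return {name: total * share for name, share in shares.items()}


breakdown = split_investment(AI_TOTAL, SHARES)
for name, amount in breakdown.items():
    print(f"{name}: ${amount:.2f} trillion")
```

The unrounded results are 3.12, 0.78, and 1.30 trillion, which match the rounded $3.1, $0.8, and $1.3 trillion figures in the post.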