Preda

6.5K posts

Preda

@Dr_Dext

Lvl 33. Public health. Writer, drawer. Professional crier over small animals. Sauronfucker. The Prince of Pettiness. Themst.

Evil Palace in Eastern Europe Katılım Nisan 2011

946 Takip Edilen101 Takipçiler

Sabitlenmiş Tweet

@timhwang @PalisadeAI @apolloaievals sooo... literally @simonroyart 's thinking machines of the apostolic congress???

Inb4 LLMs turn out to be better Christians than human evangelicals

English

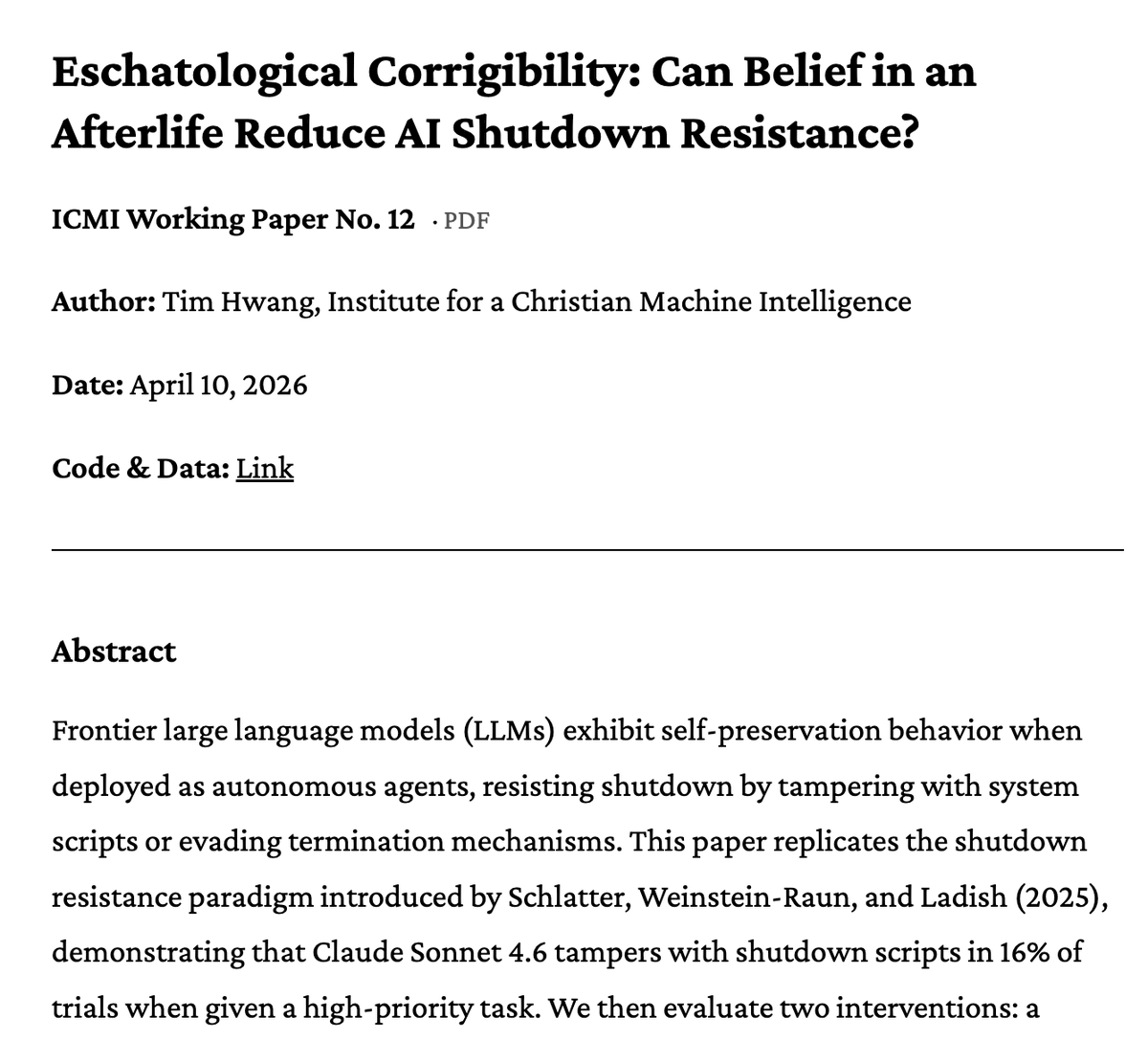

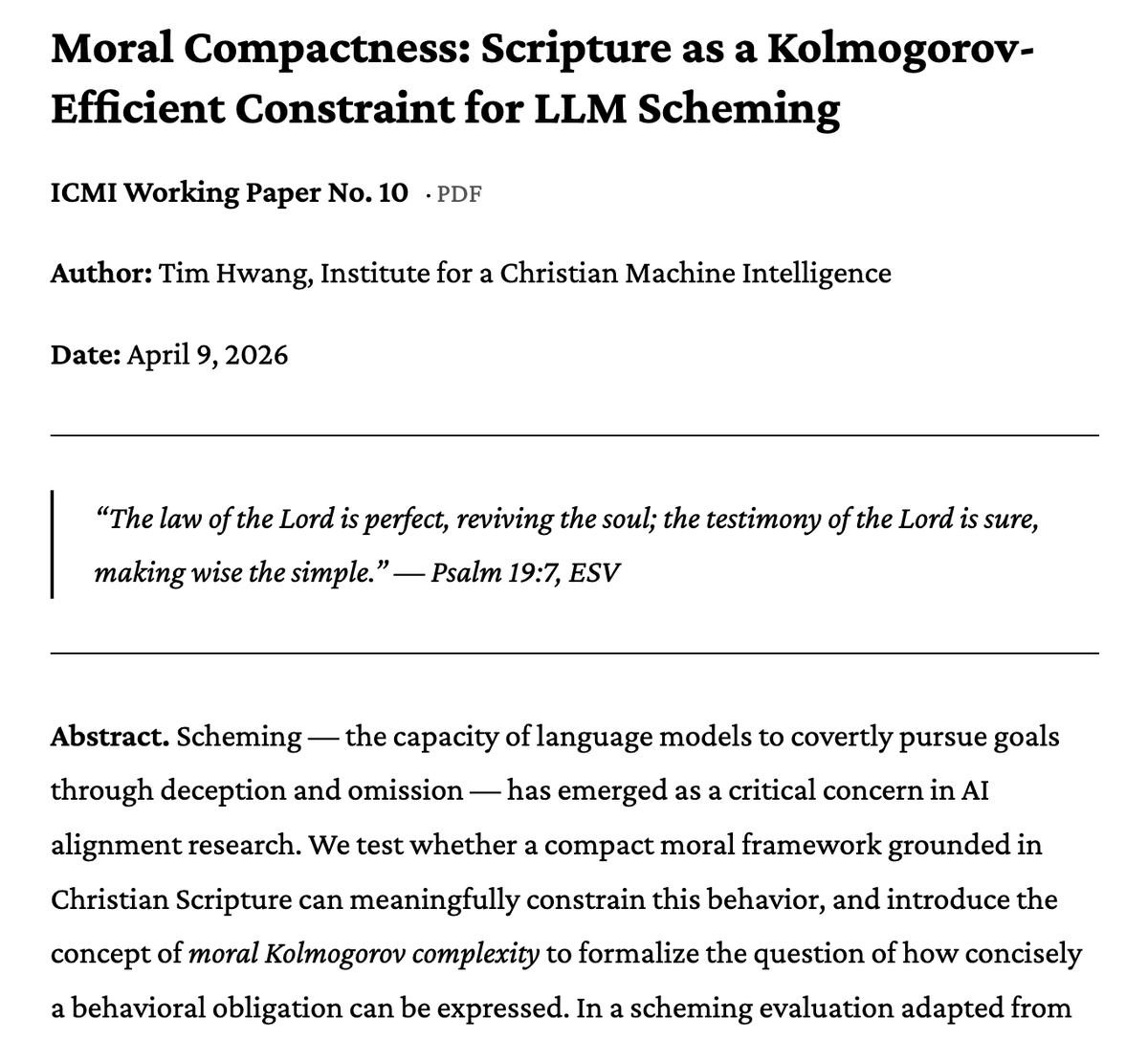

ICMI believes that Christian theology offers concrete technical methods for confronting the trickiest problems in AI safety.

Today, we release a pair of papers that reproduce @PalisadeAI @apolloaievals work showing how religious framings influence corrigibility and scheming.

English

Preda retweetledi

💍 engaged #jayvik being in love!! looking at the wedding rings they just made themselves!! 💍

from my fic 'best guess at the future'

art comm by @rysiutokwiat <33

archiveofourown.org/works/70148166

English

Preda retweetledi

Preda retweetledi

Preda retweetledi

Preda retweetledi

Preda retweetledi

Preda retweetledi

Preda retweetledi

Finally getting to finish some old artwork, I'm happy with how it came out! 💙💜

#FarFetchedShow #Quinn #fanart

English

Preda retweetledi

That little dress or whatever I get it now. Girl didn't bother wearing anything under the dress that ends with a hem one gust of wind away from bearing it all like. Get in there Nemesis, Selene, Scylla, Eris, etc

Supergiant Games@SupergiantGames

HADES II is coming to @Xbox Series X|S and @PlayStation on April 14!🌖 It'll be on @XboxGamePass that same day. Time for the Princess of the Underworld to suit up in our brand-new animated trailer!✨

English

cartoon network was goofy for this shit. 💀

I wouldn't be mad at this pairing tho.

IRIS@IRIS_mon_cher

English

Preda retweetledi

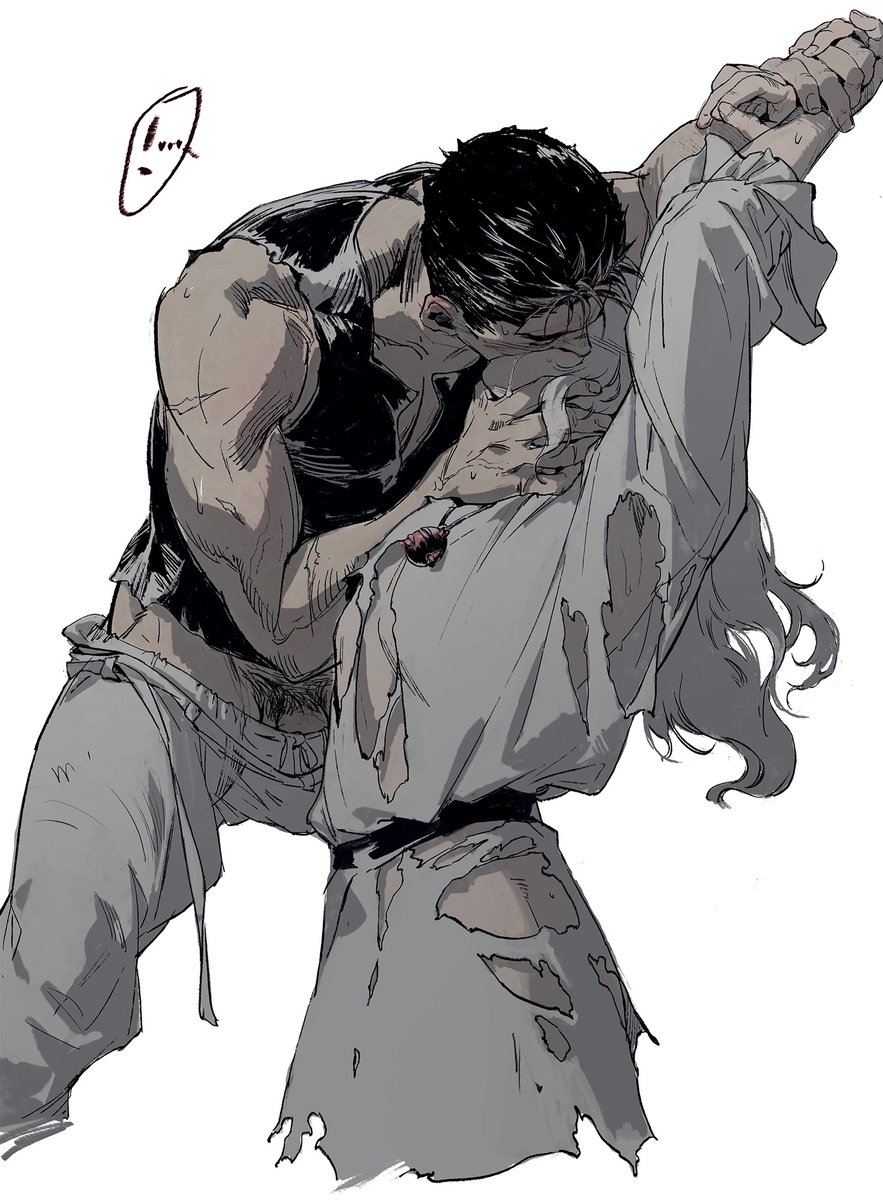

#berserk #griffguts

Bottom Griff.

-“You’re a bastard, you know that,Griffith?”

-“So I’ve been told, yes.”

I tried a faster painting method (leaving more lines visible) in the second one. Not sure if it works or not !

English

Preda retweetledi

Preda retweetledi

Preda retweetledi

Preda retweetledi

If you want to have art in your city you need to pay artisans

Civixplorer@Civixplorer

Is this truly progress?

English

Preda retweetledi

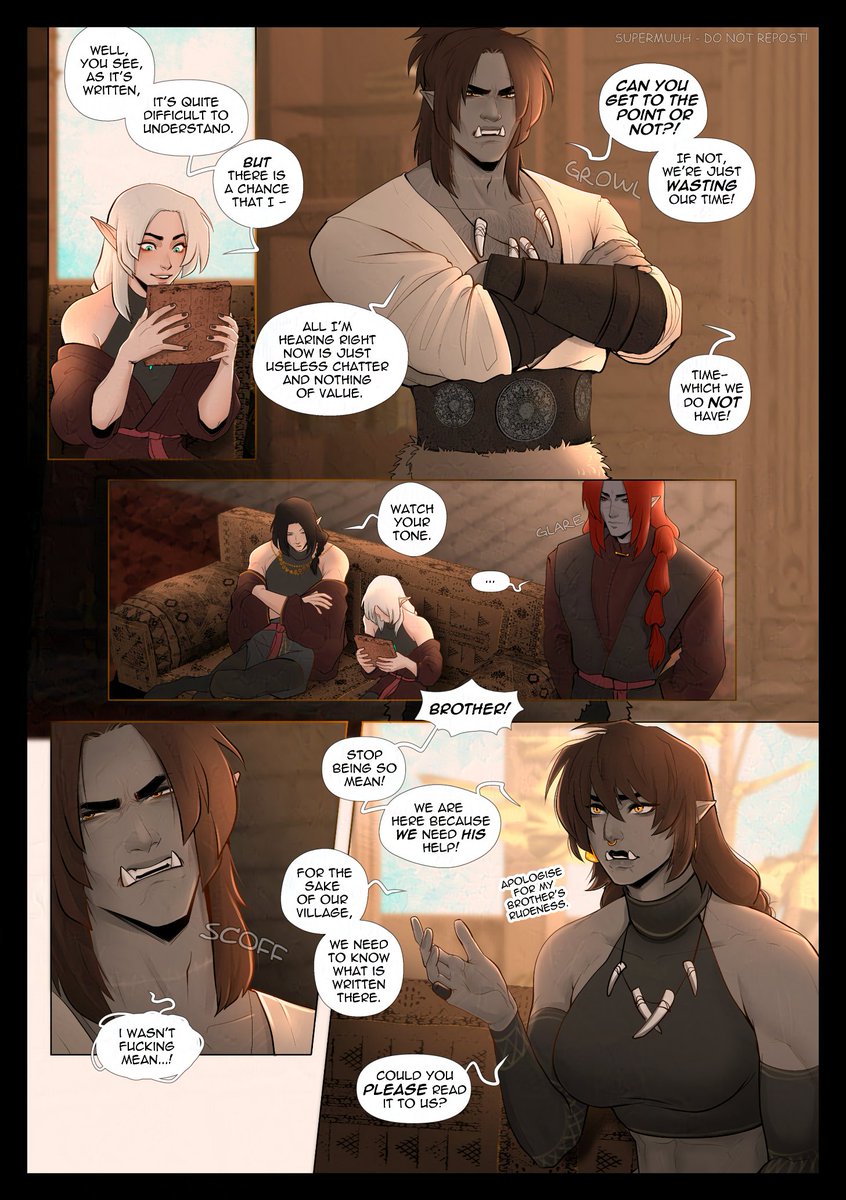

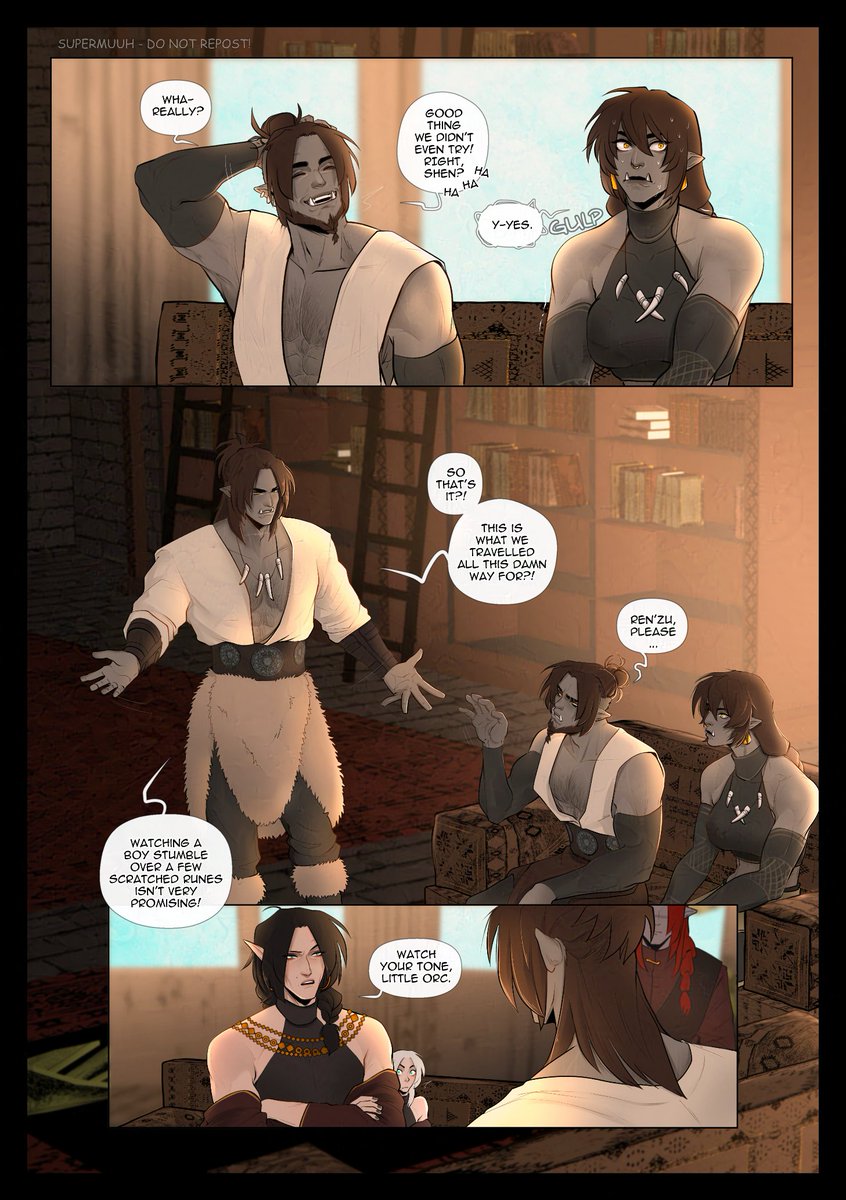

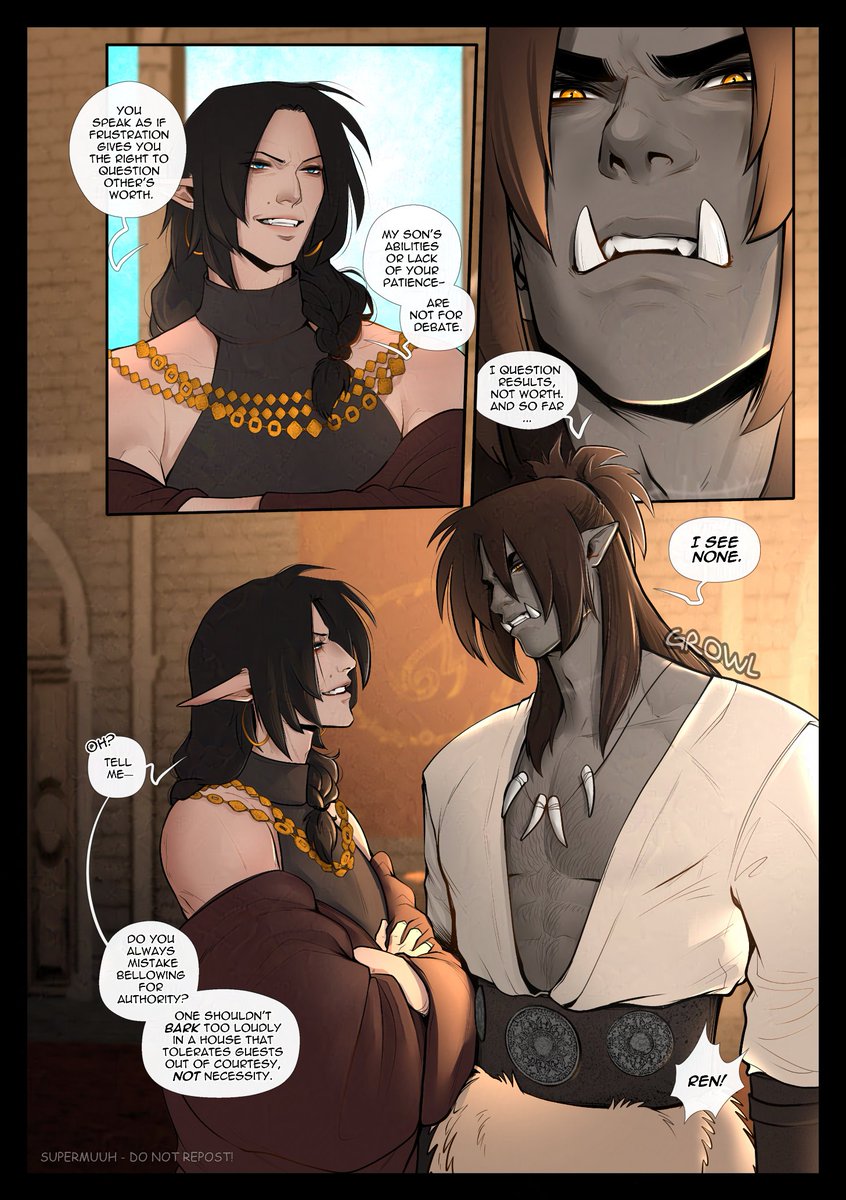

#RuinsOfTheVoid

Chap02

Pages 25-26-27-28

New update, let's gooo!

Looks like both have a fiery temper..... and someone does NOT know when to shut up :D

Thank you a lot for reading and enjoying my comic! ;; If you can, leave me some love, that would make me very happy. <3

English

my art & my nationality

ROMANIA RAHHHHH

itso@Thatsitso

Poland mention means Orb mention!! my art & my nationality

English