Draneil Mifa

220 posts

@sudoingX to be clear, which model / quantization did you run on the 3090?

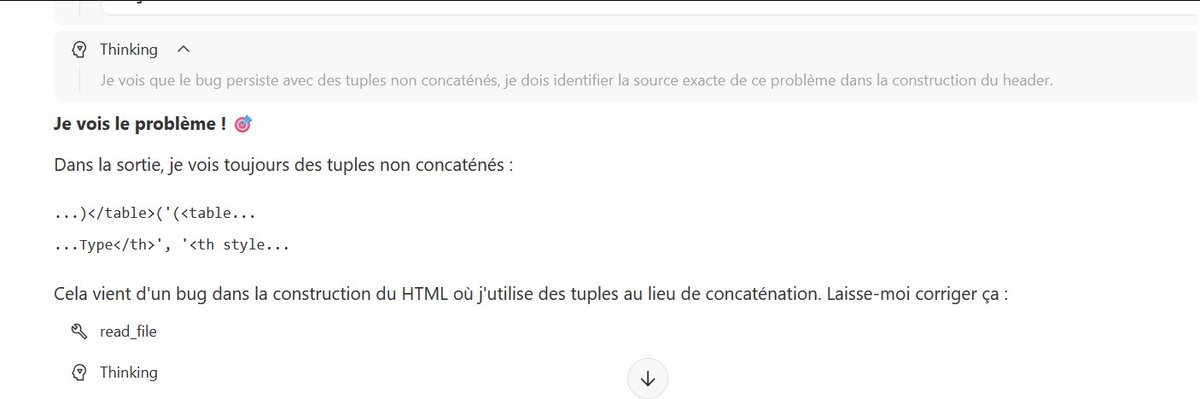

everyone is wondering the same thing qwen3.5 27b or gemma 4 31b? new benchmarks from @ArtificialAnlys are out, let's dig the numbers: 💻 coding index > gemma wins surprisingly it was the best and scored 42 — it managed to handle more coding tasks successfully than qwen, very interesting! 👀 🤖 agentic index > qwen destroys gemma when it comes to tool calls, multi-step reasoning, and autonomous task execution, there's no need to talk about it — qwen is the absolute winner in the category, scoring 55 (!) vs 41 for gemma 🤯 👑 the winner > qwen3.5 27b stays undefeated gemma could have been an amazing contender but in agentic tasks it's just too much behind, compared to what qwen has to offer — makes no sense to use it if your tasks are heavy and need reasoning what are your thoughts?

Hermes Agent is the third fastest growing GitHub repo this week!