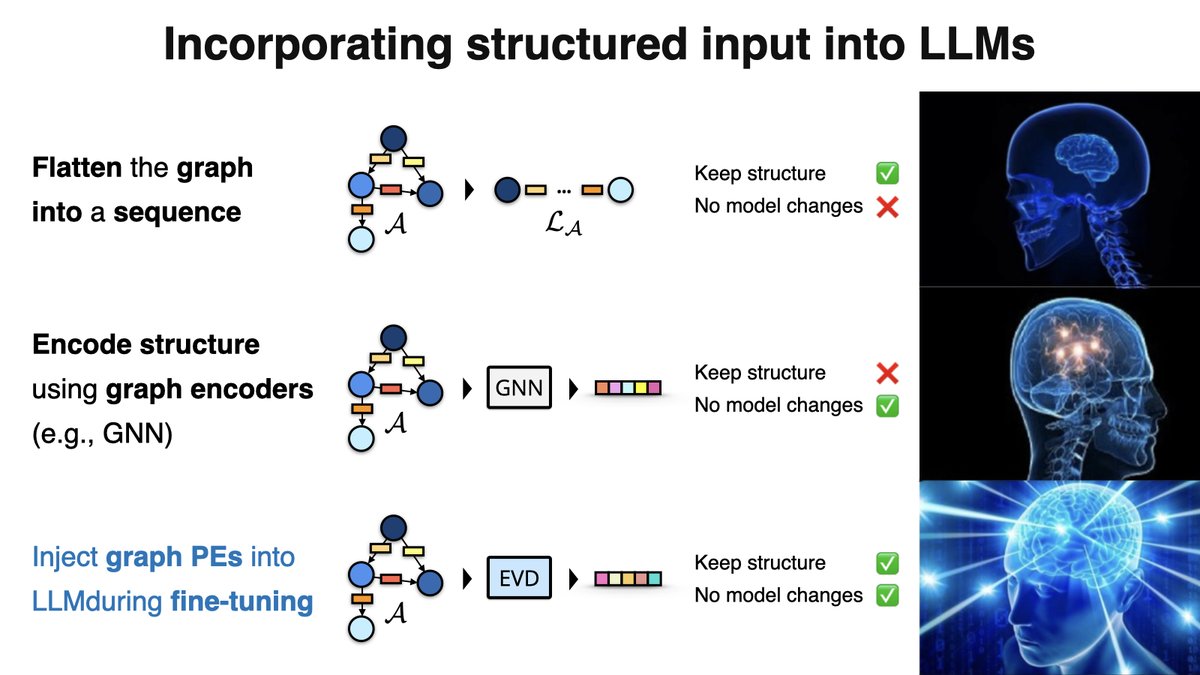

Switching gears from QMC to DFT for this one. I'm excited to share our newest work, where we learn the non-local exchange-correlation functional in KS-DFT with equivariant graph neural networks! Joint work w/ @ESEberhard, @guennemann 📝 arxiv.org/abs/2410.07972