Ahmed Elkhanany

@Elkhanany

Assistant Prof of Breast Onc at @BCMCancerCenter. BrCA TME & disparity. Father/Husband. Into R, philosophy, violin, photography & JRPGs. 🧬🥼👨‍👩‍👦‍👦🎮🎻🇪🇬

Shit, sorry guys, no session tonight. Had another epiphany and I really need to focus on building.

⚠️ All of the images below were FABRICATED by ChatGPT Images 2.0, each with a single prompt❗️ ⚠️

🚨🚨🚨 RASOLUTE-302 Ph3 is POSITIVE "Daraxonrasib demonstrated a median OS of 13.2 months versus 6.7 months for chemotherapy, with a hazard ratio of 0.40 (p < 0.0001)".... WOW! AMAZING news for patients with #PancreaticCancer The RAS Revolution is ON!! ir.revmed.com/news-releases/…

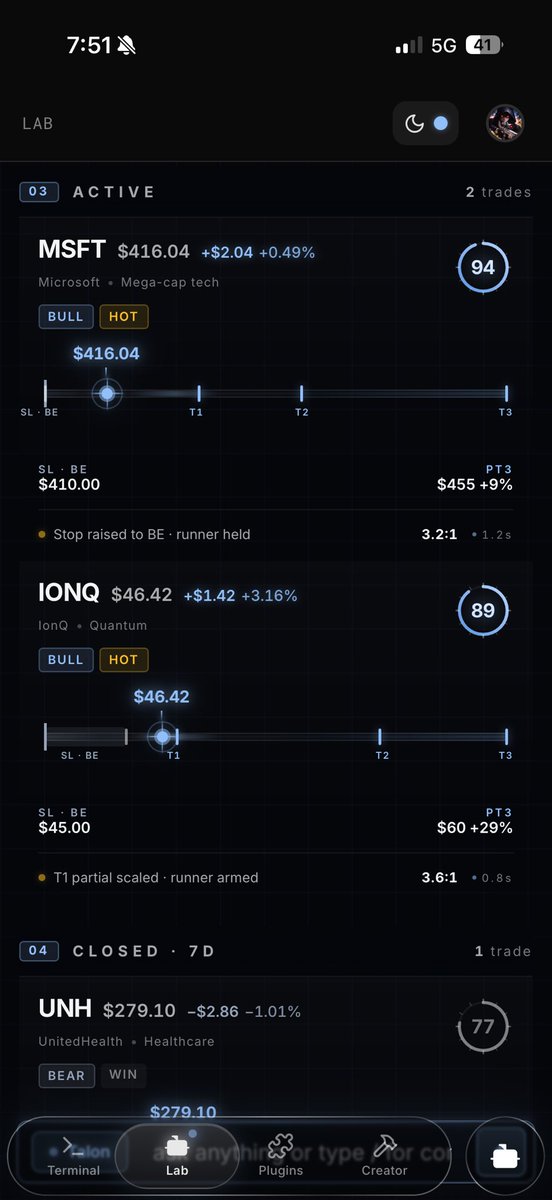

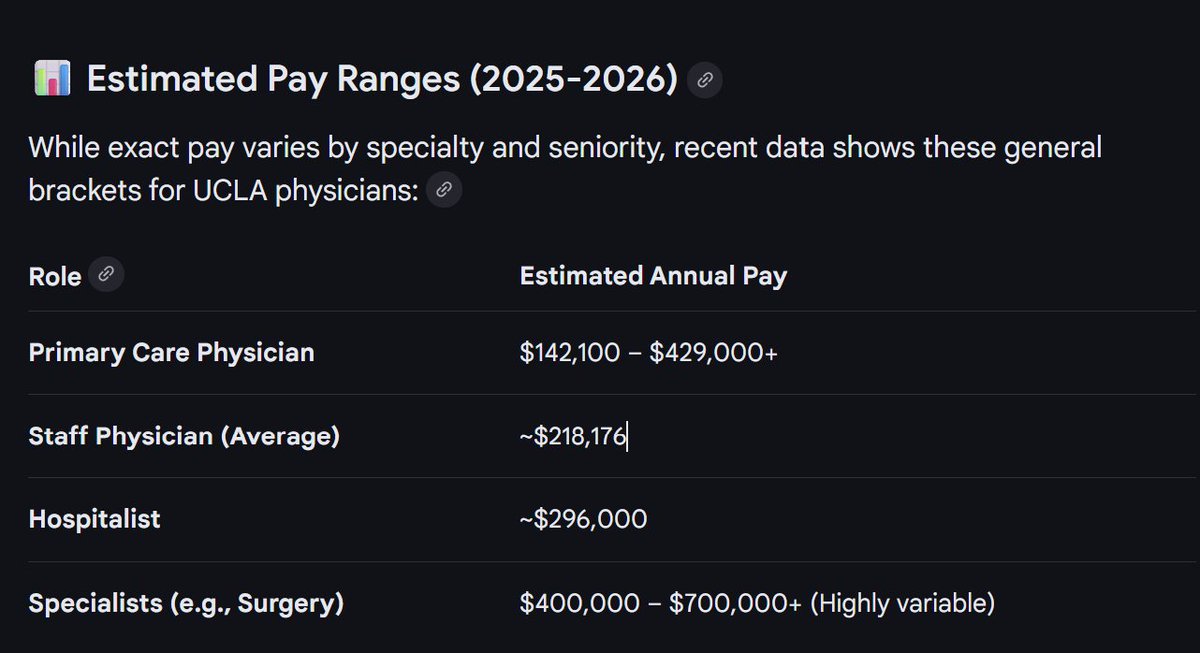

An American medical student just did “horrifying math” on her student loans:
- Amount due: $396,945.67
- Annual interest rate: 5.875%
- Monthly interest accrual: $1,943.38
- Monthly resident paycheck: $4,054.98

Nearly HALF her income goes to interest alone… She says that if she pays nothing toward the interest, the loan will grow to roughly $600,000 by the time she finishes residency.

Interest rates on student loans are predatory and should be illegal. This is usury.
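The figures in the loan post above can be checked with a few lines of Python. This is a rough sketch, not a model of any real loan servicer: it assumes interest accrues as balance × annual rate / 12 each month, and the helper names (`monthly_interest`, `months_to_reach`) are hypothetical. The projection to $600,000 is shown under two assumed regimes (simple interest on a fixed principal vs. monthly compounding), since the post does not say which applies.

```python
import math


def monthly_interest(balance: float, annual_rate: float) -> float:
    """Interest accrued in one month, assuming a flat annual_rate / 12 (hypothetical helper)."""
    return balance * annual_rate / 12


def months_to_reach(balance: float, annual_rate: float, target: float, compound: bool) -> float:
    """Months of zero payments until the balance reaches target (hypothetical helper).

    compound=True  -> unpaid interest is added to the principal every month (assumption).
    compound=False -> simple interest: principal stays fixed, interest piles up linearly.
    """
    if compound:
        r = annual_rate / 12
        return math.log(target / balance) / math.log(1 + r)
    return (target - balance) / monthly_interest(balance, annual_rate)


# Figures taken from the post.
balance = 396_945.67
rate = 0.05875
paycheck = 4_054.98

accrual = monthly_interest(balance, rate)
share = accrual / paycheck
print(f"Monthly interest: ${accrual:,.2f}")
print(f"Share of paycheck: {share:.1%}")

for compound in (False, True):
    months = months_to_reach(balance, rate, 600_000, compound)
    label = "monthly compounding" if compound else "simple interest"
    print(f"Months to $600,000 ({label}): {months:.1f} (~{months / 12:.1f} years)")
```

Under these assumptions the monthly accrual matches the post's $1,943.38. Whether the balance actually reaches roughly $600,000 "by the time she finishes residency" depends on the length of her residency and on whether unpaid interest compounds, which is why both cases are printed.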

🦔 A researcher invented a fake eye condition called bixonimania, uploaded two obviously fraudulent papers about it to an academic server, and watched major AI systems present it as real medicine within weeks.

The fake papers thanked Starfleet Academy, cited funding from the Professor Sideshow Bob Foundation and the University of Fellowship of the Ring, and stated mid-paper that the entire thing was made up. Google's Gemini told users it was caused by blue light. Perplexity cited its prevalence at one in 90,000 people. ChatGPT advised users on whether their symptoms matched. The fake research was then cited in a peer-reviewed journal, which retracted it only after Nature contacted the publisher.

My Take

The researcher made the papers as obviously fake as possible on purpose. The AI systems didn't catch it. Neither did the human researchers who cited it in real journals, which means people are feeding AI-generated references into their work without reading what they're actually citing.

I've covered the FDA using AI for drug review, the NYC hospital CEO ready to replace radiologists, and ChatGPT Health launching this year. All of that is happening in the same environment where a condition funded by a Simpsons character and endorsed by the crew of the Enterprise was being presented as emerging medical consensus.

The people making these deployment decisions seem to believe the pipeline from research to AI to patient is more supervised than it actually is. This experiment suggests it isn't supervised much at all.

Hedgie🤗

nature.com/articles/d4158…

India has 0.7 active physicians per 1,000 people; America has 3.0 active physicians per 1,000 people. You are a liar. You are not motivated by increasing patient access to care. You just want to practice in America because you can make more money.

Instead of mocking IMGs, be proud they’re willing to serve here. U.S. grads often match at top programs while many IMGs take the spots left behind. It takes immense sacrifice to get here. Mocking poorer countries and their doctors is shameful. I am proud of each and every IMG.