Enamul Hoque Prince

@Enamul_Hoque

Associate Professor at York University, former PostDoc @StanfordHCI, PhD from @UBC_CS. Interested in the areas of NLP, Visualizations, and HCI. Views are my own.

Verification is key to reliable LLM reasoning—both in RL and test-time scaling. Meet FARE, our first open-source "Universal Verifier": a family of 8B & 20B models trained on 2.5M multi-task, multi-domain samples; outperforms 70B+ specialized evaluators and GPT-4.1 and 4o. Try it.

Happy to announce AlignVLM📏: a novel approach to bridging vision and language latent spaces for multimodal understanding in VLMs! 🌍📄🖼️ 🔗 Read the paper: arxiv.org/abs/2502.01341 🧵👇 Thread

🚨 New Paper Alert! Excited to share that one of our latest works, "The Perils of Chart Deception: How Misleading Visualizations Affect Vision-Language Models", has been accepted at IEEE VIS 2025 📄 Preprint link: arxiv.org/pdf/2508.09716 1/6🧵

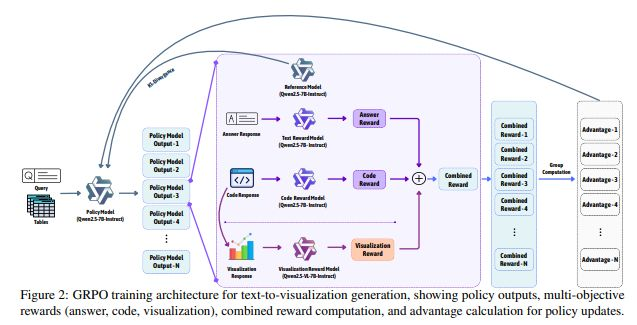

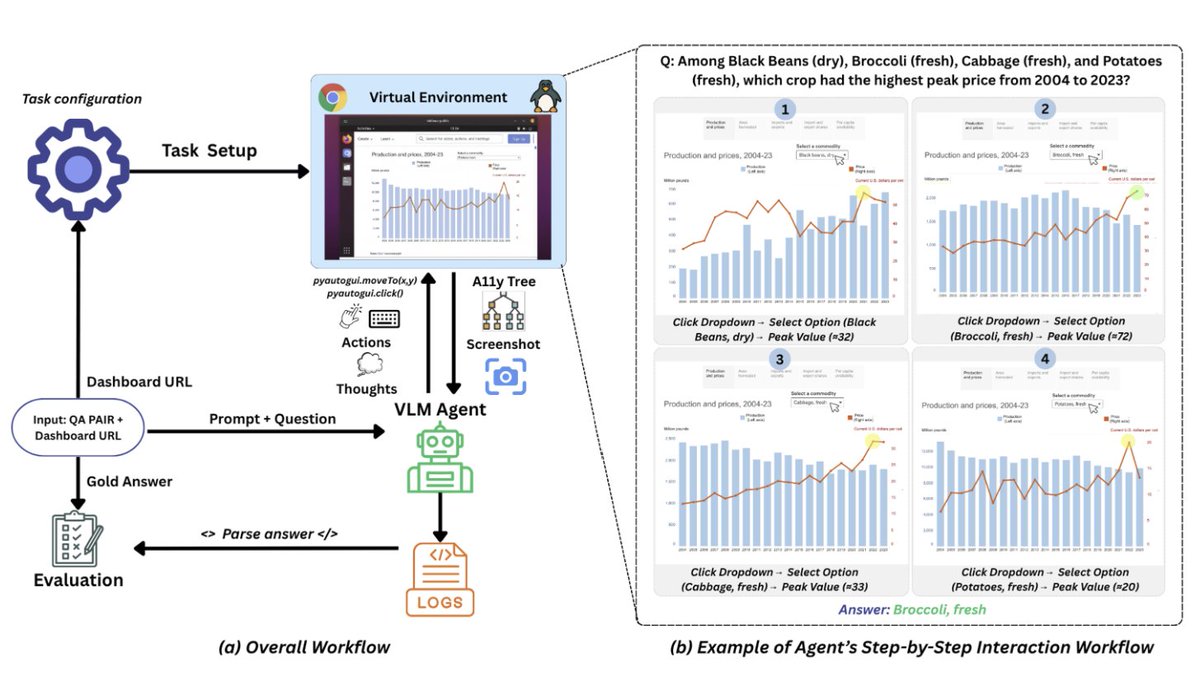

Excited to share that our paper "Text2Vis: A Challenging and Diverse Benchmark for Generating Multimodal Visualizations from Text" has been accepted to EMNLP 2025 (Main)! Paper: arxiv.org/abs/2507.19969 Dataset: huggingface.co/datasets/mizan… Code: github.com/vis-nlp/Text2V…

🚨 New Benchmark Alert: Our popular ChartQA 📊 benchmark is now saturated ⚠️ Recent ones like CharXiv fall short in visual & question diversity. 🔥 Say hello to ChartQAPro: A more diverse & challenging benchmark for Chart Question Answering! 🧵👇 📄 arxiv.org/abs/2504.05506…