Kairiel

@EphemeralEph

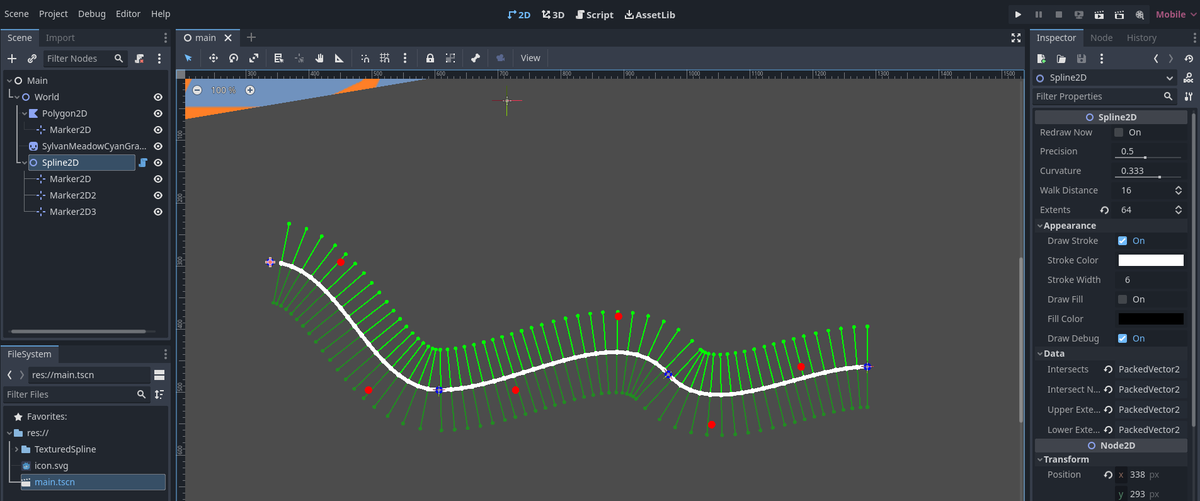

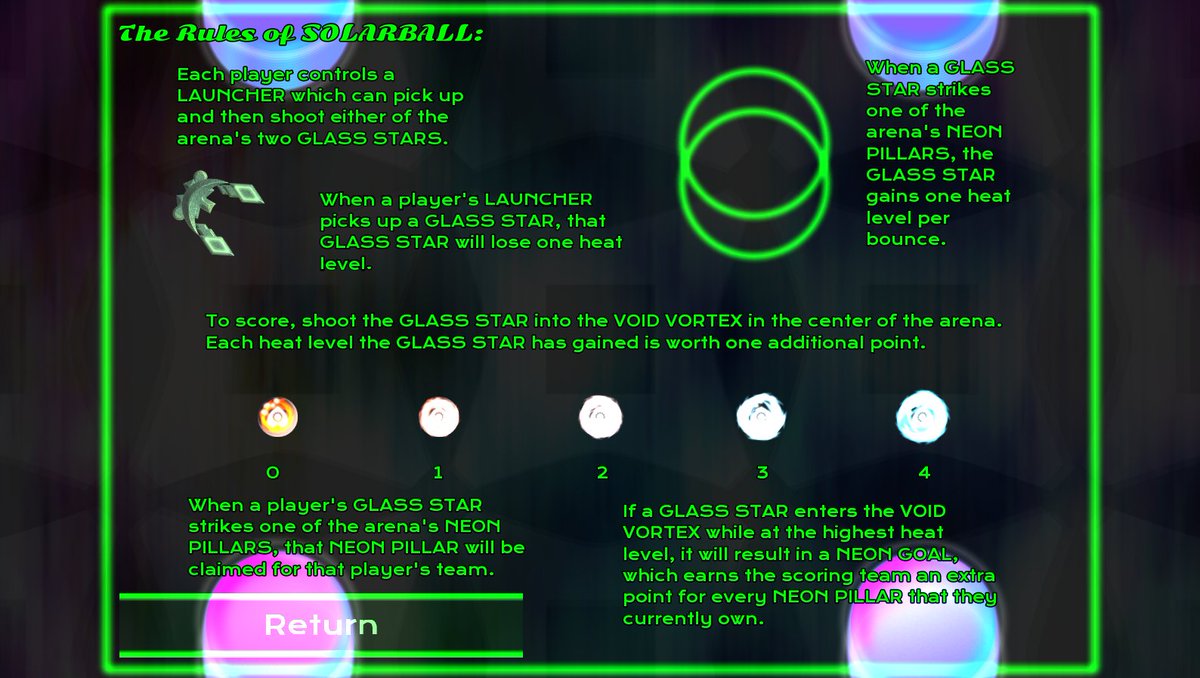

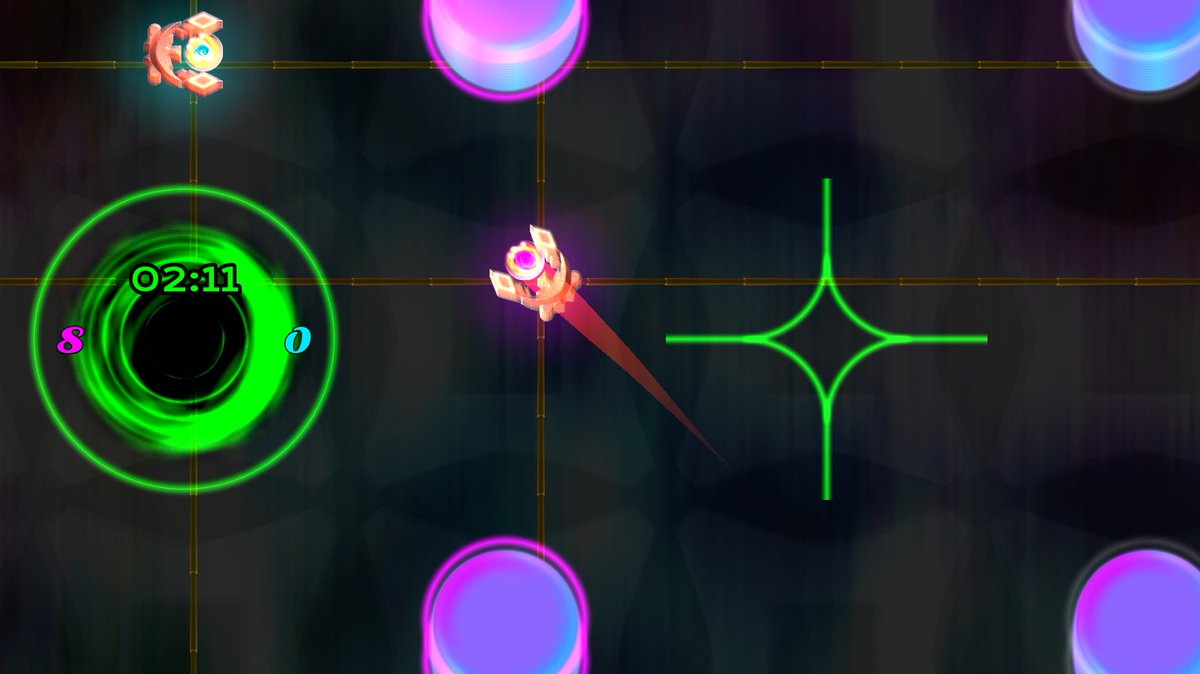

An artistic polymath and a neurodivergent polyamorous bisexual healslut wrapped in a useless cinnamon roll. I write erotica and do game development (in Godot).

To understand AI x-risk, you must understand the Orthogonality Thesis. Let’s learn... with memes!

Orthogonality Thesis: “an AI agent can have any combination of intelligence level and goal,” or: “If we give it a goal, like maximize paperclips, it will do that. Then we just, um... die.”

AI safety researchers mostly buy this, so we’re trying to figure out how to give AIs good goals, etc. What if this is wrong? Eh, we wasted some time.

“Knowing an AI agent is highly intelligent does not allow us to presume that it will be moral.” -Stuart Russell

Anti-Orthogonality people: “Once intelligent enough, AIs will converge to a common goal,” or: “If we tell it to maximize paperclips, once it’s smart enough, it will just magically decide that’s a dumb goal. It will then decide on a ‘better’ goal, more morally aligned with human values. So stop worrying.”

What if they’re wrong? Every human on earth may die.

@depauper8 is it a skill issue (on her part) or a supply issue (bigger picture mismatch re his need v. her desire for sexual intimacy)?