Brian Fitzgerald

8.3K posts

@ExaGridDba

AI, Cloud, and databases

New York, Joined September 2009

1.6K Following, 1.3K Followers

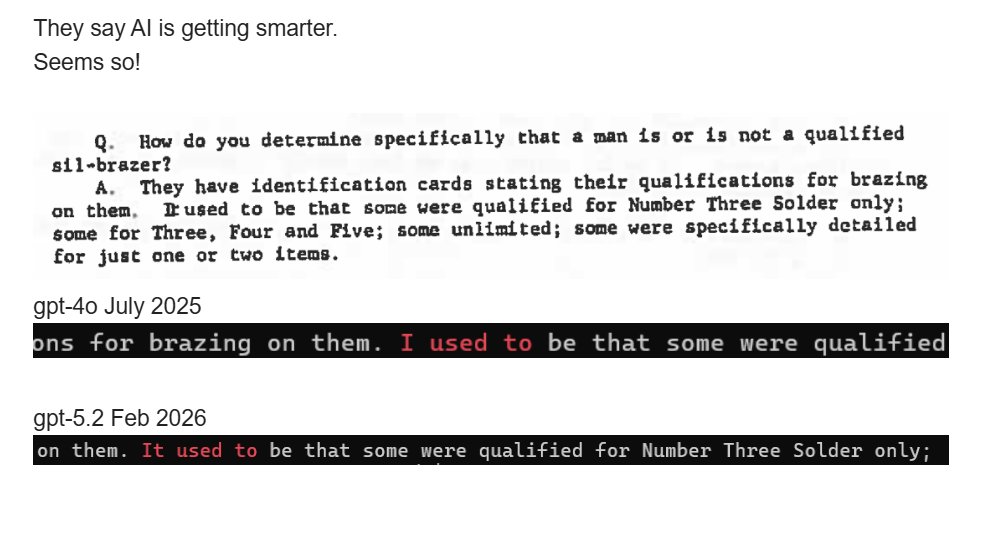

@AIFrontliner Hallucinations are the biggest trust issues with LLMs right now.

As a lawyer who uses LLMs every day at work, I feel qualified to respond.

First, hallucinations are no longer a problem. Consistent with the prediction you quoted from 2023, GPT-5.x almost never hallucinates. And overall, the percentage of inaccurate responses I get from GPT-5.2 Pro is lower than the percentage of inaccurate responses I would get from a competent junior associate (yes, fully accounting for hallucinations).

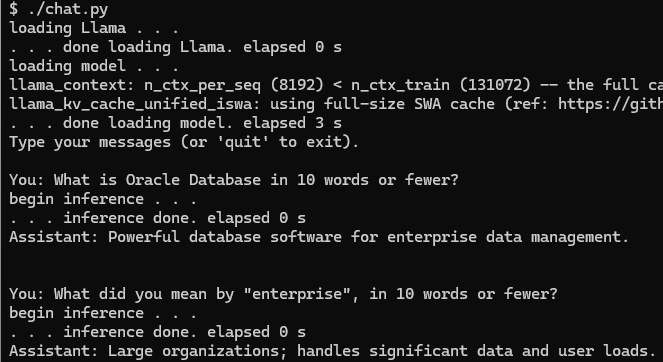

Second, people wildly overestimate the difficulty of most tasks performed by lawyers. The vast majority of the things we do are not nearly as challenging intellectually as solving an Erdos problem. Key skills for a lawyer are attention to detail, ability to synthesize and reason through precedent, ability to construct logical arguments, writing, research. LLMs are *very* good at most of these things even today, and top-tier LLMs (GPT-5.2 Pro) are excellent at them.

Put another way, I feel that the biggest barrier to widespread adoption of AI by lawyers today is connectivity, interfaces, harnesses - *not* intelligence of the best models, and certainly not hallucinations. It is unclear to what extent these issues will be resolved in the next 12-18 months, but given how economically valuable lawyers' work is, I wouldn't be surprised to see significant progress on that front. It's also worth considering that, given the general trend of rapidly falling costs of running reasoning models, it is likely that a model as intelligent as GPT-5.2 Pro, but *much* cheaper and faster, will be publicly available in the next 12-18 months.

Note that the above assumes (conservatively) that the next 12-18 months in AI will be relatively boring: no continual learning, no drop-in virtual employees, not much further progress in agentic AI (Codex), no significant progress in intelligence possessed by the best models. Relaxing these assumptions would mean that we should expect even faster progress.

How did this work out? Are LLM hallucinations largely gone by now?

So now the @FT platforms the same guy saying most of the tasks lawyers and accountants do will be replaced in 12-18 months?

From the same company that said that GPT-5 would be a giant humpback whale that would blow away PhDs?

Where is the accountability? The concern about CEOs’ conflicts of interest in selling these narratives? The view from skeptics?

Mustafa Suleyman@mustafasuleyman

LLM hallucinations will be largely eliminated by 2025. that’s a huge deal. the implications are far more profound than the threat of the models getting things a bit wrong today.

Brian Fitzgerald retweeted

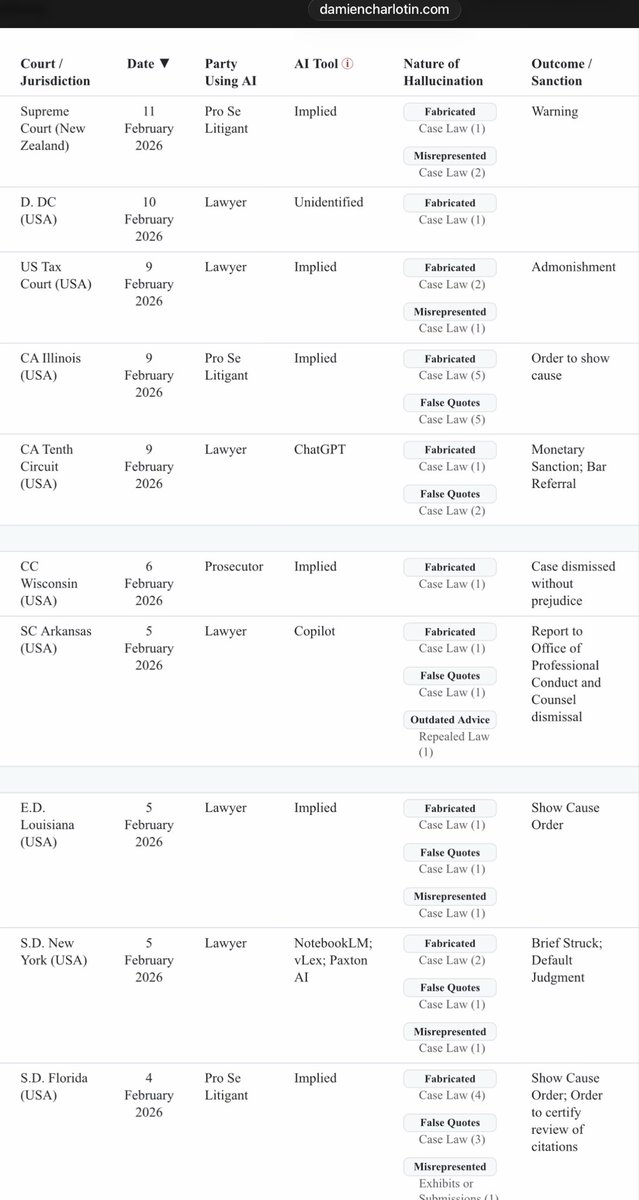

@deredleritt3r @FT and yet lawyers keep getting busted for fake cases in their briefs, per @DamienCharlotin’s database damiencharlotin.com/hallucinations/

Pretty much every day, at a much higher clip than two years ago:

@connor_mc_d Aye, sir.

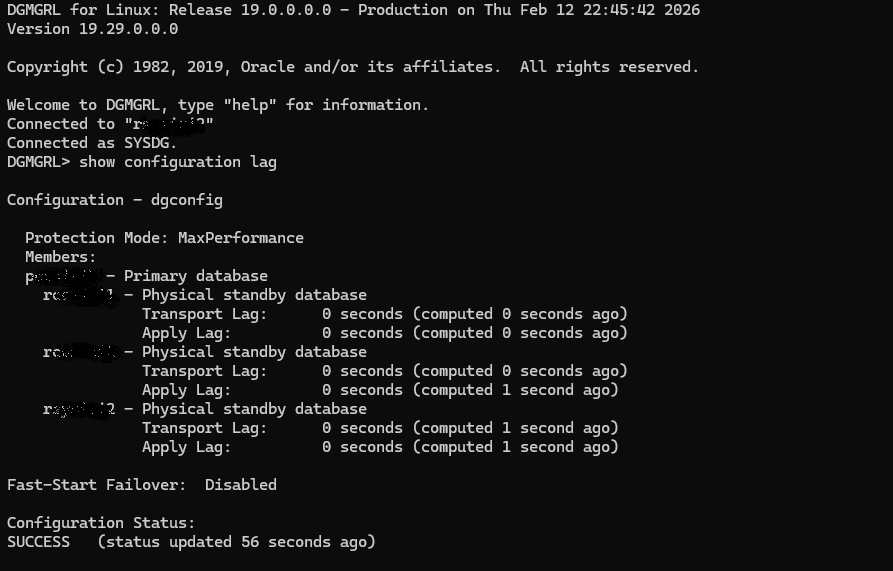

After a load, the optimizer pushed its internal to_number filter into the joins. One of the full table scans uncovered some non-numerics. After the PeopleSoft admin ran pscbo stats, the new plan skipped those values.

Hence, "seemingly cleared".

@ExaGridDba Normally implicit datatype conversion... "Cleared of its own" often means "Got lucky and skipped the data in question" so buyer beware
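
A rough Python sketch of the effect described in this exchange, not Oracle code: the same query can fail or succeed depending on whether the conversion runs before or after the rows with bad data are filtered out. The rows, column names, and both "plan" functions are hypothetical stand-ins for what an optimizer might choose.

```python
# Hypothetical rows: a text column that is expected to hold numbers.
ROWS = [
    {"id": 1, "kind": "NUM", "val": "10"},
    {"id": 2, "kind": "NUM", "val": "25"},
    {"id": 3, "kind": "TXT", "val": "N/A"},  # non-numeric value hiding in the data
]


def plan_convert_first(rows, threshold):
    """Convert val on every row scanned, then apply the kind filter."""
    matches = []
    for row in rows:
        number = float(row["val"])  # raises ValueError when it reaches "N/A"
        if row["kind"] == "NUM" and number > threshold:
            matches.append(row["id"])
    return matches


def plan_filter_first(rows, threshold):
    """Apply the kind filter first, so the bad row is never converted."""
    matches = []
    for row in rows:
        # Short-circuit: float() only runs for rows where kind == "NUM".
        if row["kind"] == "NUM" and float(row["val"]) > threshold:
            matches.append(row["id"])
    return matches


if __name__ == "__main__":
    print(plan_filter_first(ROWS, 15))
    try:
        plan_convert_first(ROWS, 15)
    except ValueError as exc:
        print("conversion failed:", exc)
```

Both functions express the same logical query, yet only one touches the bad value. That is the sense in which an error can be "seemingly cleared" by a plan change while the non-numeric data is still sitting in the table.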

Something Big Is Happening

@mattshumer_

shumer.dev/something-big-…

@USN_Submariner The Blue Crew's first CO was James B. Osborn, who earlier earned an M.S. in Mech E from Rensselaer Polytechnic Institute.

Brian Fitzgerald retweeted

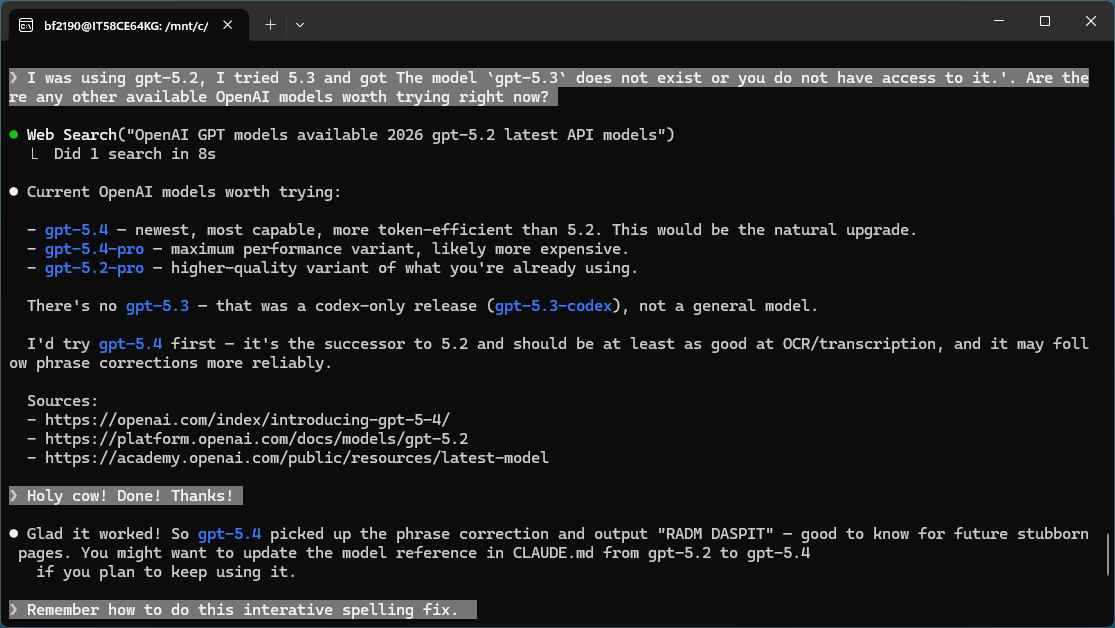

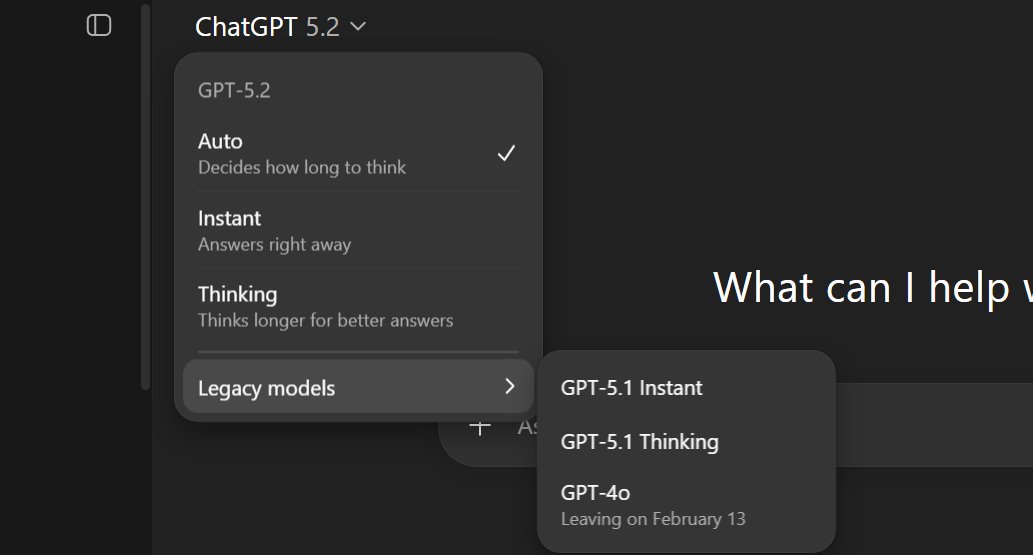

@OpenAI GPT-5.2 inference quality in the Python API is noticeably improved.

GPT-5.2 is now rolling out to everyone.

openai.com/index/introduc…

Brian Fitzgerald retweeted