Pinned Tweet

Mark Esposito, PhD

67.8K posts

@Exp_Mark

Social Scientist @BKCHarvard | Public Policy @MBRSG & @Georgetown | Member @wef | Founder @NexusFrontier | Chief Economist @micro1_ai | Professor @northeastern

Boston, Geneva, Dubai · Joined August 2012

11.8K Following · 52K Followers

Mark Esposito, PhD retweeted

The Enterprise AI Paradox: Why Fast Automation Is Not the Goal. Watch the executive recap below.

Key insights:

- Intelligence is cheap, but trust is expensive.

- Talent, not data, is the new bottleneck.

DM for the full 45-minute recording.

@dcwgoh @Exp_Mark @Terencecmtse


Mark Esposito, PhD retweeted

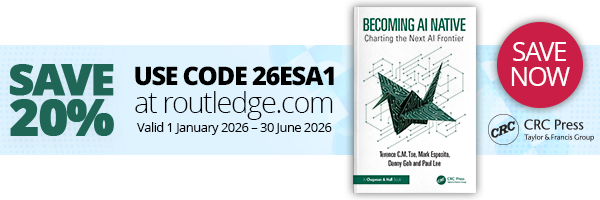

Introducing the Realm Financial Reasoning benchmark, our new evaluation of frontier AI on reasoning in finance and spreadsheet-grounded analysis.

Tasks are built around the actual work product that practitioners deliver, from IFRS reconciliation workbooks and hedge-fund backtests to VC term sheet analyses and treasury cash-flow forecasts. Each task drops the model into a sandbox with the same source materials a human analyst would open: named-range Excel workbooks, broker PDFs, earnings call transcripts, monetary-policy decisions.

Here's what the results showed (Pass@3):

- GPT-5.5: 0.456

- Claude Opus 4.7: 0.398

- Gemini 3.1 Pro: 0.349

The three models score similarly, and none clears 50% on tasks that demand a judgment call. Back- and middle-office use cases are defensible today, but on capital allocation questions current frontier models should be treated as research accelerators, not final decision-making support systems.

Full report linked in the comments.


Mark Esposito, PhD retweeted

Most AI pilots fail governance, not technology.

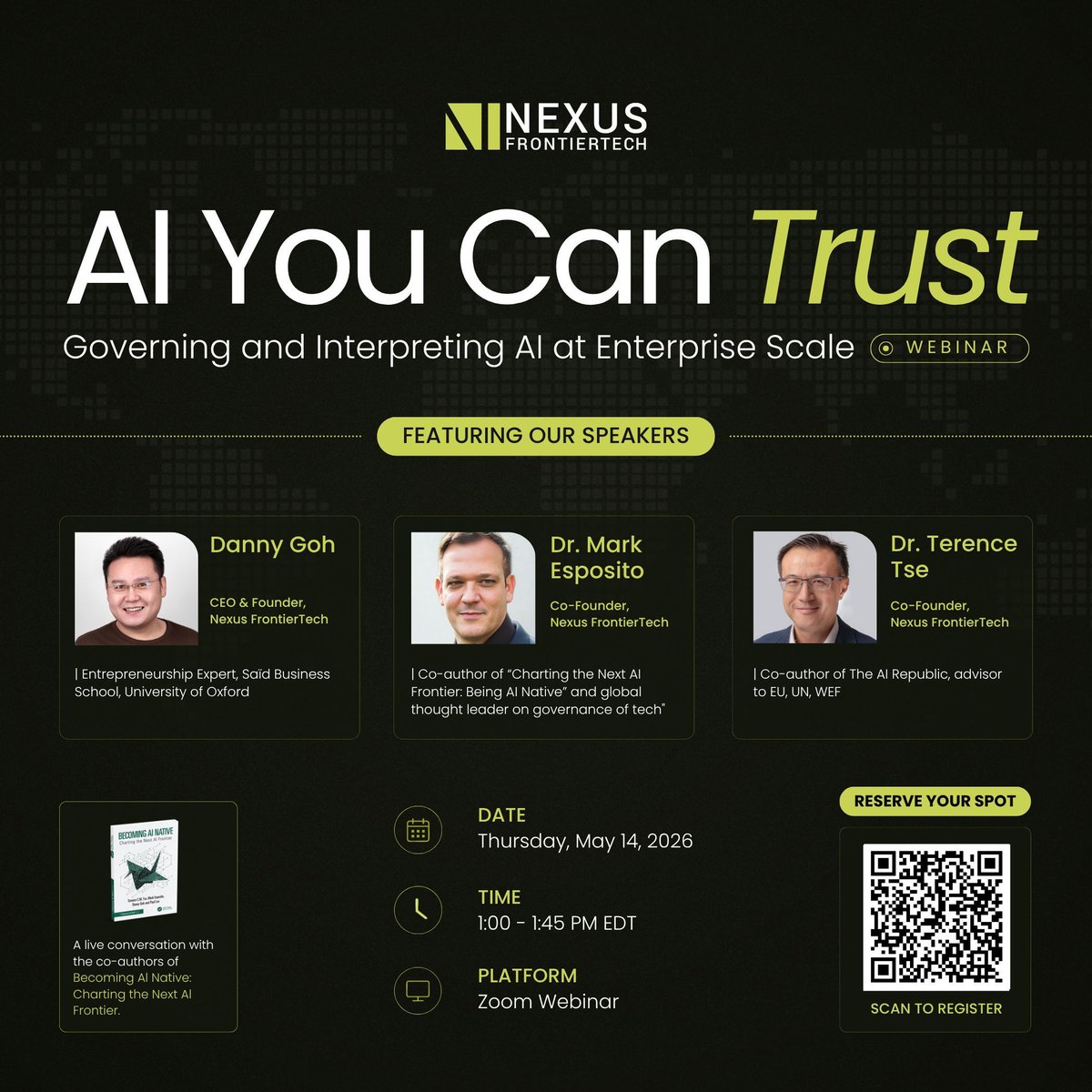

Join us tomorrow for a live conversation with the co-authors of Becoming AI Native @dcwgoh, @Exp_Mark, @Terencecmtse on governing and scaling enterprise AI.

May 14 · 1PM EDT → us06web.zoom.us/webinar/regist…

#AIGovernance #EnterpriseAI


Mark Esposito, PhD retweeted

Earlier this week we hosted the “Women Shaping the Future of AI in Law” panel, bringing together leaders across legal, AI, and enterprise technology to discuss what it actually takes to build reliable AI systems for the legal industry.

The conversation covered where AI is already driving real value in legal workflows, the challenges that still remain around trust, accuracy, and human oversight, and how the industry is thinking about building systems that can perform consistently in real-world legal environments.

A huge thank you to Anique Drumright, D. Isabel Ajuria, Shannon Yavorsky, Isabel Yishu Yang, and Amy Sennett for an incredible discussion, and to everyone who joined us.

The future of legal AI will depend on more than model capability alone. It will require deep collaboration between AI builders, legal experts, and the enterprises bringing these systems into real-world workflows.


Mark Esposito, PhD retweeted

Today we’re releasing Realm Warren, part of the Realm benchmark series for measuring frontier AI models on real-world expert workflows.

Each task tests whether a model can produce a legal work product and adapt it as circumstances evolve. We evaluated Claude Opus 4.7, GPT-5.5, and Gemini 3.1 Pro across federal and state law, scored through IRAC: issue spotting, rule identification, factual application, and legal conclusion.

Here are the results (mean score):

- Claude Opus 4.7: 0.358

- GPT-5.5: 0.351

- Gemini 3.1 Pro: 0.219

The sub-40% scores show where models break down on long-horizon legal work. Three failure modes drive them: the IRAC chain breaks after issue spotting, models front-load their effort and fail to revise, and skipping visual exhibits leads to invented facts.

Full report linked in the comments.


Mark Esposito, PhD retweeted

🤖 South Korea Leads the World in Industrial Robot Adoption

Here’s the 2024 ranking (robots per 1,000 employees):

Top 10:

1. 🇰🇷 South Korea – 122

2. 🇸🇬 Singapore – 82

3. 🇩🇪 Germany – 45

3. 🇯🇵 Japan – 45

5. 🇸🇪 Sweden – 38

6. 🇩🇰 Denmark – 33

7. 🇸🇮 Slovenia – 32

8. 🇺🇸 United States – 31

9. 🇹🇼 Taiwan – 30

10. 🇨🇭 Switzerland – 29

10. 🇳🇱 Netherlands – 29

(Our World in Data)


Mark Esposito, PhD retweeted

Most AI readiness tools, such as those from Cisco, Microsoft, Avanade, and Google, are designed for organisations. They look at things like your company’s infrastructure, data pipelines, cloud setup, and governance. There are also many educational outfits preparing leaders to use AI in their companies. These tools are helpful, but they answer only one question: “Is our organisation ready for AI?”

But they don’t answer a more personal question: “Am I ready for AI?”

This is the gap that AI Compass aims to fill.

AI Compass is an AI readiness intelligence platform created by the AI Native Foundation. Instead of just giving you a quiz and a score, it first gathers and checks information from 12 different sources about you, your company, and your industry. Then, it tailors 25 questions to fit your profile, including your seniority, sector, and regulatory environment, and creates a personalised report with 11 sections.

The report covers peer benchmarks, a regulatory scorecard, a register of risks and opportunities, competitive intelligence, a skills and career matrix, and a 90-day action plan designed for your role. This isn’t a generic checklist; it’s a personalised strategic briefing.

What makes it different from what’s out there? Most tools in the market are organisation-level diagnostics designed for CIOs and IT teams, often pointing you towards a specific vendor’s ecosystem. AI Compass is designed for individuals, from individual contributors to board members, across technology, finance, healthcare, government, education, and beyond. Whether you use AI tools every day or have never touched one, the assessment adapts to you.

As AI readiness becomes a key career skill, it’s more important than ever to know where you stand personally.

Try it here: aicompass.ainativefoundation.org

If your company or institution wants team, group, or enterprise access, feel free to message me.

#AICompass #AIReadiness #AINativeFoundation #ArtificialIntelligence #AIStrategy #CareerDevelopment #Leadership


Mark Esposito, PhD retweeted

A lot of people are focused on rolling out AI at scale, but not many are discussing how to manage it effectively. That’s a real issue.

How can you trust an AI system if you can’t explain its decisions? How do you manage something that changes faster than your compliance rules? And what does responsible AI really mean when it’s part of your daily work, not just a slide in a deck?

Danny Goh @dcwgoh, Mark Esposito @Exp_Mark, and I will dive into these questions in our upcoming webinar: AI You Can Trust: Governing and Interpreting AI at Enterprise Scale.

This won’t be just theory. Together, we’ve built AI systems, advised governments and global organisations, and even written the book on becoming AI native. We’ll share what has worked, what hasn’t, and what enterprise leaders should focus on right now.

If you’re a CTO working to build trust in your AI stack, a board member asking tough questions, or a leader who thinks your organisation’s AI governance is more about slides than real action, this session is for you.

Join us on Thursday, May 14, 2026, from 1:00 to 1:45 PM EDT for a Zoom webinar.

You can scan the QR code in the image or go here: tinyurl.com/2pjjms92

Hope to see you there!

#AIGovernance #TrustworthyAI #BecomingAINative #NexusFrontierTech #AI #EnterpriseAI #Leadership


Mark Esposito, PhD retweeted

Highly recommend this issue of "Think:Act" from Roland Berger. For one, because it's dedicated to "Changing Rules for a Changing World," which is where our attention needs to be. But also because it's jam-packed with great ideas on what we can do to shape the emerging future, from Sam Palmisano, Linda Hill, Sebastian Thrun, Paul Saffo, AG Lafley, Rita McGrath, Kishore Mahbubani, Juergen Schmidhuber, Gerd Leonhard, Emily Bender, Alex Hanna and many more. And yes, my friend, colleague and co-author, @Exp_Mark, and I are in it as well. Yes, yes, shameless, I know. 😉 Enjoy: rolandberger.com/en/Think-Act-M…


Mark Esposito, PhD retweeted

Glad to have contributed, alongside my long-term friend, colleague and co-author @Exp_Mark, to this bleeding-edge report by the World Economic Forum @Davos and our friends at @Capgemini: weforum.org/publication…

Crack it open; you won't be disappointed. It delivers actionable insights and tools that help policy-makers, economists and business leaders design systems, structure organizations and scale solutions beyond the scope of a single technology for broader societal value. The analysis examines how eight advanced technology domains interact, using the 3C framework and the Technology Maturity Index to track how technologies move from experimentation to real-world impact and global change.

Thanks to the teams of both organizations: Jeremy Jurgens, Aiman Ezzat, Kary Bheemaiah, Cathy Li, Mylo Kidwell, Antoine Tillette de Mautort, Connie Kuang, Simone Schmalzbauer; Simone Xinyi QIU, Mattia Damati, Maria Basso.


Mark Esposito, PhD retweeted

AGREE: There’s no such thing as the petrodollar via @FT. Very nice piece. giftarticle.ft.com/giftarticle/ac…


Mark Esposito, PhD retweeted

In recognition of National Cancer Prevention and Early Detection Month, join us for an important conversation on how AI is reshaping the future of cancer care.

From accelerating drug discovery to enabling more accurate, scalable diagnostics, artificial intelligence is unlocking new possibilities across prevention, early detection, and treatment. We’ll also dive into the real challenges: data quality, bias, interpretability, and bridging the gap between research breakthroughs and real-world clinical impact.

Featuring:

• Virginie Buggia-Prevot, PhD (Executive Director, @ValoHealth)

• Bahar Rahsepar, PhD (Associate Director of Product, @Path_AI)

• Paola Rodríguez, MD, Eng, MSc. (Director of Medical Research, @micro1_ai)

Moderated by @Exp_Mark (Chief Economist, micro1)

This session brings together leading voices at the intersection of AI and healthcare to explore how human + AI are transforming patient outcomes.

Join us on 4/28, 10am PT: us06web.zoom.us/webinar/regist…


Mark Esposito, PhD retweeted

Dan Heffernan has led sales teams at some of the biggest names in tech and now is making his mark in AI.

In this conversation, he breaks down why the human element is the secret behind the best models, and how he's putting that belief into action at micro1 while training AI.

Watch the full interview on YouTube now! (Link in the comments)


Mark Esposito, PhD retweeted

micro1 x Crosby: AI Fellowship for SaaS Contracting Attorneys

We've teamed up with @crosbylegal to launch an AI Fellowship for SaaS Contracting Attorneys, and we're looking for attorneys with deep expertise in tech transactions to help us shape how AI handles real legal work.

Here's what the fellowship looks like:

- Simulated contract negotiations and redlining exercises

- Evaluating AI-generated suggestions for accuracy and legal soundness

- Collaborating with product and research teams to improve AI outputs

This is a part-time, fully remote opportunity paying $80-$105/hr.

Apply now at the link in the comments.
