Pinned Tweet

Francesco A. Fabozzi

27 posts

@FAFabozzi

Education & Research on LLMs and Quant Finance | Research Director @ Yale ICF | Managing Editor @ Journal of Financial Data Science | Data Science PhD

Joined May 2020

176 Following · 135 Followers

Francesco A. Fabozzi retweeted

@MattiasLamotte @bugabtc Instead of trimming out features, try PCA or other dimension reduction techniques!

A lot. The dataset I built had approximately 1650 features, and I usually trim them to between 100 and 200. Many of the 1650 features are correlated or cointegrated, so part of the work is finding a method to trim them.

Implied vols, realised vols, bond yield movements, underlying market movements etc...
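
The two approaches discussed in this exchange (trimming correlated features versus projecting them with PCA) can be compared in a small sketch. Everything here is an assumption for illustration: the data is synthetic, the 0.9 correlation threshold is arbitrary, and the function names `drop_correlated` and `pca_reduce` are hypothetical. The filter looks only at pairwise correlation and does not test for cointegration.

```python
import numpy as np

def drop_correlated(X, threshold=0.9):
    """Greedy filter: keep a column only if its absolute correlation
    with every previously kept column is at or below the threshold."""
    corr = np.abs(np.corrcoef(X, rowvar=False))
    kept = []
    for j in range(X.shape[1]):
        if all(corr[j, k] <= threshold for k in kept):
            kept.append(j)
    return kept

def pca_reduce(X, n_components):
    """Project X (n_samples x n_features) onto its top principal components."""
    X_centered = X - X.mean(axis=0)
    # SVD of the centered data: rows of Vt are the principal directions.
    _, S, Vt = np.linalg.svd(X_centered, full_matrices=False)
    scores = X_centered @ Vt[:n_components].T
    explained_ratio = (S ** 2) / np.sum(S ** 2)
    return scores, explained_ratio[:n_components]

# Synthetic data (an assumption): 3 hidden factors drive 50 noisy features.
rng = np.random.default_rng(0)
factors = rng.normal(size=(500, 3))
loadings = rng.normal(size=(3, 50))
X = factors @ loadings + 0.1 * rng.normal(size=(500, 50))

kept = drop_correlated(X, threshold=0.9)
scores, ratio = pca_reduce(X, n_components=3)

print("columns kept by the correlation filter:", len(kept))
print("PCA output shape:", scores.shape)
print("variance explained by 3 components:", round(float(ratio.sum()), 3))
```

On real data with mixed units (vols, yields, price moves), the features would typically be standardized before PCA so that no single scale dominates the components.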

Finding edgartools to be the best Python package for downloading financial statements from the SEC. This video provides a great overview👇

youtube.com/watch?v=mI6KDe…

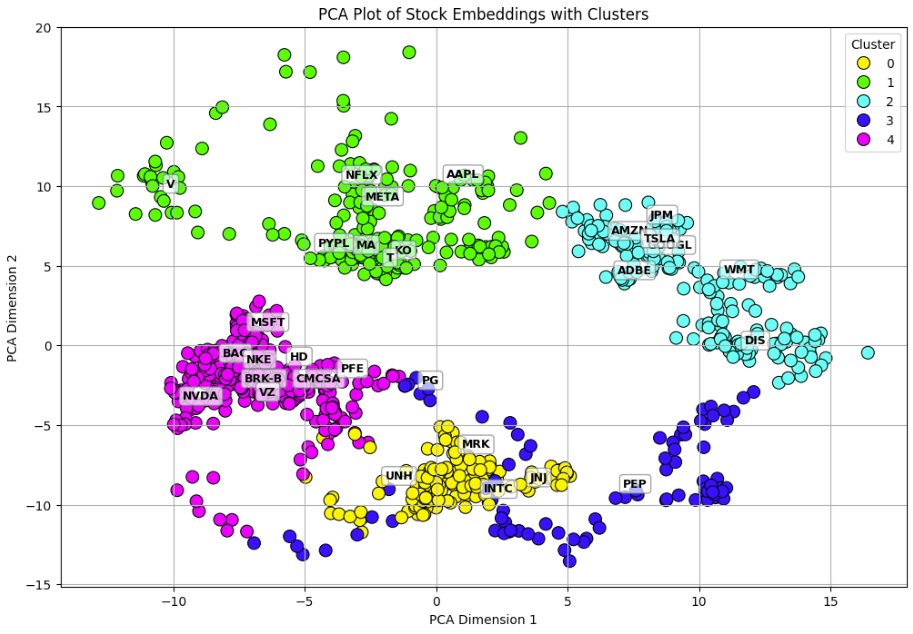

@quantscience_ Great thread! There’s also clustering for pricing illiquid bonds

On this point, an under-appreciated paper related to this work is the "Deep Regression Ensembles" paper by Didisheim, Kelly, and Malamud.

Link: arxiv.org/abs/2203.05417

Financial markets are complex, and linear approximations of input variables to asset returns fail to capture this complexity. Predictability can be improved by examining large-scale interactions among predictive variables, even when fitting with a linear model—just don’t forget to regularize!

Clifford Asness@CliffordAsness

From my colleagues: “Can Machines Build Better Stock Portfolios?” aqr.com/Insights/Resea…
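
A minimal sketch of the idea in the post above: expand the predictors with pairwise interaction terms, then fit a linear model with ridge regularization. This is not the method of the linked AQR article. The data is synthetic, `alpha=1.0` is an arbitrary choice, and the helper names `add_pairwise_interactions` and `ridge_fit` are hypothetical.

```python
from itertools import combinations

import numpy as np

def add_pairwise_interactions(X):
    """Append the product x_i * x_j for every pair of columns i < j."""
    pairs = list(combinations(range(X.shape[1]), 2))
    inter = np.column_stack([X[:, i] * X[:, j] for i, j in pairs])
    return np.hstack([X, inter])

def ridge_fit(X, y, alpha):
    """Closed-form ridge: solve (X'X + alpha * I) beta = X'y."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ y)

# Synthetic target (an assumption): one linear term, one interaction, noise.
rng = np.random.default_rng(2)
X = rng.normal(size=(400, 5))
y = 0.5 * X[:, 0] + 1.0 * X[:, 1] * X[:, 2] + 0.1 * rng.normal(size=400)

Z = add_pairwise_interactions(X)   # 5 original columns + 10 interactions
beta = ridge_fit(Z, y, alpha=1.0)

# Column 9 of Z holds x_1 * x_2 (the fifth pair generated by combinations).
print("expanded shape:", Z.shape)
print("coefficient on x_0:", round(float(beta[0]), 2))
print("coefficient on x_1 * x_2:", round(float(beta[9]), 2))
```

The number of interaction columns grows quadratically with the number of predictors, which is why the penalty matters; in practice `alpha` would be chosen by cross-validation rather than fixed by hand.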

For those looking to get started in #FinancialNLP, the Financial Phrasebank dataset on Huggingface is a great place to start playing with LLMs!

huggingface.co/datasets/takal…

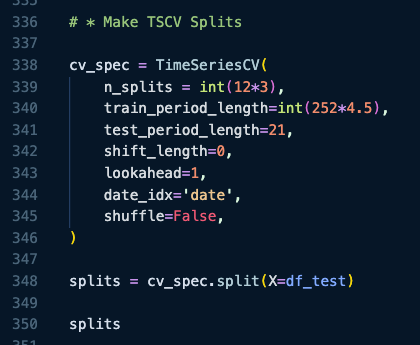

@quantscience_ Correct! ML usually comes with more risks 😂 Proper cross-validation (CV) is a necessity.
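
The post does not say which cross-validation scheme it has in mind. One common choice for time-ordered financial data is a walk-forward (expanding window) split, where the model is always trained on observations that precede the test window. The sketch below assumes that scheme; the function name `walk_forward_splits` and the `gap` parameter are hypothetical.

```python
def walk_forward_splits(n_samples, n_folds, gap=0):
    """Yield (train_idx, test_idx) pairs where every training index
    comes strictly before every test index.

    gap: number of observations skipped between train and test,
    e.g. to reduce leakage from overlapping return windows.
    """
    fold_size = n_samples // (n_folds + 1)
    for k in range(1, n_folds + 1):
        train_end = k * fold_size
        test_start = train_end + gap
        test_end = min(test_start + fold_size, n_samples)
        if test_start >= test_end:
            break
        yield list(range(train_end)), list(range(test_start, test_end))

splits = list(walk_forward_splits(n_samples=100, n_folds=4, gap=2))
for train_idx, test_idx in splits:
    print(f"train 0..{train_idx[-1]}  test {test_idx[0]}..{test_idx[-1]}")
```

A shuffled k-fold split would let future observations leak into training, which is the kind of risk the post alludes to.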

@pyquantnews I always tell beginners... start with a project, not syntax!

When using LLMs in financial backtesting, a common question is: "Doesn't ChatGPT have forward-looking bias?" 🤔

Paul Glasserman and Caden Lin dive into this in their paper "Assessing Look-Ahead Bias in Stock Return Predictions Generated by GPT Sentiment Analysis."

Their study backtests ChatGPT-based sentiment trading strategies, comparing portfolios with and without anonymized headlines (masking company identifiers). Interestingly, portfolios using anonymized headlines outperform those with original headlines. Why? 🧠

Their findings suggest that including company names creates a “distraction effect,” where the model fixates on names rather than sentiment—a stronger effect than any look-ahead bias! 📉

This work is critical for advancing GPT models in trading and portfolio construction. I’ve observed similar results in my own research too.

Paper: pm-research.com/content/iijjfd…
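
To make the idea of anonymized headlines concrete, here is a minimal sketch of masking company identifiers before a headline is sent to a sentiment model. The post does not describe the paper's actual masking procedure, so the approach below (a supplied list of names and tickers, replaced by a neutral placeholder) is an assumption, and `anonymize_headline` is a hypothetical helper.

```python
import re

def anonymize_headline(headline, identifiers, placeholder="the company"):
    """Replace each known company identifier with a neutral placeholder."""
    # Longest identifiers first so "Apple Inc." is matched before "Apple".
    for name in sorted(identifiers, key=len, reverse=True):
        # Lookarounds keep the match from firing inside a longer word.
        pattern = r"(?<!\w)" + re.escape(name) + r"(?!\w)"
        headline = re.sub(pattern, placeholder, headline, flags=re.IGNORECASE)
    return headline

headline = "Apple Inc. beats earnings estimates as AAPL shares rally"
masked = anonymize_headline(headline, ["Apple Inc.", "Apple", "AAPL"])
print(masked)
# the company beats earnings estimates as the company shares rally
```

A backtest in this spirit would score both `headline` and `masked` with the same model and compare the resulting portfolios.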

Paper link: papers.ssrn.com/sol3/papers.cf…

Associated repo: github.com/francescoafabo…

These @notebooklm_pods podcasts are crazy! @ProfJimLiew just sent me a podcast he generated based on my paper, "Cut the Chit-Chat". A bit too much embellishment but otherwise amazing!

6/ Follow me here for more insights into cutting-edge applications of LLMs for Finance, and stay tuned for tools, research, and practical tips!

#GenAI #Finance #LLMs #Python #SentimentAnalysis

5/ 💻 To make Logit Extraction accessible, I've developed a Python package, TokenProbs, now available on GitHub and PyPI.

PyPI: pypi.org/project/TokenP…

GitHub: github.com/francescoafabo…
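
The post names the technique but does not spell it out. Reading "Logit Extraction" as taking the model's next-token logits for a fixed set of label tokens and normalizing them into class probabilities, a minimal sketch looks like the following. This is not the TokenProbs API; the function `label_probabilities` and the logit values are hypothetical.

```python
import math

def label_probabilities(label_logits):
    """Softmax restricted to the candidate label tokens."""
    # Subtract the max logit for numerical stability.
    max_logit = max(label_logits.values())
    exps = {label: math.exp(v - max_logit) for label, v in label_logits.items()}
    total = sum(exps.values())
    return {label: e / total for label, e in exps.items()}

# Hypothetical next-token logits for three sentiment labels.
logits = {"positive": 2.0, "neutral": 0.5, "negative": -1.0}
probs = label_probabilities(logits)
for label, p in probs.items():
    print(f"{label}: {p:.3f}")
```

Under this reading the model never has to generate free text: the label distribution is read directly from the scores it assigns to the label tokens.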
