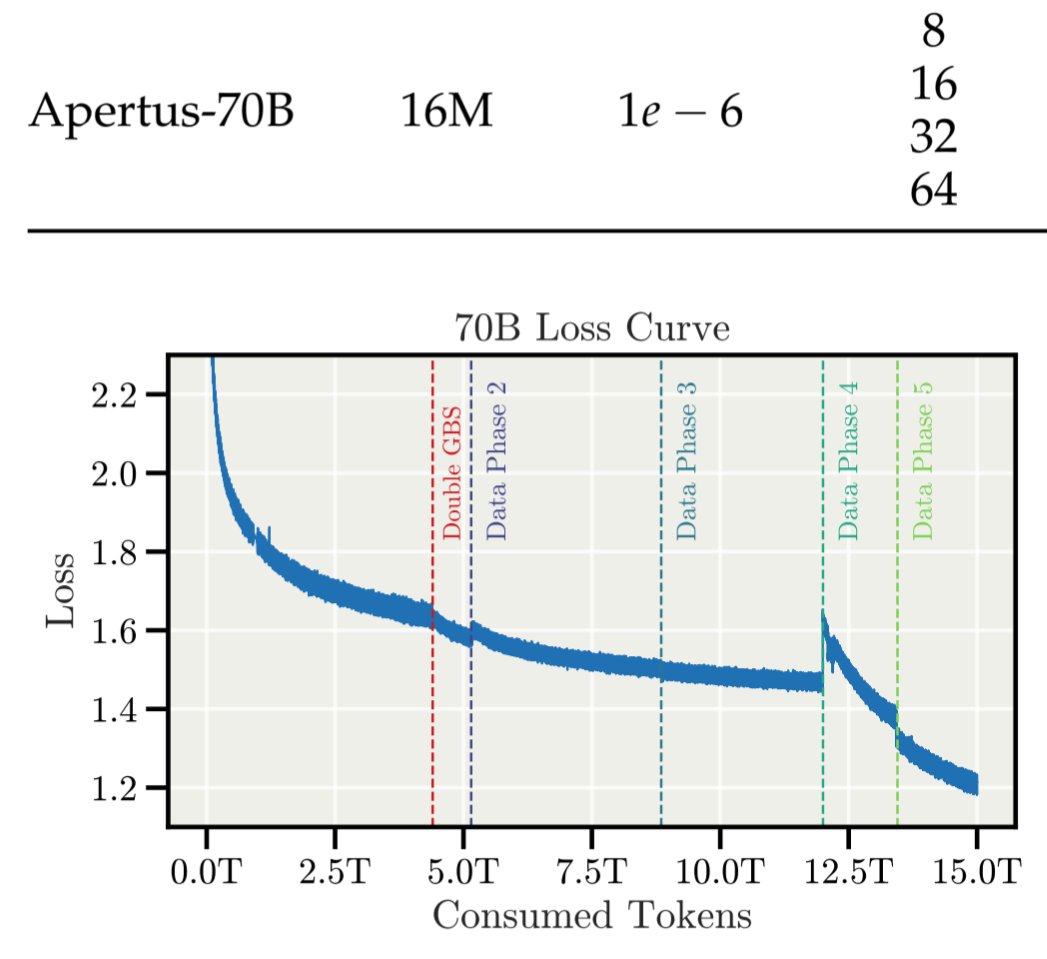

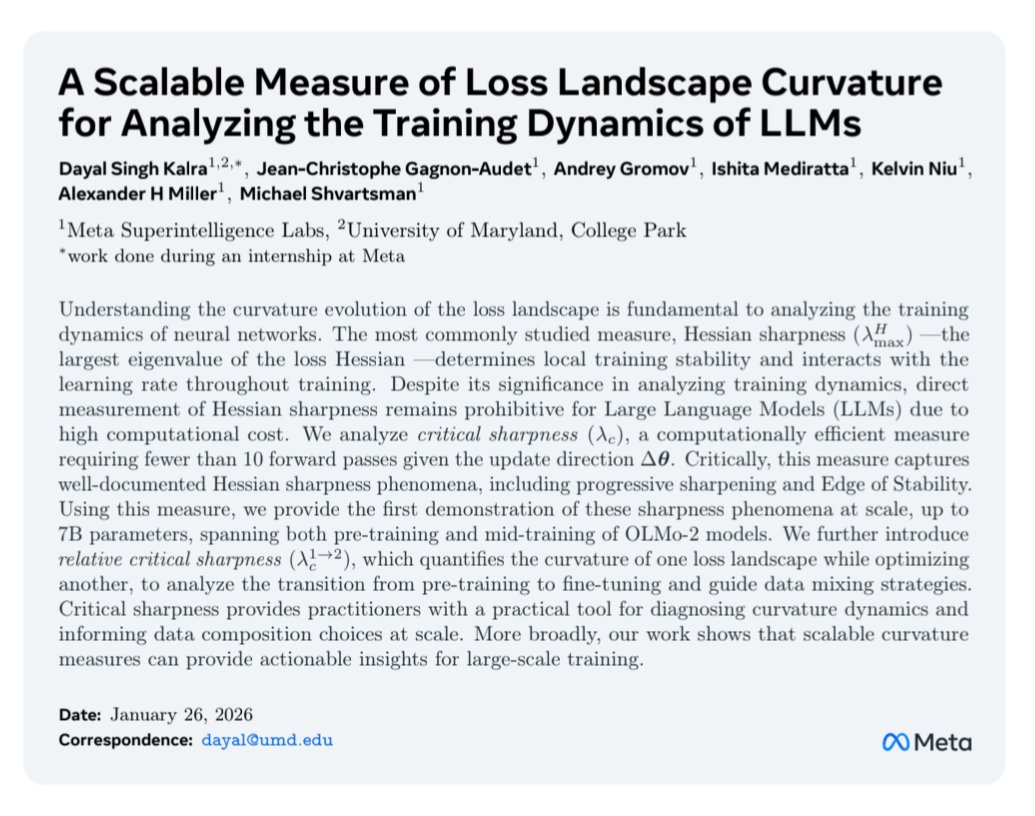

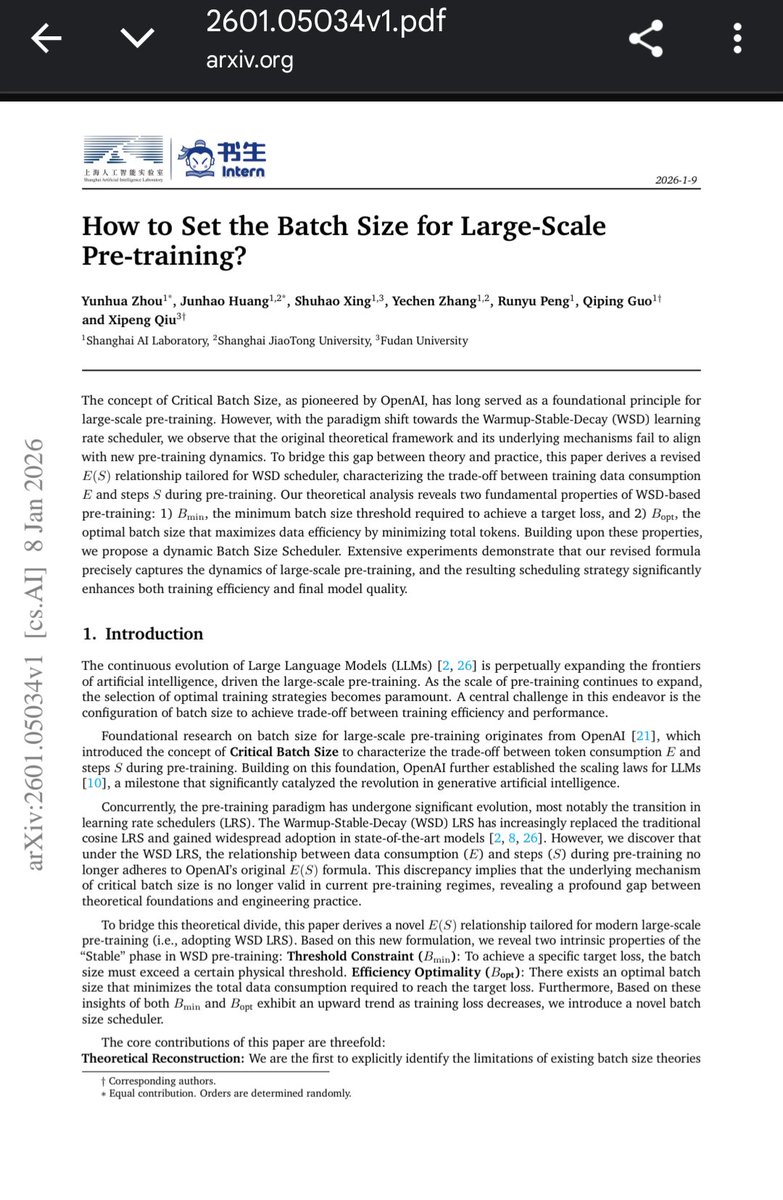

The sudden loss drop when annealing the learning rate at the end of a WSD (warmup-stable-decay) schedule can be explained without relying on non-convexity or even smoothness: a new paper shows that it can be precisely predicted by theory in the convex, non-smooth setting! 1/2
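For intuition, here is a minimal toy sketch. It is not the paper's analysis or its bound. It runs subgradient descent on the convex, non-smooth function f(x) = |x| under a WSD schedule. The helper names `wsd_lr` and `run_subgradient`, the linear warmup and linear cooldown shapes, and the 5% warmup / 20% cooldown fractions are all illustrative assumptions. With a constant step size η, the iterate ends up bouncing around the minimizer, and its loss averages η/2. Once the cooldown shrinks the step size, the loss quickly falls to the order of the final step size, which is a drop at the end of training.

```python
def wsd_lr(step, total_steps, peak_lr, warmup_frac=0.05, cooldown_frac=0.2):
    """Hypothetical WSD schedule (assumed shape): linear warmup,
    constant plateau, then linear decay towards zero."""
    warmup_steps = int(warmup_frac * total_steps)
    cooldown_steps = int(cooldown_frac * total_steps)
    decay_start = total_steps - cooldown_steps
    if warmup_steps > 0 and step < warmup_steps:
        # Linear ramp up to peak_lr over the warmup phase.
        return peak_lr * (step + 1) / warmup_steps
    if step < decay_start:
        # "Stable" phase: constant learning rate.
        return peak_lr
    # Cooldown: linear decay from peak_lr; reaches peak_lr / cooldown_steps at the last step.
    return peak_lr * (total_steps - step) / cooldown_steps


def run_subgradient(total_steps=1000, peak_lr=0.1, x0=1.0):
    """Subgradient descent on f(x) = |x| (convex, non-smooth).
    Returns the loss after each update."""
    x = x0
    losses = []
    for t in range(total_steps):
        g = 1.0 if x >= 0 else -1.0  # a valid subgradient of |x| (uses +1 at x = 0)
        x -= wsd_lr(t, total_steps, peak_lr) * g
        losses.append(abs(x))
    return losses


if __name__ == "__main__":
    losses = run_subgradient()
    # Average loss late in the constant phase (steps 700-799) vs. the final loss.
    plateau = sum(losses[700:800]) / 100
    print(f"plateau avg loss: {plateau:.4f}, final loss: {losses[-1]:.6f}")
```

In this toy, the plateau loss stays near `peak_lr / 2` no matter how long the constant phase lasts. The cooldown phase alone removes that floor, which matches the qualitative shape described above.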