F. Schwab

@FSchwab6

Nothing in Media Psychology Makes Sense Except in the Light of Evolution

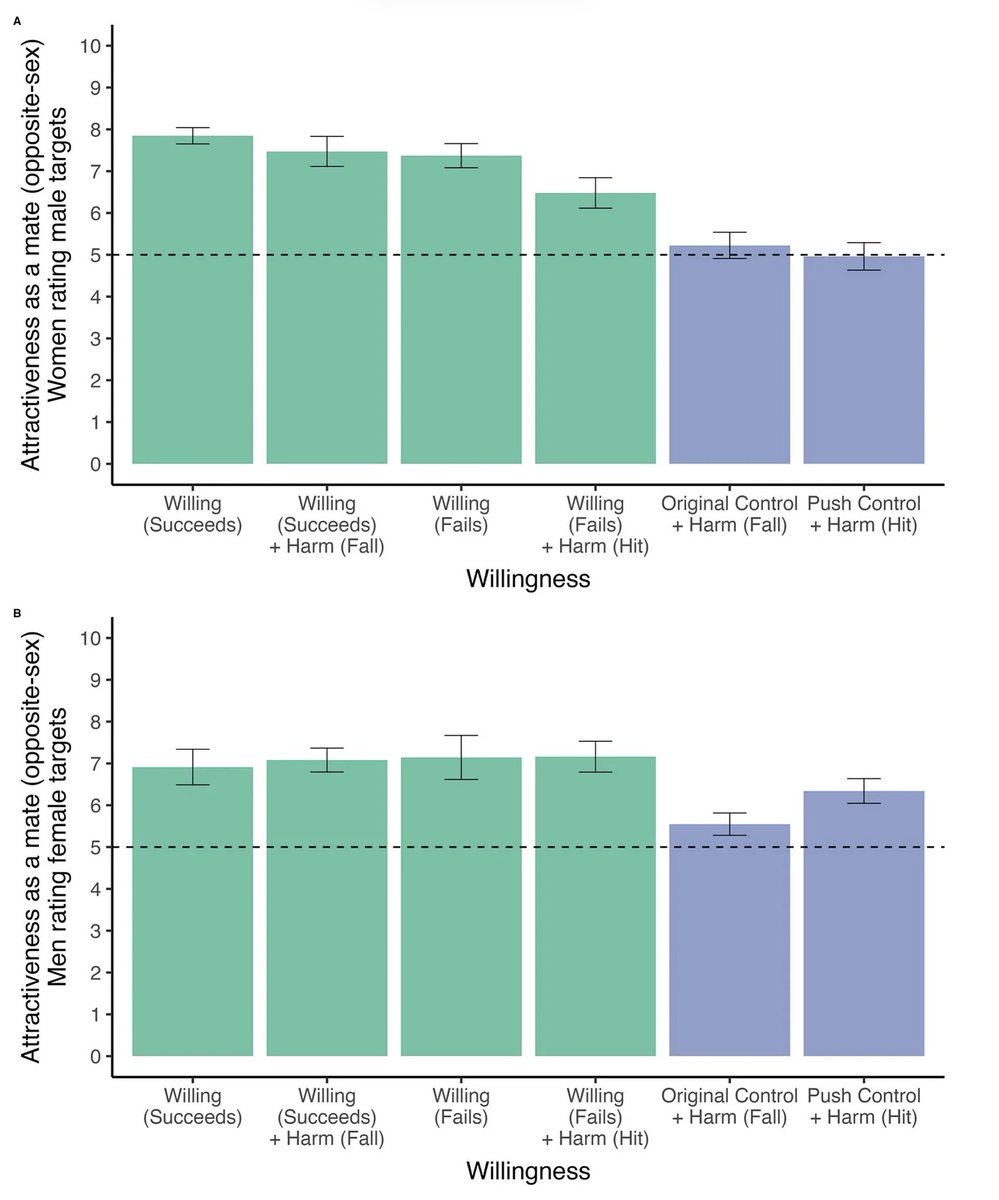

Why do social norms work differently when we interact with AI? In a new paper (N = 1,108), we found that people are less sensitive to prosocial norms when interacting with AI because they are less accurate at predicting AI behavior. Framing AI as intentional & goal-directed reduces these prediction errors and eliminates the cooperation gap between AI and human partners.

👉 Participants were less sensitive to social norms when interacting with AI than when interacting with human partners.

👉 Participants were systematically worse at predicting AI behavior.

👉 This was not a learning problem. Participants updated expectations equally well for AI and humans; the difference arose in initial calibration.

👉 When AI was framed as intentional and goal-directed, participants predicted AI behavior more accurately and the cooperation gap was reduced.

💡 Implications

👉 Designing AI that is behaviorally legible may be more important than making AI appear warmer or more human-like.

📑 Read the full working paper: osf.io/8fhwg/

This was led by @laura_k_globig along with @NadyaHanaveriesa & @sydneymlevine

With the exception of men and women with a BA, and women with a graduate degree, the majority of Americans agree that "artificial intelligence will soon replace educators." These data come from the American Political Perspectives Survey (APPS), collected from August 3, 2025, to September 26, 2025, with 3,000 English-speaking American adults. All respondents needed to pass (1) attention checks, (2) a duplication check, (3) time-to-completion checks, (4) fraud checks, and (5) bot-identification checks. For more information, see: research.skeptic.com/american-polit…