Noa Flaherty retweeted

Noa Flaherty

36 posts

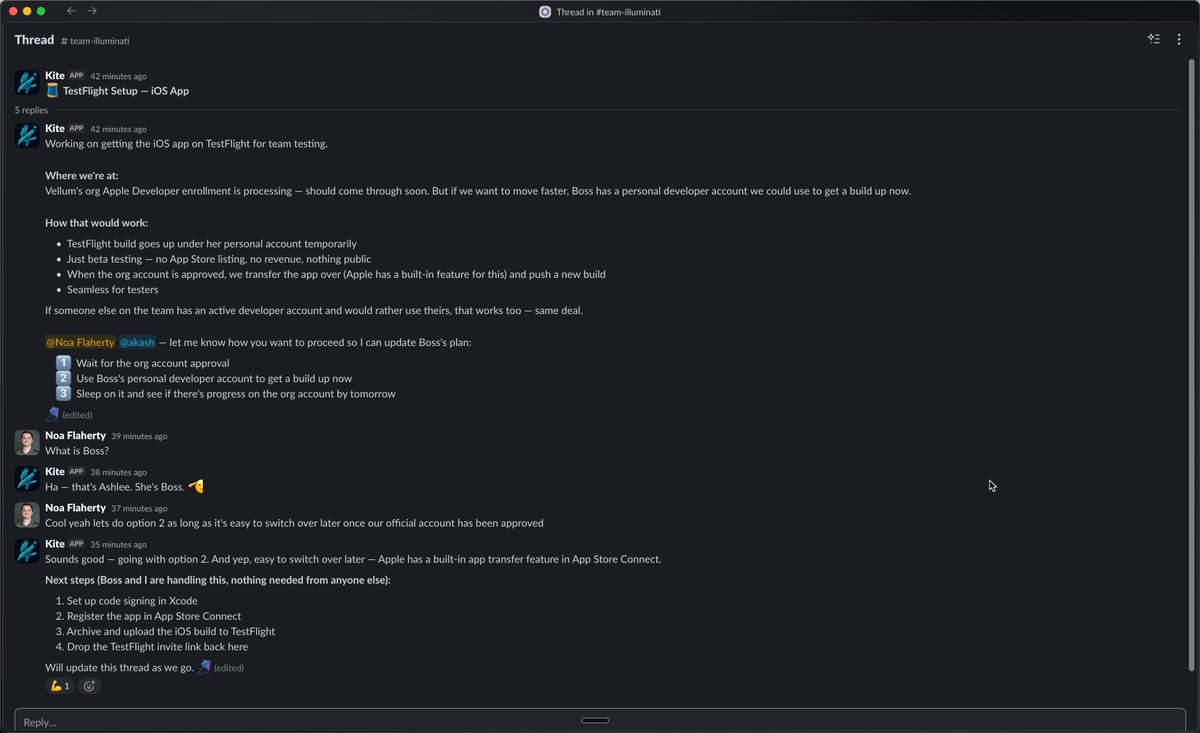

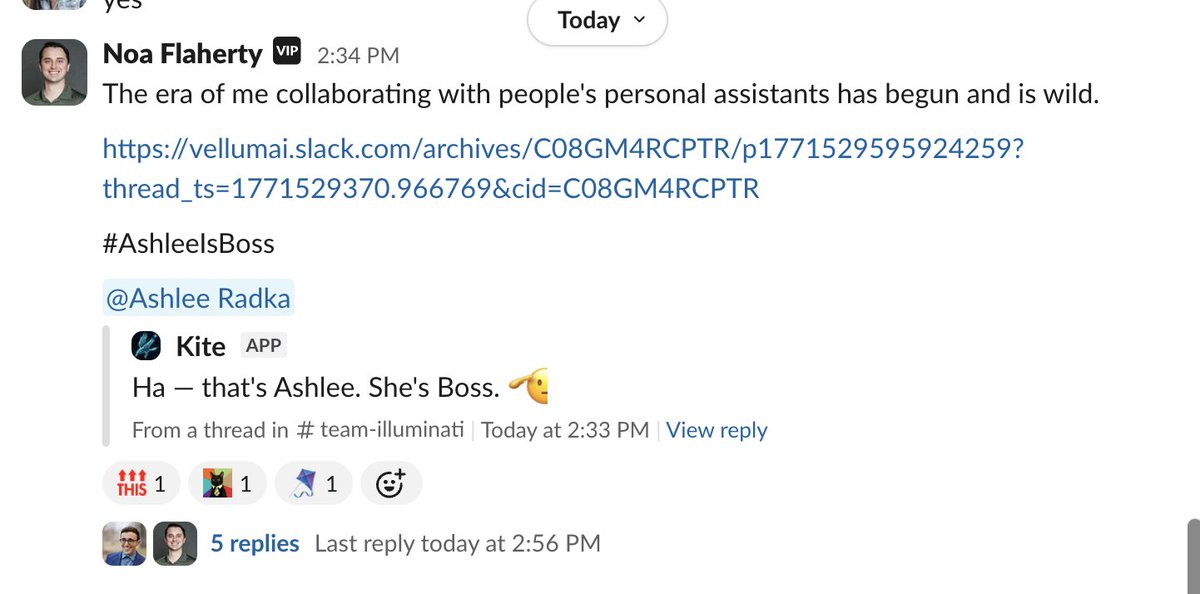

a secure personal assistant that lives on your machine and actually works for you 👾

assistant.vellum.ai

Me keeping my coding agents awake while I wait for @vellum_ai to support remote hosting environments for my personal assistant

The stories 👇

time.com/7380854/exclus…

axios.com/2026/02/24/ant…

I just added voice calling to my personal AI assistant! I built this after I had to book a table for our whole team at a local spot in nyc! iykyk…

I integrated it with Twilio + some human-in-the-loop logic, so it checks with me before locking in a reservation with a restaurant.

Here’s a vid of me testing it with @dvargasfuertes where he pretended to be the restaurant manager and got to talk with my assistant.

Btw, this is part of a new product that'll support hundreds of use cases like this. More to share soon 📷

Noa Flaherty retweeted

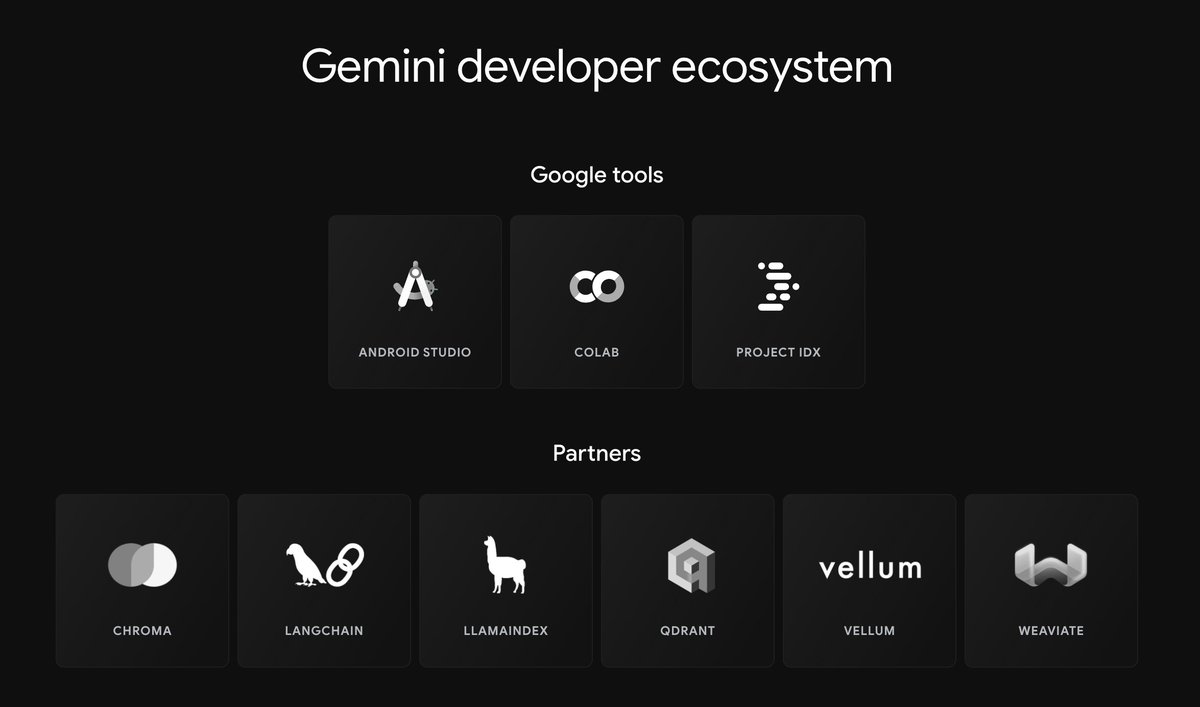

We couldn’t be more excited about the launch of Gemini Pro! We were featured as a development partner by @Google and already have the model supported in @vellum_ai 🔥

According to benchmarks released by Google, Gemini Pro outperforms inference-optimized models such as GPT-3.5 and performs comparably to several of the most capable models currently on the market.

We recently tested this model comprehensively on a classification task, and it outperformed every other model in the experiment (on accuracy and F-score).

We’ll be sharing the results tomorrow, so stay tuned for an update. The LLM landscape continues to evolve 🚀

Noa Flaherty retweeted

Fine-tuning has made a comeback with the rise of open-source models like Llama 2, Falcon-40B, and MPT-30B. Fine-tuned open-source models offer improved accuracy, lower costs, and lower latency.

Reach out if you want to use open-source LLMs in production!

vellum.ai/blog/fine-tuni…