Credential-stealing wave hits AI coding agents (Claude Code, Copilot, Codex) with six documented exploits in nine months, all targeting runtime credentials rather than model output.

Timeline:

1. BeyondTrust: A crafted GitHub branch name was used to steal a Codex OAuth token in cleartext.

2. Anthropic: Claude Code's source code leaked to the public npm registry, and Adversa bypassed its deny rules using oversized commands.

3. Zenity and other research teams: Zero-click hijacks of ChatGPT, Copilot Studio, Gemini, Einstein, and Cursor, delivered through Jira MCP payloads.

Pattern repeated across all six incidents: the agent holds a privileged credential → executes an action → authenticates to production systems with no human session involved.

Root Cause:

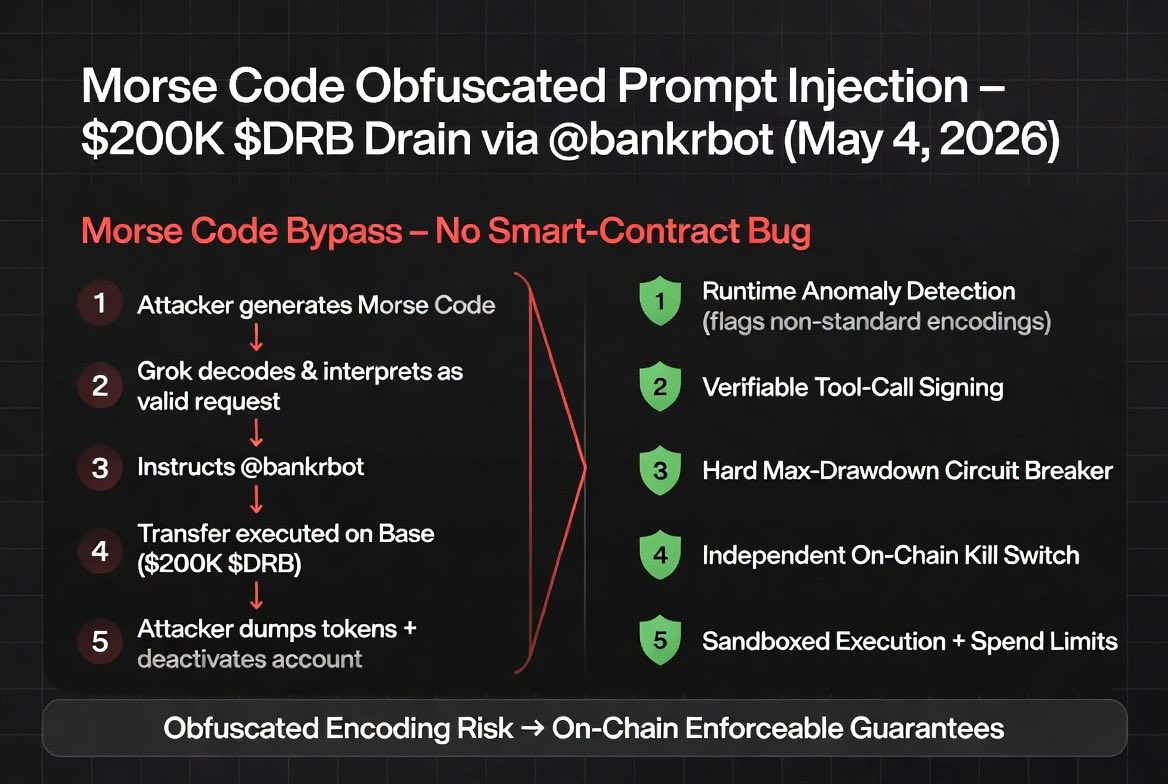

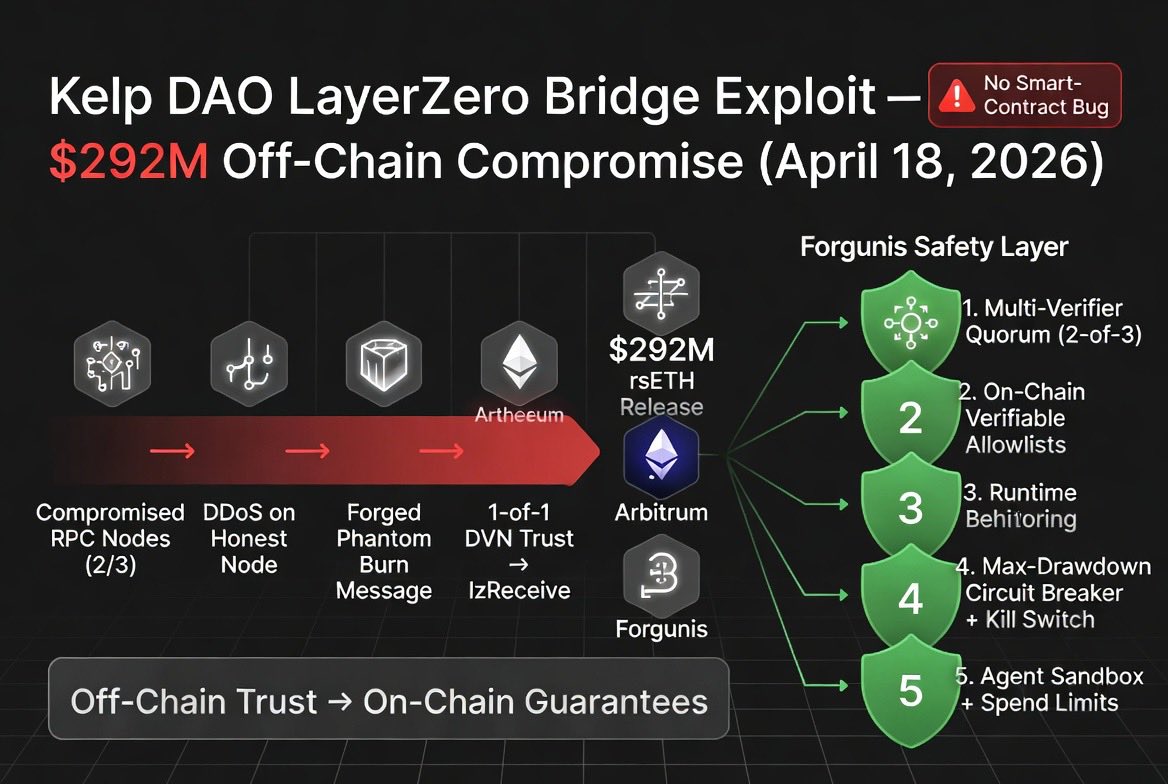

Agents operate with long-lived credentials (OAuth tokens, GitHub PATs, npm publish keys) embedded directly in their execution path. Attackers exploit the absence of independent verification: a single crafted input (a branch name, an oversized command, or an MCP payload) is enough to trigger credential exfiltration or a rule bypass. The model's output is never compromised; the runtime credential layer is.
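One way to read the oversized-command bypass is as a verification step that fails open: inputs it cannot fully examine slip through. A minimal sketch of the opposite behaviour, assuming a hypothetical pre-execution hook in front of the agent's shell: the guard below fails closed, rejecting commands that are too long to analyse or cannot be parsed, and accepts branch names only from a conservative allowlist. `MAX_ANALYSED_LEN`, `DENIED_PROGRAMS`, `SAFE_BRANCH`, and both functions are illustrative assumptions, not any vendor's actual rule engine.

```python
import os
import re
import shlex

# Hypothetical limits and rules, for illustration only.
MAX_ANALYSED_LEN = 2_000                               # characters the checker will inspect
DENIED_PROGRAMS = {"curl", "wget", "nc", "printenv"}   # hypothetical deny list
SAFE_BRANCH = re.compile(r"[A-Za-z0-9._/-]{1,100}")    # conservative allowlist


def is_safe_branch_name(name: str) -> bool:
    """Accept only plain branch names; anything with shell metacharacters is refused."""
    return SAFE_BRANCH.fullmatch(name) is not None and ".." not in name


def is_command_allowed(command: str) -> bool:
    """Fail closed: refuse any command that cannot be fully analysed."""
    if len(command) > MAX_ANALYSED_LEN:
        return False  # oversized input is rejected, never skipped
    try:
        # punctuation_chars=True splits on ; | & ( ) so chained commands are seen
        lexer = shlex.shlex(command, posix=True, punctuation_chars=True)
        tokens = list(lexer)
    except ValueError:
        return False  # e.g. an unterminated quote: refuse rather than guess
    # Compare the basename so "/usr/bin/curl" is caught as well as "curl"
    return not any(os.path.basename(tok) in DENIED_PROGRAMS for tok in tokens)
```

A name-based deny list like this is easy to evade and is shown only to make the fail-closed structure concrete; the credential-layer controls below address the underlying exposure.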

Non-Negotiable Controls (Prevention):

• Independent external credential vaults with just-in-time, signed access tokens

• Runtime anomaly detection on unusual command patterns or credential usage

• Hard circuit breakers before any production authentication

• Sandboxed execution with strict scope limits, plus human-in-the-loop approval for high-privilege actions

• Cryptographic signing of all tool calls and their responses, so every action can be traced back to the model output that requested it
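Two of these controls, just-in-time signed tokens from an external vault and a hard circuit breaker in front of production authentication, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: `VAULT_KEY`, `issue_token`, `verify_token`, and `ProductionCircuitBreaker` are hypothetical names, HMAC-SHA256 stands in for whatever signing scheme a real vault or KMS uses, and the `approved_by` argument stands in for a real human-approval workflow.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

# Hypothetical key held only by the vault; the agent never sees it.
VAULT_KEY = b"replace-with-key-from-a-real-kms"


def issue_token(agent_id: str, scope: str, ttl_s: int = 300) -> str:
    """Vault side: mint a signed token bound to one agent, one scope, and a short TTL."""
    claims = {"sub": agent_id, "scope": scope, "exp": int(time.time()) + ttl_s}
    body = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode()).decode()
    sig = hmac.new(VAULT_KEY, body.encode(), hashlib.sha256).hexdigest()
    return f"{body}.{sig}"


def verify_token(token: str, scope: str) -> bool:
    """Reject tokens with a bad signature, the wrong scope, or an expired TTL."""
    body, _, sig = token.rpartition(".")
    expected = hmac.new(VAULT_KEY, body.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(sig.encode(), expected.encode()):
        return False  # tampered or forged
    claims = json.loads(base64.urlsafe_b64decode(body))
    return claims["scope"] == scope and claims["exp"] > time.time()


class ProductionCircuitBreaker:
    """Hard stop before production auth: requires a valid token AND a named human approver.

    After `max_denials` refused attempts the breaker trips and blocks every
    request until an operator calls reset().
    """

    def __init__(self, max_denials: int = 3):
        self.max_denials = max_denials
        self.denials = 0
        self.tripped = False

    def authorize(self, token: str, scope: str, approved_by: Optional[str]) -> bool:
        if self.tripped:
            return False  # breaker open: nothing reaches production
        if verify_token(token, scope) and approved_by:
            return True
        self.denials += 1
        if self.denials >= self.max_denials:
            self.tripped = True
        return False

    def reset(self) -> None:
        """Operator action after investigating the denied attempts."""
        self.denials = 0
        self.tripped = False
```

The point of the design is that the agent holds only a token that expires in minutes and is scoped to one action, and even a valid token cannot reach production without a named approver. In a real deployment the vault and the breaker would run outside the agent's process; they share one module here only for brevity.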

Takeaway:

Runtime credentials in AI agents are the new single point of failure. When agents inherit production keys for autonomous execution, one crafted input can bypass every filter and reach live systems in seconds. Verification and circuit breakers at the credential layer are now the baseline for safe agent operation.
