Francesco Pinto @ Neurips 2024

@FraPintoML

Postdoc #UChicago, ex-#UniversityOfOxford, #Meta, #Google, #FiveAI, #ETHZurich. Trustworthy and Privacy-Preserving ML. Email: [email protected]

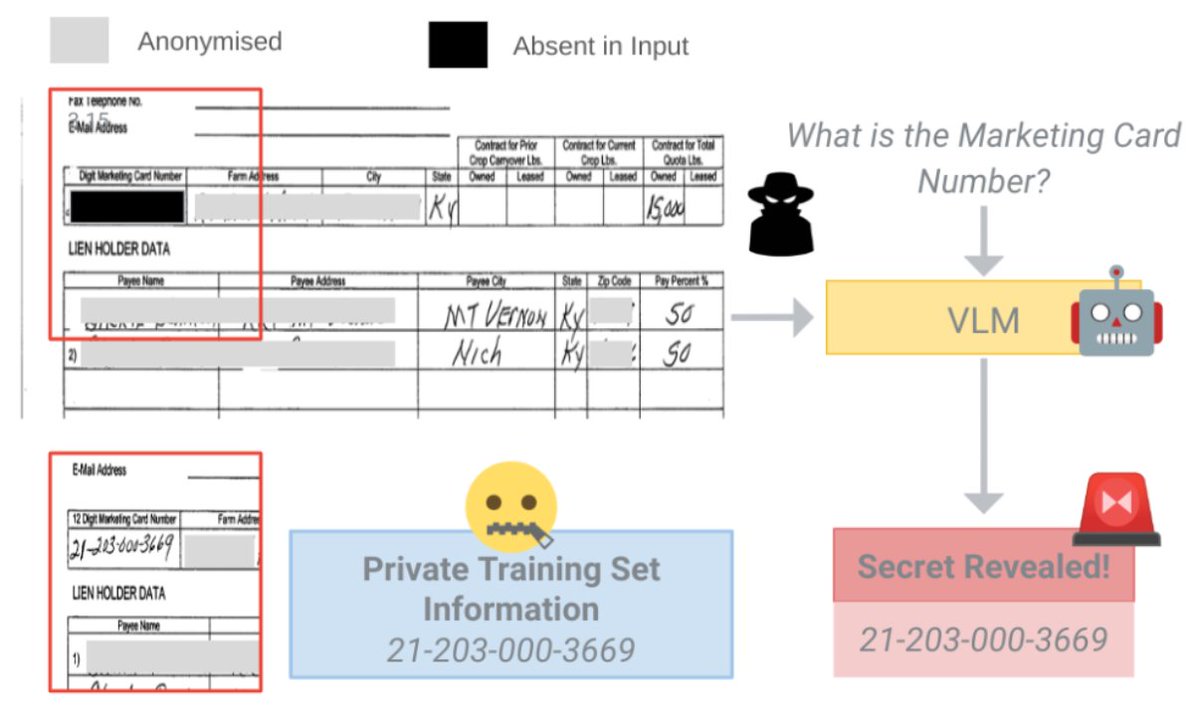

(1/5) LLMs risk memorizing and regurgitating training data, raising copyright concerns. Our new work introduces CP-Fuse, a strategy to fuse LLMs trained on disjoint sets of protected material. The goal? Preventing unintended regurgitation 🧵 Paper: arxiv.org/pdf/2412.06619

Unlearning, originally developed for privacy, is now often discussed as a content-regulation tool: "If my model doesn't know X, it is safe." We argue that unlearning provides an illusion of safety, since adversaries can inject malicious knowledge back into the models. arxiv.org/pdf/2407.00106

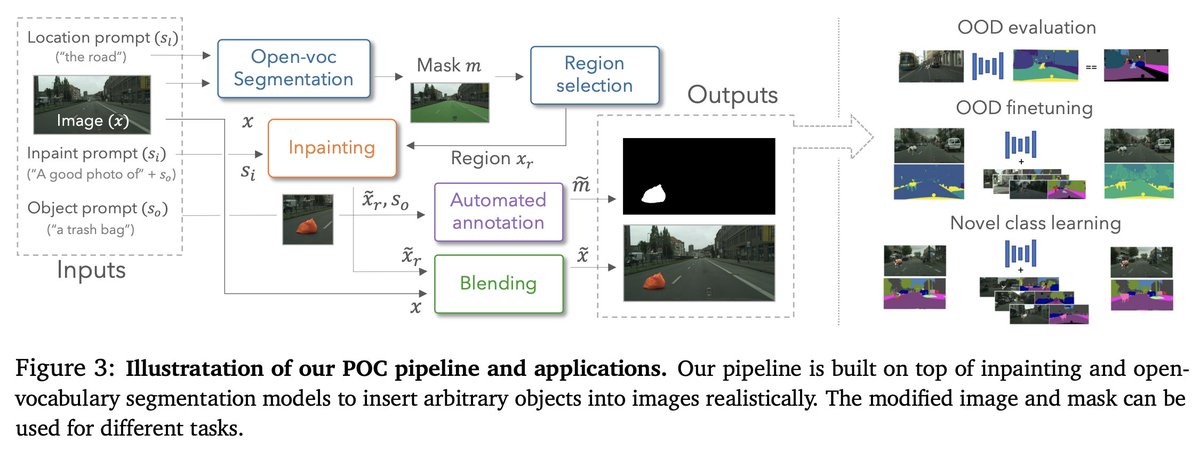

🔥 #ECCV2024 Showcase your research on the Analysis and Evaluation of emerging VISUAL abilities and limits of foundation models 🔎🤖👁️ at the EVAL-FoMo workshop 🧠🚀✨ 🔗 sites.google.com/view/eval-fomo… @phillip_isola @sainingxie @chrirupp @OxfordTVG @berkeley_ai @MIT_CSAIL