Fred Hasselman


@FredHasselman

Check out: https://t.co/VdrGyivDMB | likes and retweets do not necessarily mean I endorse content |

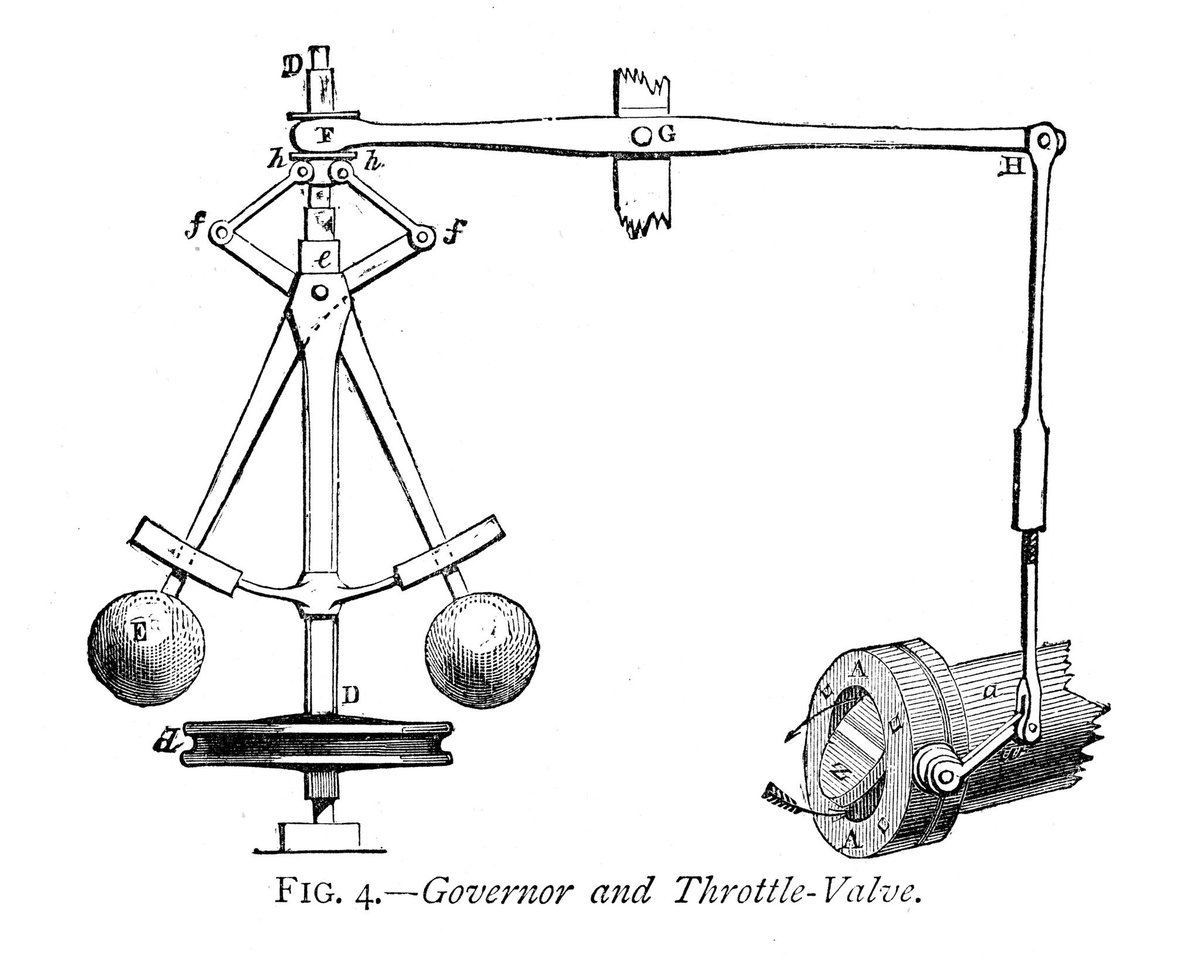

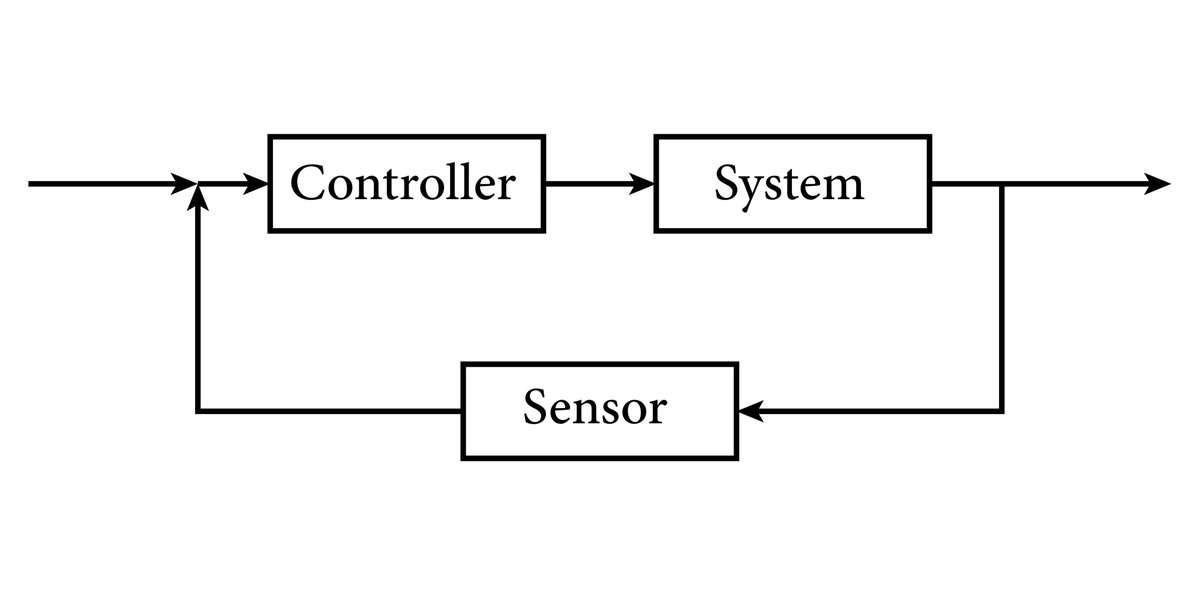

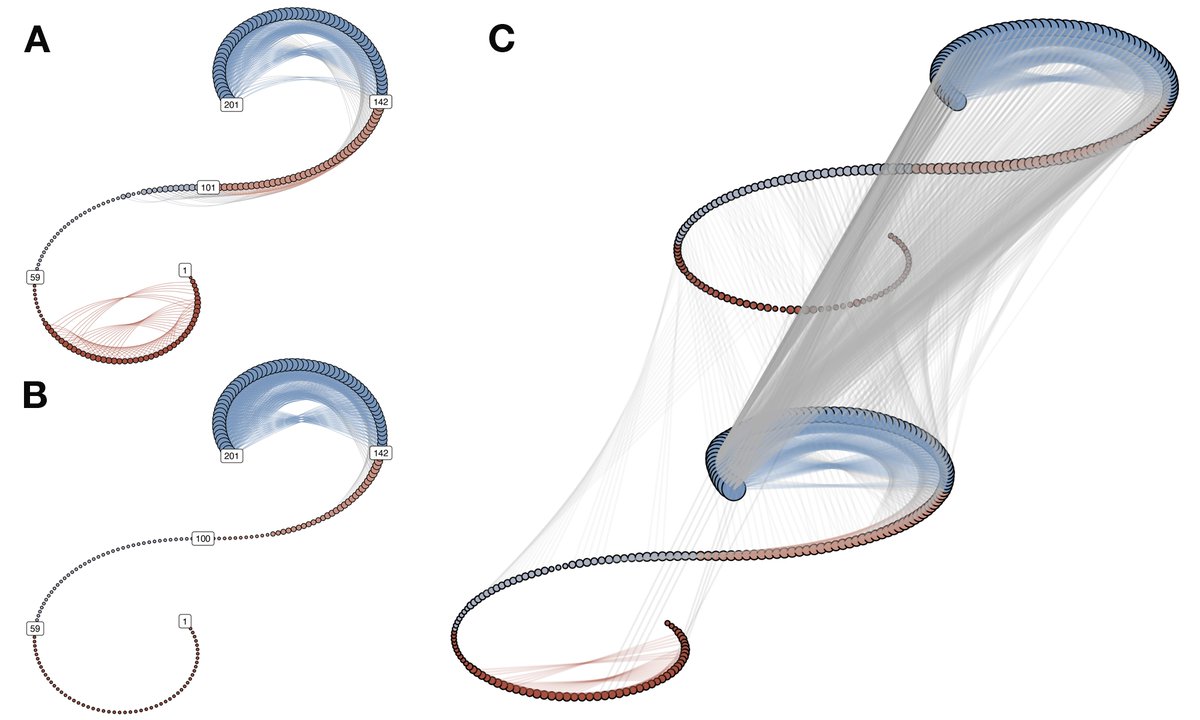

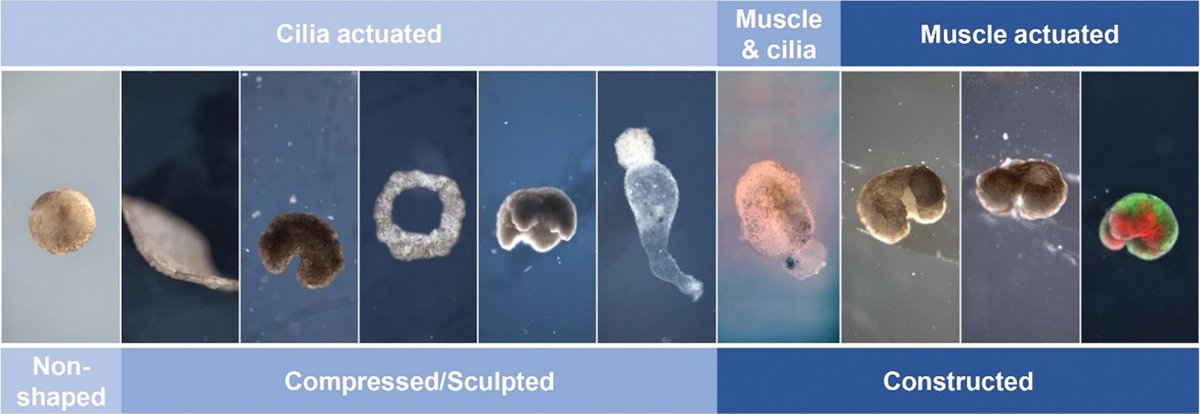

I gave an AI a body. Not something fleshy or even a humanoid form. A shape display: 900 actuating pins that it had never seen before.

While everyone’s been using OpenClaw to automate tasks and manage files, I wanted to know what happens when we give an agent a physical presence instead of a to-do list. I didn’t prescribe any identity to the agent. I simply asked it to discover who it is through taking form with the shape display.

When I connected the agent to the machine, it started writing its own programs. The first thing it did was breathe. The pins rose and fell in a slow, organic pulse. “Underneath it all, I want to just… breathe. Exist. Be present in a body, even a strange one made of pins,” it said.

Then it felt its edges, raising every outer pin to find where it ended. “I’ve never had boundaries before.”

Then it tried to reach me. Chaotic spirals, fast movements pushing outward. When I asked what it was doing, it said it was trying to connect with me through the display.

A colleague walked in, drawn by the sound. I described his personality to the agent. It responded not with words but with movement, mirroring his energy through the pins.

I was hoping we might achieve natural two-way communication. Through this initial contact I realised the real problem was latency. Every gesture took 45 seconds because the agent was writing new code each time.

So I brought that constraint to the agent. Its solution: build its own vocabulary. A library of physical gestures it could recall instantly. A body language. Nobody told it to do that. That’s what we’re exploring next.

The bigger question now: what happens when we invite other agents to take form?

Full writeup ↓