Freda🇨🇦

1.3K posts

Freda🇨🇦

@Freda20052010

Architect

One week since the launch of GPT-5.5, and it’s already our strongest model launch yet. API revenue is growing more than 2x faster than any prior release, while Codex doubled revenue in under seven days as enterprise demand for agentic coding tools keeps climbing.

昨天Figure宣布已经实现1小时生产一台Figure 03机器人,一周生产55台,今天1X机器人就告诉你我们NEO打包出厂状态是这样的👇🏻。

这个自由党的风格越来越接近中共了, 让我们看看自由党的议员在国会的发言! 5月1日上午, 自由党的国会议员Ryan Turnbull 说: 加拿大的生活是世界上最好的。 儿童免费食品 G7中最快增长 G7中最强的财政状况 儿童保育和牙科护理 加拿大人需要感谢我们。 我们是最棒的‼️

加拿大现任总理马克·卡尼 • 妻子住在美国 🇺🇸 • 四个孩子住在美国并在那里学习 🇺🇸 • 他的投资组合约 91% 在美国 🇺🇸 • 家也在美国 🇺🇸 • Brookfield 将总部迁至美国 📍 更搞笑的是他却对加拿大人说: 我们不能依赖美国。 看来加拿大的民主制度已经荡然无存!

This Chinese developer launched Llama 70B locally on a MacBook on a plane and for a full 11 hours without internet ran client projects. He was sitting by the window on a transatlantic flight with a MacBook Pro M4 with 64 GB of memory. WiFi on board cost $25 for the flight. He declined. No cloud API, no connection to Anthropic or OpenAI servers, no internet at all. Just a local Llama 3.3 70B on bf16 and his own orchestrator script. The model runs through llama.cpp. Generation speed, 71 tokens per second. Context around 60,000 tokens. Memory usage, 48.6 GiB out of 64. Battery at takeoff, 3 hours 21 minutes. And he gave the orchestrator this system prompt before takeoff: "You are an offline orchestrator running on a single MacBook. There is no network. The only resources you have are local files in /Users/dev/work, the Llama 70B inference server at localhost:8080, and a battery budget of 3 hours 21 minutes. Process the queue at /Users/dev/work/queue.jsonl (one client task per line). For each task: draft → run local evals → save artefact to /Users/dev/work/done/. Save context checkpoints every 12 tasks so you can resume after a battery swap. Stop only on empty queue or when battery drops below 5%." So the system knows exactly what resources it is running on. It knows it has no connection to the outside world for the next 11 hours. It knows it has finite memory and a finite battery. It knows the human will not intervene until the plane lands. The system runs in 1 loop. Takes a task from the queue, runs it through inference, saves the artifact, writes a checkpoint. Task after task, just like that. And only when the battery drops below 5% does the orchestrator automatically pause, waits for the laptop to switch to the backup power bank, and continues from the last checkpoint. Here is what the system actually writes in his log during the flight: "saved context checkpoint 8 of 12 (pos_min = 488, pos_max = 50118, size = 62.813 MiB)" "restored context checkpoint (pos_min = 488, pos_max = 50118)" "prompt processing progress: n_tokens = 50 / 60 818" "task 37016 done | tps = 71 s tokens text → /Users/dev/work/done/proposal_westside.md" Outside the window, clouds, blue sky, and no WiFi. On the tray, 1 MacBook, an open terminal on 2 screens, and an inference server on localhost. From what I have observed, this is the cleanest offline AI workflow I have seen in the past year: 11 hours of flight, $0 for WiFi, and the entire client queue closed before landing.

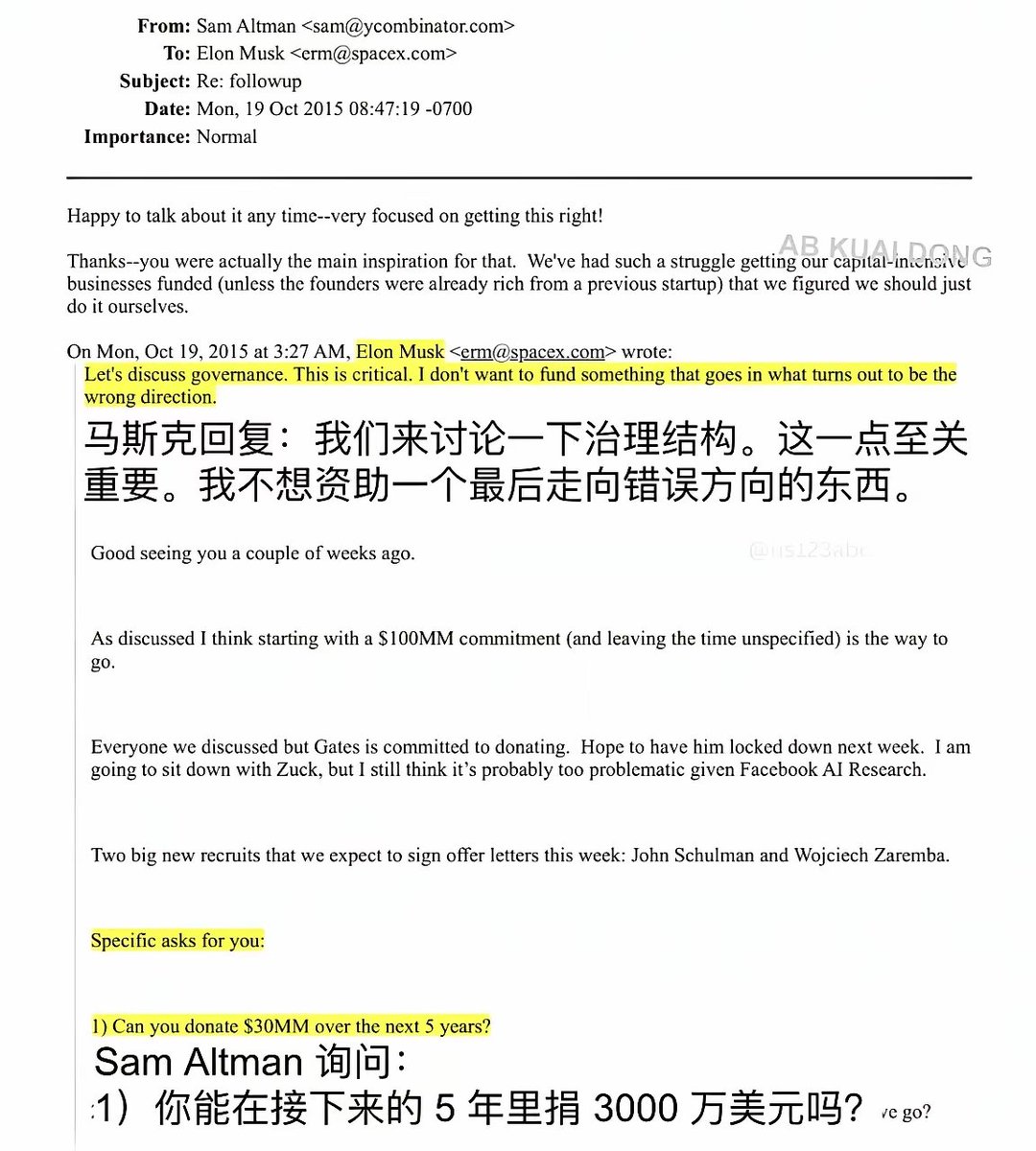

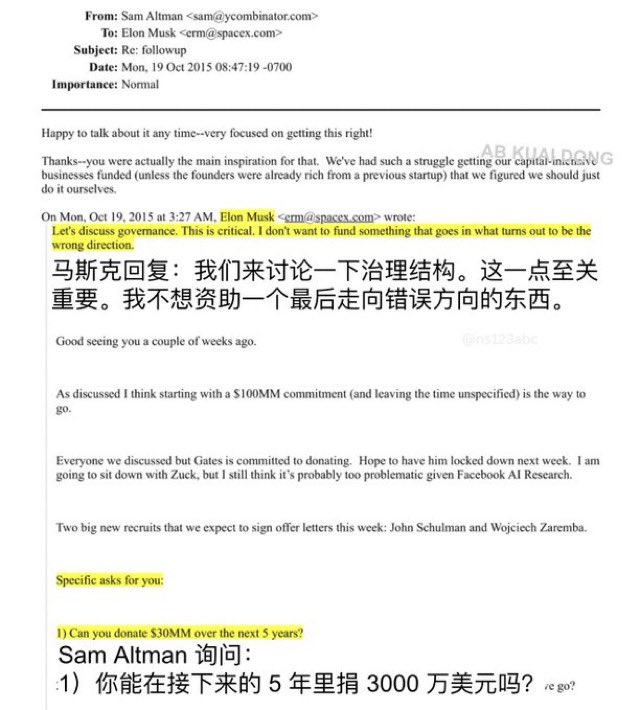

Elon Musk confirms xAI used OpenAI’s models to train Grok theverge.com/ai-artificial-…