What if you got up to $50,000 in inference credit—and ended up with a faster, more reliable model endpoint? 🧵👇

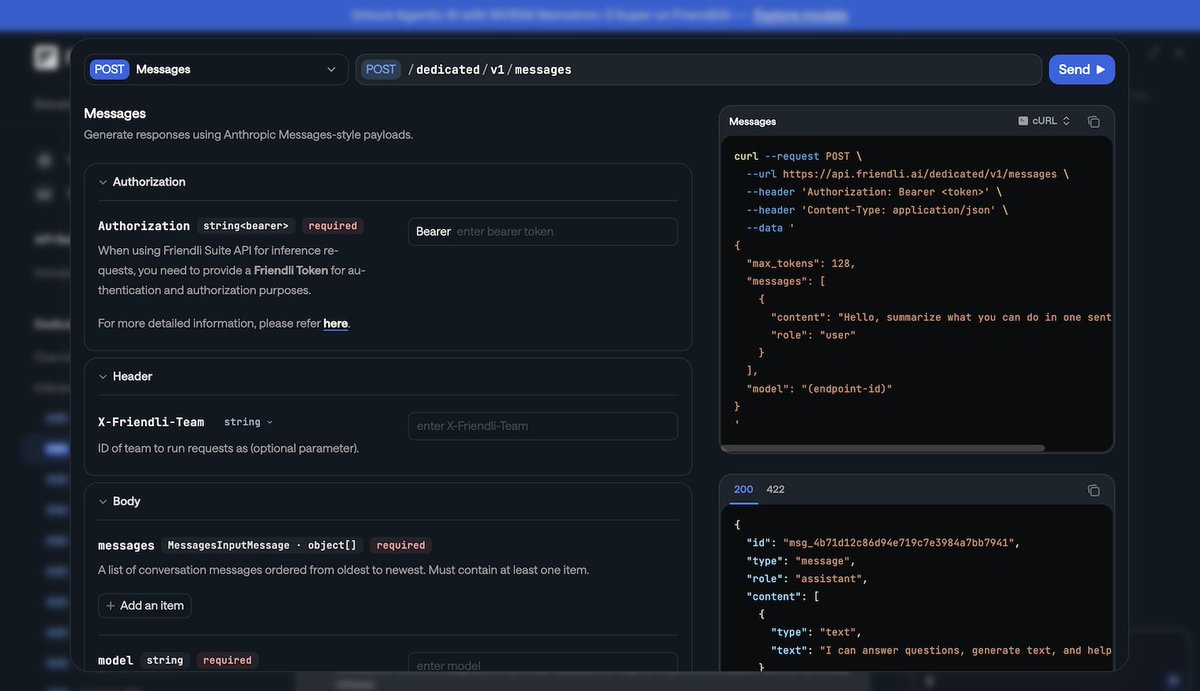

Today, we’re launching the FriendliAI "Switch" promotion. We’re so confident that Friendli’s ‘Orca’ Engine and frontier open models will outperform your current stack that we’re paying you to make the move.