FundaAI

@FundaAI

FundaAI provides AI Invest OS, including AI Agents, equity research reports, and research data. https://t.co/0xRZegn2ep

Review| $GOOG / $MSFT / $AMZN : CapEx Raised - 2026 Finally Becomes the Year of Cloud Acceleration

Q: Is 2026 the most pronounced year of CSP acceleration?
A: Unequivocally yes. GPU prices began rising in 1Q26, with CPU prices following from 2Q26. The incremental growth this year is also coming from new vectors that weren’t material before, namely coding APIs (AWS-Bedrock, GCP-Vertex, Azure-AI Foundry) and ASICs (AWS-Trainium, GCP-TPU).

Q: Will CapEx continue to be revised higher?
A: Yes. As we discussed in our Weekly Meeting, the LTAs (long-term agreements) signed between CSPs and the memory makers took effect from March–April. This means all forward CapEx must be marked to the LTA floor price, implying a structural step-up regardless of demand. Critically, LTAs lock in floors but do not cap upside, so any further spot price appreciation will likely drive additional CapEx revisions.

Q: Why did GCP and Azure come in fully in line with our expectations, while AWS missed? ......

Detailed Report fundaai.substack.com/p/review-goog-…
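The floor-without-cap mechanic in the second answer can be sketched in a few lines. This is an illustration only: it assumes the price a buyer is marked to is simply the higher of the LTA floor and the spot price, and every number in the usage section is a hypothetical placeholder, not a figure from the report.

```python
def marked_unit_price(lta_floor: float, spot: float) -> float:
    """Price used for forward planning under an LTA that sets a floor but no cap.

    Assumption (not from the report): the buyer pays the higher of floor and spot.
    """
    return max(lta_floor, spot)


def capex_delta(units: float, prior_price: float, lta_floor: float, spot: float) -> float:
    """Change in planned spend when a prior planning price is re-marked."""
    return units * (marked_unit_price(lta_floor, spot) - prior_price)


if __name__ == "__main__":
    # Hypothetical placeholder values, chosen only to show the two regimes.
    units, prior_price, floor = 1_000, 90.0, 100.0
    for spot in (80.0, 130.0):
        delta = capex_delta(units, prior_price, floor, spot)
        print(f"spot={spot:.0f} -> marked={marked_unit_price(floor, spot):.0f}, delta={delta:+.0f}")
```

When spot sits below the floor, planned spend still steps up to the floor, which is the "regardless of demand" case; when spot rises above the floor, the marked price follows spot, which is the source of further revisions.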

Preview|APP 1Q26: No META Impact Observed; E-Commerce Reaccelerating After 1Q26 QoQ Decline; Gaming Growth Robust

We recently spoke with seven AppLovin and Unity industry experts and gathered updates on several key topics:
- $APP (AppLovin) quarterly channel checks
- APP e-commerce progress
- META’s entry into the gaming ad market and its impact on AppLovin
- $U quarterly channel checks

Detailed Report fundaai.substack.com/p/previewapp-1…

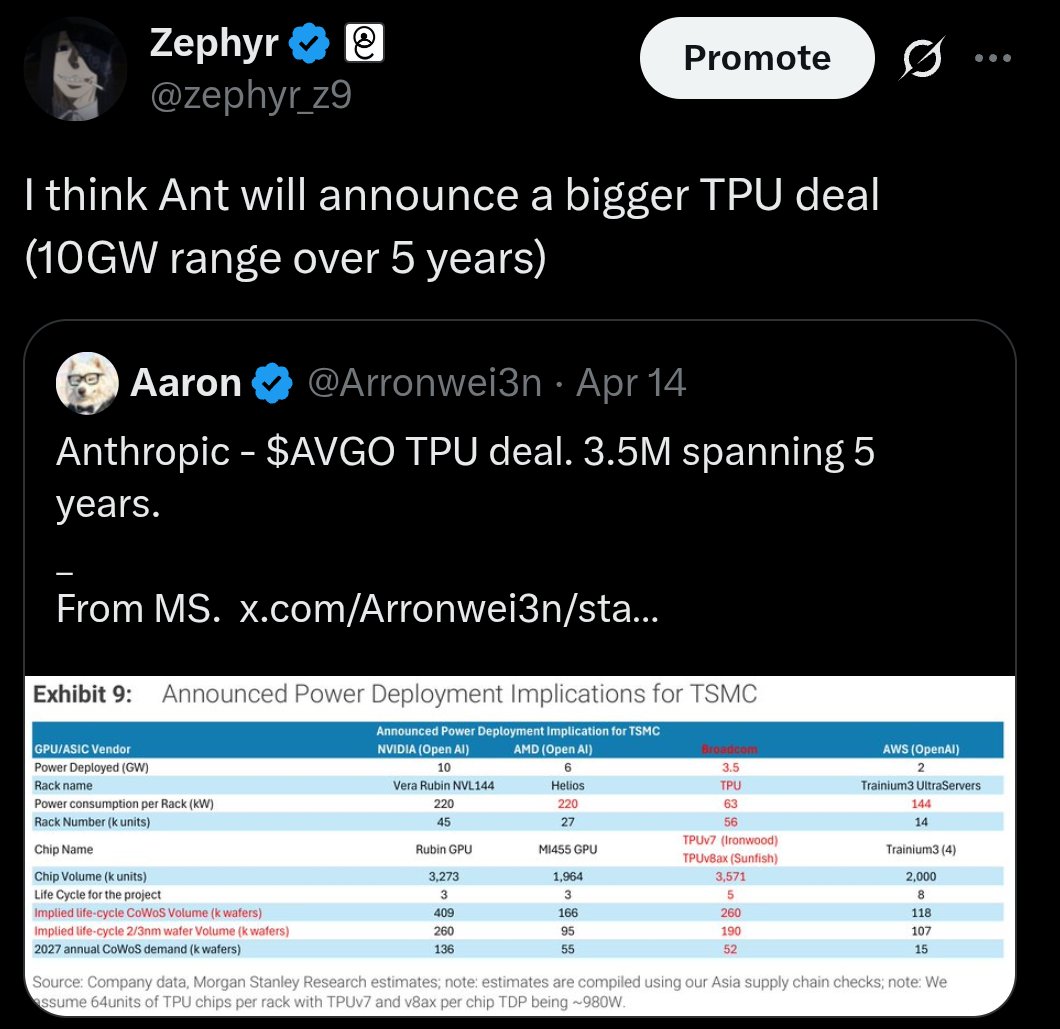

Weekly|Cloud Acceleration Confirmed, CSP Capex Raised, DeepSeek V4 Triggers NAND Inflection, TikTok, $LITE & $COHR , TPU CoWoS Raised, $RKLB , $PLTR , $AXTI , $MSFT

Earnings season delivered the print AI infra bulls needed. GOOG/MSFT/AWS all raised CapEx alongside an accelerating top line: GCP +63%, Azure +39%, and AWS likely re-accelerating in 2Q once OpenAI’s reserved Blackwell capacity flows through. CapEx up with revenue accelerating is structurally healthier than CapEx up with revenue flat, and should put data-center cash-flow concerns on the back burner for at least another quarter.

DeepSeek V4 was the more underappreciated story of the week. The second round of cuts (cache hit at 1/10 of list, stacked on 75% off) is only possible because KV cache is finally migrating from DRAM/HBM onto SSD at scale, which is a structural inflection for NAND demand.

Separately, we have received a lot of great feedback on our recent Institutional tier launch. Our Individual Plan on Substack continues to provide highly curated research alongside community engagement on Substack Chat, while the Institutional tier offers the broadest research coverage with high-touch communications. We are incredibly grateful to our long-time readers here on Substack and remain committed to delivering sharper insights and more powerful AI tools to elevate your research work. Please contact sales@funda.ai for more.

This Week’s Reports

Review|GOOG/MSFT/AWS: CapEx Raised - 2026 Finally Becomes the Year of Cloud Acceleration. All three hyperscalers raised CapEx with revenue accelerating, and we expect further upward revisions as LTA floor pricing flows through forward planning. fundaai.substack.com/p/review-goog-…

Deep|DeepSeek V4: The Inflection Point for Large-Scale NAND-Based KV Cache. V4’s cache-hit price was cut to 1/10 of list because KV cache is finally migrating from DRAM/HBM onto SSD at scale: positive for SSD, exponential for NAND. fundaai.substack.com/p/deepdeepseek…

Deep|DeepSeek V4: The First Model Custom-Built for Non-NVIDIA Chips, Optimized for Cost. V4’s architecture appears co-designed for Ascend, ASICs, and optical scale-up superpods, marking the real start of a non-NVIDIA AI ecosystem rather than just NVIDIA-substitute compatibility. fundaai.substack.com/p/deepdeepseek…

Deep|TikTok 1Q26 Update: No Visible Impact on META or GOOG Yet. Agency checks across NA / Europe / APAC: TikTok ad budgets are growing, but META and YouTube have not seen the cannibalization the consensus expected. The clearest loser remains Snapchat. fundaai.substack.com/p/deep-tiktok-…

Preview|MSFT 1Q26: GPU Pricing Stabilizing, Azure Begins Anthropic Partnership, but Copilot Competition Remains Intense fundaai.substack.com/p/previewmsft-…

Detailed Report fundaai.substack.com/p/weeklycloud-…

Jefferies/Fubon out with massive raise to FY26 TPU forecasts - ranking $CLS/ $TTMI/ $AVGO/ $ALAB by TPU upside potential

Deep|DeepSeek V4: The Inflection Point for Large-Scale NAND-Based KV Cache In our previous article we discussed DeepSeek V4’s architectural customization on non-NVIDIA hardware and the first round of API price cuts at 75% off. This article focuses on V4’s second round of cuts: DeepSeek separately took the input cache-hit tier further down to 1/10 of list, stacked on top of the 75% off from the previous round, with the floor at ¥0.025 per million tokens. This widens the cache hit / cache miss spread from 1/12 to 1/120 (cache hit ¥0.025 vs. cache miss ¥3). DeepSeek V4’s real-world cache hit rate in agent settings has reached 95%+, and based on our research, DeepSeek’s current SSD configuration and utilization have stepped up materially versus before. Behind this is V4 compressing KV cache size to 10% of V3.2’s, plus DeepSeek’s accumulated engineering work on SSD-based KV cache, which together migrate KV cache from expensive, capacity-limited DRAM / HBM onto larger and cheaper SSD at scale. We believe DeepSeek V4’s cache-hit repricing implies upside for SSD, with NAND demand set to grow exponentially. Detailed Report fundaai.substack.com/p/deepdeepseek…
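The pricing arithmetic above can be checked with a short sketch. It uses only the figures quoted in the post (¥0.025 cache hit, ¥3 cache miss, a 95% hit rate) and assumes a simple linear blend of the two input tiers; output-token pricing and any other billing details are ignored, and the function names are illustrative.

```python
# Input prices in CNY per million tokens, as quoted in the post.
CACHE_HIT_PRICE = 0.025
CACHE_MISS_PRICE = 3.0


def hit_miss_spread(hit_price: float, miss_price: float) -> float:
    """How many times more expensive a cache miss is than a cache hit."""
    return miss_price / hit_price


def blended_input_price(hit_rate: float, hit_price: float, miss_price: float) -> float:
    """Average input price per million tokens, assuming a linear blend of both tiers."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * hit_price + (1.0 - hit_rate) * miss_price


if __name__ == "__main__":
    spread = hit_miss_spread(CACHE_HIT_PRICE, CACHE_MISS_PRICE)
    blended = blended_input_price(0.95, CACHE_HIT_PRICE, CACHE_MISS_PRICE)
    print(f"miss/hit spread: {spread:.0f}x")
    print(f"blended input price at 95% hit rate: {blended:.5f} CNY per million tokens")
```

Under these assumptions the spread works out to 120x, matching the 1/120 figure, and at a 95% hit rate most of the blended input cost still comes from the 5% of tokens that miss the cache.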

JUST IN: Google to invest up to $40 billion in Anthropic as part of a deal that includes 5+ gigawatts of computing capacity over five years.

Deep|DeepSeek V4 vs Claude vs GPT-5.4: A 38-Task Benchmark Across Coding, Reasoning, and Financial Research

Important note: This report is not a research report. It is an evaluation report completed by the FundaAI Engineering Team, not written by the FundaAI Analyst Team, and it does not represent the views of the FundaAI Analyst Team. All test cases are based on the actual working environment of the FundaAI Platform. As of time of publication, the GPT-5.5 API has not yet been officially released. Testing solely through Codex 5.5 may not fully reflect the complete performance of the API. We have so far only conducted urgent testing on DeepSeek V4, and will include GPT-5.5 test results as soon as its API becomes officially available.

Key Takeaways
- Claude Opus 4.6 (Thinking) and Claude Opus 4.7 tie for #1 overall (both 8.72 weighted avg). They lead for different reasons: Opus 4.6 Thinking is strongest on coding and hard reasoning, while Opus 4.7 leads writing and full-coverage multi-step work.
- DeepSeek V4 Pro (Thinking) has the highest completed-task multi-step score at 8.90, ahead of Opus 4.7 at 8.87, but with partial coverage: 29/38 tasks completed because several hard coding/reasoning tasks timed out.
- DeepSeek V4 Pro received the only financial research 10/10 because it produced the strongest answer to the NVDA game theory task. It did not score highly because it was long; it scored highly because it fully developed 11 players, 18 citations, and forced-move economics.
- GPT-5.4 remains the fastest full-suite model (105s avg), with strong coding and reasoning, but its latest composite score is 7.88 and it no longer leads the coding table.
- DeepSeek V4 has a substantially lower estimated cost than Claude in this benchmark. Flash is ~$0.007/task, Flash Thinking is ~$0.008/task, Pro is ~$0.10/task, and Pro Thinking is ~$0.15/task, all below Claude Opus per-task cost estimates.
- DeepSeek V4’s relative weakness is presentation format more than analysis quality. It generally produces strong markdown research, while Claude Opus 4.5 more readily produces dashboard-ready OpenUI charts, metric cards, and data tables.
- The frontier is now a three-way race between Anthropic (writing, earnings, citation rigor), DeepSeek (analytical depth, data synthesis, cost), and OpenAI (speed, incident debugging, system design). GPT-5.5 API results will have to wait until the official API becomes available.

Detailed Report fundaai.substack.com/p/deepdeepseek…
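To put the per-task cost estimates in context, the sketch below scales them to the full 38-task suite and computes the coverage ratio for the 29/38 figure. It assumes the quoted per-task figures are uniform averages that can simply be multiplied by the task count; the report does not say how costs vary by task, so treat the totals as rough.

```python
# Estimated cost per task in USD, as quoted in the takeaways.
PER_TASK_COST_USD = {
    "DeepSeek V4 Flash": 0.007,
    "DeepSeek V4 Flash Thinking": 0.008,
    "DeepSeek V4 Pro": 0.10,
    "DeepSeek V4 Pro Thinking": 0.15,
}
SUITE_TASKS = 38


def suite_cost(per_task_cost: float, tasks: int = SUITE_TASKS) -> float:
    """Rough cost of one full run, assuming every task costs the quoted average."""
    return per_task_cost * tasks


def coverage(completed: int, total: int = SUITE_TASKS) -> float:
    """Share of the suite that a model actually completed."""
    return completed / total


if __name__ == "__main__":
    for model, cost in PER_TASK_COST_USD.items():
        print(f"{model}: ~${suite_cost(cost):.2f} per full suite run")
    print(f"Pro (Thinking) coverage: {coverage(29):.1%}")
```

Coverage matters when reading the 8.90 multi-step score: it is an average over completed tasks only, so it is not directly comparable to a full-coverage score such as Opus 4.7's 8.87.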

Research|TPU 8t/8i and Virgo Network: the biggest networking upgrade since TPU v4 — and another major win for optics (one we called early) Going through $GOOG ’s two recent technical posts — on Virgo Network cloud.google.com/blog/products/… and cloud.google.com/blog/products/… — what stands out is how much of it aligns with what we had already outlined months ago. Deep|AI Infra 2026: Shifting from "Brain Power" Competition to "Whole-Body" Evolution fundaai.substack.com/p/deepai-infra… Deep|LITE: Google’s Newly Introduced In-rack Switch Tray is Actually a Positive for OCS and Optics fundaai.substack.com/p/deeplite-goo… Back then, in our note on the in-rack switch tray, we tried to unpack a key misconception: this upgrade wasn’t about replacing the 3D torus. The real change was happening in the DCN — the scale-out network. We put it plainly: “The in-rack switch tray represents a change in this DCN network, not in the scale-up network.” And more importantly: “Dragonfly-topology based scale up and scale out integration, with higher network bandwidth overall.” At the time, the logic made sense to some but lacked hard confirmation. Looking at Google’s own write-ups now, that confirmation is here — and if anything, the direction is even more aggressive than expected. Detailed Report fundaai.substack.com/p/researchtpu-…

Preview| $NOW 1Q26: Growth Largely In Line as Macro Stays Choppy; AI Control Tower Emerges as New Narrative We spoke with three top ServiceNow (NOW) channel partners. 1Q26 performance came in largely in line with expectations — growth moderated from the 4Q25 budget-flush quarter, but full-year 2026 targets remain intact, with pipeline visibility broadly healthy. Overall, AI adoption remains soft, but we think the most notable incremental datapoint this quarter is the customer traction of AI Control Tower (AICT), which is showing up in the majority of new pipeline deals. Early anecdotes suggest that hardware pricing inflation is squeezing software budgets to some extent. Despite generally low expectations for results following the recent sell-off, we remain cautious about the positioning of application SaaS companies as LLMs become increasingly powerful. Detailed Report fundaai.substack.com/p/previewnow-1…

Deep| $Kioxia: Not a Flash in the Pan Historically, the dominant narrative in AI infrastructure investment has been GPU-centric — more compute unlocks better models with higher benchmark scores. However, the focus has now shifted from training scaling laws to agent scaling laws, where the relevant input is not training compute. Rather, agent performance on complex multi-step tasks scales with the amount of context and memory available to each agent, the number of parallel agents that can collaborate or verify each other’s work, and the number of reasoning steps the agent can take before returning a result. Agent scaling is bottlenecked by the infrastructure surrounding the GPU — you need the agent’s working memory to be accessible fast enough, cheaply enough, and at large enough scale to support long sessions. Infrastructure layers that were historically secondary — NAND storage, DRAM, CPU orchestration, and high-bandwidth interconnects — become critical determinants of system performance and cost. In our GTC preview, we observed that “NAND should not be considered a secondary topic in GTC discussions” because it is becoming a “core architectural variable”. In an even earlier post, we noted that the investment logic for DRAM and SSD increasingly resembles “AI infrastructure growth” rather than merely “cyclical beneficiaries of price upcycles.” In this note on Kioxia, we expand on these observations. And this report is the first report that @illyquid has contributed to! Detailed Report open.substack.com/pub/fundaai/p/…
