
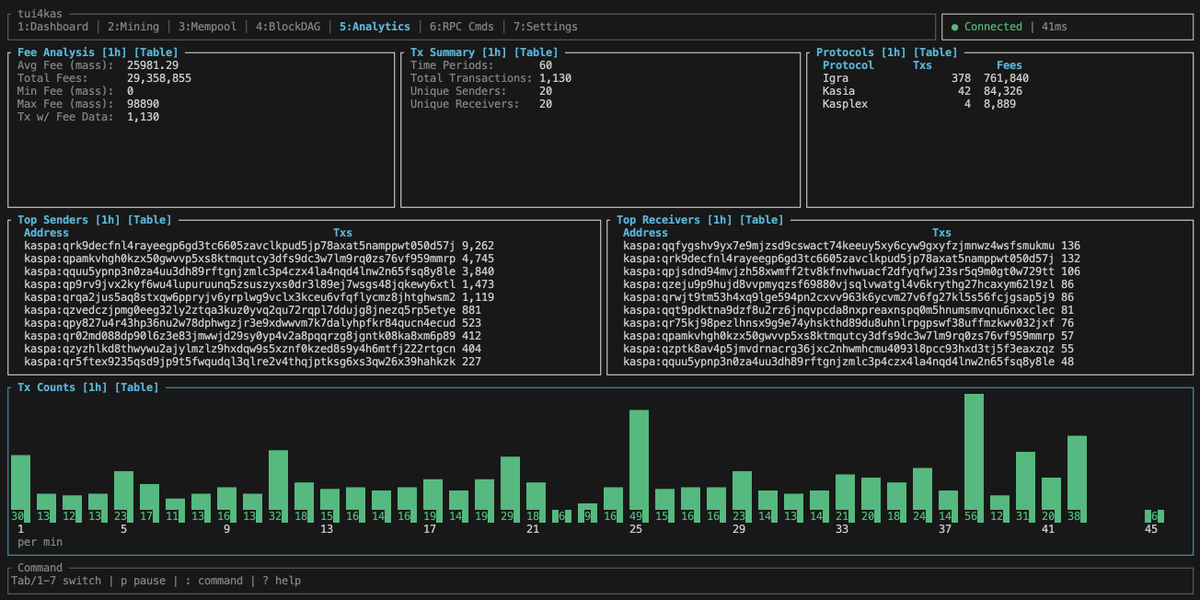

Wow. Me and 19 others just completed, from what I can tell, the first-ever mixing pool on $KAS. We each sent 100 KAS and received 99.9 KAS after everything was paid for and completed. All this on a permissionless proof-of-work network. Privacy advocates, where the hell are you? #PoweredByKaspa
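
The numbers in the post above imply a small per-participant cost. A quick worked sketch, using only the figures quoted (20 participants, 100 KAS in, 99.9 KAS out); how that cost splits between network fees and anything else is not stated in the post, so the code treats it as one lump sum:

```python
from decimal import Decimal

participants = 20              # "me and 19 others"
deposit = Decimal("100")       # KAS sent by each participant
payout = Decimal("99.9")       # KAS received by each participant

cost_each = deposit - payout               # cost per participant, in KAS
cost_total = cost_each * participants      # cost across the whole pool, in KAS
cost_rate = cost_each / deposit            # fraction of each deposit spent

print(f"per participant: {cost_each} KAS")
print(f"whole pool:      {cost_total} KAS")
print(f"rate:            {cost_rate * 100}%")
```

`Decimal` is used instead of floats so that 100 - 99.9 comes out as exactly 0.1.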

If you, like me, just woke up, let me catch you up on the Claude Code Leak (I know nothing, all conjecture):
> Someone inside Anthropic got switched to Adaptive reasoning mode
> Their Claude Code switched to Sonnet
> Committed the .map file of Claude Code
> Effectively leaking the ENTIRE CC Source Code
> @realsigridjin was tired after running 2 South Korean hackathons in SF, saw the leak
> Rules in Korea are different, he cloned the repo, went to sleep
> Wakes up to 25K stars, and his GF begging him to take it down (she's a copyright lawyer)
> Their team decided - how about we have agents rewrite this in Python!? Surely... this is more legal
> Rewrite in Py
> Board a plane to SK🇰🇷
> One of the guys decides Python is slow, is now rewriting ALL OF CLAUDE CODE into Rust.
> Anthropic cannot take down, cannot sue
> Is this "fair use?"
> TL;DR - we're about to have open source Claude Code in Rust

Okay, it's time for a little update: I just finished the work on the zero-knowledge part of the vprogs framework, which introduces the ability to prove arbitrary computation. It consists of the following 8 PRs that gradually introduce the necessary features:

1. ZK-framework preparations (github.com/kaspanet/vprog…): This PR cleans up the scheduler and storage layers, extends the build tooling with workspace-wide dependency checking, adds the ability to publish artifacts for transactions and batches (which will later hold the proofs), renames some core types for clarity, and introduces lifecycle events on the Processor trait that allow a VM to hook into key scheduler events like batch creation, commit, shutdown, and rollback.
2. Core Codec (github.com/kaspanet/vprog…): This PR introduces a lightweight encoding library for ZK wire formats. In a zkVM guest, every byte operation contributes to the proof cost, so the codec is designed to reinterpret data in-place rather than copying it. It includes zero-copy binary decoding (Reader, Bits) and sorted-unique encoding for deterministic key ordering. It is built for no_std so it runs inside zkVM guests.
3. Core SMT (github.com/kaspanet/vprog…): To prove state transitions, we need cryptographic state commitments. This PR adds a versioned Sparse Merkle Tree that produces a single root hash representing the entire state. It includes all state-of-the-art optimizations: shortcut leaves at higher tree levels to avoid full-depth paths for sparse regions, multi-proof compression that shares sibling hashes across multiple keys, and compact topology bit-packing to minimize proof size. It integrates into the existing storage and scheduler layers so that every batch commit updates the authenticated state root, while rollback and pruning maintain tree consistency.
4. ZK ABI (github.com/kaspanet/vprog…): Defines the wire format for communication between the host and zkVM guest programs, establishing a universal language for proof composition. It specifies how inputs, outputs, and journals are structured for two levels of proving: the transaction processor, which proves individual transaction execution against a set of resources, and the batch processor, which aggregates transaction proofs and proves the resulting state root transition. Because the ABI is backend-agnostic and no_std compatible, any zkVM backend can directly use it (non-Rust zkVMs would need to reimplement the ABI in their language).
5. ZK Transaction Prover (github.com/kaspanet/vprog…): Introduces the transaction proving worker, which receives serialized execution contexts via the ABI wire format and submits them to a backend-specific prover on a dedicated thread. The Backend trait abstracts the actual proof generation, so different zkVM backends can be swapped without changing the pipeline.
6. ZK Batch Prover (github.com/kaspanet/vprog…): Introduces the batch proving worker, which collects the individual transaction proof artifacts, pairs them with an SMT proof covering the batch's resources, and submits the combined input to a backend-specific batch prover. The result is a single proof attesting to the entire batch's state root transition. Like the transaction prover, the Backend trait abstracts proof generation so different zkVM backends can be swapped without changing the pipeline.
7. ZK VM (github.com/kaspanet/vprog…): Wires everything together by implementing the scheduler's Processor trait with ZK proving support. The VM hooks into the lifecycle events introduced in PR 1 to feed executed transactions into the transaction prover and batches into the batch prover. Proving is optional and configurable - it can be disabled entirely, run at the transaction level only, or run the full batch proving pipeline.
8. ZK Backend RISC0 (github.com/kaspanet/vprog…): Provides the first concrete zkVM backend using risc0. It implements the transaction and batch Backend traits, includes two pre-compiled guest programs (one for transaction processing, one for batch aggregation), and ships with an integration test suite that verifies the full pipeline end-to-end - from transaction execution through batch proof generation to state root verification.

TL;DR: While the early version of the framework focused on maximizing the parallelizability of execution, this feature extends that capability to maximizing the parallelizability of proof production.

If you're a builder: this is the first version of the framework that lets you write guest programs with a Solana-like API (resources, instructions, program contexts) and have them proven in a zkVM. The current milestone uses a single hardcoded guest program - composability across multiple programs and bridging assets in and out of the L1 are part of the upcoming milestones, but if you're eager to start tinkering, the execution and proving pipeline is fully functional and provides a minimal environment to build and test guest logic today.

Once we add user-deployed guests, they will move one logical layer down: the current transaction processor will become a hardcoded circuit that handles invocation and access delegation to user programs, similar to how Sui handles programmable transactions (including linear type safety at the program boundary). In practice, this means guest programs will be invoked with a very similar API but scoped to a subset of resources, so the basic programming model won't change. Note that guests currently handle their own access authentication (e.g. signature checks) - the framework will eventually manage this automatically.
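
To make the two-level pipeline described in PRs 5-7 more concrete, here is a minimal Python sketch of the idea only. The real framework is written in Rust and its actual trait and method names are not shown in the post, so `Backend`, `MockBackend`, `prove_transaction`, `prove_batch`, and `prove_pipeline` are all hypothetical names. The mock "proofs" are plain SHA-256 digests, not zero-knowledge proofs.

```python
import hashlib
from typing import List, Optional, Protocol, Tuple


class Backend(Protocol):
    """Hypothetical stand-in for the framework's Backend traits."""

    def prove_transaction(self, context: bytes) -> bytes: ...

    def prove_batch(self, tx_proofs: List[bytes], state_proof: bytes) -> bytes: ...


class MockBackend:
    """Fake backend: hashes its inputs instead of producing real proofs."""

    def prove_transaction(self, context: bytes) -> bytes:
        return hashlib.sha256(b"tx:" + context).digest()

    def prove_batch(self, tx_proofs: List[bytes], state_proof: bytes) -> bytes:
        h = hashlib.sha256(b"batch:")
        for proof in tx_proofs:
            h.update(proof)
        h.update(state_proof)
        return h.digest()


def prove_pipeline(
    backend: Backend,
    contexts: List[bytes],
    state_proof: bytes,
    mode: str = "batch",
) -> Tuple[List[bytes], Optional[bytes]]:
    """Run proving in one of the three configurations the post describes:
    'off' (disabled), 'transaction' (per-transaction only), or 'batch' (full)."""
    if mode not in ("off", "transaction", "batch"):
        raise ValueError(f"unknown proving mode: {mode}")
    if mode == "off":
        return [], None
    # Level 1: one proof per executed transaction.
    tx_proofs = [backend.prove_transaction(c) for c in contexts]
    if mode == "transaction":
        return tx_proofs, None
    # Level 2: aggregate the transaction proofs with the state (SMT) proof.
    return tx_proofs, backend.prove_batch(tx_proofs, state_proof)


tx_proofs, batch_proof = prove_pipeline(MockBackend(), [b"tx-1", b"tx-2"], b"smt-proof")
print(len(tx_proofs), batch_proof.hex()[:16])
```

Because `prove_pipeline` only depends on the two methods of `Backend`, a different backend object can be passed in without touching the pipeline, which is the property the post attributes to the Backend trait.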
If you want to contribute, there are two areas where community involvement would be especially impactful:

- An Anchor-like DSL for writing guest programs - the ABI is stable enough to build on, and a good developer experience layer would make this accessible to a much wider audience.
- A second zkVM backend (e.g. SP1) - the Backend traits are designed for this, and a second implementation would prove out the abstraction.

One thing I find particularly interesting in the context of PoW: the block hash provides an unpredictable, unbiasable random input that is revealed after transaction sequencing. This gives guest programs native access to on-chain randomness without oracles or additional infrastructure - something traditionally hard to achieve in smart contract platforms.

PS: I am also planning to start the promised regular hangouts, but since I will visit my family over Easter and want to get a better understanding of the open questions next week (it's good to have some problems to wrestle with during that slower time 😅), I decided to start once I am back (12th of April). Generally speaking, is there a day that people would prefer for these hangouts? I guess Monday would be bad, as there is already another community event (write your preferences in the comments if you have a strong opinion).
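
On the block-hash randomness point in the post above, a minimal sketch of how a program might turn a block hash into a bounded random value. This is an illustration under assumptions, not the framework's API: `derive_random` is a hypothetical helper, SHA-256 is an arbitrary choice of hash, mixing in a transaction id is an assumed way to give each transaction its own value, and the 32-byte inputs are placeholders rather than real chain data.

```python
import hashlib


def derive_random(block_hash: bytes, tx_id: bytes, upper: int) -> int:
    """Return a value in [0, upper) derived from the block hash and a tx id.

    Hypothetical helper: the block hash is only known after sequencing,
    so the result cannot be predicted when the transaction is submitted.
    """
    if upper <= 0:
        raise ValueError("upper must be positive")
    digest = hashlib.sha256(block_hash + tx_id).digest()
    # Reducing a 256-bit value modulo a small bound leaves negligible bias.
    return int.from_bytes(digest, "big") % upper


block_hash = bytes(32)             # placeholder, not a real block hash
tx_id = bytes([1]) * 32            # placeholder, not a real transaction id
roll = derive_random(block_hash, tx_id, 6) + 1   # a die roll in 1..6
print(roll)
```

The same inputs always produce the same value, so every node (and a zkVM guest re-executing the transaction) would arrive at the same result.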

It is hard to communicate how much programming has changed due to AI in the last 2 months: not gradually and over time in the "progress as usual" way, but specifically this last December. There are a number of asterisks but imo coding agents basically didn’t work before December and basically work since - the models have significantly higher quality, long-term coherence and tenacity and they can power through large and long tasks, well past enough that it is extremely disruptive to the default programming workflow.

Just to give an example, over the weekend I was building a local video analysis dashboard for the cameras of my home so I wrote: “Here is the local IP and username/password of my DGX Spark. Log in, set up ssh keys, set up vLLM, download and bench Qwen3-VL, set up a server endpoint to inference videos, a basic web ui dashboard, test everything, set it up with systemd, record memory notes for yourself and write up a markdown report for me”. The agent went off for ~30 minutes, ran into multiple issues, researched solutions online, resolved them one by one, wrote the code, tested it, debugged it, set up the services, and came back with the report and it was just done. I didn’t touch anything. All of this could easily have been a weekend project just 3 months ago but today it’s something you kick off and forget about for 30 minutes.

As a result, programming is becoming unrecognizable. You’re not typing computer code into an editor like the way things were since computers were invented, that era is over. You're spinning up AI agents, giving them tasks *in English* and managing and reviewing their work in parallel. The biggest prize is in figuring out how you can keep ascending the layers of abstraction to set up long-running orchestrator Claws with all of the right tools, memory and instructions that productively manage multiple parallel Code instances for you. The leverage achievable via top tier "agentic engineering" feels very high right now.
It’s not perfect, it needs high-level direction, judgement, taste, oversight, iteration and hints and ideas. It works a lot better in some scenarios than others (e.g. especially for tasks that are well-specified and where you can verify/test functionality). The key is to build intuition to decompose the task just right to hand off the parts that work and help out around the edges. But imo, this is nowhere near "business as usual" time in software.

place your bets: can a full, trustless chess server be deployed purely on native Kaspa covenants (TN12 / mainnet post-HF), or will script-size limits prove decisive?

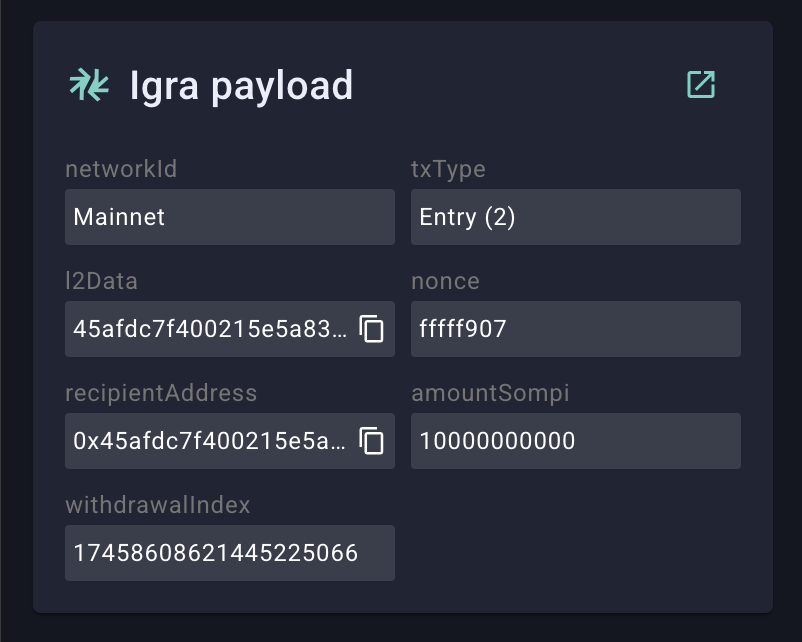

🚨 New Episode w @emdin & @argonmining from @Igra_Labs

"Right NOW Kaspa NEEDS IgraLabs!"

In this episode, Ankit sits down with @emdin and @argonmining to dive deep into Igra mainnet and the role of Igra within the $KAS ecosystem. One year after their first conversation on @xximpod, @Igra_Labs has gone from an unknown project to a live Layer 2 on #Kaspa and the conversation is as exciting as ever.

The episode covers what's been built and what's coming:
🔷 Mainnet is live
🔷 Attesters & Node Economics
🔷 Ultra Light Nodes
🔷 Ecosystem Deep Dive
🔷 The ZAP Token Auction
🔷 Exchange Listings
🔷 Igra's Role in the Kaspa Ecosystem

CC - Projects we discussed: @ZealousSwap @AppKaskad @KaspaCom @KaspaFinance @KastleWallet @AporiaExchange @Kaspa_KAT @VivoorKAS @overpassfi @asaefstroem #KASWARE

Did we miss anyone, @argonmining? Please tag them below if you can :)

------------------------

DISCLAIMER

This video is for educational and informational purposes only and is NOT financial or investment advice. We do not recommend that you buy or sell any assets. Opinions of guests are their own and do not constitute endorsements. Cryptocurrency and blockchain investments are highly risky and can result in total loss of capital. Do your own research and consult licensed professionals. The channel and its hosts are not liable for any investment decisions or losses.