coderofstuff

@coderofstuff_

There are 10 types of people in the world - those who know binary and those who don’t | coding DAGKnight https://t.co/WZfxjGEEgq

@DesheShai @coderofstuff_ But the June 2026 Toccata Hard Fork implements the August 2025 proposal to transition Kaspa to hashed addresses (P2PKH), keeping public keys hidden, and so protected against quantum threats, until an address sends its first transaction.
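The idea behind hashed addresses is a hash commitment. The address publishes only a digest of the public key, and the key itself appears on chain only when a spend reveals it. Before that first spend, an attacker who sees just the digest has no public key to attack. Below is a minimal Python sketch of that commitment. It assumes BLAKE2b with a 32-byte digest as the hash, and the names `HashedAddress`, `pubkey_hash` and `can_spend` are hypothetical. This is not the Toccata proposal's actual address encoding, and it omits the signature check a real spend would also require.

```python
import hashlib
from dataclasses import dataclass


def pubkey_hash(pubkey: bytes) -> bytes:
    """Hash used for the address commitment.

    Assumption: BLAKE2b-256 stands in for whatever hash the real protocol uses.
    """
    return hashlib.blake2b(pubkey, digest_size=32).digest()


@dataclass(frozen=True)
class HashedAddress:
    """Hypothetical P2PKH-style address: only the key's hash is public."""
    commitment: bytes

    @classmethod
    def from_pubkey(cls, pubkey: bytes) -> "HashedAddress":
        return cls(pubkey_hash(pubkey))


def can_spend(address: HashedAddress, revealed_pubkey: bytes) -> bool:
    """A spend must reveal a key whose hash matches the address commitment.

    A real spend would also verify a signature under the revealed key; that
    step is omitted. Until the first spend, only `commitment` is on chain.
    """
    return pubkey_hash(revealed_pubkey) == address.commitment


if __name__ == "__main__":
    pk = bytes(range(32))  # placeholder key bytes, for illustration only
    addr = HashedAddress.from_pubkey(pk)
    print(addr.commitment.hex())
    print(can_spend(addr, pk), can_spend(addr, bytes(32)))
```

Receiving funds needs only `addr.commitment`. The raw key becomes visible the first time the owner calls the equivalent of `can_spend`, which is why an address that has never sent a transaction exposes nothing but a hash.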

Tried my hand at writing a short article about something that has always interested me in $KAS: the burn address. Check it out at medium.com/@coderofstuff/kaspas-peculiar-burn-address-7dac2e5a4ed6

Hopefully someone finds this bit of trivia interesting!

@xximpod @DesheShai Quantum computers cannot crack a $KAS address that has never sent a transaction because the public key is not yet visible on the network.

Vijayk, you’re new here and still pretty retarded when it comes to your understanding of how these networks actually work. You see things at a surface level which is why you’re easily fooled by this shitcoin. You need to dig deeper and think more critically about why this design can’t scale in a censorship resistant way.

@realvijayk What does it take to solo mine Kaspa and consistently earn a daily reward? With 2 TH/s of hashrate, this simulation suggests that over a 30-day period you'd go only about 2-3 days without a reward, and solo mine at least one block on every other day.

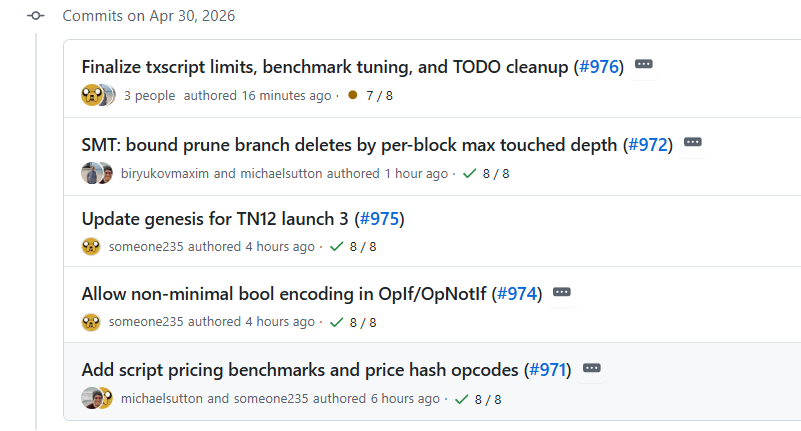

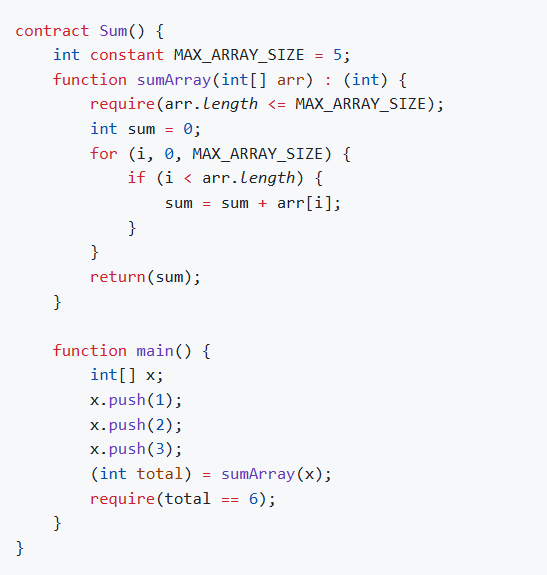
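One common way to model this kind of simulation is to treat block finds as a Poisson process. The daily mean is then your share of network hashrate times the number of blocks produced per day, and the chance of an empty day is e^(-mean). The Python sketch below is built on assumptions that do not come from the post: the 10 blocks-per-second rate, the helper names, and the network hashrate in the usage block are all hypothetical, and the model ignores DAG-specific effects such as which blocks actually earn rewards.

```python
import math
import random

# Assumption: a 10 blocks-per-second network rate; adjust for the network you model.
ASSUMED_BPS = 10
SECONDS_PER_DAY = 86_400


def expected_blocks_per_day(my_hashrate: float, network_hashrate: float,
                            bps: float = ASSUMED_BPS) -> float:
    """Mean daily block count for a solo miner: hashrate share times blocks per day."""
    return (my_hashrate / network_hashrate) * bps * SECONDS_PER_DAY


def prob_empty_day(lam: float) -> float:
    """Poisson probability of mining zero blocks in a day with mean `lam`."""
    return math.exp(-lam)


def implied_lambda(empty_days: float, days: int) -> float:
    """Invert prob_empty_day: the daily mean implied by an observed empty-day rate."""
    return -math.log(empty_days / days)


def simulate_empty_days(lam: float, days: int = 30, seed: int = 0) -> int:
    """Monte Carlo count of days with no block.

    Under a Poisson process the wait for the first block is exponential with
    rate `lam` (blocks per day), so a day is empty when that wait exceeds 1.0.
    """
    rng = random.Random(seed)
    return sum(1 for _ in range(days) if rng.expovariate(lam) > 1.0)


if __name__ == "__main__":
    TH = 1e12
    # Hypothetical network hashrate (1 EH/s), chosen only for illustration.
    lam = expected_blocks_per_day(2 * TH, 1_000_000 * TH)
    print(f"expected blocks/day: {lam:.3f}")
    print(f"expected empty days in 30: {30 * prob_empty_day(lam):.1f}")
    print(f"simulated empty days in 30: {simulate_empty_days(lam)}")
    print(f"mean implied by 2.5 empty days out of 30: {implied_lambda(2.5, 30):.2f}")
```

The network hashrate in the usage block is a placeholder, so its output is not meant to reproduce the figures above. Running the model in reverse is more direct. Under this model, about 2.5 empty days out of 30 corresponds to a mean of ln 12, roughly 2.5 blocks per day.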

place your bets: can a full, trustless chess server be deployed purely on native Kaspa covenants (TN12 / mainnet post-HF), or will script-size limits prove decisive?