FuzzingLabs

810 posts

@FuzzingLabs

Research-oriented Cybersecurity startup specializing in #fuzzing, Vulnerability Research & Offensive security on Mobile, Browser, AI/LLM, Network & Blockchain.

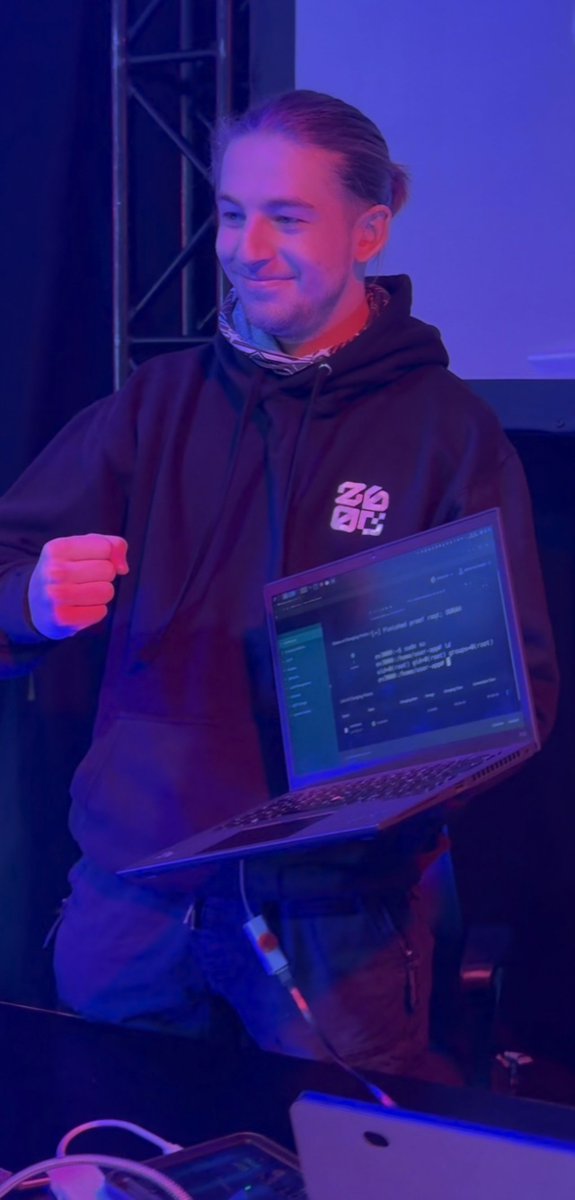

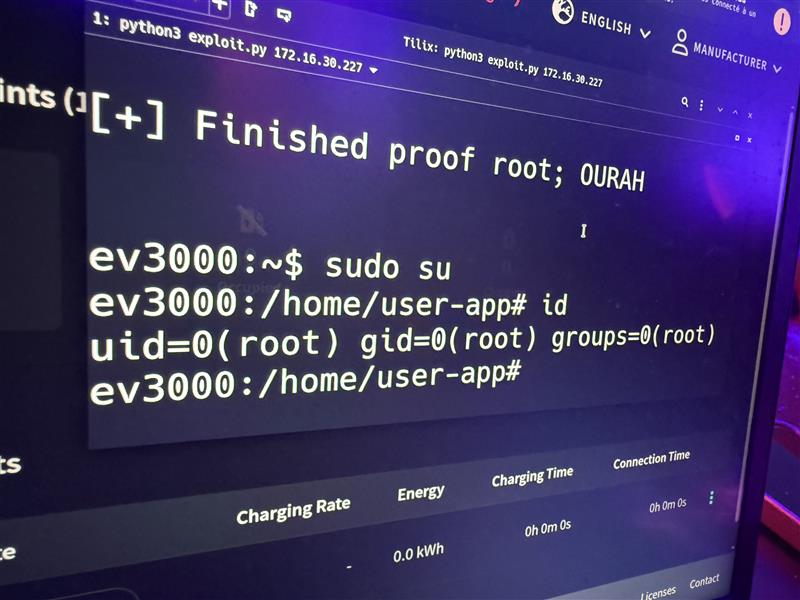

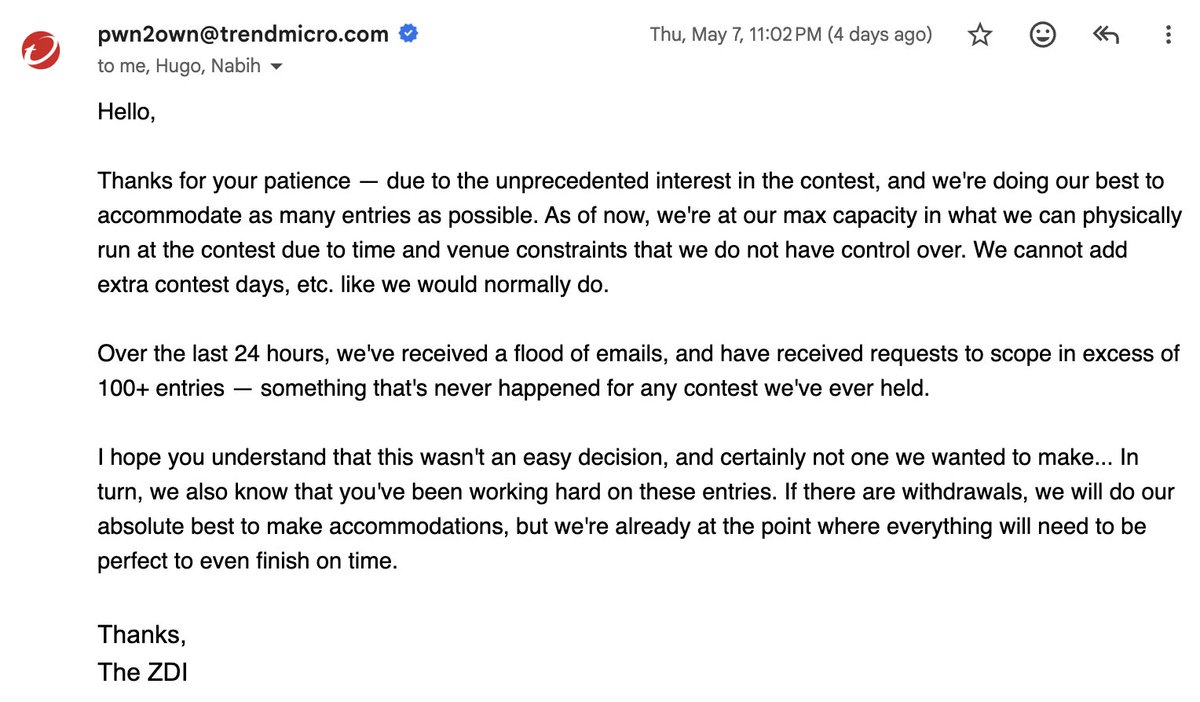

‼️🚨 Pwn2Own Berlin 2026 just hit a wall. For the first time in 19 years, ZDI rejected dozens of working zero-day RCE submissions because organizers ran out of contest slots. Rejected hackers are now going public with PoC demos and direct vendor disclosures, breaking Pwn2Own's usual secrecy.

▪️ AI surfaces a massive wave of 0-day RCEs.
▪️ Submissions overwhelm ZDI past max capacity.
▪️ Slots run out. Researchers with working chains get rejected.
▪️ "Revenge disclosures" begin. ← we are here.

Confirmed casualties so far:

▪️ @xchglabs: 86 vulnerabilities prepared (PyTorch, NVIDIA, Linux KVM, Oracle, Docker, Ollama, Chroma, LiteLLM, llama.cpp). All rejected. Now reporting directly to vendors, with writeups dropping as patches land.
▪️ @ggwhyp: full-chain Firefox RCE on Windows. Rejected. Publicly demoed (HTML page → cmd.exe → calc.exe). Responsibly disclosed to Mozilla.
▪️ @yunsu_dev: working RCE chain. Rejected. Submitting elsewhere.
▪️ @ryotkak: tried to register for 3+ weeks. ZDI confirmed "at maximum capacity, can't add extra contest days." Considered canceling flight and hotel.
▪️ @anzuukino2802: Claude Code RCE PoC. Rejected.
▪️ @desckimh: 0-day RCEs in Ollama and LM Studio. Rejected.

Reported impact: a community-estimated 150+ researchers tried to register. Accepted contestants are now being warned about collisions. Rejected vulnerabilities going to bug bounty programs may trigger pre-event patches that invalidate the work of those who got in.

ZDI has not publicly addressed the capacity issue. The event still runs May 14-16 in Berlin.